on-chain

@JibrinMD005

Crypto thinker//market mover// blockchain believer//Decoding the crypto universe//one block at a time

The way I see it, speed built the internet, but memory will shape the next one. 0G_labs is engineering a performance layer for decentralized AI: modular infrastructure built for real workloads, with high throughput, composable layers, and scalable intelligence. But scaling intelligence alone isn't enough. If outputs disappear, we lose history. That is where permacastapp comes in, turning conversations, media, research, and governance into permanent public memory that is indexed, contextualized, and retrievable, and that survives refresh cycles. 0G pushes intelligence forward. Permacast ensures it doesn't forget. The future of decentralized systems depends on being able to audit, reference, and build on their outputs years later.

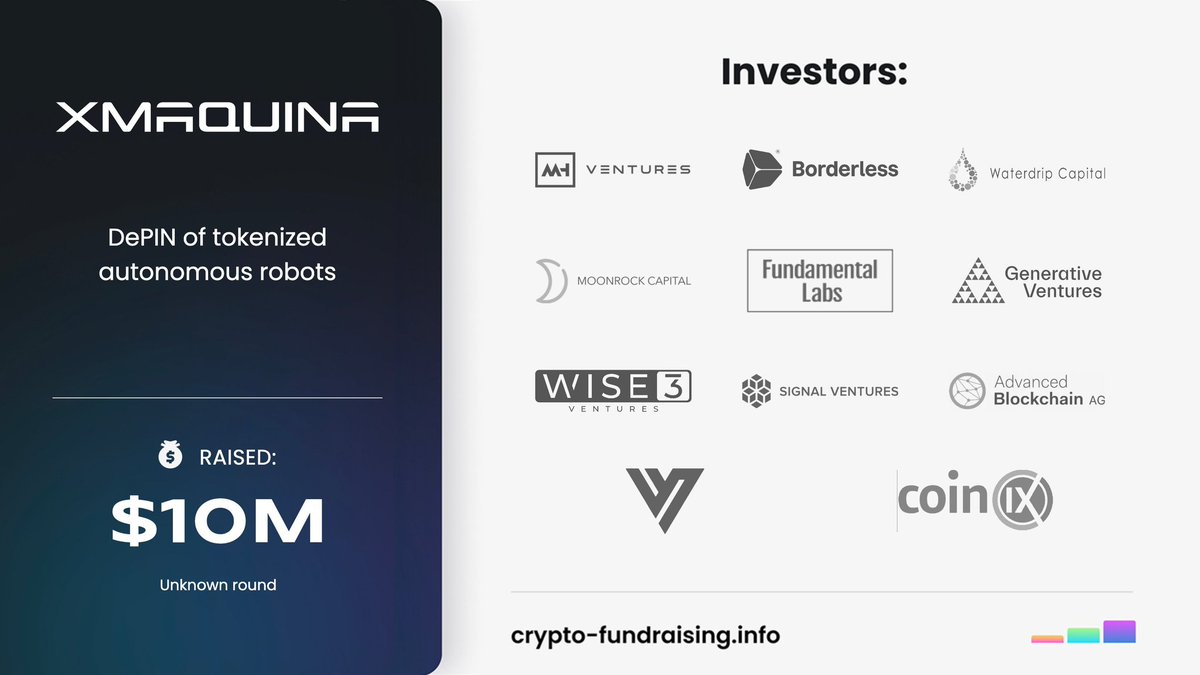

Lasting projects rarely make noise; they become indispensable infrastructure that builders, over time, rely on by default. @xmaquina is following this path, with $DEUS embedding itself into the foundational layer and shaping a future where such systems become impossible to replace.

Implementation is where narratives are tested, and in Web3 most ideas fail not because they lack vision but because they lack execution architecture. What differentiates Permacastapp, 0G_labs, Xmaquina and Dgrid_ai is not just ambition but how their infrastructure translates theory into operational success across decentralized systems, AI-native tooling and modular blockchain coordination. Permacastapp approaches implementation from a knowledge-layer perspective. By embedding Web3 social distribution, creator monetization rails and permissionless broadcasting into a decentralized content stack, it turns attention into programmable value. Its success lies in aligning onchain identity, tokenized incentives and community-driven growth loops so that distribution is not rented from Web2 platforms but owned at the protocol layer. Its focus on decentralized media, the creator economy, onchain social and programmable content is not branding; it is execution embedded in the product architecture. 0G_labs pushes implementation deeper into infrastructure. As a modular AI blockchain with decentralized data availability (DA), scalable compute layers and verifiable storage, it aims to make AI execution native to Web3 rather than dependent on centralized clouds. Combining modular architecture, high-throughput DA, AI inference, zk-proofs and decentralized storage yields a stack where builders can deploy autonomous AI agents with verifiable outputs. Success here is measured in throughput, reduced latency and composability across rollups, not in surface-level hype. @xmaquina represents implementation at the coordination layer between machines, AI agents and tokenized ownership. By combining DePIN, robotics infrastructure, machine RWAs and autonomous coordination frameworks, it creates economic rails for physical AI systems to operate onchain.
Themes such as decentralized robotics, the machine economy, AI agents, tokenized hardware and autonomous coordination show up in real-world deployment logic, where machines become productive, crypto-native actors. Implementation means machines earning, transacting and scaling without centralized intermediaries ($DEUS). Dgrid_ai closes the loop by focusing on decentralized AI grids, distributed compute marketplaces and community-powered infrastructure. Its implementation strategy revolves around aggregating idle compute, enabling permissionless AI workloads and aligning incentives through tokenized participation. With decentralized GPU networks, AI compute marketplaces, blockchain coordination and community governance, it demonstrates how distributed infrastructure can compete with centralized hyperscalers when incentives are correctly engineered. Across these ecosystems, success is defined not by token velocity but by infrastructure permanence. Decentralized media ownership, modular AI execution, machine-token economies and distributed compute grids are converging on a unified implementation thesis: Web3 succeeds when coordination costs fall, verification becomes native and communities become infrastructure providers. That is not theory. That is execution turning vision into resilient, scalable systems.

@xmaquina lets you own machines that create real value, not just tokens. Capital becomes a tool for clear strategy, not guesswork. $DEUS aligns incentives and guides decisions, bringing scale and safer exposure. Not just access to the machine economy, but steady, protected growth.

Storing the enormous datasets and model weights required for cutting-edge AI poses a deepening dilemma: centralized cloud providers dominate, charging premium rates for what often feels like fragile permanence, while decentralized alternatives struggle with slow retrieval, high latency, or questionable long-term durability. This creates a precarious situation where critical AI assets (training corpora, inference checkpoints, sensor streams) risk becoming inaccessible, altered without trace, or prohibitively expensive to maintain at scale. For global teams pushing boundaries in research or application development, the fear isn't just cost; it's the erosion of sovereignty over data that could define tomorrow's intelligence. @0G_labs confronts this head-on with its AI-native storage, engineered for secure, global data permanence in decentralized environments. By prioritizing AI workloads from the ground up, the system delivers ultra-low-cost persistence alongside verifiable guarantees, so that data remains retrievable and unaltered indefinitely. Developers can archive vast unstructured blobs or manage mutable state without the trade-offs of traditional clouds, achieving up to 95% cost reductions while preserving the high-speed access that real-time AI operations require. The broader impact lies in enabling a truly open ecosystem where data isn't rented but owned, empowering collaborative innovation across borders without vendor lock-in. Central to its design is a dual-layer architecture: an append-only Log Layer for immutable archives such as model weights and datasets, paired with a flexible State Layer for structured, updatable information.
Proof of Random Access (PoRA), combined with erasure coding and sharding, underpins durability and verifiability: every piece of data carries commitments that nodes must prove during random challenges, so silent failures or tampering are detected rather than going unnoticed. This setup reaches high throughput (up to 50 Gbps in bursts), suited to AI's data-hungry nature, while the permissionless network incentivizes global participation through balanced rewards, making permanence a native property rather than an afterthought. If storage could evolve from a liability into a foundational strength for decentralized AI, how might that accelerate equitable progress in underrepresented regions? Could verifiable permanence reshape trust in collaborative models, from open-source research to cross-border applications? And as we lean into such systems, will economic incentives align well enough to sustain this permanence indefinitely, or might new vulnerabilities emerge in the quest for true global sovereignty over intelligence?
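The challenge idea above (nodes proving they still hold committed data) can be illustrated with a generic Merkle-proof spot check. This is a minimal sketch under stated assumptions, not 0G's actual Proof of Random Access protocol, whose details differ. Erasure coding and sharding are not modeled. The helper names (`build_tree`, `prove`, `verify`, `StorageNode`, `challenge`) and the 256-byte chunk size are hypothetical. The verifier keeps only the Merkle root. It asks for a random chunk, and the node must return that chunk plus the sibling hashes leading back to the root.

```python
import hashlib
import secrets


def h(data: bytes) -> bytes:
    """SHA-256 digest used for both leaves and internal nodes."""
    return hashlib.sha256(data).digest()


def split(data: bytes, size: int) -> list[bytes]:
    """Cut a blob into fixed-size chunks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def build_tree(chunks: list[bytes]) -> list[list[bytes]]:
    """Build a Merkle tree; levels[0] holds leaf hashes, levels[-1] == [root]."""
    level = [h(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # pad odd levels by duplicating the last node
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Collect sibling hashes from the leaf at `index` up to the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        # A missing right sibling means the level was padded with a duplicate.
        proof.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return proof


def verify(root: bytes, chunk: bytes, index: int, proof: list[bytes]) -> bool:
    """Recompute the root from one chunk and its proof."""
    node = h(chunk)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


class StorageNode:
    """Hypothetical storage node holding the chunks of one committed blob."""

    def __init__(self, chunks: list[bytes]):
        self.chunks = list(chunks)
        self.levels = build_tree(self.chunks)

    def respond(self, index: int) -> tuple[bytes, list[bytes]]:
        return self.chunks[index], prove(self.levels, index)


def challenge(root: bytes, node: StorageNode, n_chunks: int) -> bool:
    """Ask for one randomly chosen chunk and check it against the root."""
    index = secrets.randbelow(n_chunks)
    chunk, proof = node.respond(index)
    return verify(root, chunk, index, proof)


blob = b"model-weights " * 1000
chunks = split(blob, 256)
root = build_tree(chunks)[-1][0]  # the only thing the verifier stores
node = StorageNode(chunks)
print(all(challenge(root, node, len(chunks)) for _ in range(20)))  # True

node.chunks[3] = b"corrupted"  # silent corruption on the node
chunk, proof = node.respond(3)
print(verify(root, chunk, 3, proof))  # False
```

Because each challenge hits a random index, a node that has lost or altered a fraction of the chunks fails a proportional share of challenges. Repeated challenges therefore make silent data loss increasingly unlikely to go unnoticed.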

The most durable digital ecosystems aren't defined by speed alone; they're defined by balance. Scalable infrastructure, adaptive intelligence and governance that endures over time are what separate lasting networks from short-lived experiments. 0G Labs, DGrid AI and PermawebDAO embody this balanced architecture. 0G Labs is strengthening the execution foundation for decentralized AI through scalable data availability and performance-focused network design. As AI applications grow more data-intensive and complex, reliable throughput becomes non-negotiable. By reinforcing the base layer, 0G Labs enables developers to deploy ambitious, high-demand applications without sacrificing decentralization or efficiency. DGrid AI enhances the ecosystem with coordinated, adaptive intelligence. Its distributed learning frameworks respond to shifting data conditions across decentralized environments, increasing operational resilience. Instead of rigid systems locked into static assumptions, DGrid AI supports intelligence that evolves alongside the networks it serves. PermawebDAO anchors the permanence and governance layer. By prioritizing immutable data preservation and transparent, decentralized stewardship, it transforms information into a long-term asset rather than a temporary artifact. Accessible and verifiable records reinforce accountability, strengthening community trust over time. Together, 0G Labs, DGrid AI and PermawebDAO illustrate a layered blueprint for Web3 growth, one grounded in scalability, adaptability and continuity. 0G_labs • dgrid_ai • permacastapp

SMART MONEY IS WATCHING THESE MOVES BEFORE THE CROWD ARRIVES. dango is entering a phase that could redefine its ecosystem, with an upcoming points program and the introduction of a CLOB (central limit order book). Incentivized participation plus deeper trading infrastructure usually signals a project preparing for major user growth. dango looks like it is laying the foundation for a highly active onchain economy. 0G_labs is doubling down on the AI narrative with infrastructure designed for decentralized intelligence at scale. As AI demand explodes, the need for open and verifiable compute becomes unavoidable, and 0G_labs positioning itself to power that future is a move that cannot be ignored. dgrid_ai is focusing on the AI layer where data, models, and decentralized networks converge. The vision of permissionless AI coordination is becoming more realistic, and dgrid_ai is aligned with where the technology is heading. It could be a key player in the AI-powered Web3 era.

In small systems, inefficiencies hide. In large systems, they compound. That's why the future of Web3 won't be decided by isolated product launches, but by whether ecosystems can scale without amplifying fragility. The structural interplay between 0G Labs, DGrid, Dango, and PermawebDAO reflects an attempt to engineer for scale across layers rather than patch problems reactively. @0G_labs addresses the coordination layer. Infrastructure defines how efficiently information and value move. Scalability and interoperability are multipliers: they either expand opportunity or magnify congestion. Optimized rails make growth additive instead of chaotic. DGrid strengthens computational capacity. As decentralized applications integrate AI inference, data indexing, and complex financial logic, compute demand rises sharply. Distributed processing lets performance scale horizontally instead of collapsing into centralized bottlenecks. @dango operates where performance becomes tangible. On-chain trading platforms compress latency, liquidity, and execution efficiency into measurable outcomes. Markets act as stress chambers. When infrastructure and compute are balanced, execution quality improves even under volatility. PermawebDAO anchors long-term coordination. Governance frameworks align incentives, while permanent data storage preserves institutional memory. Systems without memory repeat cycles. Systems with preserved state evolve more coherently. Layered together, these components reduce systemic drag. Infrastructure handles movement. Compute handles transformation. Applications handle interaction. Governance handles continuity. Complex systems that distribute strength across layers tend to survive scaling shocks. In a space defined by rapid expansion, structural balance may be the quiet differentiator between temporary momentum and durable ecosystems.

Not every project is serious, but this one looks different. 0G_labs is building tools for AI on blockchain, with a focus on speed and low cost. The roadmap is clear and updates are consistent. I think this is one project to keep on your radar.

🎙️ EPISODE THIRTY-ONE: THE LEGIBILITY LAYER @permacastapp × @dgrid_ai Making Systems Understandable at Scale As systems grow, they usually become harder to understand. More users. More data. More rules. More hidden layers. Complexity increases. Transparency decreases. Episode Thirty-One explores a different direction: What if scale increased clarity instead of obscurity? Permacast anchors podcasts permanently onto Arweave's permaweb through SmartWeave contracts. Each episode becomes immutable and verifiable. Authorship is cryptographic. History is fixed. The memory layer is legible. You can trace origin. You can verify integrity. You can rely on permanence. But understanding a system requires more than stable data. It requires visible coordination. Dgrid_AI distributes governance and intelligence across a stake-based architecture. Influence is tied to participation. Decisions are transparent. Economic exposure makes behavior measurable. The power layer is legible. In traditional systems: Algorithms are opaque. Policy changes are quiet. Influence is invisible. Participants operate in uncertainty. In a legible stack: Content history is permanent. Governance activity is observable. Stake exposure is measurable. Participants operate with clarity. When memory and governance are both visible, complexity becomes navigable instead of intimidating. Creators know their work will not be altered retroactively. Communities can observe how decisions are made. Developers can build tools without fearing silent rule shifts. AI systems operating within Dgrid_AI interact with a stable archive from Permacast. Interpretation happens above immutable history, not in place of it. Over time, this produces systemic comprehension. The larger the archive grows, the richer the context becomes. The more stakeholders participate, the clearer the incentive map becomes. The more coordination occurs, the easier it is to observe patterns. Episode Thirty-One is about clarity as infrastructure.
Not simplification through control. Transparency through design. Permacast ensures memory remains readable. Dgrid_AI ensures governance remains interpretable. Together, they create a legibility layer, where growth does not mean opacity and scale does not mean confusion. When systems are understandable, trust does not rely on blind faith. It rests on visible structure. This is The Legibility Layer. And in the long arc of durable infrastructure, legibility is the foundation of stability.