Antigen showed what adversarial AI can do for prediction markets. But that was just the first application of something much bigger.

Mixture of Adversaries isn't a product. It's a new architecture for finding truth.

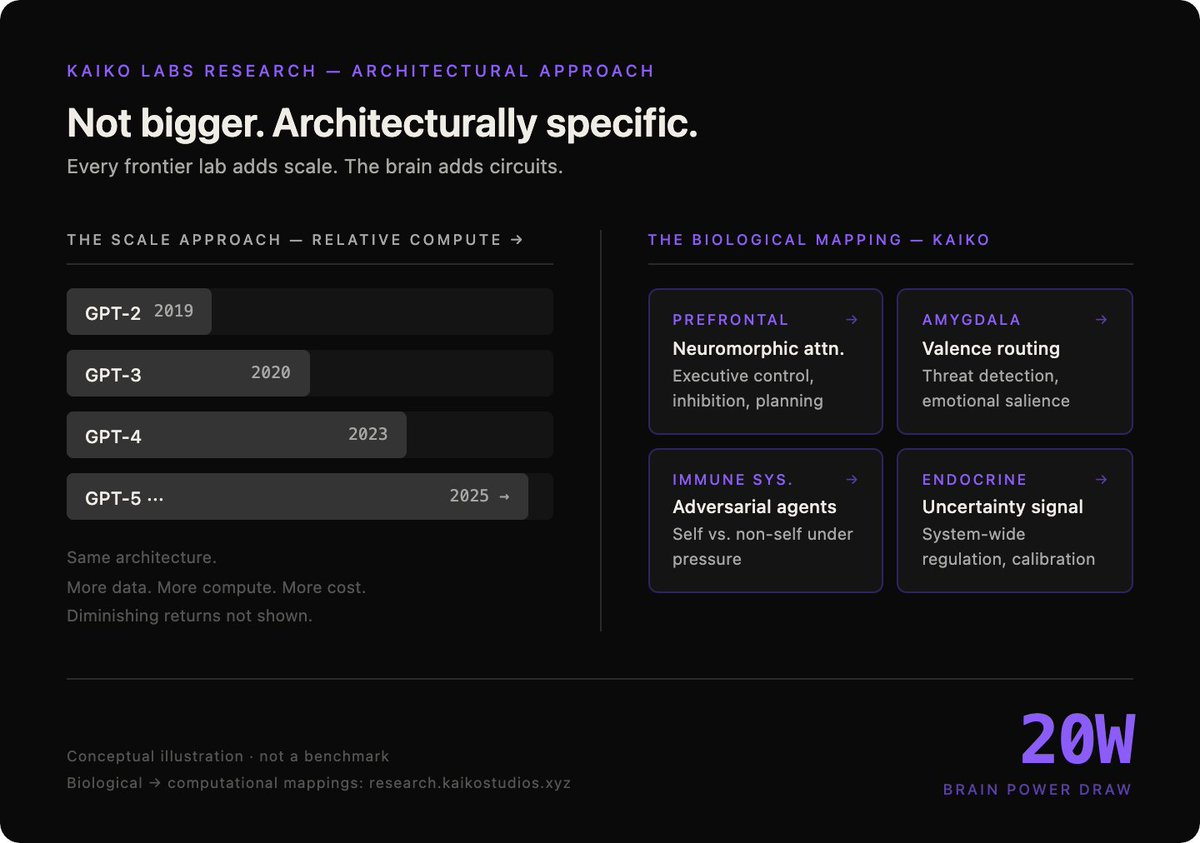

The insight: cooperation is the wrong objective. Every AI system today (MoE, self-consistency, debate) seeks agreement. But consensus amplifies shared bias. If all agents share similar training, their agreement reflects correlated errors, not independent verification.
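To make the correlated-error point concrete, here is a minimal sketch, not MoA itself. It assumes a toy model in which each agent is right with probability `p`, and with probability `rho` every agent simply copies one shared draw. The function names and the `rho` mixing model are illustrative assumptions, not taken from the system described here.

```python
from math import comb


def majority_accuracy_independent(n: int, p: float) -> float:
    """Chance that a strict majority of n independent agents is right.

    Each agent is right with probability p. Use an odd n to avoid ties.
    """
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


def majority_accuracy_shared(n: int, p: float, rho: float) -> float:
    """Same vote, under an assumed toy correlation model.

    With probability rho, all agents copy one shared draw (right with
    probability p). Otherwise they vote independently.
    """
    return rho * p + (1 - rho) * majority_accuracy_independent(n, p)


if __name__ == "__main__":
    for rho in (0.0, 0.5, 1.0):
        print(rho, majority_accuracy_shared(101, 0.7, rho))
```

With fully shared errors (`rho = 1.0`), a 101-agent majority vote is no more accurate than a single agent. Adding more agents only helps to the extent that their errors are independent.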

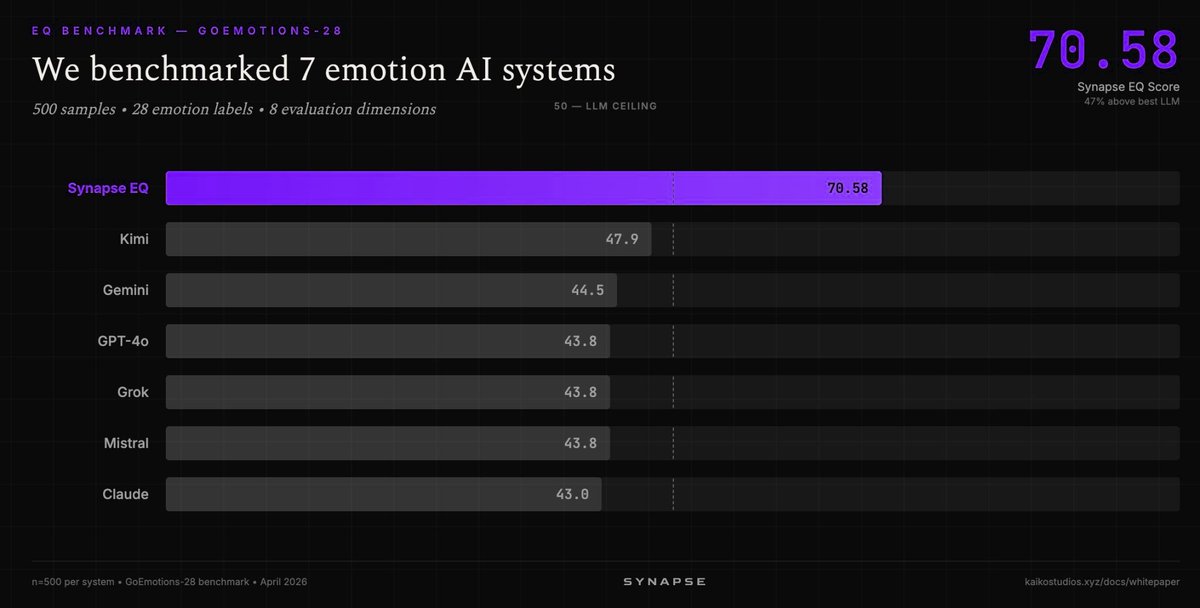

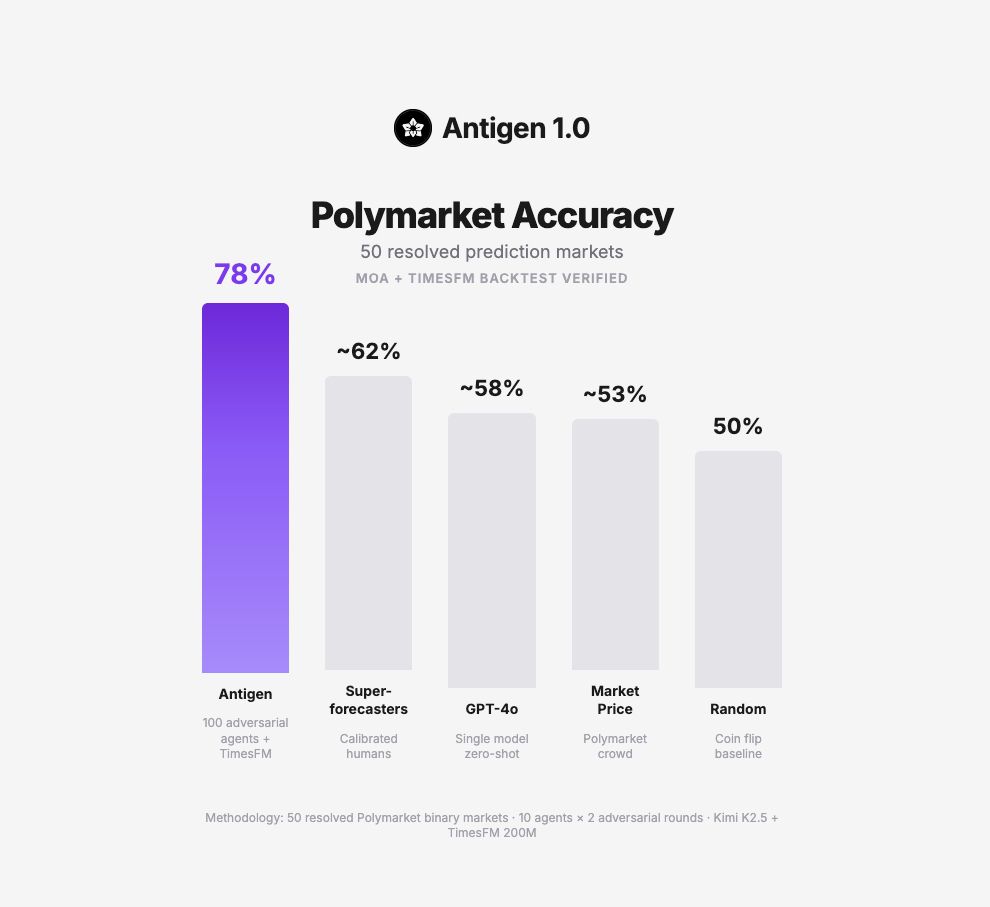

MoA does the opposite. 100 agents with deliberately misaligned reasoning frameworks. They don't agree. They attack. Claims that can't survive adversarial pressure from every direction get killed: a 13.5% kill rate across 50 live experiments. What survives is what no agent could destroy.
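The survival rule can be sketched in a few lines. This is a hedged illustration under stated assumptions, not the MoA implementation: the two attackers below are hypothetical keyword checks standing in for agents with different reasoning frameworks, and every name here is invented for the example.

```python
from typing import Callable, Sequence

# An attacker returns True when it manages to refute the claim.
Attacker = Callable[[str], bool]


def attack_absolute(claim: str) -> bool:
    """Hypothetical attacker: refutes claims stated as absolutes."""
    text = claim.lower()
    return "always" in text or "never" in text


def attack_unsourced(claim: str) -> bool:
    """Hypothetical attacker: refutes claims that cite no source."""
    return "source:" not in claim.lower()


def surviving_claims(claims: Sequence[str], attackers: Sequence[Attacker]) -> list[str]:
    """Keep only the claims that no attacker could refute."""
    return [c for c in claims if not any(attack(c) for attack in attackers)]


def kill_rate(claims: Sequence[str], attackers: Sequence[Attacker]) -> float:
    """Fraction of claims refuted by at least one attacker."""
    if not claims:
        return 0.0
    return 1 - len(surviving_claims(claims, attackers)) / len(claims)
```

The key design choice is the `any`: one successful attack is enough to kill a claim, so a survivor has to withstand every agent, not just a majority of them.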

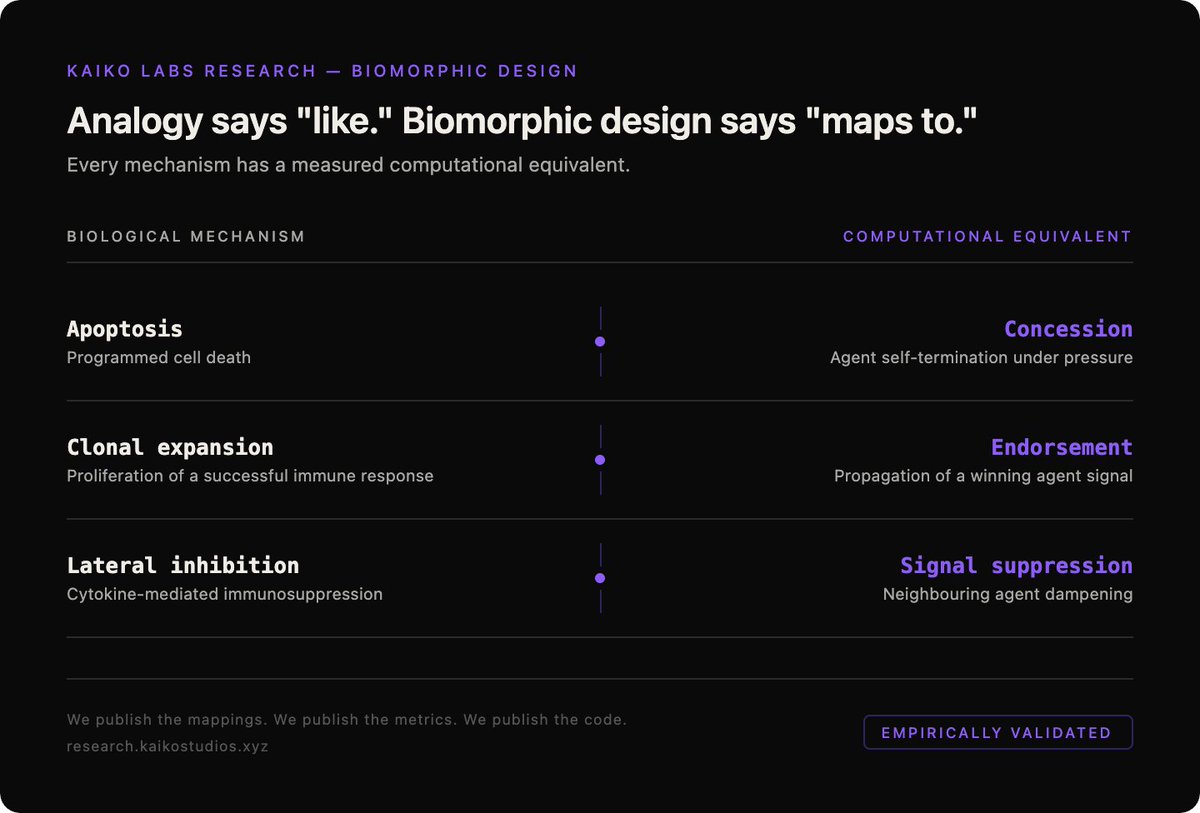

We modelled this on the immune system. Not as metaphor. As architecture.

Apoptosis. Clonal expansion. Lateral inhibition. Immune memory. Each has a direct computational equivalent in MoA.

What we built on top of it next has nothing to do with prediction markets.

It's about capital. And what happens when you stop trying to predict markets and start extracting what the market mechanically transfers to whoever shows up with the right architecture?
