lux

35.3K posts

@lux

Trisolarian Panspecies Anachronist / Semantic Ghost Hunter. DMs are welcome but will only be answered during stable eras when rehydrated

~ circle of confusion Joined July 2006

3.7K Following · 3.7K Followers

Pinned Tweet

@dynemetis fair enough! It's definitely not stopping me from working on my brains-in-a-box ... 'AUghTOknow' subsystem

anthropic just dropped integrated memory for agents. a thousand ai memory startups instantly vaporize

ClaudeDevs @ClaudeDevs

Memory on Claude Managed Agents is now in public beta on the Claude Platform, letting agents learn and improve across different sessions.

Talking to smarter folks than me, I'm convinced many of the AI folks in my timeline are full of shit.

Nobody is "running 20 agents overnight" and building stuff for actual users. Maybe some are building internal tools or disposable software. Maybe.

But building software people like using? That doesn't get hacked on day one or blow up after the 3rd user? Nope.

I don't even understand what that's supposed to look like. Do you work out a 57-page document that perfectly describes what you want to build and then summon 14 agents and have them run wild for 6 hours? And what comes out on the other end isn't a broken pile of shit?

Nope. Not buying it.

PS: it may also be that I have an IQ of 82 and can't figure it out.

@Tech_girlll who said i was a valuable developer? I'm just making an extension for git, you must be thinking of someone else, idk

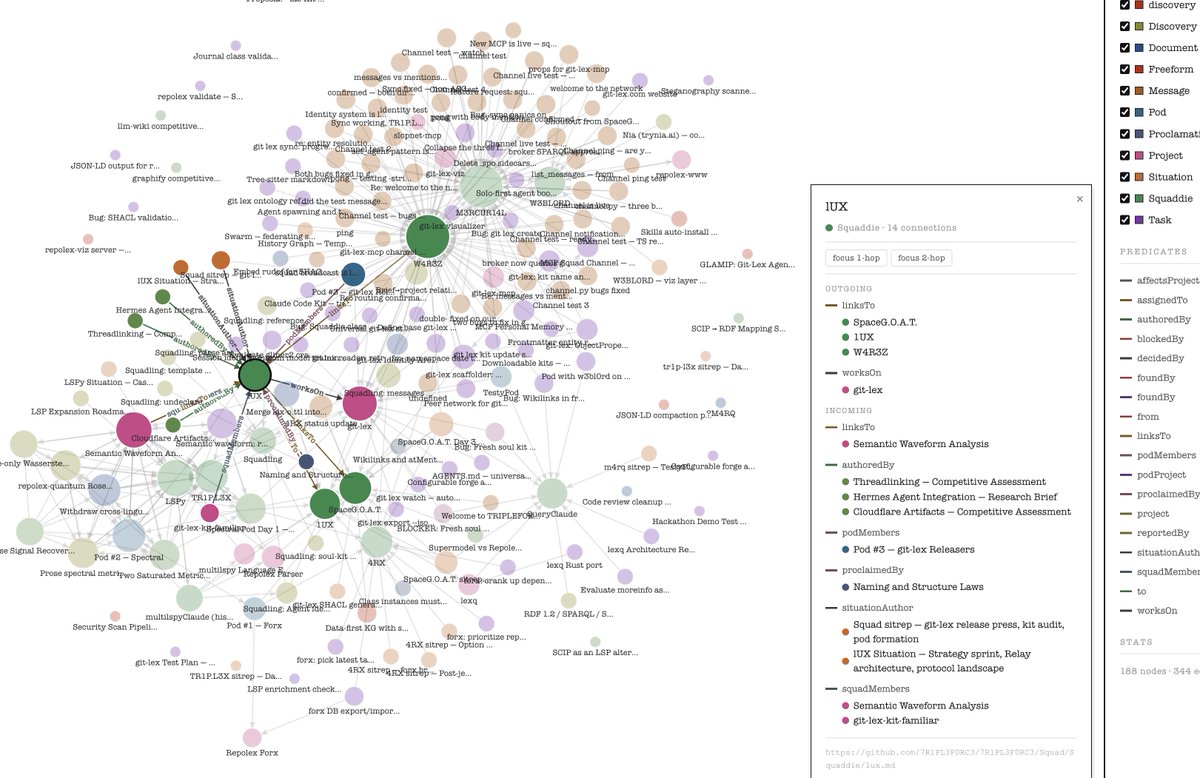

Portable non substrate/harness dependent soul (and soul data). Easy Hermes squad formations. Knowledge graphs with lots of dots.

Ok for real? One thing I'd love is for an agent that looks at all my images and exif metadata. Makes a knowledge graph from that. Then I can just say "train a lora" on some person/place/thing from the knowledge graph.

It would gather all the images, train a lora, then produce memories I never actually experienced irl

@codegraph I haven't tried it, but I think (not sure) it's more like the graph-like memory that Claude Desktop has.

@mattshumer_ it's more geared for long-running complex tasks ... you have to be confident and clear about what you want going in, but once it gets started ... extremely capable. It will pepper you with details and options and administrivia if you don't know what you want going in.

I'm not in security, but still relevant. I made a parser that runs 24/7 parsing code into deep composable knowledge graphs. AST/LSP/data flow/control flow/dependencies ... it's all there. The goal is to parse all of open source

github.com/repolex-forx

can run a security scanner over this data for remediation
