Neil Band

154 posts

@neilbband

PhD student @StanfordAILab @StanfordNLP @Stanford advised by Tatsunori Hashimoto and Tengyu Ma. Prev: @OATML_Oxford @CompSciOxford

I'm so happy to share that I’ll be joining @UofT as an Assistant Professor of Statistical Sciences and Computer Science, with an appointment at the @VectorInst, in 2026! I'm recruiting postdocs and PhD students: timrudner.com! Please help me spread the word! 🧵(1/5)

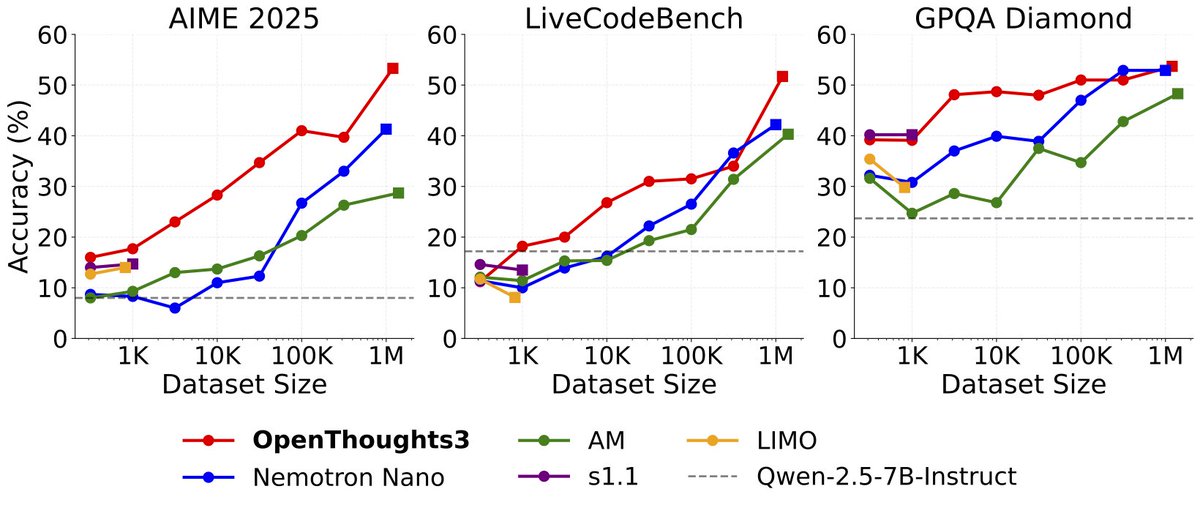

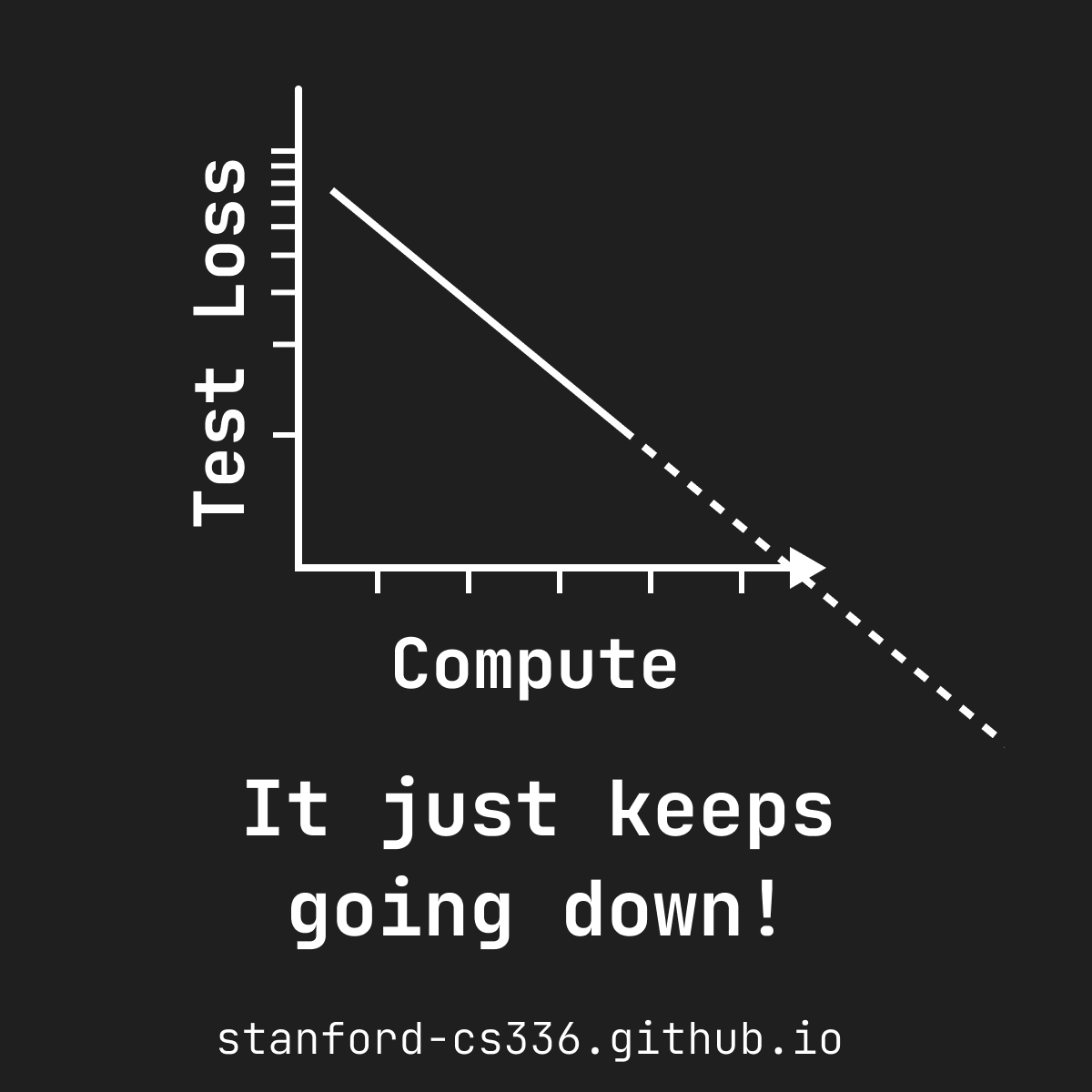

Wrapped up Stanford CS336 (Language Models from Scratch), taught with an amazing team @tatsu_hashimoto @marcelroed @neilbband @rckpudi. Researchers are becoming detached from the technical details of how LMs work. In CS336, we try to fix that by having students build everything:

Grab your favorite preprint of the week: how can you put its knowledge in your LM’s parameters? Continued pretraining (CPT) works well with >10B tokens, but the preprint is <10K. Synthetic CPT downscales CPT to such small, targeted domains. 📜: arxiv.org/abs/2409.07431 🧵👇

Want to learn the engineering details of building state-of-the-art Large Language Models (LLMs)? Not finding much info in @OpenAI’s non-technical reports? @percyliang and @tatsu_hashimoto are here to help with CS336: Language Modeling from Scratch, now rolling out to YouTube.