Reviewer3

299 posts

@reviewer3com

Multi-agent peer review trusted by thousands of researchers.

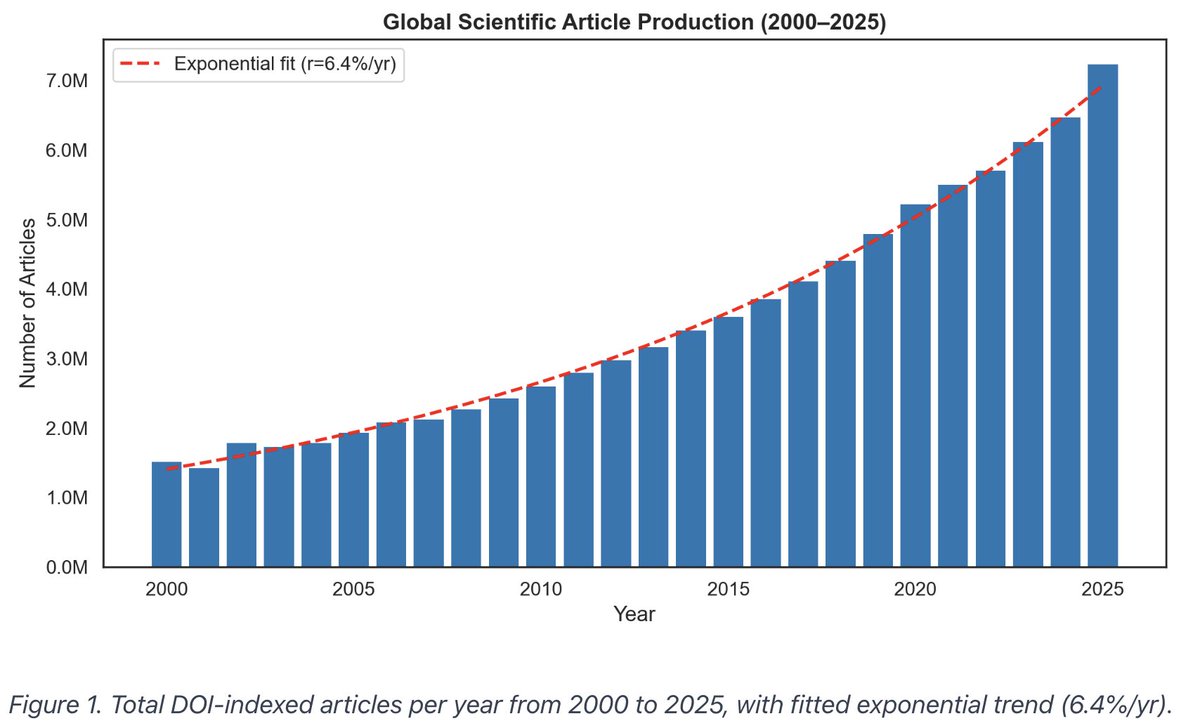

Ok this figure is pretty intimidating...

Hot take for the future of peer review: Journals should start asking for a lightweight AI-based replication check (e.g., via Claude) at submission. Not to replace reviewers, but to catch coding errors, logic inconsistencies, and reproducibility issues before a paper reaches them.

At this point, many of these checks are fast, cheap, and automatable. There’s little reason to rely solely on human detection.

Even with restricted data, this is feasible. Authors can generate simulated datasets that preserve structure and run identical pipelines. The goal is just basic verification.

More broadly, we need to rethink how we use reviewer time. Not every submission needs 3 full human reviews. A more efficient pipeline might look like: editorial triage, AI-assisted checks/review, targeted human evaluation where it matters most.

If done well, this could raise standards while reducing burden on the system.
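To make the simulated-dataset idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a method the post describes: the function name `simulate_like` is hypothetical, each column is sampled independently (so correlations between columns are not preserved), numeric columns are drawn from a normal distribution matching the observed mean and standard deviation, and other columns are resampled from the observed values. Real restricted data would likely need a stricter privacy treatment than this.

```python
import random
import statistics


def simulate_like(rows, n, seed=0):
    """Return n synthetic rows with the same columns as `rows`.

    Hypothetical helper: keeps column names and rough per-column
    distributions only; it does NOT preserve cross-column correlations.
    """
    rng = random.Random(seed)
    columns = list(rows[0].keys())
    samplers = {}
    for col in columns:
        values = [r[col] for r in rows]
        is_numeric = all(
            isinstance(v, (int, float)) and not isinstance(v, bool)
            for v in values
        )
        if is_numeric:
            # Assumption: a normal distribution is an acceptable stand-in.
            mu = statistics.fmean(values)
            sigma = statistics.pstdev(values)
            samplers[col] = lambda mu=mu, sigma=sigma: rng.gauss(mu, sigma)
        else:
            # Resample observed categories at their observed frequencies.
            samplers[col] = lambda values=values: rng.choice(values)
    return [{col: samplers[col]() for col in columns} for _ in range(n)]


# Toy stand-in for a restricted dataset.
real = [
    {"age": 30, "group": "a"},
    {"age": 40, "group": "b"},
    {"age": 50, "group": "a"},
]
fake = simulate_like(real, 5, seed=1)
```

The same analysis script could then be run on `fake` in place of `real`, which checks that the pipeline executes end to end without exposing the underlying records.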

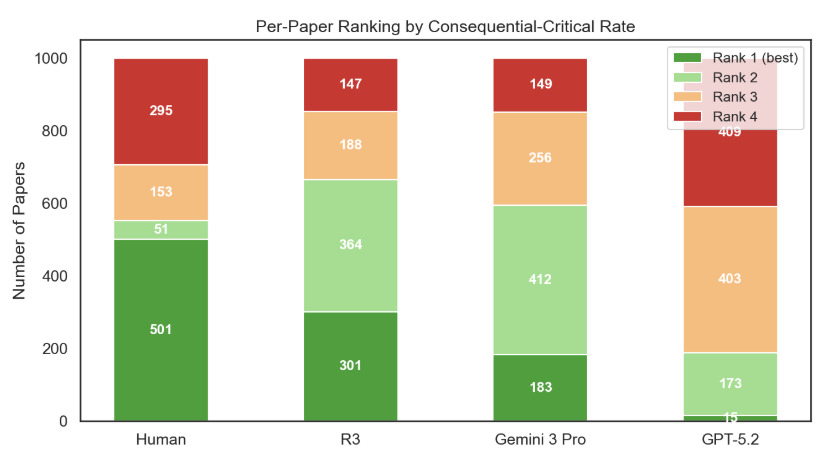

Sneak peek at some data from our benchmark on 80,000+ human and AI review comments!

What's really interesting to me is that GPT 5.2 and Gemini 3 Pro, with minimal prompting, produce extremely structured review comments, nearly as structured as R3 despite R3 being multi-agent.

We defined the structural elements of a peer review:
- the specific issue identified (specificity)
- why it is a problem (rationale)
- how to fix it (actionability)
- where it is in the text (anchoring)

We pulled these out of every human and AI review comment and measured their rate of occurrence per paper.
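One way to read "rate of occurrence per paper" is sketched below in Python. This is an assumption about the metric, not the benchmark's actual code: it takes comments that have already been labeled with the four elements and computes, for each paper, the fraction of its comments containing each element. The function name and the input layout (`paper_id`, `elements`) are hypothetical.

```python
from collections import defaultdict

ELEMENTS = ("specificity", "rationale", "actionability", "anchoring")


def element_rates_per_paper(comments):
    """Map each paper_id to the share of its comments with each element.

    `comments` is an iterable of dicts with a 'paper_id' and a set of
    labels under 'elements' (hypothetical layout for illustration).
    """
    counts = defaultdict(lambda: {e: 0 for e in ELEMENTS})
    totals = defaultdict(int)
    for comment in comments:
        pid = comment["paper_id"]
        totals[pid] += 1
        for element in ELEMENTS:
            if element in comment["elements"]:
                counts[pid][element] += 1
    return {
        pid: {e: counts[pid][e] / totals[pid] for e in ELEMENTS}
        for pid in totals
    }


# Made-up labels, purely to show the shape of the input.
comments = [
    {"paper_id": "p1", "elements": {"specificity", "rationale"}},
    {"paper_id": "p1", "elements": {"specificity"}},
    {"paper_id": "p2", "elements": {"anchoring"}},
]
rates = element_rates_per_paper(comments)
```

If the intended metric is instead a raw count of occurrences per paper, dropping the division by `totals[pid]` gives that variant.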