Spheron Network

@spheron

Building the world's largest community-powered data center for AI workloads, aka DePIN compute

This year, there won’t be enough memory to meet worldwide demand because powerful AI chips made by the likes of Nvidia, AMD and Google need so much of it. cnb.cx/45cUMbG

I ran out of black socks, so @spheron it is

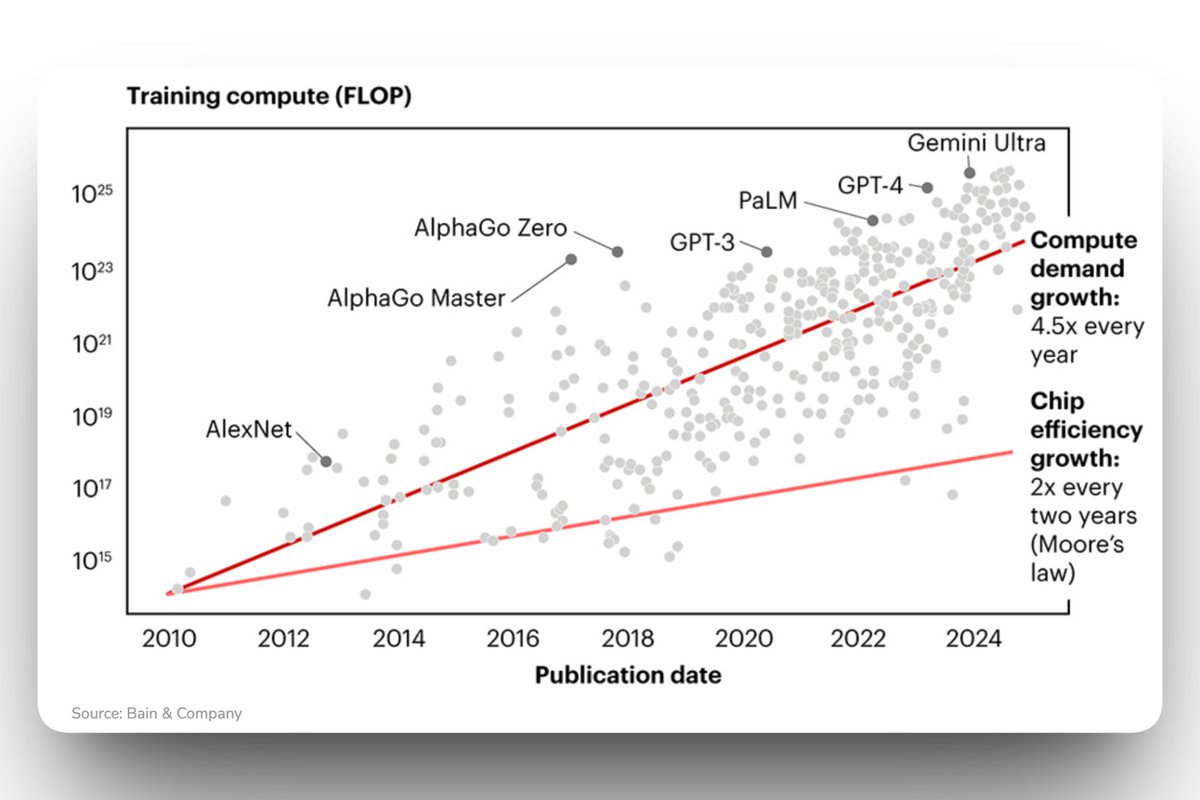

Total AI compute is doubling every 7 months. We tracked quarterly production of AI accelerators across all major chip designers. Since 2022, total compute has grown ~3.3x per year, enabling increasingly larger-scale model development and adoption. 🧵
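The two growth figures in this post describe the same trend. As a quick consistency check (our own arithmetic, not taken from the thread), a doubling time of $T_d$ months corresponds to an annual growth factor of $2^{12/T_d}$:

$$
g_{\text{year}} = 2^{12/T_d} = 2^{12/7} \approx 3.28,
\qquad
T_d = \frac{12 \ln 2}{\ln g_{\text{year}}} = \frac{12 \ln 2}{\ln 3.3} \approx 6.97 \text{ months}.
$$

So "doubling every 7 months" and "~3.3x per year" agree to within rounding.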

Everyone keeps asking if AI browsers are about to replace Chrome. I don’t see it happening anytime soon. We already have browsers people trust. Unless someone builds something truly new and wild, most users aren’t switching. Google will just absorb AI into what already exists, and ChatGPT will likely live inside Chrome, not replace it. The bigger issue is trust. If people don’t trust an AI to protect their data, they’re not letting it log into their bank, shop for them, or make decisions on their behalf. That skepticism isn’t going away fast, which means adoption will be slower than the hype suggests. AI isn’t losing. It’s just going to show up everywhere quietly, not take over overnight. #AITrends #TechTalk #FutureOfAI #DigitalTrust #ProductAdoption

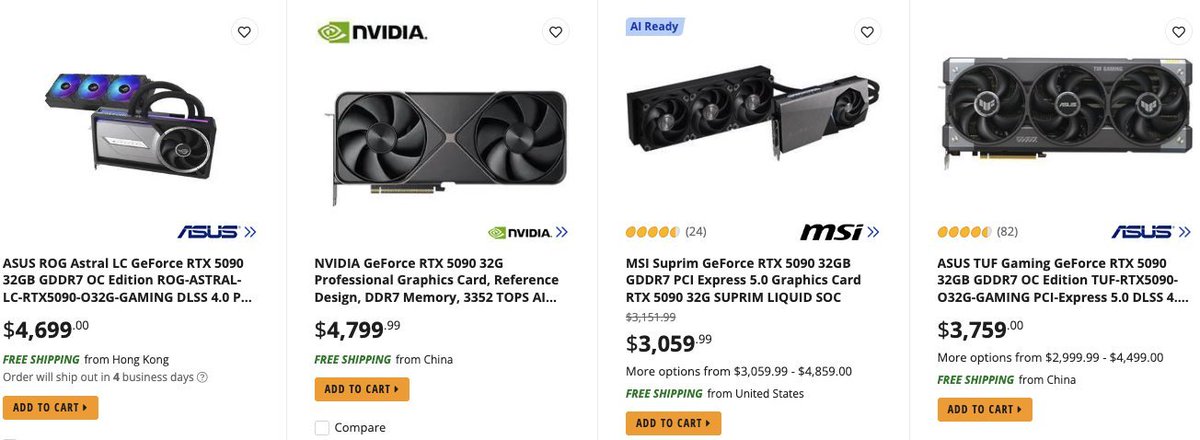

BREAKING: GPUs to see massive price hikes this new year. The RTX 5090 will reportedly cost $5,000 going forward vs $2,000 previously.

Amazon $AMZN AWS has raised pricing for EC2 Capacity Blocks for ML by about 15%, with H200-based p5e.48xlarge moving from $34.61 to $39.80 per hour in most regions and p5en.48xlarge from $36.18 to $41.61, while US West (N. California) p5e went from $43.26 to $49.75 (The Register)
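The "about 15%" figure can be checked directly against the quoted hourly prices. The short sketch below is our own arithmetic, not from The Register or AWS. It hard-codes only the three price pairs quoted in the post, and the `PRICES` dict and `pct_increase` helper are hypothetical names chosen for illustration:

```python
# Hourly EC2 Capacity Block for ML prices quoted in the post (USD/hour), old -> new.
# Hypothetical data structure for illustration; figures copied from the post above.
PRICES = {
    "p5e.48xlarge (most regions)": (34.61, 39.80),
    "p5en.48xlarge (most regions)": (36.18, 41.61),
    "p5e.48xlarge (US West, N. California)": (43.26, 49.75),
}


def pct_increase(old: float, new: float) -> float:
    """Return the relative price change from old to new, in percent."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    # Print each instance's old price, new price, and percentage increase.
    for instance, (old, new) in PRICES.items():
        print(f"{instance}: ${old:.2f} -> ${new:.2f} (+{pct_increase(old, new):.1f}%)")
```

Each of the three pairs rounds to a 15.0% increase, so the hike appears to be a uniform multiplier rather than a per-region adjustment.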

Reports suggest NVIDIA and AMD are preparing another round of GPU price hikes starting this month. Early signs already look worrying. The RTX 5090 is expected to jump from around $2,000 to nearly $5,000. And this does not stop at one model. According to the report, both companies may continue raising prices month after month across their entire lineup, including consumer GPUs, AI accelerators, and server-grade hardware. This matters because it confirms what many teams are already feeling. Owning GPUs is becoming riskier and more expensive, not just upfront but over time. Price volatility, supply constraints, and long replacement cycles make hardware ownership a liability. This is exactly why access beats ownership. As GPU prices climb, flexible compute, such as renting GPUs, is no longer a nice-to-have. It is the safer default. Source: mobile.newsis.com/view/NISX20251…