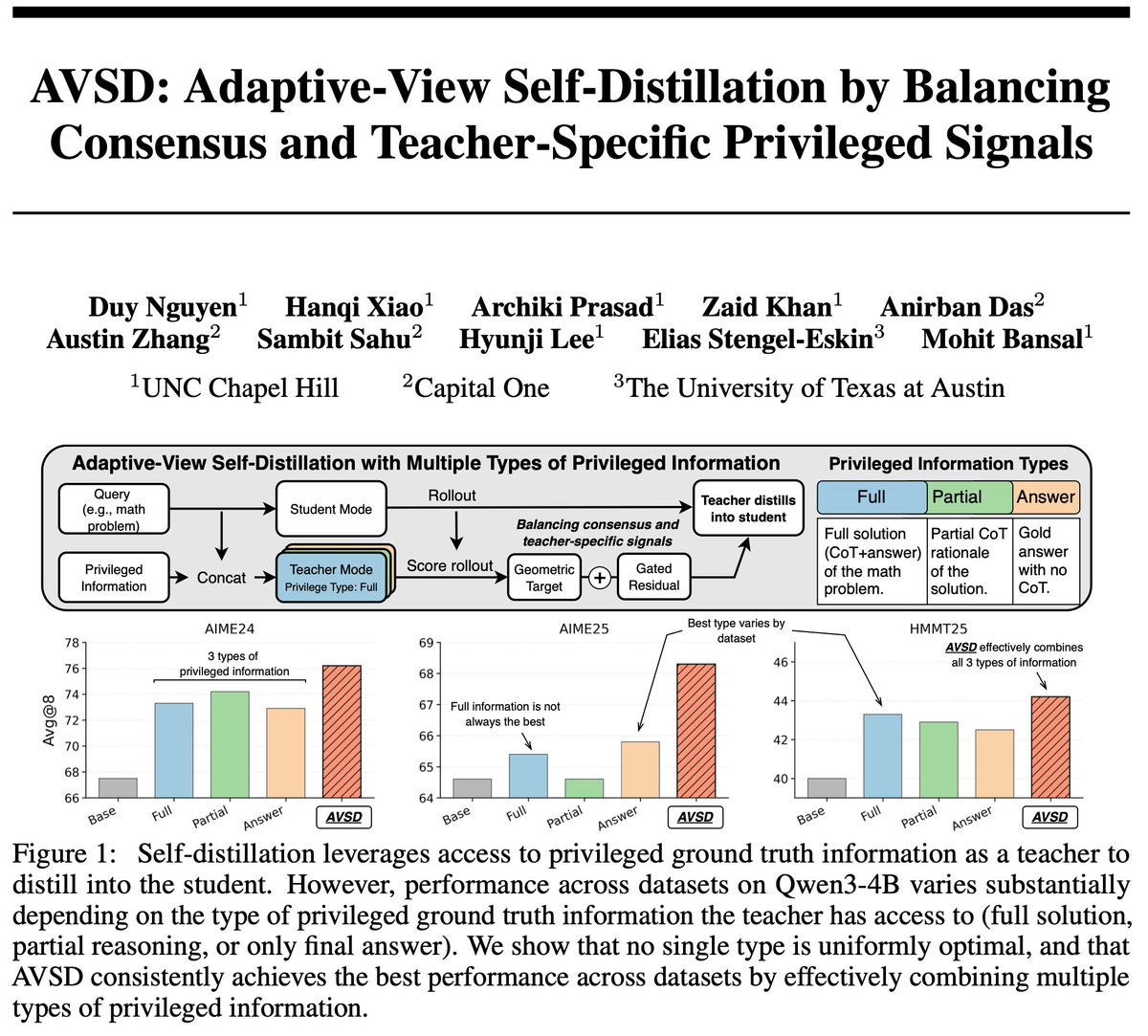

Sparse binary rewards bottleneck LLM RL, motivating the use of privileged information in self-distillation as dense teachers. How can we use and balance multiple types of privileged info: leveraging stable cross-view info, while preserving view-specific info? Current on-policy self-distillation methods often condition the teacher on only one type of privileged view: full solution, partial rationale, answer-only, reference code, feedback, etc. This can be suboptimal: 1️⃣ No single privileged view consistently performs best when used as a teacher. 2️⃣ Views can introduce teacher-specific artifacts from information unavailable to the student. 🧠 Adaptive-View Self-Distillation (AVSD) considers multiple privileged views jointly as a teacher family, balancing cross-view consensus and view-specific signals through a token-level gate to construct better dense learning signals. 🧵👇