Antonio Oroz

🚀Echo-2 is here - our new world model! These aren’t videos. These are 𝟑𝐃 𝐬𝐜𝐞𝐧𝐞𝐬. Generated from a single image.
- Stunning visual quality.
- Real-time rendering.
- Interactive camera control.
- Physically grounded.

🧵More details👇

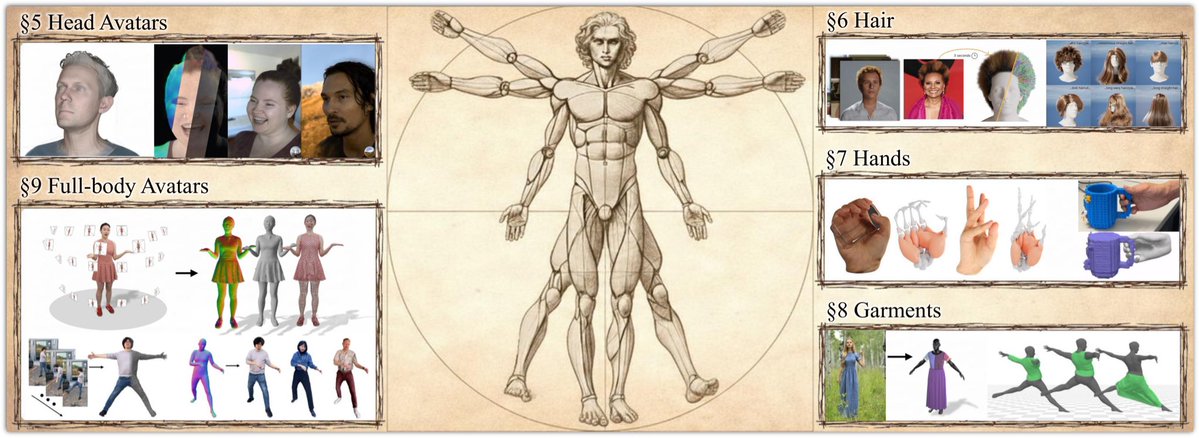

Want to create an avatar from a single image? FlexAvatar is a transformer model that creates a full 360°, high-quality, expressive 3D head avatar from a single portrait image in minutes.

Real-time Demo: FlexAvatar's lightweight architecture allows both animation and rendering in real time, enabling interactive user experiences. Creating a new 3D head avatar requires only one image, e.g., from a webcam. The final avatar is ready after 2 minutes.

Architecture: Under the hood, FlexAvatar adopts a transformer-based encoder-decoder design. The encoder maps the input image into a latent avatar space, while the decoder produces 3D Gaussian attribute maps by incorporating the animation signal via cross-attention. The model learns all facial animations directly from the data without relying on pre-built 3D face models, which gives the avatars realistic facial expressions.

The internal avatar latent space can also be used to integrate additional observations of a person via fitting. This enables use cases where more than one image of a person is available, e.g., from a phone scan.

We train jointly on 2D monocular videos and multi-view data. However, in monocular videos, the animation signal leaks the target viewpoint, causing the model to produce incomplete 3D heads. We call this phenomenon entanglement of driving signal and target viewpoint.

To prevent entanglement, we introduce bias sinks. These are learnable tokens that indicate whether a training sample stems from a monocular or a multi-view dataset. During training, the model learns to produce incomplete 3D heads only when the monocular token is present. During inference, FlexAvatar always uses the multi-view token, for which the model has learned to produce complete 3D heads. This simple design makes it possible to combine the generalizability of monocular data with the quality of multi-view data.

FlexAvatar summary:
- Input: Single-image, phone scan, or monocular video
- Output: Full 360° head avatar
- Expressive animations
- Real-time rendering and animation
- Generalization to any portrait
- Create a new avatar in 2 minutes
- Use bias sinks to combine 2D and 3D data

🏠tobias-kirschstein.github.io/flexavatar/
🌍arxiv.org/pdf/2512.15599
🎥youtu.be/g8wxqYBlRGY

Great work by @TobiasKirschst1 and @SGiebenhain!
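
To make the bias-sink idea from the FlexAvatar post concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. The names `BiasSinks` and `build_conditioning`, the token width, and the choice to append the token to the animation tokens are all assumptions for illustration; the post only states that one learnable token per data source exists and that inference always uses the multi-view one.

```python
import random


class BiasSinks:
    """One token per data source (hypothetical helper, not FlexAvatar's API).

    In a real model these vectors would be trainable parameters;
    here they are plain lists of floats.
    """

    def __init__(self, dim, seed=0):
        rng = random.Random(seed)
        self.tokens = {
            "monocular": [rng.gauss(0.0, 0.02) for _ in range(dim)],
            "multi_view": [rng.gauss(0.0, 0.02) for _ in range(dim)],
        }

    def select(self, source, training):
        # Training: the token matches the dataset the sample came from.
        # Inference: always the multi-view token, regardless of the input.
        key = source if training else "multi_view"
        return self.tokens[key]


def build_conditioning(animation_tokens, sinks, source, training):
    """Return a new token list with the chosen sink token appended.

    Appending to the animation tokens is an assumed placement.
    """
    return animation_tokens + [sinks.select(source, training)]


sinks = BiasSinks(dim=4)
animation = [[0.0] * 4, [1.0] * 4]  # stand-in animation signal tokens
train_seq = build_conditioning(animation, sinks, "monocular", training=True)
infer_seq = build_conditioning(animation, sinks, "monocular", training=False)
```

In this sketch, a monocular sample sees the monocular token during training, so any view-dependent incompleteness can be attributed to that token. At inference the same input is paired with the multi-view token instead.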

🚀 Announcing Echo, our new frontier model for 3D world generation. Echo turns a simple text prompt or image into a fully explorable, 3D-consistent world. Instead of disconnected views, the result is a single, coherent spatial representation you can move through freely.

This is part of a bigger shift in AI: from generating pixels and tokens to generating spaces. Echo predicts a geometry-grounded 3D scene at metric scale, meaning every novel view, depth map, and interaction comes from the same underlying world, not independent hallucinations.

Once generated, the world is interactive in real time. You control the camera, explore from any angle, and render instantly, even on low-end hardware, directly in the browser. High-quality 3D world exploration is no longer gated by expensive equipment.

Under the hood, Echo infers a physically grounded 3D representation and converts it into a renderable format. For our web demo, we use 3D Gaussian Splatting (3DGS) for fast, GPU-friendly rendering, but the representation itself is flexible and can be easily adapted.

Why this matters: consistent 3D worlds unlock real workflows such as digital twins, 3D design, game environments, robotics simulation, and more. From a single photo or a line of text, Echo builds worlds that are reliable, editable, and spatially faithful.

Echo also enables scene editing and restyling. Change materials, remove or add objects, explore design variations, all while preserving global 3D consistency. Editing no longer breaks the world.

This is only the beginning. Echo is the foundation for future world models with dynamics, physical reasoning, and richer interaction: environments that don’t just look right, but behave right.

Explore the generated worlds on our website and sign up for the closed beta. The era of spatial intelligence starts here. 🌍

#Echo #WorldModels #SpatialAI #3DFoundationModels

Check it out: spaitial.ai
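
The claim that every novel view and depth map comes from the same underlying metric-scale world can be illustrated with a small geometric sketch. This is not Echo's pipeline. It assumes a standard pinhole camera with made-up intrinsics (`fx`, `fy`, `cx`, `cy`) and a camera that only translates, and it shows that once a pixel has a metric 3D position, its location in any other view follows from geometry instead of being generated independently.

```python
def unproject(u, v, depth, fx, fy, cx, cy):
    """Lift pixel (u, v) with metric depth to a 3D point in camera coordinates."""
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def to_shifted_camera(point, shift):
    """Express a point in the frame of a camera translated by `shift` (no rotation)."""
    return tuple(p - s for p, s in zip(point, shift))


def project(point, fx, fy, cx, cy):
    """Project a 3D camera-space point to pixel coordinates, or None if behind the camera."""
    x, y, z = point
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical intrinsics for a 640x480 image.
FX, FY, CX, CY = 500.0, 500.0, 320.0, 240.0

# A pixel 100 px right of the image center, 2 m away.
world_point = unproject(420.0, 240.0, 2.0, FX, FY, CX, CY)

# Move the camera 0.4 m to the right and re-render the same point.
novel_view_pixel = project(to_shifted_camera(world_point, (0.4, 0.0, 0.0)), FX, FY, CX, CY)
```

Because the point sits 0.4 m to the right of the original camera, shifting the camera by that amount places it on the optical axis of the new view, so it lands at the image center. Metric scale is what makes the 0.4 m shift meaningful here.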