AquaVitae

169 posts

@AquaVitae

Stonks, Options trading, $NVDA, $NDX, $MU options

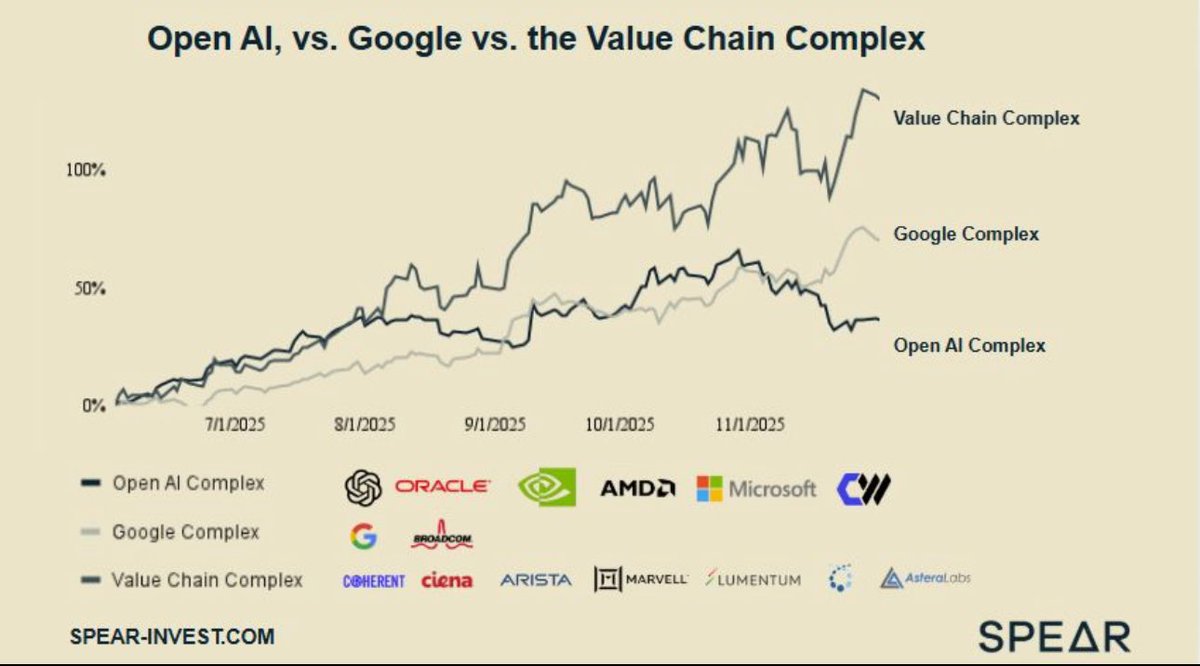

Robotics and CPU is the next theme setting up. If you still don’t see it, $ARM is the name getting ready for a real move. We already saw it with $MRVL and $NBIS. Then came space. Next - $ARM

@jukan05 Only a matter of time till there's a Truth Social post about Korea and memory prices

So what's up with Micron Technology $MU? Why did so many analysts lower their price targets but still rate it as Bullish? A bit contradictory, isn't it? Well, here's the answer.

The DRAM spot prices have been mostly flat. The 'recovery' didn't come as soon as they initially thought. They are humans too and got euphoric too early. Remember, many predicted the rate cuts to happen earlier this year instead of last week. Rates have a lot of influence on cyclicals.

There is also this thing called DRAM contract prices, which are different from spot prices. A contract, as the name implies, is an agreement between Micron and the buyer. These contract prices have actually gone up 5-10%, which is bullish for Micron in the near term BUT.. the next wave of contracts might be neutral, because spot prices, a good indicator of DRAM demand, have been mostly flat.

So why do companies make contracts? Two reasons: it's more predictable on their balance sheet, and it acts as a hedge against unexpected DRAM price fluctuations. My suspicion is that contract prices got inflated early this year due to the whole AI and Rate Cut euphoria, and companies rushed in to make DRAM contracts before prices went up 'too much'. So while DRAM contracts may not be a problem for Q4 (Weds), they are an uncertainty for Q1/Q2 2025. The recovery is on its way but the rate cuts didn't happen until just a week ago. I don't know how fast the benefits of rate cuts will materialize in DRAM prices.

So what else can $MU do? Answer: SHIP a TON of HBM (Super Fast AI Memory) to $NVDA, $AMD and $AVGO. In Q3 Micron made about $100M in HBM sales. I am expecting about $200-300M in Q4 HBM sales and I am guessing $400-500M for Q1 2025. HBM is several times more expensive than standard DRAM and it's highly margin accretive (it adds a shit ton of earnings per share). They are expecting billions for 2025, and having more than just $NVDA in its customer base will help Micron re-negotiate prices to more of a premium (10% more in 2025). Furthermore, over the last 2 quarters, Micron has been making huge improvements on HBM wafer yields (as all semi companies do over time) and this will add extra margin to their dollars.

The market reaction will be all about guidance. The double beat is a requirement. It's already baked in. So IF Micron is able to surprise the market with huge HBM sales guidance for the next couple of quarters, the market could react positively. I think elevated DRAM contract prices will not come until the 2nd half of 2025. Neutral DRAM + Bullish HBM could move the needle.

On our charts, $MU is still BLUE candled, meaning its bullish cycle remains intact. Remember, playing earnings can be nerve-wracking even if you have high conviction. I am long $MU; we are not selling until DRAM prices have fully recovered.
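
To make the HBM ramp in the post concrete, here is a minimal sketch of the sequential growth implied by the quoted figures (about $100M in Q3, $200-300M expected in Q4, $400-500M guessed for Q1 2025). The inputs are the post's own estimates, not reported results, and the helper name `growth_range` is made up for illustration.

```python
# Back-of-the-envelope check of the HBM sales path quoted in the post.
# All figures are the post's estimates in millions of USD, not reported results.
from typing import Tuple

Range = Tuple[float, float]  # (low estimate, high estimate)


def growth_range(prev: Range, nxt: Range) -> Range:
    """Return the (lowest, highest) sequential growth implied by two estimate ranges."""
    lowest = min(nxt) / max(prev) - 1.0   # weakest next quarter vs strongest prior quarter
    highest = max(nxt) / min(prev) - 1.0  # strongest next quarter vs weakest prior quarter
    return lowest, highest


hbm_sales = {
    "Q3": (100.0, 100.0),       # single point estimate from the post
    "Q4": (200.0, 300.0),
    "Q1 2025": (400.0, 500.0),
}

quarters = list(hbm_sales)
for prev_q, next_q in zip(quarters, quarters[1:]):
    lo, hi = growth_range(hbm_sales[prev_q], hbm_sales[next_q])
    print(f"{prev_q} -> {next_q}: {lo:.0%} to {hi:.0%} sequential growth")
```

Under these inputs, Q3 to Q4 works out to a 100% to 200% jump, and Q4 to Q1 2025 to roughly 33% to 150%, which is the kind of guidance surprise the post is betting on.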

30-year Treasury bonds yield 5% now. $1 million invested is about $4,200/month. Completely risk free & state tax free. That’s $50k/year paid out for 30 years. Then you get the million back after 30 years. Why aren’t more people doing this? 🤔
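
The arithmetic behind that post can be sketched as follows. This is a rough pre-tax illustration that assumes the bond is bought at par with a 5% coupon; the function name `coupon_income` is hypothetical. Treasury bonds actually pay interest semiannually, so the monthly number is only an equivalent, not a payment schedule.

```python
def coupon_income(principal: float, annual_yield: float, payments_per_year: int = 2) -> dict:
    """Pre-tax coupon income for a bond bought at par (an assumption of this sketch)."""
    annual = principal * annual_yield
    return {
        "annual": annual,
        "per_payment": annual / payments_per_year,  # Treasuries pay twice a year
        "monthly_equivalent": annual / 12,          # for comparison with the post only
    }


income = coupon_income(1_000_000, 0.05)
print(f"Annual coupon:      ${income['annual']:,.0f}")
print(f"Each semiannual:    ${income['per_payment']:,.0f}")
print(f"Monthly equivalent: ${income['monthly_equivalent']:,.0f}")
```

The annual figure matches the post's $50k/year; the monthly equivalent comes out slightly under the rounded $4,200 the post quotes.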

$NVDA Rubin is Nvidia’s next rack‑scale AI platform after GB300, pairing a new GPU family with the Vera CPU and a refreshed NVLink/NVSwitch fabric. The platform’s headline architectural change is that the scale‑up network inside the rack moves from thousands of discrete twinax cables to a central printed‑circuit midplane with blind‑mate connectors. When Jensen said Rubin is “completely cableless,” he was referring to this internal rack interconnect and power distribution: GPU compute blades and NVLink switch blades now plug into a rigid midplane/bus structure instead of being laced together with hand‑routed copper bundles. It does not mean the system has no external cabling or cooling manifolds; it means the in‑rack scale‑up fabric and much of the in‑rack power wiring are now implemented as a backplane with blind‑mate interfaces. Nvidia’s own materials for Vera Rubin NVL144 call this out explicitly: the compute tray is 100% liquid‑cooled and a “central printed circuit board midplane replaces traditional cable‑based connections,” and Kyber, Rubin’s successor rack generation, uses a “cable‑free midplane” that integrates NVLink switch blades at the rear. This is the physical basis for “no cables/wires.”

Functionally, Rubin expands the NVLink domain relative to GB300 and formalizes disaggregated inference. On the GPU side, there are 2 distinct parts: the standard Rubin GPU, optimized for bandwidth‑bound “generation” with large HBM4 capacity and NVLink scale‑up, and Rubin CPX, a separate, monolithic GPU optimized for the compute‑bound “context” phase with 30 PFLOPs NVFP4 and 128 GB of GDDR7. Nvidia positions the combined Vera Rubin NVL144 CPX rack as a single‑rack inference engine with 8 exaFLOPs NVFP4, 100 TB of fast memory and 1.7 PB/s memory bandwidth, delivering roughly 7.5x the AI performance of a GB300 NVL72 rack. Nvidia’s description of CPX and the NVL144 CPX rack is unambiguous: CPX handles million‑token contexts and long‑video prefill, while standard Rubin services generation; the rack aggregates 144 Rubin plus 144 Rubin CPX GPUs with 36 Vera CPUs. Timeline guidance remains 2026 availability for the Rubin platform, with Rubin and Vera having taped out and associated “NVLink 144” and Spectrum‑X switches in fab.

Why eliminate the internal cable harness now? Three reasons. First, signaling physics. NVLink 6 is widely reported to double per‑GPU bidirectional bandwidth versus NVLink 5; every doubling on copper halves practical reach. GB200 NVL72 already needed more than 5,000 copper cables to tie 72 GPUs to the NVSwitch spine. As bandwidth rises and GPU trays densify, those cable runs become too short, too lossy and too bulky to route. A rigid, impedance‑controlled backplane minimizes insertion loss and skew at high data rates, enabling the higher NVLink lane speeds and more GPUs per domain that Rubin targets. Second, thermals and serviceability. GB300 is fully liquid cooled, but cable bundles still impede airflow and human access. Midplane blind‑mates let you slide a compute tray or a switch tray straight in and out, improving mean time to repair, consistency of assembly torque and connector seating, and reducing accidental dislodgement during service. Third, manufacturing scale. A backplane collapses thousands of manual cable terminations into a handful of high‑layer PCB panels and standardized board‑to‑board connectors, raising factory throughput, reducing workmanship variability, and shrinking integration time on the line. Nvidia and partners explicitly highlight the backplane approach and cable‑free midplane as part of standardizing MGX racks for Rubin and beyond.

The direct performance and TCO implications of “no cables/wires” are material. Signal integrity improves because the number of connectors and twinax runs drops sharply; that reduces equalization and retimer power and shaves a few microseconds of fabric latency in large all‑to‑all collectives. Better SI headroom at NVLink 6 speeds facilitates larger single‑rack “world sizes” without resorting to optical or retimed links inside the rack, which is key to keeping inference tokens/sec high for mixture‑of‑experts and agentic workloads. On cost, independent teardown/estimates peg GB‑era NVLink scale‑up hardware at roughly $8k/GPU, about a low‑teens % of all‑in cluster TCO. A midplane consolidates that bill of materials and removes thousands of cable ends, which should translate to lower material cost, lower assembly labor and fewer infant‑mortality RMA events. The aggregate effect is lower $/M‑tokens at fixed latency SLOs, which is the metric hyperscalers and SaaS operators are now optimizing.

The system‑level consequences run beyond the NVLink fabric. Rubin’s MGX rack includes modular expansion bays for ConnectX‑9 SuperNICs at 800 Gb/s and optional CPX trays, and Nvidia is aligning the rack’s mechanicals and power with OCP. The move to a liquid‑cooled busbar and, in Kyber’s timeframe, 800 VDC distribution, reduces copper mass in the rack by hundreds of kilograms, improves power density and simplifies in‑rack wiring. The same MGX footprint that supports GB300 NVL72 will support Vera Rubin NVL144, NVL144 CPX and Rubin CPX add‑on racks, which means a customer can stage‑deploy Rubin without rearchitecting aisles or plinths. This is critical for commissioning velocity in gigawatt campuses and for translating capex into deployed tokens/sec within the fiscal year.

There are also architectural consequences for how clusters are built. A clean, deterministic scale‑up fabric in the rack makes it easier to separate roles across racks: in Rubin CPX, the context prefill can run on compute‑dense, NVLink‑light trays that talk to the rest of the plant over PCIe Gen6 to NICs, while decode runs on NVLink‑heavy Rubin trays with HBM and large expert counts. That reduces expensive HBM and NVLink content where it isn’t used, with Nvidia and third‑party analysis arguing this materially lowers idle memory and under‑utilized fabric during prefill‑heavy workloads like RAG and long‑video. The trade‑off is that customers must manage evolving prefill:decode ratios across SKUs and live workloads; Nvidia offers both “single‑rack NVL144 CPX” and “sidecar CPX racks” to give operators flexibility in how they balance that ratio. This disaggregation is a bet that inference, not just training, will drive the next leg of demand and that $/token will be the buying criterion, not $/FLOP.

Risk is not zero. Backplanes of this size push PCB fabrication limits, laminate availability and yield; connector reliability under thermal cycling must be proven at fleet scale; and a cableless design increases vendor lock‑in because the midplane pinout and NVLink topology are Nvidia‑specific. The system also bets on NVLink bandwidth doubling on copper in Rubin and higher still in Kyber; if SI margins or thermal envelopes miss, Nvidia may need to accelerate silicon photonics into the scale‑up path, not just scale‑out switches. The company is already migrating Quantum‑X InfiniBand and Spectrum‑X Ethernet to co‑packaged optics to cut transceiver power and flatten topologies; the physics that forced optics into scale‑out could eventually force optics into scale‑up if domain sizes and speeds keep rising. Finally, the backplane centralizes a single point of failure; while it improves day‑2 serviceability, a midplane fault is harder to field‑repair than a damaged cable harness.
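
The copper bet in the risk paragraph rests on the rule of thumb quoted earlier in this post, that every doubling of bandwidth on copper halves practical reach. A minimal sketch of that rule follows. It is only the post's heuristic, not a signal-integrity model, and the baseline reach is normalized to 1.0 because the post gives no absolute cable lengths.

```python
def relative_copper_reach(doublings: int, baseline_reach: float = 1.0) -> float:
    """Practical copper reach after `doublings` bandwidth doublings.

    Applies the post's rule of thumb (each doubling halves reach).
    `baseline_reach` is a normalized placeholder, not a measured length.
    """
    if doublings < 0:
        raise ValueError("doublings must be non-negative")
    return baseline_reach / (2 ** doublings)


# Labels are illustrative; only the NVLink 5 -> NVLink 6 doubling is cited in the post.
scenarios = [
    ("NVLink 5 (baseline)", 0),
    ("NVLink 6 (one doubling)", 1),
    ("one further doubling (hypothetical)", 2),
]
for label, n in scenarios:
    print(f"{label}: {relative_copper_reach(n):.2f}x baseline reach")
```

Under this heuristic, two more doublings leave a quarter of today's reach, which is why the post treats short, impedance-controlled backplane traces as the enabler and in-rack optics as the fallback.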
For investment, “no cables/wires” is not cosmetic; it is how Nvidia keeps Huang’s Law compounding when transistor‑level gains slow. The midplane enables higher NVLink speeds and larger world sizes without resorting to costly, lossy optics inside the rack; it lowers assembly cost and RMA rates; it shortens install times; and it increases density, all of which reduce $/token and expand the viable workload envelope at fixed latency. That strengthens Nvidia’s rack‑scale pricing power and increases multi‑component wallet share: GPUs, CPUs, NVLink switches, ConnectX SuperNICs, BlueField DPUs, Spectrum‑X or Quantum‑X fabrics and the MGX rack itself. It also shifts content to high‑layer PCBs, blind‑mate connectors, liquid cooling and 800 VDC power gear, benefiting the supply chain tiers tied to backplanes, manifolds, busbars and high‑voltage DC, and away from twinax and plug‑in optical transceivers in the near term. Strategically, the proprietary midplane raises switching costs; a customer moving to a competitor would need to rip and replace the rack interior, not just swap cards, which biases life‑of‑site refreshes toward Nvidia’s annual cadence. We should underwrite higher Nvidia content per rack and better conversion of announced capex into shipped revenue in 2026, sized against the tape‑out status and NVL144 CPX disclosure already on record.

Bottom line for the committee: Rubin’s “no cables/wires” claim is shorthand for a backplane‑first, blade‑style rack that turns the NVLink pod into a true appliance. The midplane is the enabling mechanism for NVLink 6 speeds, larger single‑rack world sizes and a disaggregated inference topology that targets $/token, not $/FLOP. It improves SI, latency, reliability, manufacturability and commissioning velocity, all of which directly lower service cost and accelerate revenue per watt per square meter. The risks are manageable engineering and supply‑chain execution items rather than conceptual ones. Given the official specs on NVL144 CPX and the platform’s 2026 timing, the cableless Rubin rack should be treated as a credible, near‑dated step‑function in both performance and deployability, and a durable moat‑widening move at the rack level rather than just the chip.
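
As a sanity check on the rack-level specs cited in this post, here is a rough sketch that splits the quoted 8 exaFLOPs NVFP4 between the 144 Rubin CPX GPUs (30 PFLOPs each) and everything else, and backs out the GB300 NVL72 figure implied by the 7.5x claim. It uses only numbers quoted above and assumes the 7.5x ratio refers to the same NVFP4 metric, which the post does not state. Variable names are illustrative.

```python
# Figures quoted in the post for the Vera Rubin NVL144 CPX rack.
RACK_TOTAL_EXAFLOPS = 8.0   # NVFP4, whole rack
CPX_GPU_COUNT = 144
CPX_PFLOPS_EACH = 30.0      # NVFP4 per Rubin CPX GPU
SPEEDUP_VS_GB300 = 7.5      # "roughly 7.5x" a GB300 NVL72 rack

PFLOPS_PER_EXAFLOP = 1000.0

# Compute contributed by the CPX GPUs alone.
cpx_total_exaflops = CPX_GPU_COUNT * CPX_PFLOPS_EACH / PFLOPS_PER_EXAFLOP

# What is left for the standard Rubin GPUs, if the rack total is a simple sum
# (an assumption; the rounded 8 EF headline may hide rounding).
rubin_remainder_exaflops = RACK_TOTAL_EXAFLOPS - cpx_total_exaflops

# GB300 NVL72 throughput implied by the 7.5x claim, assuming the same metric.
implied_gb300_exaflops = RACK_TOTAL_EXAFLOPS / SPEEDUP_VS_GB300

print(f"CPX share of rack:        {cpx_total_exaflops:.2f} EF")
print(f"Remainder for Rubin GPUs: {rubin_remainder_exaflops:.2f} EF")
print(f"Implied GB300 NVL72 rack: {implied_gb300_exaflops:.2f} EF")
```

On these inputs the CPX parts account for a bit more than half of the rack's headline compute, which fits the post's framing of CPX as the compute-dense prefill engine.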