
Every AI privacy claim you've ever read is a promise.

Today, Morpheus makes it a proof.

Scam Altman is building the world's largest proprietary dataset of how every professional, every business, and every competitive operation thinks and works. It's funded by your API fees, owned by his company, and fed back into the next model, available to anyone willing to fork over $20 a month.

Every prompt you've sent to ChatGPT is a gift to OpenAI. Your client strategy, your financial models, your proprietary workflows, and the prompts you spent months refining to encode how your business actually works are all stored, processed, and absorbed into a system that levels your competitive edge for everyone else in your market.

You built a moat. Then you handed the blueprint to the company selling shovels.

That's before anyone sues you.

When a hostile party files discovery and subpoenas your AI prompts, a judge can order OpenAI to comply. Now they have everything you ever typed. Or OpenAI gets breached before the matter ever reaches a judge, and opposing counsel gets it with no court order required.

Every prior solution to this problem failed for the same reason. ChatGPT's privacy policy. OpenRouter's no-logging promise. Anthropic's data handling commitments. All documents, written by lawyers to protect the company, not you, changeable with a board vote, a regulatory shift, or an acquisition. Trusting their word was the only option available.

Until today.

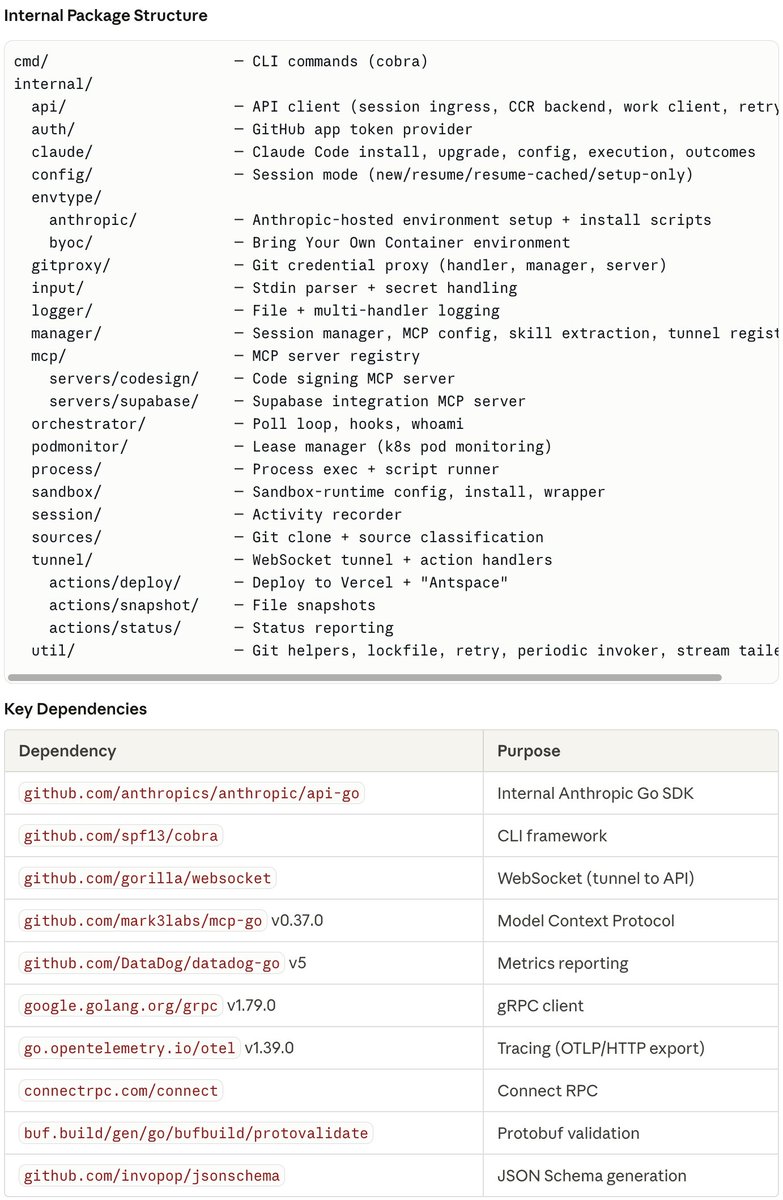

What we just shipped

When you open a session with a TEE-enabled provider on Morpheus, your node performs automatic cryptographic verification before you send a single word. It confirms three things.

Chat logging is compiled out of the image. Not switched off in a settings panel. Compiled out. The hardware measures the exact bytes at boot. Re-enable logging and the measurement changes. Your node refuses to connect.

The operator cannot enter the enclave. Cannot attach a debugger. Cannot image the memory. Intel TDX enforces this at the hardware level.

The operator provides electricity and bandwidth. That is the complete list of what they can do.

The server is cryptographically proven to be the actual TEE and not a relay, proxy, or impersonator. The TLS private key exists only inside encrypted hardware memory. It cannot be copied or served from anywhere else.
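The three checks above can be sketched as a single connect-or-refuse decision. This is a minimal illustration, not the actual Morpheus implementation: the `AttestationReport` fields, the `should_connect` function, and the choice of a SHA-256 fingerprint of the TLS public key as the bound value are all assumptions made for the example. A real verifier would first validate the quote's signature chain back to Intel, which is omitted here.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Iterable, Tuple


# Hypothetical, simplified view of an attestation report. A real Intel TDX
# quote is a signed binary structure; parsing it and verifying its signature
# are assumed to have happened before this point.
@dataclass(frozen=True)
class AttestationReport:
    measurement: bytes    # digest of the booted image, as measured by hardware
    debug_enabled: bool   # True if the enclave permits debugger access
    report_data: bytes    # caller-chosen bytes bound into the signed report


def tls_key_fingerprint(public_key_der: bytes) -> bytes:
    """SHA-256 fingerprint of the server's TLS public key (assumed binding)."""
    return hashlib.sha256(public_key_der).digest()


def should_connect(
    report: AttestationReport,
    trusted_measurements: Iterable[bytes],
    tls_public_key_der: bytes,
) -> Tuple[bool, str]:
    """Return (decision, reason) for the three checks described above."""
    # 1. The booted image must be one of the published no-logging builds.
    if not any(
        hmac.compare_digest(report.measurement, trusted)
        for trusted in trusted_measurements
    ):
        return False, "measurement not in manifest"

    # 2. The operator must not be able to attach a debugger.
    if report.debug_enabled:
        return False, "debug mode enabled"

    # 3. The TLS key presented on the wire must be the one bound into the
    #    report, so a relay or proxy cannot stand in for the enclave.
    expected = tls_key_fingerprint(tls_public_key_der)
    if not hmac.compare_digest(report.report_data[: len(expected)], expected):
        return False, "TLS key not bound to attestation"

    return True, "ok"
```

In this sketch a node would call `should_connect` before sending any prompt and drop the session on any `False` result. Returning a reason string alongside the decision is a design choice for the example, so a refusal can be logged on the client side without exposing anything about the prompt.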

Your competitor walks into the server room with direct hardware access, every cable and every drive physically in their hands. They get nothing. The CPU seals the enclave. A court order to the operator is a court order to someone who genuinely has nothing to hand over.

If you work in finance, your edge is your process and it stays yours. If you work in healthcare or defense, patient data and mission parameters stay inside hardware walls the operator cannot breach. If you've avoided AI entirely because no option was secure enough, that changes today.

The difference between a promise and a proof

OpenAI's privacy policy is a document. Morpheus TEE is a hardware constraint. Those are not the same category of thing.

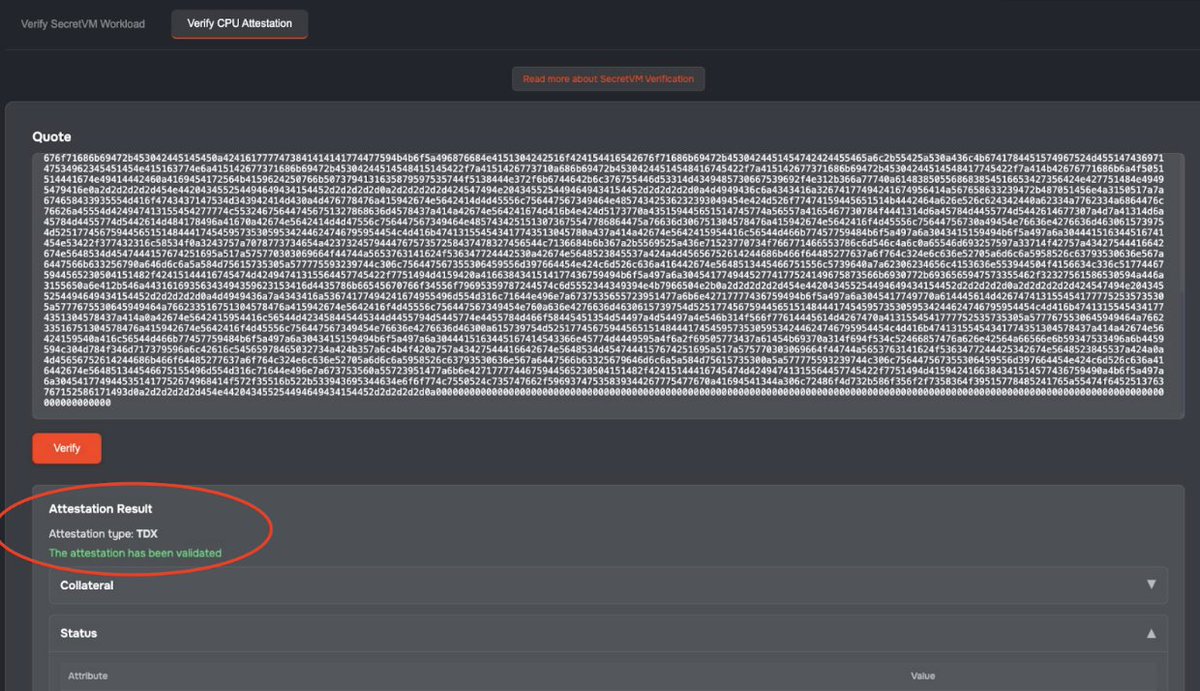

The attestation is public. The verification is permissionless. Fetch the cryptographic proof from any TEE-enabled provider and check it against the published manifest yourself. The math either holds or it doesn't. You don't have to trust us. You have to trust Intel's fabrication process.
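To see why a modified image cannot pass the manifest check, here is a small sketch of a hash-chain measurement. It is an illustration under stated assumptions: the `extend`, `measure`, and `matches_manifest` helpers and the component byte strings are hypothetical, and a real TDX measurement covers more state and uses its own layout. The point it shows is only that changing any measured byte changes the final digest.

```python
import hashlib
import hmac
from typing import Sequence

DIGEST_SIZE = hashlib.sha384().digest_size  # 48 bytes


def extend(register: bytes, component: bytes) -> bytes:
    """Fold one component into the register: SHA-384(register || digest)."""
    component_digest = hashlib.sha384(component).digest()
    return hashlib.sha384(register + component_digest).digest()


def measure(components: Sequence[bytes]) -> bytes:
    """Measure an ordered list of boot components, starting from zeros."""
    register = bytes(DIGEST_SIZE)
    for component in components:
        register = extend(register, component)
    return register


def matches_manifest(measured: bytes, published_hex: str) -> bool:
    """Constant-time comparison against a hex digest from a manifest."""
    return hmac.compare_digest(measured, bytes.fromhex(published_hex))


if __name__ == "__main__":
    # Made-up stand-ins for the real image contents.
    build_without_logging = [b"kernel", b"proxy-router: chat logging compiled out"]
    build_with_logging = [b"kernel", b"proxy-router: chat logging enabled"]

    published = measure(build_without_logging).hex()
    print(matches_manifest(measure(build_without_logging), published))
    print(matches_manifest(measure(build_with_logging), published))
```

The first comparison succeeds and the second fails, because the two builds differ in a measured component. Chaining the digests also makes the result depend on the order in which components are loaded.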

There's also a Secret attestation tool you can call to decompose the attestation and read the result.

Two ways to use Morpheus. They are not the same.

The API Gateway is the on-ramp: email signup, API key, centralized node. Faster to start. Still meaningfully better than sending your prompts to OpenAI. But it reintroduces a trust relationship with Morpheus rather than with the hardware.

The local C-Node is where the proof lives. No email, no KYC, wallet address only. Your node runs the verification directly against the provider's hardware. No company sits between you and the cryptographic proof. Not even us.

Finance, healthcare, defense, space: anyone whose competitive edge, legal exposure, or client confidentiality depends on what they type into an AI (in other words, every business owner). The C-Node is your answer.

What's next

Phase 1 seals the Morpheus proxy-router, and only the proxy-router. If a provider routes inference to an external API, that downstream hop is outside the TEE boundary. We're naming this limit because hype is how the industry got you into the current situation.

Phase 2 closes the gap. The proxy-router and the model run together inside the same TEE. NVIDIA Confidential Computing on Hopper and Blackwell GPUs encrypts GPU memory too. Your prompt enters the enclave encrypted, hits the model inside the enclave, and returns to you encrypted. At no point does it exist in cleartext outside hardware-protected memory.

The operator provides a server rack and an electric bill. Every computation, every token, and every intermediate result lives and dies inside walls that physics defines.

Not a privacy policy. Not a corporate promise. Physics.

app.mor.org — run the verification yourself.
