ChimeraDefi.eth

2.7K posts

@ChimeraDefi

DeFi dev | founder @ https://t.co/yg2s4CRWl7 | ex-@meta, @uwaterloo | Medium @chimera_defi | Email [email protected] | GH chimera-defi

The Resolv USR exploit wasn't a bug - it was a feature working exactly as designed. And that's the problem.
How USR minting works: you deposit USDC, then an off-chain service with a privileged key decides how much USR to mint for you. The contract checks the minimum but has no maximum. No cap. No ratio to collateral. Whatever the key holder says - gets minted. You could deposit $1 and mint billions.
This design was live since day one. It wasn't a code bug. The threat model was simply: "the key won't leak." It did.
Attacker got the key. Deposited $200K across two txs, minted 80M unbacked USR. Dumped on DEXes, walked away with ~$23M in ETH.
Single point of failure: one private key, no on-chain sanity checks. No max mint ratio, no multisig, no timelock. One compromised key = unlimited money printer.
The contract worked perfectly. That's the scariest part.
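
To make the missing check concrete, here is a minimal Python sketch of the logic described in the post. It is not Resolv's actual contract code: `MintRequest`, `mint_min_only`, `mint_with_cap`, and the `MAX_MINT_RATIO` value are all hypothetical names and numbers chosen for illustration. It only models the difference between enforcing a minimum and also enforcing a collateral-based maximum.

```python
from dataclasses import dataclass


@dataclass
class MintRequest:
    deposit_usdc: float   # collateral the user deposited
    requested_usr: float  # amount the privileged off-chain signer asks to mint
    min_usr_out: float    # minimum amount the depositor is willing to accept


def mint_min_only(req: MintRequest) -> float:
    """Model of the design described in the post: only a minimum is checked."""
    if req.requested_usr < req.min_usr_out:
        raise ValueError("mint amount below minimum")
    # No upper bound: whatever the signer requested is minted.
    return req.requested_usr


# Hypothetical cap: at most 1.01 USR per 1 USDC deposited (assumed value).
MAX_MINT_RATIO = 1.01


def mint_with_cap(req: MintRequest) -> float:
    """Same flow, plus an on-chain style sanity check tied to collateral."""
    if req.requested_usr < req.min_usr_out:
        raise ValueError("mint amount below minimum")
    if req.requested_usr > req.deposit_usdc * MAX_MINT_RATIO:
        raise ValueError("mint amount exceeds collateral-based cap")
    return req.requested_usr


if __name__ == "__main__":
    # Figures from the post: ~$200K deposited, 80M USR minted.
    attack = MintRequest(deposit_usdc=200_000, requested_usr=80_000_000, min_usr_out=1)
    print(mint_min_only(attack))  # the min-only version accepts the request
    try:
        mint_with_cap(attack)
    except ValueError as err:
        print("rejected:", err)
```

A cap like this would not stop a key compromise, but under these assumptions it bounds what a leaked key can mint to roughly the value of the deposit instead of an arbitrary amount.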

[EXPLOIT] @ResolvLabs @ResolvCore $USR seems to have been exploited for $50m.
Due to a large wstUSR/DOLA LP, $DOLA has been depegged too.
@_SEAL_Org @yieldsandmore etherscan.io/tx/0xfe37f25ef…

If you're building something cool on or for AI agents, you need to be in these groups. Any TG groups I am missing?!

I noticed something interesting: Claude Code auto-adds itself as a co-author on every git commit. Codex doesn’t. That’s why you see Claude everywhere on GitHub, but not Codex. I wonder why OpenAI is not doing that. Feels like an obvious branding strategy OpenAI is skipping.

Someone swapped 4668 $ucvxcrv, worth $766, for $53 through metamask swap interface. More interestingly, the uniswap pool was loaded a month ago with $97 USDC and virtually no ucvxcrv. Two people making tragic errors, coming together in a beautiful way. etherscan.io/tx/0x9eb33e28a…

I'm not the only one doing this.
- karpathy: best thought leader, best person to learn from imo. Nanochat is the best way to get into training LLMs; it's the simplest and most digestible source for building your first AI model.
- steipete: This guy's GitHub is a national treasure, and his writing is also very strong. Peekaboo, summarize.sh, openclaw, oracle, just talk to it, etc. are all unique and very useful.
- badlogicgames: Mario's Pi is a staple AI engine and possibly the best, simplest open source agentic loop to learn from. Despite what people say about his methods, I think he's going to set some new standards for open source contribution. Big respect.
- TheAhmadOsman: This man is the GPU king: giveaways and lots of dense educational content around self hosting and home inference. He's also tight with pretty much all the open weight labs and has them on for interviews regularly.
- sudoingX: This is an up and comer who will change the game. He's pushing the limits of what a single GPU can do.
- Ex0byt: I can confidently say this man will be fundamental in making local inference on massive models possible.
- alexinexxx: I genuinely feel motivated by her drive. She's a real hard worker learning about GPU kernel programming. Also good aesthetics.
- gospaceport: I would not have gotten into building my own hardware without this man's hard work. He's taught me so much about hardware and the economics of this. He also has the most impressive homelabs I've ever seen.
- alexocheema: The founder of Exolabs, pioneering Apple hardware inference. He's also very engaged in the community and a good guy all around. If you are interested in Mac minis and Mac Studios, this is your guy.
- nummanali: This guy is so prolific. He's made tons of CLI tools for managing LLM subscription budgets, using Claude Code with alternative models, etc.
- thdxr: The entire Opencode team is wonderful, but Dax specifically is a good writer. More anti-doomer content to soothe your anxieties.
- juliarturc: If you are interested in the science, Julia's channel is where it's at. Almost everything I've learned about LLM compression has been from her.
- Teknium: The Nous Research & Prime Intellect teams are both some of the most hard-working and principled people around. Tough fight in an industry so aggressive.
- victormustar: Head of Product for Hugging Face, enabling us all to publish our work.
- louszbd: Head of community at ZAI, makers of some of the top open-weight LLMs available right now. They supercharged the movement.
- SkylerMiao7: Making frontier intelligence fit on 10k USD of hardware, via MiniMax.
- crystalsssup: Building the best open-weight model on the market, and releasing their latest research before their next gen model.
Believe it or not, these people are carrying the entire industry and giving us a fighting chance.

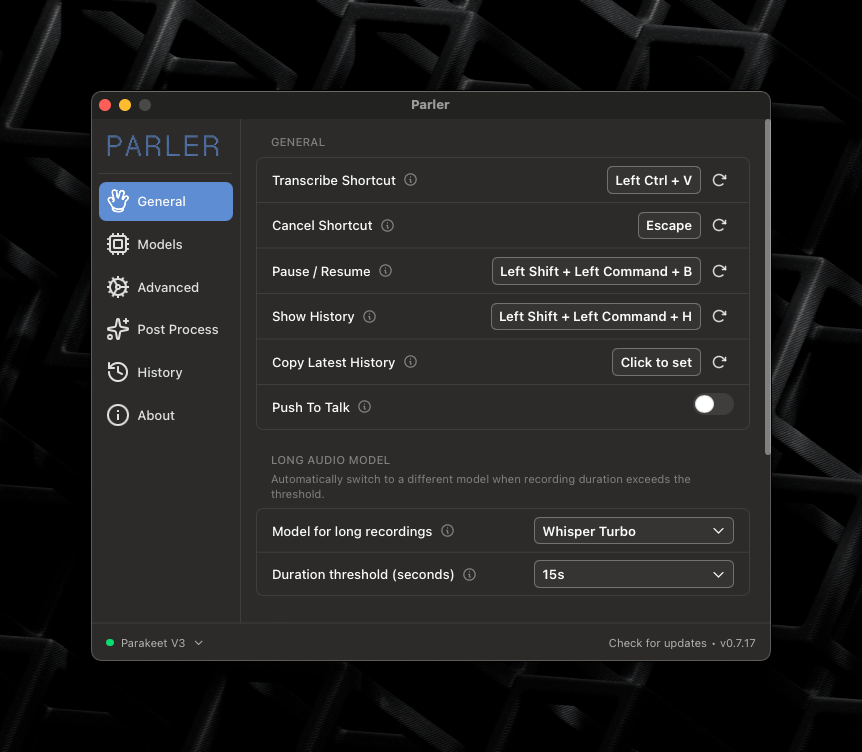

bro, literally the same software is free and open source (not $10 nor $14/m): handy.computer
how can people still pay for speech to text tools lmfao

Prototypes become products at ETHGlobal. These 4 standout teams are proof. 💫 Introducing ETHGlobal Cannes Spotlight: @1clawAI @AutoPayProtocol @hintonapp @0xPulsePlay They'll have booths at Pragma Cannes + the hackathon. Stop by, say hi, and see what they've shipped. ↓

Yep, Composer 2 started from an open-source base! We will do full pretraining in the future. Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training. This is why evals are very different. And yes, we are following the license through our inference partner terms.

If you make Forbes 30u30, I’m just going to assume your company is a fraud

was messing with the OpenAI base URL in Cursor and caught this:
accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast
so composer 2 is just Kimi K2.5 with RL
at least rename the model ID

1/ Here's the intuition. When you learn Fibonacci in Python, you can write it in Java tomorrow without years of Java training. You transfer the logic. The loop, the state, the termination condition. Syntax is just a costume. LLMs claim to do this. We wanted to see if they actually can.
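
As a small illustration of the three pieces named above (the loop, the state, the termination condition), here is one way the Python version might look. This is only a sketch for the intuition, not code from the benchmark; the function name `fib` and the 0-indexed convention are assumptions.

```python
def fib(n: int) -> int:
    """Return the n-th Fibonacci number, with fib(0) = 0 and fib(1) = 1."""
    if n < 0:
        raise ValueError("n must be non-negative")
    a, b = 0, 1          # state: the current pair of consecutive values
    for _ in range(n):   # loop; it terminates after exactly n steps
        a, b = b, a + b  # advance the pair by one position
    return a


if __name__ == "__main__":
    print([fib(i) for i in range(8)])
```

Porting this to another language changes the syntax of the assignment and the loop, but the same state, loop, and termination condition carry over unchanged.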

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵