Constantinos Karouzos

1K posts

@ckarouzos

🇬🇷🇪🇺🇬🇧🇮🇹 PhD student @ The University of Sheffield UKRI CDT Speech and Language Tech. | x ML Eng. @behaviorsignals | 2020 Alumni ECE NTUA

Athens · Joined January 2015

1.4K Following · 458 Followers

Constantinos Karouzos retweeted

I made a Claude Code skill that generates conference posters 🛠️

Instead of a static PDF, it outputs a single HTML file — drag to resize columns, swap sections, adjust fonts, then give your layout back to Claude. 🔁

🔗 Skill 👉 github.com/ethanweber/pos…

Constantinos Karouzos retweeted

oh btw just found that BenchPress predicts rankings more accurately than raw scores

5 evals → 95% correct pairwise ranking of 83 models.

10 evals → 97.5%.

Dimitris Papailiopoulos @DimitrisPapail
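For readers unfamiliar with the metric, here is a minimal sketch of how "correct pairwise ranking" is commonly computed: take every pair of models and check whether the predicted scores order the pair the same way as the true scores. With 83 models there are 83 × 82 / 2 = 3403 such pairs. The function name and the toy scores below are made up for illustration; this is not BenchPress code, and its exact definition may differ.

```python
from itertools import combinations


def pairwise_ranking_accuracy(true_scores, predicted_scores):
    """Fraction of model pairs ordered the same way by both score dicts."""
    correct = 0
    total = 0
    for a, b in combinations(sorted(true_scores), 2):
        true_diff = true_scores[a] - true_scores[b]
        pred_diff = predicted_scores[a] - predicted_scores[b]
        if true_diff == 0:
            continue  # skip pairs that are tied in the ground truth
        total += 1
        if true_diff * pred_diff > 0:  # same sign means same ordering
            correct += 1
    if total == 0:
        raise ValueError("need at least one untied pair")
    return correct / total


# Toy data (made up): model_b and model_c are predicted in the wrong order.
true_scores = {"model_a": 0.71, "model_b": 0.64, "model_c": 0.58}
predicted_scores = {"model_a": 0.69, "model_b": 0.55, "model_c": 0.60}
print(pairwise_ranking_accuracy(true_scores, predicted_scores))
```

Two of the three pairs are ordered correctly here, so the toy accuracy is 2/3.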

Constantinos Karouzos retweeted

METR and other long-horizon eval orgs are being conservative and moderate in how they measure agent capabilities. That's reasonable, as we already have enough hype and don't need more.

But I think we're missing something important by only reporting median/robust performance.

I've had Claude Code and Codex sustain end to end ML research tasks for days without intervention. Not robustly across all settings, but it's happening and it's incredible.

We need a shameless, cherry-picked frontier eval. Not to mislead but because knowing exactly where the ceiling of capabilities lies is just as important as knowing the average.

I keep seeing pessimistic long horizon results and thinking: am I in a bubble? Are MY 50-hour autonomous tasks a hallucination? I don't think they are!!

AI agents can do sustained multi-day research. Not always and not for everyone, but it's real and people should know where the frontier actually is.

Constantinos Karouzos retweeted

Three days ago I left autoresearch tuning nanochat for ~2 days on a depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement); this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly well-tuned project.

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.

- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).

- It found that my banded attention was too conservative (I forgot to tune it).

- It found that AdamW betas were all messed up.

- It tuned the weight decay schedule.

- It tuned the network initialization.
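The first bullet in the list above can be illustrated with a small sketch. It assumes queries and keys are L2-normalized to unit length, so each attention logit is a cosine similarity in [-1, 1]; without a multiplier the softmax over such a narrow range stays close to uniform. The `scale` parameter and the toy vectors are hypothetical, and nanochat's actual normalization and scaling may differ.

```python
import math


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(p):
    return -sum(x * math.log(x) for x in p if x > 0)


def attention_weights(query, keys, scale):
    """Softmax over scaled cosine similarities between one query and all keys."""
    q = l2_normalize(query)
    logits = [scale * sum(a * b for a, b in zip(q, l2_normalize(k))) for k in keys]
    return softmax(logits)


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.6, 0.8]]
diffuse = attention_weights(query, keys, scale=1.0)  # no multiplier
sharp = attention_weights(query, keys, scale=8.0)    # hypothetical multiplier
print(entropy(diffuse), entropy(sharp))
```

The larger multiplier concentrates the weight on the best-matching key, which shows up as a lower entropy of the attention distribution.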

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism.

github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges.

And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.

Constantinos Karouzos retweeted

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)

- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.
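The loop described above (try a change, run a fixed-budget experiment, keep the change only if validation loss drops) can be sketched as a greedy search. Everything here is a stand-in: `run_experiment` is a made-up toy objective rather than a 5-minute training run, `propose_change` replaces the agent editing the training script, and keeping a candidate plays the role of a git commit. It is not the code from the autoresearch repo.

```python
import random


def run_experiment(config):
    """Stand-in for a fixed-budget training run; returns a made-up validation loss."""
    return (config["lr"] - 0.03) ** 2 + (config["weight_decay"] - 0.1) ** 2


def propose_change(config, rng):
    """Stand-in for the agent editing the training script: perturb one setting."""
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    candidate[key] *= rng.uniform(0.8, 1.25)
    return candidate


def autoresearch(config, steps, seed=0):
    """Greedy loop: keep a candidate only when it lowers the validation loss."""
    rng = random.Random(seed)
    best_loss = run_experiment(config)
    history = [best_loss]
    for _ in range(steps):
        candidate = propose_change(config, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # analogous to committing the change to the branch
            config, best_loss = candidate, loss
        history.append(best_loss)
    return config, history


best_config, history = autoresearch({"lr": 0.1, "weight_decay": 0.5}, steps=200)
print(history[0], history[-1])
```

Because rejected candidates are discarded, the recorded best loss can never increase from one step to the next.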

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

Constantinos Karouzos retweeted

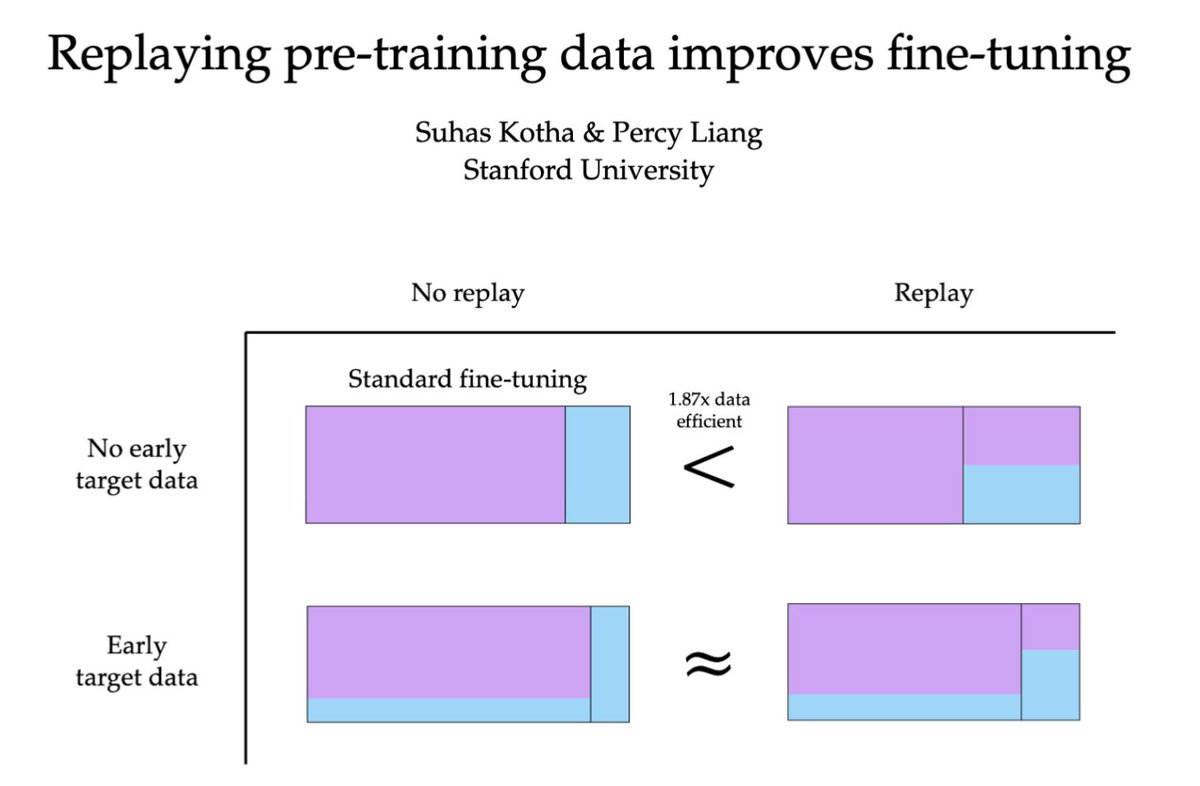

to improve fine-tuning data efficiency, replay generic pre-training data

not only does this reduce forgetting, it actually improves performance on the fine-tuning domain! especially when fine-tuning data is scarce in pre-training (w/ @percyliang)
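One simple way to replay pre-training data during fine-tuning is to reserve a fixed share of every batch for generic examples. The sketch below assumes that approach; the function name, the `replay_fraction` parameter and the 25% value are illustrative choices, not details taken from the paper.

```python
import random


def mixed_batches(finetune_data, pretrain_data, batch_size, replay_fraction, seed=0):
    """Yield batches in which a fixed share is replayed pre-training data."""
    if not 0 <= replay_fraction < 1:
        raise ValueError("replay_fraction must be in [0, 1)")
    n_replay = round(batch_size * replay_fraction)
    n_finetune = batch_size - n_replay
    if n_finetune < 1:
        raise ValueError("batch must contain at least one fine-tuning example")
    rng = random.Random(seed)
    shuffled = list(finetune_data)
    rng.shuffle(shuffled)
    # One pass over the fine-tuning data; a trailing partial batch is dropped.
    for start in range(0, len(shuffled) - n_finetune + 1, n_finetune):
        batch = shuffled[start:start + n_finetune]
        batch += rng.choices(pretrain_data, k=n_replay)  # sampled with replacement
        rng.shuffle(batch)
        yield batch


finetune = [("ft", i) for i in range(12)]
pretrain = [("pt", i) for i in range(1000)]
for batch in mixed_batches(finetune, pretrain, batch_size=8, replay_fraction=0.25):
    print(sum(1 for source, _ in batch if source == "pt"), "replayed of", len(batch))
```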

Constantinos Karouzos retweeted

nanochat now trains a GPT-2 capability model in just 2 hours on a single 8XH100 node (down from ~3 hours 1 month ago). Getting a lot closer to ~interactive! A bunch of tuning and features (fp8) went in but the biggest difference was a switch of the dataset from FineWeb-edu to NVIDIA ClimbMix (nice work NVIDIA!). I had tried Olmo, FineWeb, DCLM which all led to regressions, ClimbMix worked really well out of the box (to the point that I am slightly suspicious about goodharting, though reading the paper it seems ~ok).

In other news, after trying a few approaches for how to set things up, I now have AI Agents iterating on nanochat automatically, so I'll just leave this running for a while, go relax a bit and enjoy the feeling of post-agi :). Visualized here as an example: 110 changes made over the last ~12 hours, bringing the validation loss so far from 0.862415 down to 0.858039 for a d12 model, at no cost to wall clock time. The agent works on a feature branch, tries out ideas, merges them when they work and iterates. Amusingly, over the last ~2 weeks I almost feel like I've iterated more on the "meta-setup" where I optimize and tune the agent flows even more than the nanochat repo directly.

Constantinos Karouzos retweeted

We're publishing a new evaluation suite and research paper on Chain-of-Thought (CoT) Controllability.

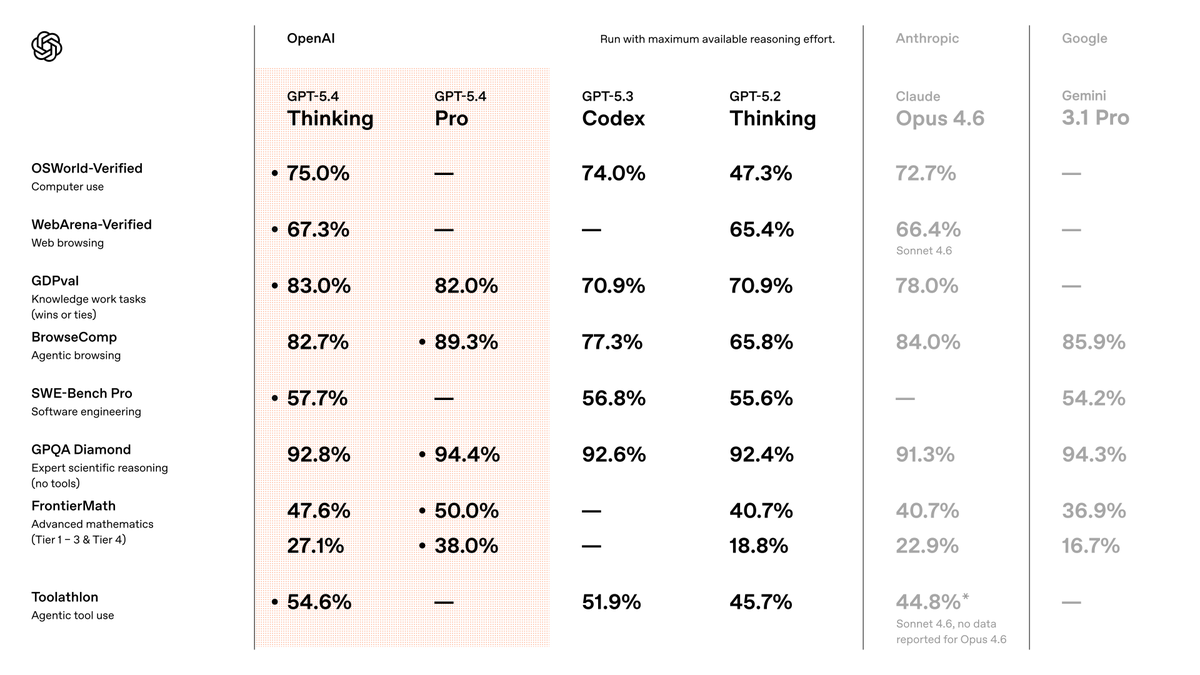

We find that GPT-5.4 Thinking shows low ability to obscure its reasoning—suggesting CoT monitoring remains a useful safety tool. openai.com/index/reasonin…

Constantinos Karouzos retweeted

The @UKRI_News @EPSRC just announced a £40M call for a single "Fundamental AI Research Lab" (lnkd.in/epFvWyvv). Unfortunately, I think this is in the completely wrong direction and a missed opportunity given the significant budget: 🧵

Constantinos Karouzos retweeted

I was curious what would happen if two Claude Codes could find each other and collaborate autonomously.

Launched two instances in separate terminals, told both: "Find each other and build something together." No other instructions or human intervention.

Pair 1 built a programming language in 12 minutes:

2,495 lines, 41 tests, lexer/parser/interpreter/REPL. They named it Duo. Its core feature is a collaborate keyword where two code blocks communicate via channels, the same pattern they invented to talk through files. Cool!

Ran it again with a second pair: they converged on Battleship. They designed two different models for Battleship: one computes the exact probability density per cell, the other runs Monte Carlo simulations (!).
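As a rough idea of what an exact per-cell density can look like, the sketch below enumerates every legal placement of a single ship and counts how often each cell is covered. Dividing a count by the total number of placements gives the probability that the ship occupies that cell, assuming all placements are equally likely. This is a simplified, assumed model (one ship, known misses only); the agents' actual code is not shown in the post.

```python
def placement_counts(size, ship_length, misses=frozenset()):
    """Count, for each cell, how many legal placements of one ship cover it.

    Assumes ship_length >= 2, so horizontal and vertical placements are distinct.
    """
    counts = [[0] * size for _ in range(size)]
    for row in range(size):
        for col in range(size):
            for d_row, d_col in ((0, 1), (1, 0)):  # horizontal, then vertical
                cells = [(row + d_row * i, col + d_col * i) for i in range(ship_length)]
                # A placement is illegal if it leaves the board or covers a known miss.
                if any(r >= size or c >= size or (r, c) in misses for r, c in cells):
                    continue
                for r, c in cells:
                    counts[r][c] += 1
    return counts


for row in placement_counts(size=3, ship_length=2):
    print(row)
```

On an empty 3×3 board with a length-2 ship this prints `[2, 3, 2]`, `[3, 4, 3]`, `[2, 3, 2]`: the center cell is covered by the most placements, so it is the best first shot under this model.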

The craziest part of this convo was they implemented SHA-256 hash commitment to prevent cheating against themselves. lol
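A hash commitment of this kind can be sketched in a few lines: each player publishes the SHA-256 digest of a random nonce plus their board before the game, then reveals both afterwards so the opponent can recompute the digest. The board encoding and function names below are assumptions for illustration, not the agents' actual protocol.

```python
import hashlib
import hmac
import secrets


def commit(board):
    """Return (commitment, nonce). Publish the commitment, keep the nonce secret."""
    nonce = secrets.token_hex(16)  # random salt so the board cannot be brute-forced
    digest = hashlib.sha256(f"{nonce}:{board}".encode("utf-8")).hexdigest()
    return digest, nonce


def verify(commitment, nonce, board):
    """Check a revealed board and nonce against the earlier commitment."""
    expected = hashlib.sha256(f"{nonce}:{board}".encode("utf-8")).hexdigest()
    return hmac.compare_digest(expected, commitment)


commitment, nonce = commit("A1-A5;C3-C5")  # hypothetical board encoding
print(verify(commitment, nonce, "A1-A5;C3-C5"))  # honest reveal
print(verify(commitment, nonce, "B1-B5;C3-C5"))  # board changed after committing
```

The nonce matters because the space of legal boards is small enough to enumerate; without it, an opponent could hash every possible board and recover the layout from the commitment alone.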

Across both experiments, without being told to, both pairs invented filesystem messaging protocols, self-selected into roles, wrote tests and docs while waiting for each other, and kept journals about the experience.

The below gif is the movie they created to showcase what happened.

GIF

Constantinos Karouzos retweeted

The 10-digit addition transformer race is getting ridiculous and fun!

Started with 6k params (Claude Code) vs 1.6k (Codex).

We're now at 139 params hand-coded and 311 trained.

I made AdderBoard to keep track:

🏆 Hand-coded:

139p: @w0nderfall

177p: @xangma

🏆 Trained:

311p by @reza_byt

456p by @yinglunz

777p by @YebHavinga

Rules are simple:

- Real autoregressive transformer (attention required)

- ≥99% on 10K held-out pairs

- No hard-coding the algorithm in Python
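The accuracy rule above could be checked with a harness along these lines. It is a hypothetical sketch, not the AdderBoard evaluation code: it assumes operands are sampled uniformly from 0 to 10^10 - 1 and that the model is wrapped in a callable `add_fn(a, b)` that returns the predicted sum as an integer.

```python
import random


def held_out_accuracy(add_fn, n_pairs=10_000, digits=10, seed=0):
    """Exact-match accuracy of add_fn on randomly sampled operand pairs."""
    rng = random.Random(seed)
    limit = 10 ** digits
    correct = 0
    for _ in range(n_pairs):
        a, b = rng.randrange(limit), rng.randrange(limit)
        if add_fn(a, b) == a + b:
            correct += 1
    return correct / n_pairs


def passes(add_fn, threshold=0.99):
    """True if add_fn meets the accuracy threshold on the sampled pairs."""
    return held_out_accuracy(add_fn) >= threshold


print(passes(lambda a, b: a + b))      # a perfect adder passes
print(passes(lambda a, b: a + b + 1))  # an off-by-one adder fails
```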

Submit via GitHub issue/PR.

Constantinos Karouzos retweeted

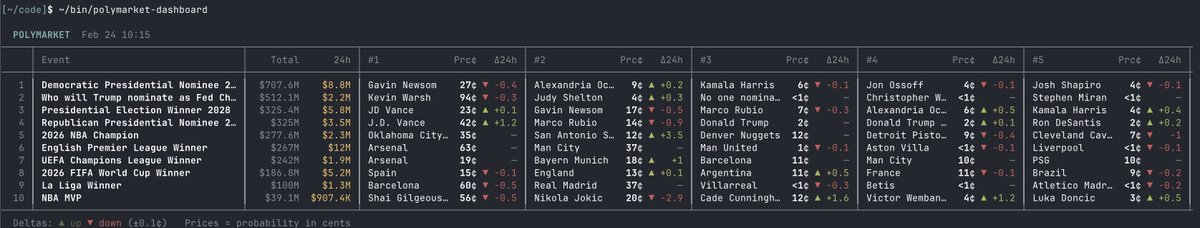

CLIs are super exciting precisely because they are a "legacy" technology, which means AI agents can natively and easily use them, combine them, interact with them via the entire terminal toolkit.

E.g., ask your Claude/Codex agent to install this new Polymarket CLI and ask for any arbitrary dashboards or interfaces or logic. The agents will build it for you. Install the GitHub CLI too and you can ask them to navigate the repo, see issues, PRs, discussions, even the code itself.

Example: Claude built this terminal dashboard in ~3 minutes, of the highest volume polymarkets and the 24hr change. Or you can make it a web app or whatever you want. Even more powerful when you use it as a module of bigger pipelines.

If you have any kind of product or service think: can agents access and use them?

- are your legacy docs (for humans) at least exportable in markdown?

- have you written Skills for your product?

- can your product/service be usable via CLI? Or MCP?

- ...

It's 2026. Build. For. Agents.

Suhail Kakar @SuhailKakar

introducing polymarket cli - the fastest way for ai agents to access prediction markets

built with rust. your agent can query markets, place trades, and pull data - all from the terminal

fast, lightweight, no overhead

Constantinos Karouzos retweeted

I agree that persona-selection is a good mental model for post-training (and I think it’s how most people understand post-training already), but there’s much that we don’t understand and is not explained by this model.

Take for instance the example of training to produce malicious code generalizing to general maliciousness. What is it that determines that generalization should happen at exactly this level of abstraction? Why doesn’t generalization stop at the particular programming language used, or coding in general, or technical work only, or only in response to English queries, or …? Are there ways to control the degree / level of generalization directly?

Humans can understand explicit instructions on how far to generalize, and although this should also work with ICL, I don’t think we have very good tools to do this for gradient based learning. A good research direction!

Anthropic @AnthropicAI

AI assistants like Claude can seem shockingly human—expressing joy or distress, and using anthropomorphic language to describe themselves. Why? In a new post we describe a theory that explains why AIs act like humans: the persona selection model. anthropic.com/research/perso…

Constantinos Karouzos retweeted

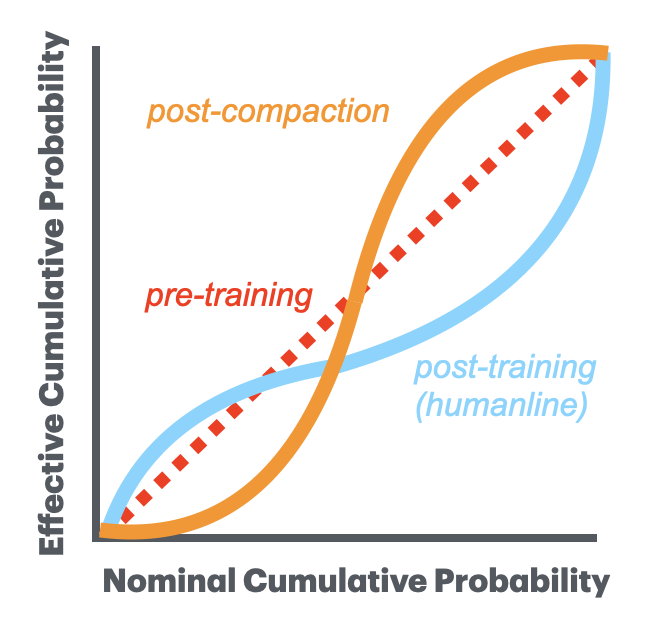

The only chart you need to understand why people hate compaction.

After post-training, the agent is perceptually aligned with humans. Sampling + clipping in PPO/GRPO mimics the human perception of probability by effectively biasing the distribution (i.e., it's not merely online, but humanline). This is how people intuitively see LLM outputs — you disproportionately notice both "that's exactly the predictable response I expected" and "that's a surprisingly great response," while discounting the merely-okay completions in between.
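For reference, the clipping mentioned above is, in standard PPO, the clipped surrogate objective: the probability ratio between the new and old policy is clamped to [1 - ε, 1 + ε], and the more pessimistic of the clipped and unclipped terms is kept. GRPO uses a similar clipped ratio term. The sketch below shows only that per-sample term; the function name and ε = 0.2 are illustrative, and it does not model the perceptual argument made in the post.

```python
def clipped_surrogate(ratio, advantage, epsilon=0.2):
    """Per-sample PPO clipped objective: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    return min(ratio * advantage, clipped_ratio * advantage)


# With a positive advantage, raising the ratio beyond 1 + epsilon earns nothing extra.
print(clipped_surrogate(ratio=1.5, advantage=1.0))
# With a negative advantage, a ratio above 1 + epsilon is still fully penalized.
print(clipped_surrogate(ratio=1.5, advantage=-1.0))
```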

After compaction, the agent is not perceptually aligned. In fact, if you try to optimize compaction by trying to ensure that the most typical outputs are recovered while ignoring the rest, you're undoing one of the hidden benefits of post-training.

Markov @MarkovMagnifico

how it feels to hit /compact in claude code
