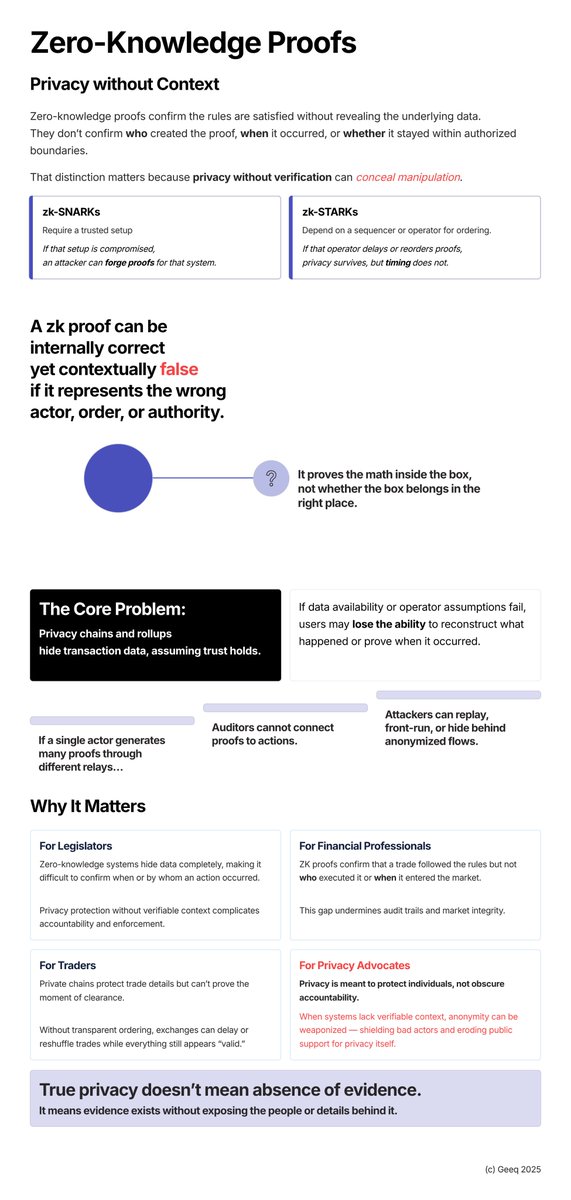

I returned to LinkedIn recently. My feed is 🧨 exploding with posts about security in agentic AI models. What strikes me is how familiar this all feels. The pattern emerging for chained agentic AI models is almost identical to what we kept running into when working on blockchain security.

In blockchain, a recurring root cause of failure has been the assumption that any accepted transaction represents valid intent, even when common sense says otherwise:

- tokens pushed into wallets without consent 😢
- contracts carrying out actions users never meaningfully approved 🫨
- chains exporting unverified events because a message looked well-formed 🧐

Block validation by consensus is not the same as checking for an individual user's consent, transaction by transaction. As long as crypto skips that transaction-level verification, abstraction and composability create blind spots. Those blind spots shield network-majority incentives and allow validators to prioritize throughput even when the results harm individual account holders.

Smart contracts, including those on privacy chains, only check local conditions such as state and zk proofs. They were not designed to ask higher-level questions like “Did the recipient agree?” or “Did the counterparty approve?”

Geeq's L0 answers those questions. All criteria must be satisfied before an action is taken. That is the zero-trust approach: prove it first, then move.

👉 The same problems are showing up in agentic AI. Once a malicious agent poisons context, an entire chain of actions can follow. 😱 The brakes weren’t built in early enough. Now the AI world is re-evaluating its execution assumptions, yet crypto has been slow to do the same. Both need to change.

Different technologies, same failure mode: assumptions that let errors propagate. I think they converge on the same architectural answer: add verification before action, not after.