Katja Grace 🔍

1.7K posts

@KatjaGrace

Thinking about whether AI will destroy the world at https://t.co/pMilDvd4ya. DM or email for media requests. Feedback: https://t.co/zGAm1i7SKH

I find it quite amusing that the AI 2027 authors publicly updated toward longer timelines a few months ago and have now moved back to shorter ones, closer to their original timelines. Shows the tumultuous times we're all in.

Yeah I had a similar reaction, all they said was: a) AI has massive potential upside (I don't disagree!) b) Technological advancement has been good in the past so why not assume it will be in future (an ok heuristic but not a fundamental law of reality; does not actually address the arguments)

Pause AI rhetoric is predicated on the notion that the AI companies are recklessly racing toward dangerous tech and that a government controlled pause button is therefore necessary, but this seems really hard to reconcile with the fact that the government is attempting to destroy an AI company because *the government* is racing toward plausibly dangerous AI uses (Sec. Hegseth has stated in official directives that he wants to deploy AI into critical systems regardless of whether it is aligned, for example) and *the company* is pushing back. The roles are totally reversed from the logic that Pause AI and frankly other AI safety advocates confidently assumed for years. It is *industry* that is in favor of alignment and at least somewhat measured deployment risks, and government whose actions seem much closer to reckless. I predicted this for years. I said, in particular, that pauses and bans and licensing regimes gave government a dangerously high degree of control over AI, and that the incentives of government are much more dangerous than those of private industry with competitive market incentives. I believe the events of the last month are good evidence in favor of my view. At this point if you are an AI safety advocate whose policy proposals do not wrestle seriously with the brutal political economic reality of the state and AI, I don’t take you seriously. It gives me no pleasure to have been right about this, by the way. The state has an incredibly strong structural incentive to centralize power using AI, and we are, all of us, not so empowered to stop it. I am quite concerned about this.

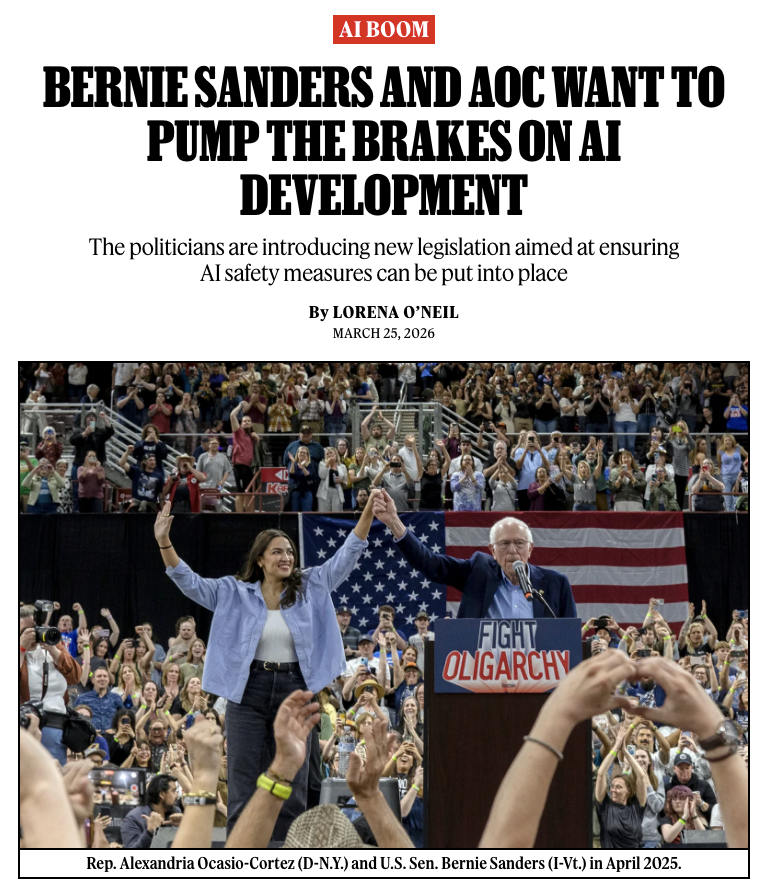

This is what democracy looks like! It is our right to demand safety. Tell the AI company CEOs that you want them to stop building dangerous AI.

On our way to OpenAI!

We have about 150 people signed up to walk on Anthropic, OpenAI and xAI in 2 days! This is shaping up to be the biggest US AI Safety protest to date. Come join us!