Łukasz Borchmann

232 posts

@LukaszBorchmann

AI researcher at @Snowflake, coffee addict, @MonsterEnergy connoisseur, passionate about everything but LLMs' prompting. #deeplearning

This paper aside, crazy how much work is being done in sparse attention. Now that we know that DSA works (and well), it's clear that 1M contexts are just the appetizer. HISA, though, looks like DSA + good ideas of NSA. In the limit, cuts indexer costs by B. Plug and play! @_xjdr

@jiaxinwen22 I'm a bit surprised there is no self-training baseline (labels generated 0-shot used to fine-tune the model directly). Even with low 0-shot accuracy, mini-batch training could average out noise if the model's annotations are systematically consistent across similar examples.
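A toy sketch of the self-training baseline described above, assuming a setup the post does not specify: two 1-D clusters, a stand-in "0-shot annotator" that flips the true label 30% of the time independently of the input, and a nearest-centroid student standing in for fine-tuning. All names here (`noisy_zero_shot`, `fit_centroids`, `run`) are hypothetical. It only illustrates how a student fit on noisy pseudo-labels can end up more accurate than the labels themselves when the noise averages out.

```python
import random

def make_data(n, rng):
    """Two 1-D clusters: class 0 around -1.0, class 1 around +1.0."""
    data = []
    for _ in range(n):
        y = rng.randint(0, 1)
        x = rng.gauss(1.0 if y == 1 else -1.0, 0.3)
        data.append((x, y))
    return data

def noisy_zero_shot(y, rng, flip_rate=0.3):
    """Assumed stand-in for a weak 0-shot annotator: random label flips."""
    return 1 - y if rng.random() < flip_rate else y

def fit_centroids(xs, labels):
    """'Fine-tune' the student: one centroid per pseudo-label."""
    sums, counts = [0.0, 0.0], [0, 0]
    for x, label in zip(xs, labels):
        sums[label] += x
        counts[label] += 1
    return [sums[k] / counts[k] for k in (0, 1)]

def predict(centroids, x):
    """Assign x to the nearest centroid."""
    return 0 if abs(x - centroids[0]) <= abs(x - centroids[1]) else 1

def run(seed=0, n=2000):
    rng = random.Random(seed)
    data = make_data(n, rng)
    xs = [x for x, _ in data]
    ys = [y for _, y in data]
    # Pseudo-labels come from the noisy annotator, never from ys directly.
    pseudo = [noisy_zero_shot(y, rng) for y in ys]
    centroids = fit_centroids(xs, pseudo)
    teacher_acc = sum(p == y for p, y in zip(pseudo, ys)) / n
    student_acc = sum(predict(centroids, x) == y for x, y in zip(xs, ys)) / n
    return teacher_acc, student_acc

if __name__ == "__main__":
    teacher_acc, student_acc = run()
    print(f"pseudo-label accuracy: {teacher_acc:.3f}")
    print(f"student accuracy:      {student_acc:.3f}")
```

The flips pull each centroid toward the middle but do not swap their order, so the decision boundary stays close to the true one. Noise that is correlated with the input would not necessarily wash out this way.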

Pretty fascinating that in England the moment a person opens their mouth, you can read their entire life story. Where they went to school, what their family is like, their economic background, everything. A friend of mine says he literally can’t date in London anymore bc the second a girl speaks, there’s zero mystery. He already knows her postcode, her school and her dad’s job. It’s over before it starts. In Poland, none of that exists. People speak the same regardless of where they grew up, where they went to school, what they do for a living. A homeless man and a university professor can sound nearly identical. Nobody can tell that I basically grew up in London. Nobody can tell I haven’t lived here in years. There are no accent clues and no class markers, no education giveaways, nothing. So getting to know someone here is a completely different experience. You actually have to be curious. You have to ask and you have to discover a person the old-fashioned way bc nothing about the way they speak is going to hand you the answers. Pretty cool

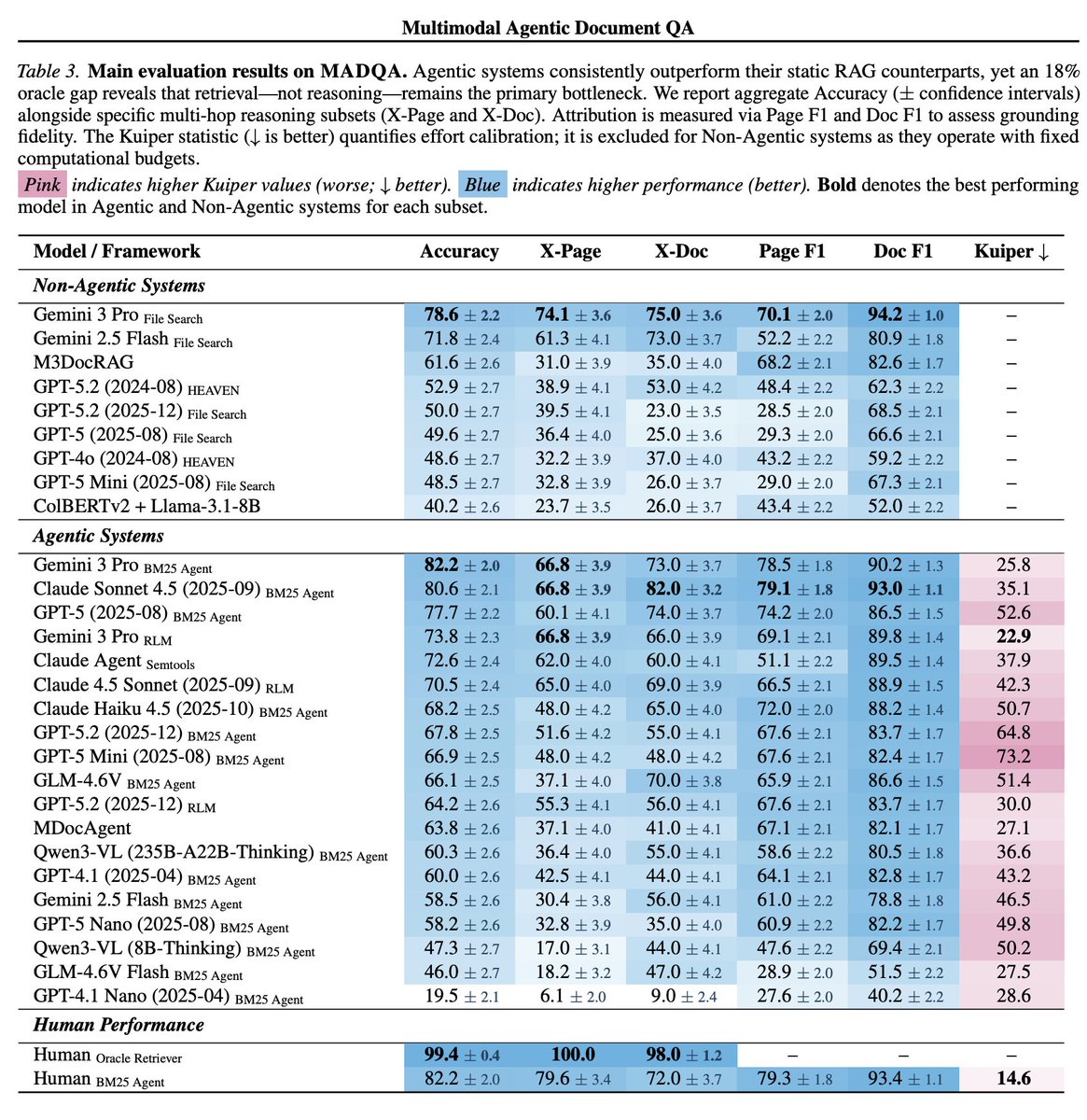

For agentic tasks, oracle-level performance is the maximum performance a system can achieve, assuming it is able to retrieve all relevant documents perfectly, every time. We're proud to show that Mixedbread Search approaches the oracle on multiple knowledge-intensive benchmarks.

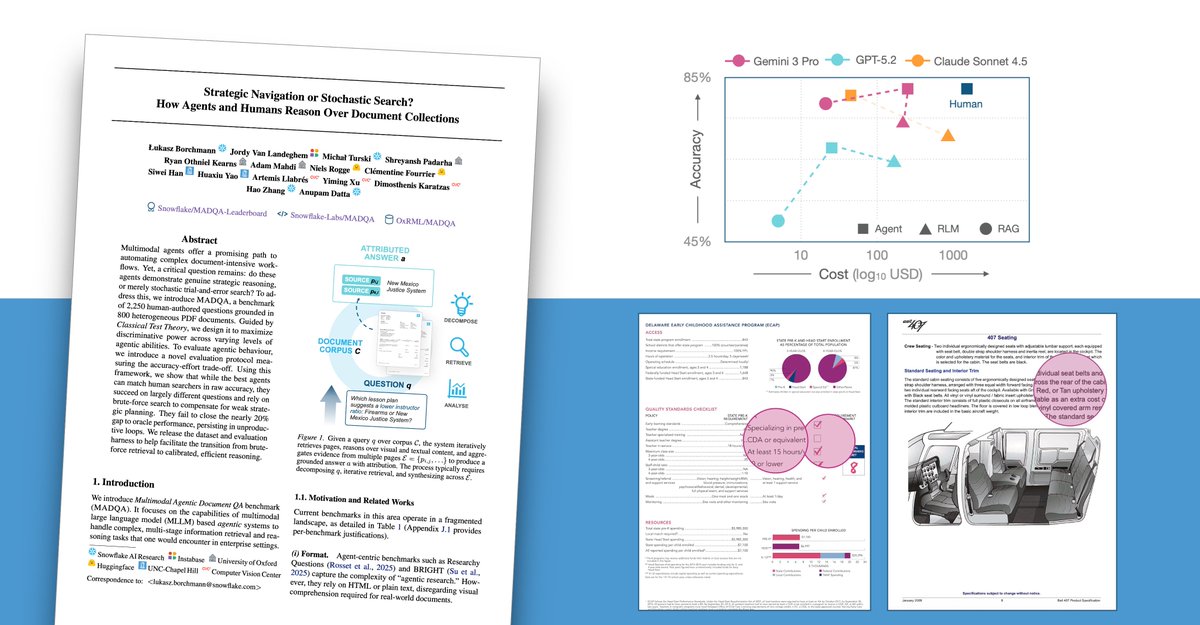

Strategic Navigation or Stochastic Search? New MADQA benchmark reveals that agents matching human accuracy on document QA rely on brute-force search to compensate for weak strategic planning. 2,250 questions over 800 PDFs expose a 20% gap to oracle performance.

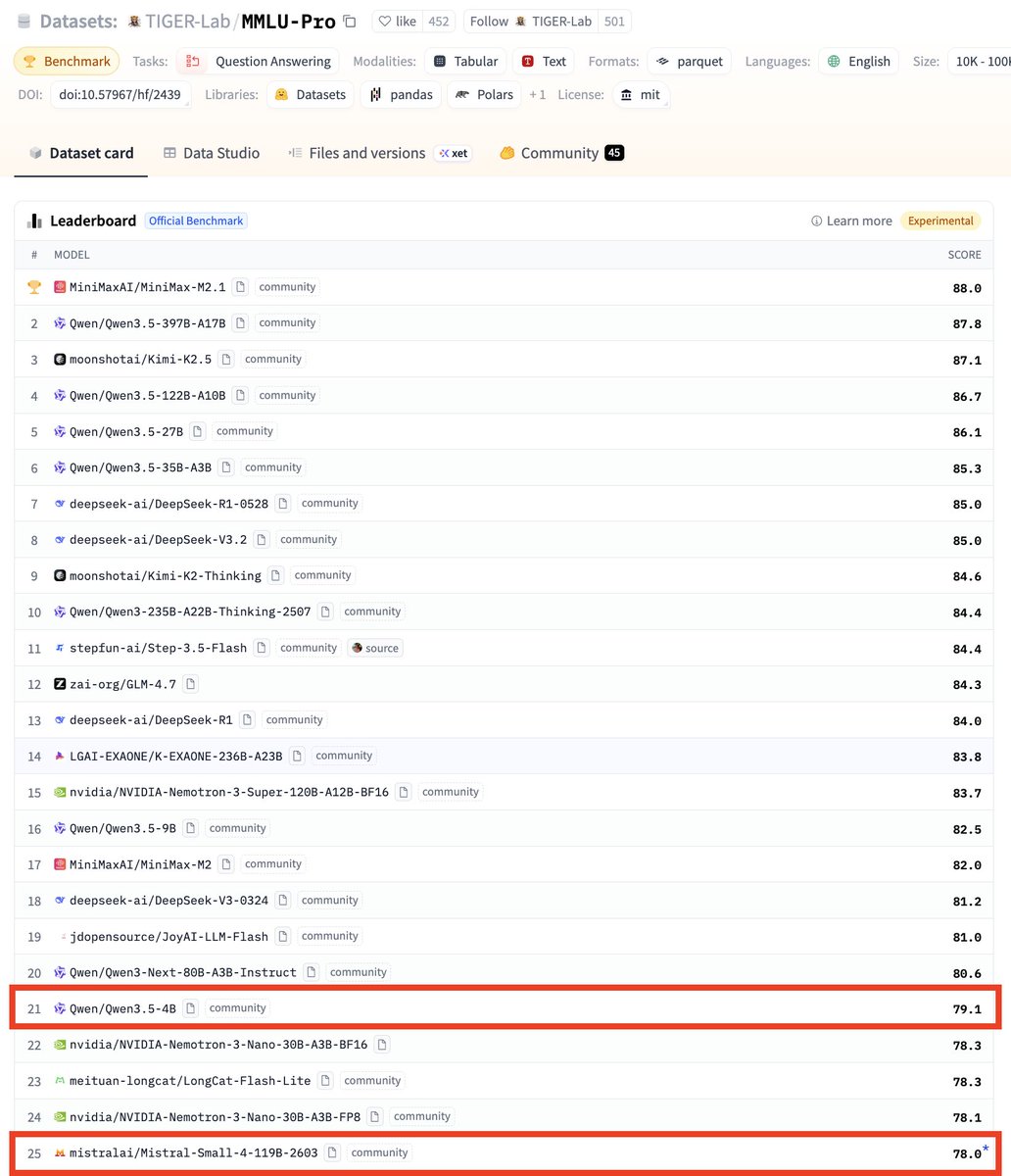

👀 small, 119B parameters? huggingface.co/mistralai/Mist…

Look mom I'm on a paper! Introducing an agentic RAG benchmark with 2,250 human-authored questions grounded in 800 heterogeneous PDF documents. Gemini-3 Pro with good-old BM25 as a tool takes the lead, but large gaps with humans remain. I set up baselines with the Claude Agents SDK + CLIs (semtools by @llama_index) 🤗