Kris D.

@M33pinator

Research Fellow @ https://t.co/7J7uozCcmA; @UAlbertaCS alum interested in AI, robotics, and penguins—dabbles w/ game dev, pixel art, speedrunning, and speedcubing.

Over the years I’ve noticed two schools of thought in ML:

1. prototype on synthetic tasks first (examples: ARC, computer games)
2. avoid synthetic tasks entirely

I started in camp (1), but slowly converged to (2). The planning and reasoning capabilities we care about are too entangled with the visual diversity of the real world.

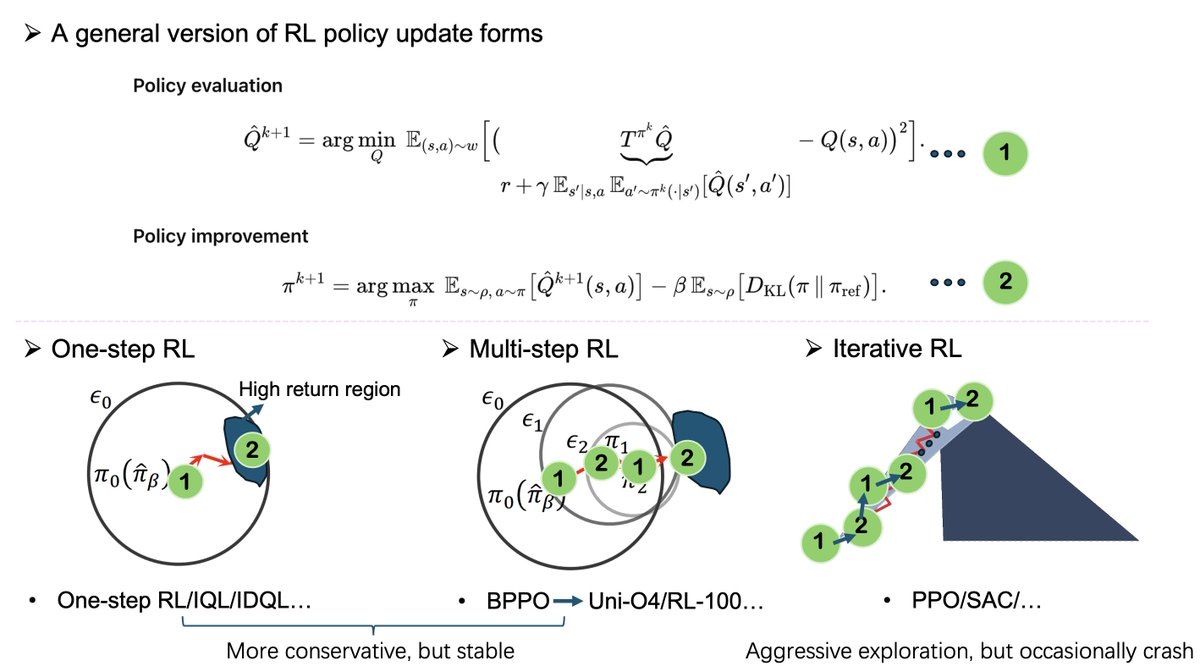

GPT-5 on Sudoku-Bench 🧩

Since releasing Sudoku-Bench in May 2025, when no LLM could solve a classic 9x9 puzzle, we've been evaluating the latest generation of models. GPT-5 now leads our leaderboard with 33% of puzzles solved (approximately 2x the previous leader) and is the first LLM we've tested to solve a 9x9 Sudoku variant. However, with 67% of the much harder puzzles remaining unsolved, Sudoku-Bench continues to present significant challenges for AI reasoning.

Modern Sudoku variants require models to first understand novel rulesets through meta-reasoning, then maintain global consistency across long reasoning chains. Our experiments with GRPO fine-tuning on Qwen2.5-7b and "Thought Cloning" (training on expert human reasoning from Cracking the Cryptic) show that current approaches still struggle with the spatial reasoning and creative "break-in" points that human solvers use naturally. We believe new approaches are required to solve our benchmark.

These results highlight persistent gaps between computational problem-solving and human-like reasoning, particularly in tasks requiring integrated mathematical logic, spatial awareness, and creative insight.

Read more about our update here: 🔗 Blogpost → pub.sakana.ai/sudoku-gpt5/

Reinforcement learning 🧠 on robots 🤖 can’t stay in simulation forever. My new post explores why direct, on-hardware learning matters and how we also need smarter mechanical design to enable it. kris.pengy.ca/designforlearn…

Finally, our paper "Safe Reinforcement Learning on the Constraint Manifold: Theory and Applications" has been accepted for publication as an evolved paper in T-RO! In this work, we analyze our ATACOM approach and perform real-world RL in our Robot Air Hockey System!

The @karpathy interview

- 0:00:00 – AGI is still a decade away
- 0:30:33 – LLM cognitive deficits
- 0:40:53 – RL is terrible
- 0:50:26 – How do humans learn?
- 1:07:13 – AGI will blend into 2% GDP growth
- 1:18:24 – ASI
- 1:33:38 – Evolution of intelligence & culture
- 1:43:43 – Why self driving took so long
- 1:57:08 – Future of education

Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!