Mingze Dong retweeted

We are excited to have @Mingze7316 presenting “Stack: In-context learning of single cell biology” tomorrow!

📍CoDa E160 | 2/3 2:30pm | Stanford + Zoom

Mingze Dong

27 posts

@Mingze7316

PhD student @YaleCBB; Integrated Science B.S. @PKU1898

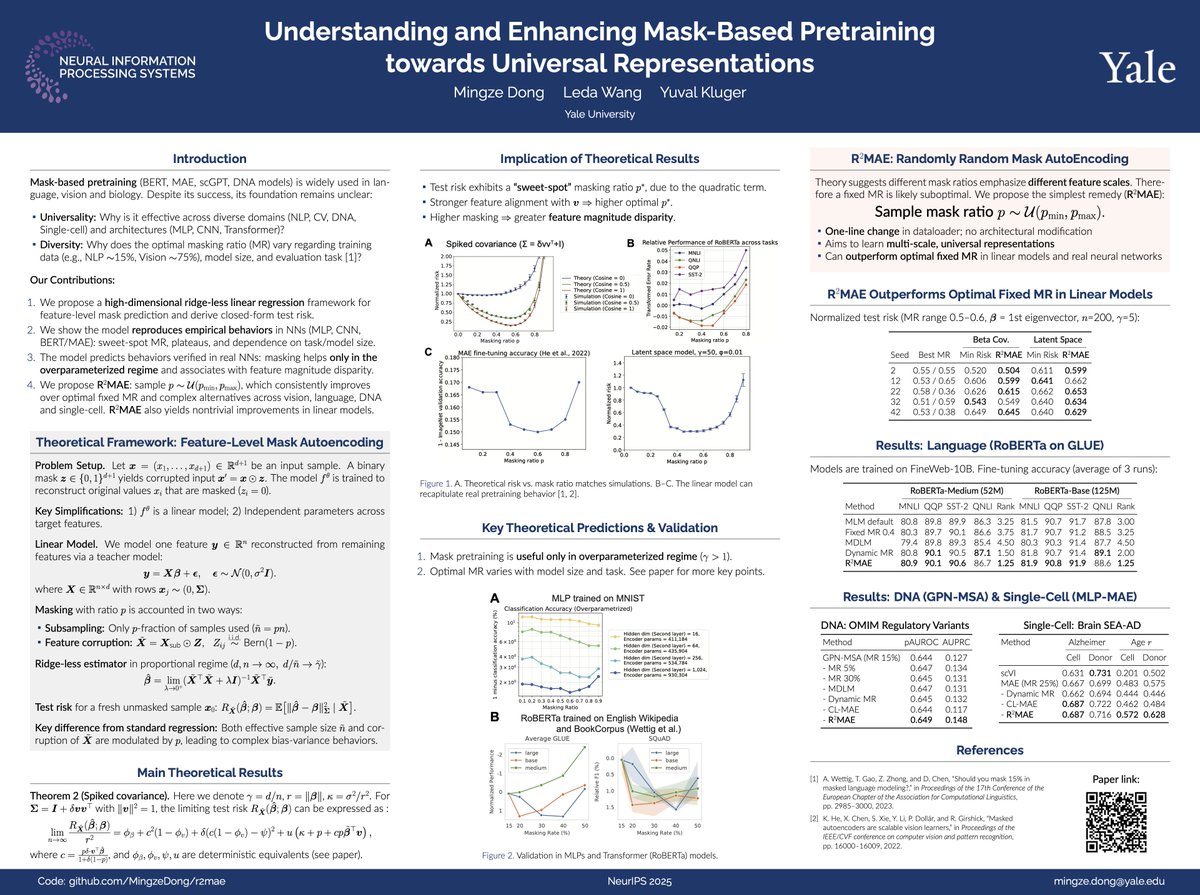

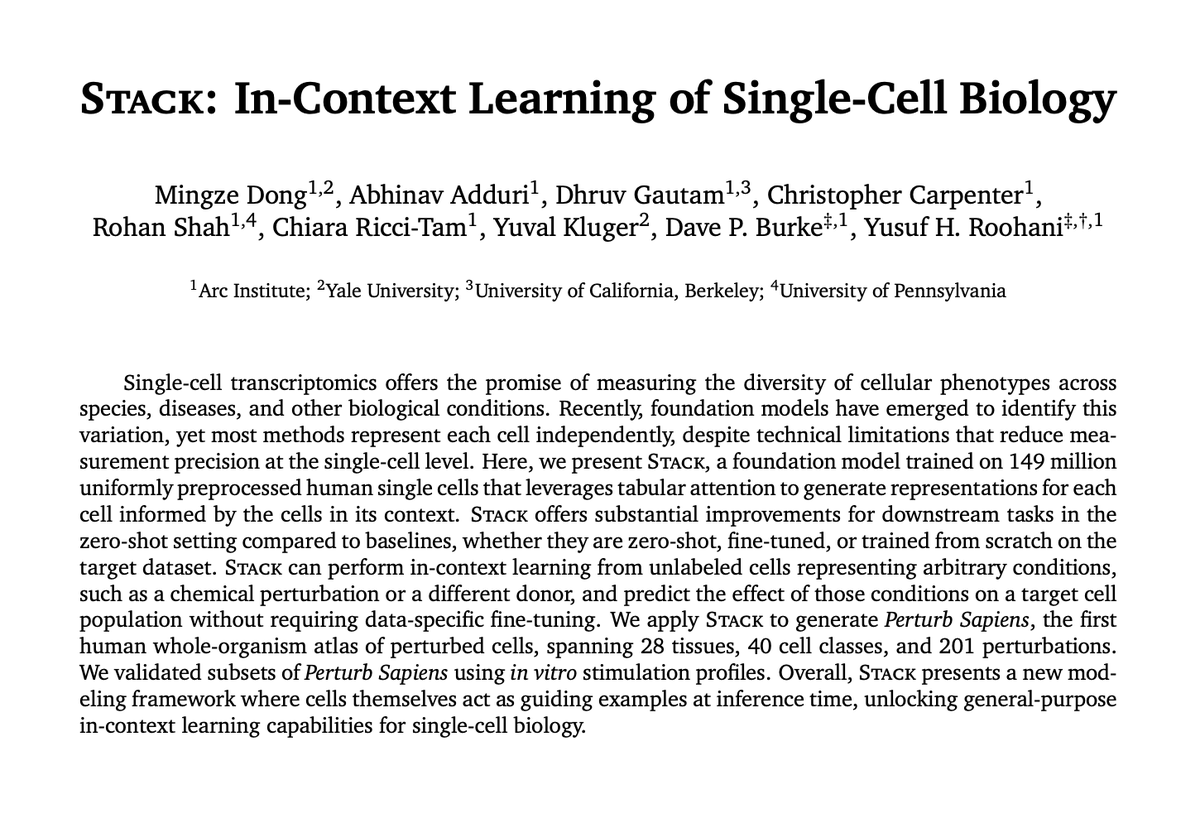

Predicting cell state in previously unseen conditions, such as disease or response to a drug, has typically required retraining for each new biological context. Today, Arc is releasing Stack, a foundation model that learns to simulate cell state under novel conditions directly at inference time, with no fine-tuning required.

Why define conditions, donors, or even *tasks* when we can just use cells themselves to guide model output? Presenting Stack: in-context learning using just cells!

Use cell context -> enhance its embedding.
Engineer cell context -> modify its state.

Led by the brilliant @Mingze7316