Robert Metcalfe

5.2K posts

@RDMetcalfe

Economist, professor @ColumbiaSIPA @nberpubs | Co-Editor @jpubecon | Chief Economist @CentreNetZero | Co-founder @tbehaviouralist & @signol_io | 🏴

Manhattan, NY · Joined November 2011

1.7K Following · 7.9K Followers

Pinned Tweet

Robert Metcalfe retweeted

My copy from the @UChicagoPress just arrived! At nearly 900 pages, if you don’t like it I bet it can stop any door from shutting abruptly!

Mine will rest next to a cherished treasure that brings me back to where the roots of this book started, in the early 1990s!

Robert Metcalfe retweeted

PhD decision season. My inbox is more flooded than ever. Prospective PhD students asking some version of the question: "Should I keep going? Is an Econ PhD still worth it in the age of AI?"

I get it. The uncertainty is real. And honestly, no one knows the answer. My response begins with the caveat that I have no real certainty around my thoughts; I merely have a hunch. And that intuition comes from combining my experiences in the academy with my recent field work alongside charities, governments, Walmart, and Anthropic itself.

My hunch is that AI will reveal expertise, not replace it, at least for the foreseeable future. Indeed, I wrote about this earlier with my justification.

As such, I do not view a PhD in economics as a credential. It's a forcing function for building that kind of deep, durable expertise. The expertise that AI amplifies rather than erodes.

So my advice? The uncertainty about AI may be the best reason yet to double down and go for an econ PhD. Why? Because the future belongs to people who know things deeply enough that AI becomes a multiplier, not a replacement.

Robert Metcalfe retweeted

📢 Call for Papers: Advances with Field Experiments (AFE) 2026

Join leading researchers using field experiments to answer big economic questions. Submit your abstract by May 29. ⬇️

bfi.uchicago.edu/events/event/a…

Robert Metcalfe retweeted

Over my career I have tried to use science in naturally-occurring settings to learn something of social import. Whether baseball card and collector shows, charitable organizations, schools, elections, government agencies, and more recently businesses, nothing has been off limits.

One of the first hurdles I always face when I talk to firms is that “field experiments are too expensive.” It is high time we begin to think like economists and retire that myth!

The real cost isn't the field experiment. It's the opportunity cost of not knowing something.

Ignorance is rarely free. Every failed new product, bad public policy, incentive backfire, or mispricing that a well-designed test could have caught before scaling is the true price tag.

The key that nearly everyone misses is that every organization rolls out new ideas all the time. New pricing schemes. New onboarding flows. New incentive structures. New web design, new choice architecture, new public policies. They go live, usually untested.

What you need to ask is simple: what is the marginal cost of embedding a field experiment into a rollout that you are already doing? Most of the time it is trivial, especially compared to the knowledge gains. You are already paying for the infrastructure. The experiment is what converts that action into knowledge.

In my new book, Experimental Economics: Theory and Practice (amazon.com/Experimental-E…), the economics of this argument become crystal clear, especially in the chapters on experiments with organizations and the overarching model of optimal design.

The question was never "can we afford to run this experiment?"

The real question: "can we afford not to?"

Robert Metcalfe retweeted

From field experiments to policy interventions at scale.

My latest commentary @ScienceMagazine - after reading @Econ_4_Everyone hard to think of anything else!

science.org/doi/10.1126/sc…

Robert Metcalfe retweeted

Some AI+econ opportunities coming up:

1. Schmidt Sciences grants up to $200k schmidtsciences.org/ai-at-work/

2. Microsoft AI economy grants $75k microsoft.com/en-us/research…

3. UChicago AI in social science conference bfi.uchicago.edu/events/event/2…

Robert Metcalfe retweeted

Hi Tom. That feels too narrow. This really has nothing to do with preserving rents. I am too old to care about that!

Part of my worry was that people would look at AI and decide that learning economics was unnecessary. Why invest years in mastering causal inference or market design when you can just ask a machine and I won't get paid for it anyway? As my Twitter handle suggests, learning economics was never just about producing economic analysis.

Our classes train critical thinking skills: what thinking on the margin means, how to reason about incentives, how to use demand identification before making causal claims, and how to spot what is actionable and what is not. I always say, "Economics is life and life is economics." Such thinking habits spill over into everything. How you evaluate a policy proposal. How you run a business. How you make decisions in your own life. How to vote.

If AI convinces a generation of youngsters that they can skip that training because the machine will handle it, we lose something that goes well beyond the economics profession.

Robert Metcalfe retweeted

When AI first arrived on the scene, I worried it would make economists, or even critical thinkers more broadly, less valuable. In my travels in the past 6 months to work with non-profits, for profits, and government agencies, I have observed how people are actually using AI. I have watched them fumble around with insights they clearly did not create themselves.

My fears are now assuaged. One observation is that AI can produce something that in some cases is very wrong and in others looks nearly right, but is not quite there. Even if in time AI improves to "nearly right" or "exactly right" every time, a second issue still arises: explaining the materials.

Explaining why an answer is almost correct but subtly off requires exactly the critical thinking skills that created the knowledge in the first place. Even explaining "exactly right" material takes critical thinking. I've watched smart people confidently present AI-generated material they clearly don't fully understand. The words sound right. But when someone pushes back just a little bit, the sand castle crumbles.

It is quite difficult to defend what you didn't build. This leads me to now make the optimistic case for human expertise. The value of deeply understanding something — of having built the knowledge yourself — hasn't diminished with AI. If anything, it's increased. The people who can tell the difference between "nearly right" and "right" are more valuable than ever. The people who can explain the subtle details about something that is exactly right are invaluable.

Creating knowledge still matters. Maybe now more than ever.

Robert Metcalfe retweeted

I'm hiring pre-docs interested in applied microeconomics, especially with AI. Check out the link and apply!

Deadline for the first round of review is tomorrow, Feb. 24. evavivalt.com/2026/02/pre-do…

David and Paulina are awesome. If you’re looking to do an economics PhD in the future, this is a fantastic pre-doc to help prepare you.

David Schönholzer@davidfromterra

Hi everyone, Paulina Oliva and I are hiring Pre-doctoral Research Fellows to work with us here at USC Economics in Los Angeles. If you know of suitable candidates, please encourage them to apply! dornsife.usc.edu/paulina-oliva/…

Robert Metcalfe retweeted

Hi everyone,

Paulina Oliva and I are hiring Pre-doctoral Research Fellows to work with us here at USC Economics in Los Angeles. If you know of suitable candidates, please encourage them to apply!

dornsife.usc.edu/paulina-oliva/…

Robert Metcalfe retweeted

***Big Announcement!***

I'm thrilled to let everyone know that the Chicago School in Experimental Economics is heading to Buenos Aires! This edition of CSEE will take place November 4-8, 2026 at the Universidad del CEMA.

This intensive one-week summer school is designed to deepen scholars' understanding of frontier experimental methods. Lessons will range from designing and conducting experiments to analyzing and interpreting data to writing up your findings. The curriculum draws from my forthcoming textbook, Experimental Economics: Theory and Practice (amazon.com/Experimental-E…), and participants will also have the opportunity to present and discuss their own research.

I'm honored to be joined by an outstanding group of lecturers:

Gwen-Jirō Clochard (The University of Osaka)

Jared Gars (University of Florida)

Luca Henkel (Erasmus University Rotterdam)

Justin Holz (University of Michigan)

Sally Sadoff (UC San Diego)

Julia Seither (University of Chicago)

Karen Ye (Queen's University)

Applications are now open to researchers across disciplines who work with experimental methods, want to apply them in the future, or teach experimental approaches. Priority goes to junior faculty, but doctoral and postdoctoral researchers are also welcome to apply. There is no program fee, and financial assistance for accommodations is available.

Application deadline: April 30, 2026

Please apply here: voices.uchicago.edu/jlist/the-chic…

Questions? Contact Melissa De Vries at melissade4@uchicago.edu

I look forward to seeing you in Buenos Aires!

Robert Metcalfe retweeted

Forthcoming in the AER: "A Welfare Analysis of Policies Impacting Climate Change" by Robert W. Hahn, Nathaniel Hendren, Robert D. Metcalfe, and Ben Sprung-Keyser. aeaweb.org/articles?id=10…

Robert Metcalfe retweeted

Today I presented some of our recent first-gen research to a group of educators. The finding that surprised the audience the most?

Family income and school quality together explain only about one-third of the gap. The remaining two-thirds point to something deeper: parental human capital channels that operate beyond income, likely through information, guidance, and the navigational knowledge that college-educated parents transmit to their children.

We also find that some teachers have a genuine comparative advantage in helping first-gen students reach excellence, which has real implications for how we think about teacher assignment. The bottom line for policymakers: if we wait until college access interventions to address first-gen disadvantage, we've already lost most of the game. These gaps emerge early, compound over time, and demand comprehensive support starting in elementary school.

The study is available for free download here: ideas.repec.org/p/feb/artefa/0…

Robert Metcalfe retweeted

Are you interested in interdisciplinary research at the nexus of science and policy? If so, apply today for the @AllianceProg Summer School in Paris June 2-5. Applications are due April 15. Apply here: blogs.cuit.columbia.edu/sdds/alliance-… @columbiaclimate @centerforsus @CSD_Columbia @Polytechnique

@alexolegimas A problem with the decoupling is that retractions might not fully correct beliefs. The clever REStud paper by @JlibDoesEcon (with Gonçalves & Willis) shows that this is the case in their setup, and it might be worse in the future with the increase in quantity.

uploads.strikinglycdn.com/files/5d3e0acc…

Robert Metcalfe retweeted

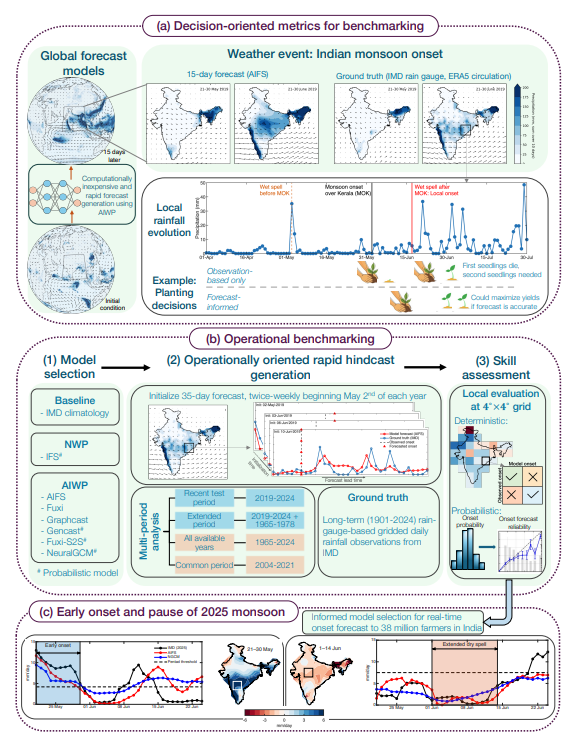

Can artificial intelligence weather prediction models help large vulnerable populations adapt to weather shocks in the face of climate variability and change? Research by @ColumbiaSIPA PhD alum @amirjina et al. looks at monsoon onset forecasts in India to answer this question. arxiv.org/pdf/2602.03767

Robert Metcalfe retweeted

"Weather and U.S. railways: risk, adaptation, and congestion." Research by @ColumbiaSIPA PhD alum @xinmingd and Andrew J. Wilson in @JPubEcon. sciencedirect.com/science/articl…
