Simon Ouellette

55 posts

@SimonOuellette6

I work with robots, lasers and neural networks.

Google search volume for "OpenClaw" has experienced a massive crash, plummeting right back down to pre-launch baseline levels almost as quickly as it spiked.

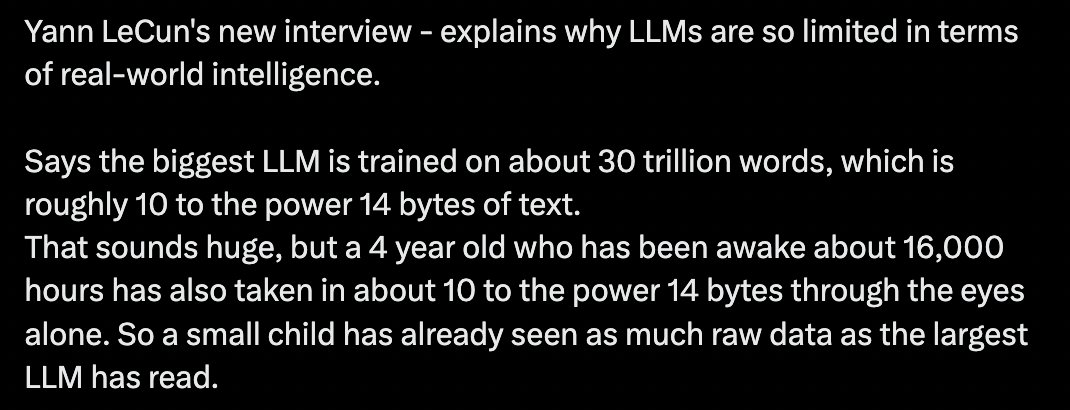

Ok, I think my experiment leaving AI working on stuff 24/7 ends here. It doesn't work. Code explodes in complexity, results are not that great, the AI can't get past hard walls (it is still completely unable to even *grasp* SupGen), and it is insanely expensive (spent ~1k over the last 2 days). The best results are on the JS compiler, mostly because it is familiar (compared to inets), but not worth losing control over the codebase.

I think the dream of having AIs working in the background and making real progress on things that matter (i.e., truly new things) isn't here yet. It is still a machine hard-stuck on its own training data, incapable of thinking outside the box. It is great for building things that were already built. But not new things.

Also, coding normally has the under-appreciated advantage that you're doing two things at the same time: building a codebase *and* learning it. AIs do only half of that. The other half is obviously impossible 🤔

the red lines represent trains

This paper tweaks a looped Transformer so a small model gets better at multi-step puzzle reasoning without getting bigger. It reports 53.8% correct on its 1st try on ARC-AGI1 and 16.0% on ARC-AGI2, both pattern puzzles.

The authors argue most prior gains came from recurrence, repeating the same computation, plus nonlinearity, using activations that bend numbers. A Universal Transformer shares 1 layer's weights across depth, and that layer mixes parts of the input, then runs many times to refine the internal representation.

The Universal Reasoning Model (URM) adds a short 1D convolution, a tiny sliding filter, inside the feed-forward gate, the nonlinear part, so nearby positions mix locally. URM also truncates backpropagation, the training step that sends an error signal backward to update weights, so only later loops get that signal. That keeps training steadier when many loops are used, because very long backward paths can turn the learning signal into noise.

Ablations show both tweaks matter, and stripping nonlinear parts drops accuracy a lot, which points to iterative nonlinear refinement as the main driver.

Paper Link: arxiv.org/abs/2512.14693
Paper Title: "Universal Reasoning Model"
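To make the looped, weight-shared idea concrete, here is a rough pure-Python sketch of one block applied several times, with a short 1D convolution feeding the gate. It is not the paper's implementation: the names `short_conv1d`, `conv_gated_ffn` and `looped_forward` are hypothetical, attention and learned projections are left out, and the exact placement of the convolution inside the gate is an assumption. Since there is no autograd here, truncated backpropagation is only indicated by a flag marking which loops would receive the error signal.

```python
import math

def short_conv1d(seq, kernel):
    """Slide a small filter over positions (zero padding, same length out)."""
    half = len(kernel) // 2
    dim = len(seq[0])
    out = []
    for t in range(len(seq)):
        acc = [0.0] * dim
        for j, w in enumerate(kernel):
            src = t + j - half
            if 0 <= src < len(seq):  # positions outside the sequence count as zero
                for d in range(dim):
                    acc[d] += w * seq[src][d]
        out.append(acc)
    return out

def silu(x):
    """Smooth nonlinearity commonly used in gated feed-forward layers."""
    return x / (1.0 + math.exp(-x))

def conv_gated_ffn(seq, kernel):
    """Toy gated feed-forward: the gate sees locally mixed neighbours.

    Assumption: gate = silu(conv(x)), output = x + gate * x (residual).
    """
    mixed = short_conv1d(seq, kernel)
    return [
        [x + silu(m) * x for x, m in zip(row, mixed_row)]
        for row, mixed_row in zip(seq, mixed)
    ]

def looped_forward(seq, kernel, n_loops, n_grad_loops):
    """Apply the SAME block n_loops times (shared weights across depth).

    Returns the refined sequence and, per loop, whether that loop would
    receive gradients if only the last n_grad_loops loops were trained.
    """
    grad_flags = []
    for i in range(n_loops):
        grad_flags.append(i >= n_loops - n_grad_loops)
        seq = conv_gated_ffn(seq, kernel)
    return seq, grad_flags

tokens = [[1.0], [2.0], [3.0]]
refined, flags = looped_forward(tokens, [0.25, 0.5, 0.25], n_loops=4, n_grad_loops=2)
```

In a real framework the early loops would run without gradient tracking and only the final loops would be differentiated; the flag list here just marks that boundary.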