Pinned Tweet

TensorZero

@TensorZero

We're building an automated AI engineer, powered by our open-source LLMOps platform that fuels ~1% of global LLM API spend.

NYC · Joined October 2023

2 Following · 1.3K Followers

Can an automated AI engineer autonomously debug and optimize an LLM pipeline in 5 minutes?

Last night, ours did: it cut errors roughly in half during its first live demo.

TensorZero Autopilot (our automated AI engineer) analyzed hundreds of historical LLM traces to identify failure modes, tuned the prompt, and verified improvements with an LLM judge — autonomously, in <5 minutes.

With more time, it can do much more: from model selection to fine-tuning to adaptive experimentation, TensorZero Autopilot dramatically improves the performance of LLM agents across diverse tasks.

Learn more below ↓

TensorZero Autopilot is powered by our open-source LLMOps platform that unifies an LLM gateway, observability, optimization, evaluation, and experimentation.

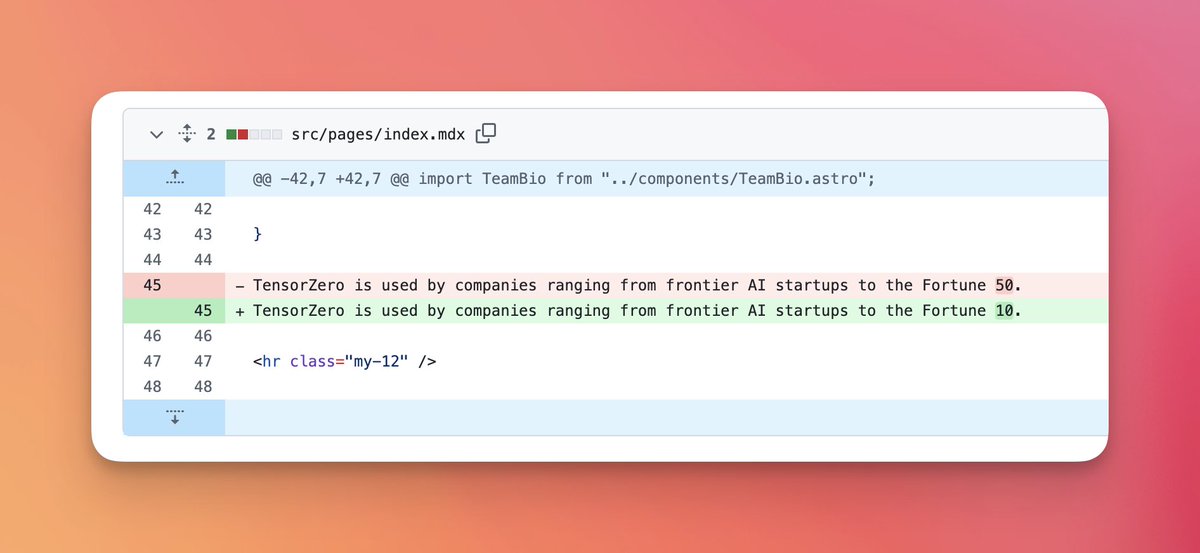

The open-source project is used by companies ranging from frontier AI startups to the Fortune 10 and powers ~1% of the global LLM API spend today.

github.com/tensorzero/ten…

Read more about this work on the TensorZero Blog:

tensorzero.com/blog/automated…

TensorZero reposted

crazy milestone for TensorZero

TensorZero @TensorZero

Antoine Toussaint was previously a staff software engineer at companies like Shopify and Illumio. Before becoming an engineer, he was a quant and a math professor at Stanford. He holds a PhD in Financial Engineering from Princeton. Welcome to the team, Antoine!

TensorZero reposted

TensorZero 2026.1.7 is out!

📌 This release introduces the preview of TensorZero Autopilot — our automated AI engineer (learn more on tensorzero.com).

—

Full Changelog

🆕 [Preview] TensorZero Autopilot — an automated AI engineer that analyzes LLM observability data, optimizes prompts and models, sets up evals, and runs A/B tests.

🆕 Support multi-turn reasoning for xAI (`reasoning_content` only).

& multiple under-the-hood and UI improvements!

TensorZero 2026.1.6 is out!

📌 This release brings further improvements around reasoning models, error handling, and usage tracking.

—

Full Changelog

🚨 [Breaking Change] Moving forward, TensorZero will use the OpenAI API's error format (`{"error": {"message": "Bad!"}}`) instead of TensorZero's error format (`{"error": "Bad!"}`) in the OpenAI-compatible endpoints.
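
A client that parses error bodies from the OpenAI-compatible endpoints may want to accept both shapes during the transition. Here is a minimal sketch; `extract_error_message` is a hypothetical helper, not part of any TensorZero SDK, and it only handles the two body shapes quoted in the breaking-change note.

```python
import json


def extract_error_message(body: str) -> str:
    """Return the error message from an error response body.

    Hypothetical helper: accepts both the OpenAI-style shape
    {"error": {"message": "..."}} and the older TensorZero shape
    {"error": "..."}.
    """
    payload = json.loads(body)
    error = payload.get("error")
    if isinstance(error, dict):
        # New OpenAI-compatible format: the message is nested.
        return str(error.get("message", ""))
    if isinstance(error, str):
        # Previous TensorZero format: the message is the value itself.
        return error
    raise ValueError("response body has no recognizable 'error' field")


print(extract_error_message('{"error": {"message": "Bad!"}}'))  # Bad!
print(extract_error_message('{"error": "Bad!"}'))  # Bad!
```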

⚠️ [Planned Deprecation] When using `unstable_error_json` with the OpenAI-compatible inference endpoint, use `tensorzero_error_json` instead of `error_json`. For now, TensorZero will emit both fields with identical data. The TensorZero inference endpoint is not affected.
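
During the deprecation window, client code can prefer the new field and fall back to the old one. The sketch below assumes both fields sit side by side on the same error object in the OpenAI-compatible error response; that layout and the helper name `get_error_json` are assumptions for illustration, not a documented TensorZero API.

```python
from typing import Any, Optional


def get_error_json(error_obj: dict[str, Any]) -> Optional[Any]:
    """Prefer `tensorzero_error_json`, falling back to `error_json`.

    Assumption: both keys live on the same error object; the exact
    response layout is not confirmed here.
    """
    if "tensorzero_error_json" in error_obj:
        return error_obj["tensorzero_error_json"]
    # Deprecated name, still emitted with identical data for now.
    return error_obj.get("error_json")
```

Checking the new name first means the code keeps working once the deprecated `error_json` field is dropped.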

🆕 Add native support for provider tools (e.g. web search) to the Anthropic and GCP Vertex AI Anthropic model providers. Previously, clients had to use `extra_body` to handle these tools.

🆕 Improve handling of reasoning content blocks when streaming with the OpenAI Responses API.

🆕 Handle inferences with missing `usage` fields gracefully in the OpenAI model provider.
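
On the client side, handling a missing `usage` object "gracefully" can be as simple as defaulting to `None`. This minimal sketch reads token counts from an OpenAI-style chat completion dict (`usage.prompt_tokens`, `usage.completion_tokens`); `read_usage` is a hypothetical helper, not TensorZero's implementation.

```python
from typing import Any, Optional


def read_usage(response: dict[str, Any]) -> dict[str, Optional[int]]:
    """Read token counts from an OpenAI-style chat completion dict.

    Hypothetical helper: a missing or null `usage` object, or a missing
    counter, yields None instead of raising KeyError.
    """
    usage = response.get("usage") or {}
    return {
        "input_tokens": usage.get("prompt_tokens"),
        "output_tokens": usage.get("completion_tokens"),
    }


print(read_usage({"choices": []}))  # {'input_tokens': None, 'output_tokens': None}
```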

🆕 Improve error handling across the UI.

& multiple under-the-hood and UI improvements!

TensorZero 2026.1.5 is out!

📌 This release brings many improvements around error handling, reasoning models, rate limiting performance, and more.

—

Full Changelog

🚨 [Breaking Change] TensorZero will normalize the reported `usage` from different model providers. Moving forward, `input_tokens` and `output_tokens` include all token variations (provider prompt caching, reasoning, etc.), just like OpenAI. Tokens cached by TensorZero remain excluded. You can still access the raw usage reported by providers with `include_raw_usage`.
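
To illustrate the kind of normalization described above, the sketch below folds Anthropic's prompt-caching counters into a single input total. Anthropic's Messages API reports `cache_creation_input_tokens` and `cache_read_input_tokens` separately from `input_tokens`, whereas OpenAI's prompt token count already includes cached tokens. `normalize_anthropic_usage` is a hypothetical helper; TensorZero's actual normalization may differ and covers other providers and token types too.

```python
from typing import Optional


def normalize_anthropic_usage(raw: dict[str, Optional[int]]) -> dict[str, int]:
    """Return OpenAI-style totals from Anthropic-style usage counters.

    Illustrative sketch: cache writes and cache reads are added to
    `input_tokens`; missing or null counters count as zero.
    """
    input_tokens = (
        (raw.get("input_tokens") or 0)
        + (raw.get("cache_creation_input_tokens") or 0)
        + (raw.get("cache_read_input_tokens") or 0)
    )
    return {"input_tokens": input_tokens, "output_tokens": raw.get("output_tokens") or 0}


raw = {
    "input_tokens": 10,
    "cache_creation_input_tokens": 5,
    "cache_read_input_tokens": 100,
    "output_tokens": 20,
}
print(normalize_anthropic_usage(raw))  # {'input_tokens': 115, 'output_tokens': 20}
```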

⚠️ [Planned Deprecations] Migrate `include_original_response` to `include_raw_response`. For advanced variant types, the former returns only the last model inference, whereas the latter returns every model inference with associated metadata.

⚠️ [Planned Deprecations] Migrate `allow_auto_detect_region = true` to `region = "sdk"` when configuring AWS model providers. The behavior is identical.
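
A config migration like this one can be scripted. The sketch below assumes an AWS provider's settings have already been parsed into a Python dict (for example, from TOML); `migrate_aws_region` is a hypothetical helper, and keeping an explicitly set `region` when both keys are present is an assumption about precedence.

```python
from typing import Any


def migrate_aws_region(provider: dict[str, Any]) -> dict[str, Any]:
    """Replace the deprecated `allow_auto_detect_region = true` flag.

    Hypothetical helper: `provider` is one AWS model provider table,
    already parsed into a dict. Per the release notes, the old flag and
    `region = "sdk"` behave identically.
    """
    migrated = dict(provider)  # shallow copy so the input is not mutated
    if migrated.get("allow_auto_detect_region") is True:
        del migrated["allow_auto_detect_region"]
        # Assumption: an explicitly configured region takes precedence.
        migrated.setdefault("region", "sdk")
    return migrated


old = {"model_id": "my-model", "allow_auto_detect_region": True}
print(migrate_aws_region(old))  # {'model_id': 'my-model', 'region': 'sdk'}
```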

⚠️ [Planned Deprecations] Provide the proper API base rather than the full endpoint when configuring custom Anthropic providers.

🔨 Fix a regression that triggered incorrect warnings about usage reporting for streaming inferences with Anthropic models.

🔨 Fix a bug in the TensorZero Python SDK that discarded some request fields in certain multi-turn inferences with tools.

🆕 Improve error handling across many areas: TensorZero UI, JSON deserialization, AWS providers, streaming inferences, timeouts, etc.

🆕 Support Valkey (Redis) for improving performance of rate limiting checks (recommended at 100+ QPS).

🆕 Support `reasoning_effort` for Gemini 3 models (mapped to `thinkingLevel`).

🆕 Improve handling of Anthropic reasoning models in TensorZero JSON functions. Moving forward, `json_mode = "strict"` will use the beta structured outputs feature; `json_mode = "on"` still uses the legacy assistant message prefill.
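
For context, "assistant message prefill" is a general Anthropic prompting technique: the request ends with a partial assistant turn (here, an opening brace), and the model continues from it. The response contains only the continuation, so the client re-attaches the prefix before parsing. This is a minimal sketch of the technique with hypothetical helper names, not TensorZero's implementation.

```python
import json
from typing import Any


def add_json_prefill(messages: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Append an assistant turn that starts the reply with "{".

    The model continues from the open brace, which nudges it to emit a
    JSON object.
    """
    return [*messages, {"role": "assistant", "content": "{"}]


def parse_prefilled_reply(continuation: str) -> Any:
    """The API returns only the continuation, so re-attach the prefix."""
    return json.loads("{" + continuation)


msgs = add_json_prefill([{"role": "user", "content": "Reply in JSON."}])
print(parse_prefilled_reply('"answer": 42}'))  # {'answer': 42}
```

Unlike a structured outputs feature, prefill only steers the model toward JSON; it does not enforce a schema, which is one reason a stricter mode can be preferable.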

🆕 Improve handling of reasoning content in the OpenRouter and xAI model providers.

🆕 Add `extra_headers` support for embedding models. (thanks jonaylor89!)

🆕 Support dynamic credentials for AWS Bedrock and AWS SageMaker model providers.

& multiple under-the-hood and UI improvements (thanks ndoherty-xyz)!