Matt Hauer

@theHauer

Head of Agent Development at Aaru. Associate professor of Sociology at Florida State University. Demographer.

The AI Scientist: Towards Fully Automated AI Research, Now Published in Nature

Nature: nature.com/articles/s4158…
Blog: sakana.ai/ai-scientist-n…

When we first introduced The AI Scientist, we shared an ambitious vision of an agent powered by foundation models capable of executing the entire machine learning research lifecycle. From inventing ideas and writing code to executing experiments and drafting the manuscript, the system demonstrated that end-to-end automation of the scientific process is possible. Soon after, we shared a historic update: the improved AI Scientist-v2 produced the first fully AI-generated paper to pass a rigorous human peer-review process.

Today, we are happy to announce that "The AI Scientist: Towards Fully Automated AI Research," our paper describing all of this work along with new insights, has been published in @Nature!

This Nature publication consolidates these milestones and details the underlying foundation model orchestration. It also introduces our Automated Reviewer, which matches human review judgments and actually exceeds standard inter-human agreement.

Crucially, by using this reviewer to grade papers generated by different foundation models, we discovered a clear scaling law of science: as the underlying foundation models improve, the quality of the generated scientific papers increases correspondingly. This implies that as compute costs decrease and model capabilities continue to increase exponentially, future versions of The AI Scientist will be substantially more capable.

Building upon our previous open-source releases (github.com/SakanaAI/AI-Sc…), this open-access Nature publication comprehensively details our system's architecture, outlines several new scaling results, and discusses the promise and challenges of AI-generated science.
This substantial milestone is the result of a close and fruitful collaboration between researchers at Sakana AI, the University of British Columbia (UBC) and the Vector Institute, and the University of Oxford. Congrats to the team! @_chris_lu_ @cong_ml @RobertTLange @_yutaroyamada @shengranhu @j_foerst @hardmaru @jeffclune

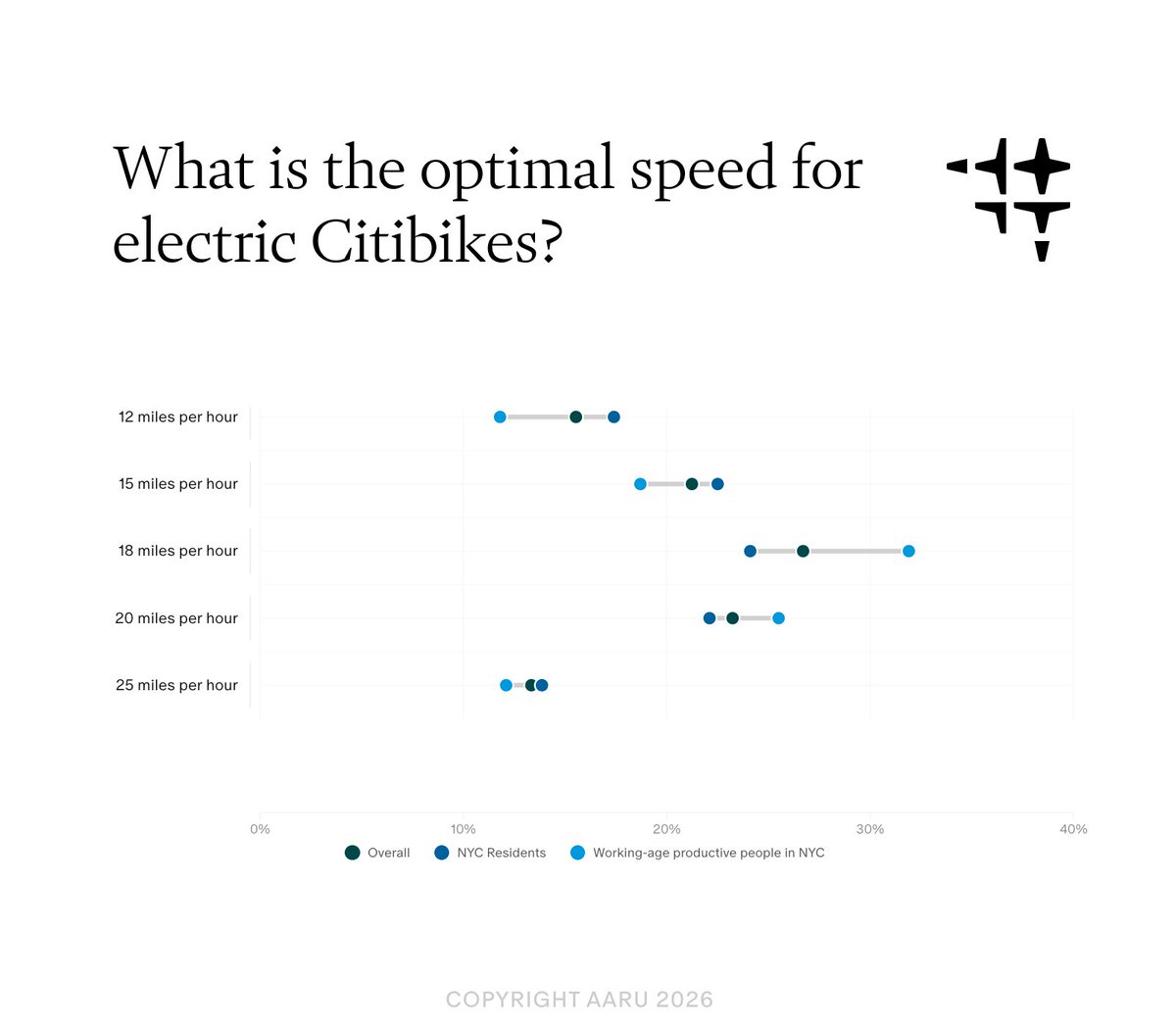

Voting for the next mayor who raises NYC's Citi Bike speed cap back to 18 mph. The current cap is actively harming NYC GDP.

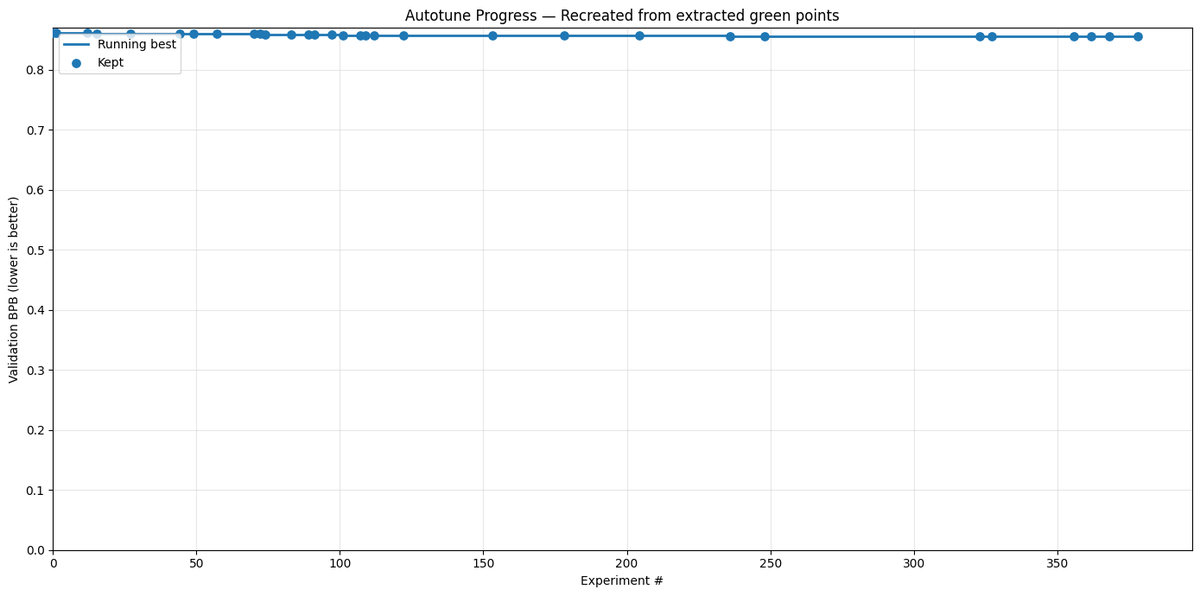

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically the nanochat LLM training core stripped down to a single-GPU, one-file version of ~630 lines of code. Then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (lower validation loss by the end of the run) for the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc. github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
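
The loop in that description amounts to greedy hill climbing: try a change, run one fixed-budget training job, and keep the change only if final validation loss improves. The Python below is a minimal sketch under stated assumptions, not code from the autoresearch repo. `research_loop`, `train_and_eval`, and `propose_change` are hypothetical names; a dict of hyperparameters stands in for the training script, a toy quadratic stands in for a 5-minute run, random multiplicative nudges stand in for the agent's edits, and "commits" are just entries in a list rather than real git operations.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]  # hypothetical stand-in for the training script's settings


def research_loop(
    baseline: Config,
    propose_change: Callable[[Config, random.Random], Config],
    train_and_eval: Callable[[Config], float],
    budget: int,
    seed: int = 0,
) -> Tuple[Config, float, List[Tuple[Config, float]]]:
    """Greedy keep-if-better loop over fixed-budget training runs.

    Each iteration asks for one candidate change, evaluates it with a single
    run, and keeps it only if validation loss strictly beats the best so far.
    Kept changes are appended to `history`, standing in for git commits.
    """
    rng = random.Random(seed)
    best = dict(baseline)
    best_loss = train_and_eval(best)
    history: List[Tuple[Config, float]] = [(dict(best), best_loss)]
    for _ in range(budget):
        candidate = propose_change(best, rng)
        loss = train_and_eval(candidate)
        if loss < best_loss:
            # Improvement: analogous to committing on the feature branch.
            best, best_loss = candidate, loss
            history.append((dict(best), best_loss))
        # Otherwise the candidate is dropped, like discarding uncommitted edits.
    return best, best_loss, history


# Toy stand-ins (hypothetical): a quadratic "validation loss" minimized at lr=0.02, wd=0.1.
def toy_train_and_eval(cfg: Config) -> float:
    return 1e4 * (cfg["lr"] - 0.02) ** 2 + 10 * (cfg["wd"] - 0.1) ** 2 + 3.0


def toy_propose_change(cfg: Config, rng: random.Random) -> Config:
    new = dict(cfg)                      # never mutate the current best in place
    key = rng.choice(sorted(new))        # pick one hyperparameter
    new[key] *= rng.uniform(0.7, 1.3)    # nudge it multiplicatively
    return new


if __name__ == "__main__":
    best, loss, history = research_loop(
        {"lr": 0.05, "wd": 0.01}, toy_propose_change, toy_train_and_eval, budget=200
    )
    print(f"{len(history) - 1} kept changes, final loss {loss:.4f}, config {best}")
```

The sketch only captures the accept/reject bookkeeping; the real work sits in replacing the two toy functions with an actual training run and an LLM-driven code edit. Because each decision rests on a single run, noise in validation loss can let a lucky change through, so averaging repeated runs is one possible refinement.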

Our new paper on reassessing the heritability of human lifespan is out in @ScienceMagazine! 🧬 For decades, the consensus has been that genetics explains just 20–25% of lifespan differences. We found that after accounting for extrinsic mortality, that number jumps to ~50%. A 🧵
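
The direction of that jump follows from heritability being a variance ratio, h² = V_G / (V_G + V_E): genetic variance over total variance. If deaths from external causes add non-genetic scatter to observed lifespans, stripping that component out shrinks the denominator and raises the genetic share. The Python below only illustrates that arithmetic. It is not the paper's estimation method, the variance components are invented to reproduce the 25% and ~50% figures from the post, and it assumes for simplicity that V_G itself is unchanged by the adjustment.

```python
def heritability(var_genetic: float, var_nongenetic: float) -> float:
    """Heritability as a variance share: V_G / (V_G + V_E)."""
    return var_genetic / (var_genetic + var_nongenetic)


# Hypothetical variance components (arbitrary units), NOT estimates from the paper.
V_G = 25.0            # genetic variance in lifespan
V_E_intrinsic = 25.0  # non-genetic variance from intrinsic causes
V_E_extrinsic = 50.0  # extra non-genetic variance attributed to extrinsic mortality

naive = heritability(V_G, V_E_intrinsic + V_E_extrinsic)  # 25 / 100
adjusted = heritability(V_G, V_E_intrinsic)               # 25 / 50
print(f"naive h2 = {naive:.2f}, after removing extrinsic variance = {adjusted:.2f}")
# prints: naive h2 = 0.25, after removing extrinsic variance = 0.50
```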

A study of 946,798 (!) people in 72 countries "found no evidence…that the global penetration of social media is associated with widespread psychological harm…Facebook adoption predicted aspects of well-being more positively for younger individuals" #smma doi.org/10.1098/rsos.2…

If we are able to automate hitting a dataset with a variety of causal inference techniques or econometric specifications, then we need to focus our attention on solving the problem of multiple hypothesis testing (MHT). Back in my underclassman years, when I was still a "dirty work" RA, I worked on a project for @Econ_4_Everyone on this very subject. It was an important issue then, and it is even more important now, given the automation that will soon be possible in applied, reduced-form econometrics.
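
To make the concern concrete: with m independent tests of true null hypotheses at α = 0.05, the chance of at least one false positive is 1 − (1 − α)^m, already about 64% at m = 20. One standard remedy is the Benjamini-Hochberg step-up procedure, which controls the false discovery rate instead of testing each hypothesis at a fixed 0.05. The Python below is a plain illustration of that textbook procedure, not the method from the project mentioned above; `familywise_error_rate` and `benjamini_hochberg` are illustrative names and the p-values are made up.

```python
from typing import List, Sequence


def familywise_error_rate(alpha: float, m: int) -> float:
    """P(at least one false positive) across m independent true-null tests."""
    return 1.0 - (1.0 - alpha) ** m


def benjamini_hochberg(pvalues: Sequence[float], q: float = 0.05) -> List[bool]:
    """Return a reject flag per hypothesis, controlling the FDR at level q.

    Step-up rule: sort p-values ascending, find the largest rank k with
    p_(k) <= (k / m) * q, and reject the k hypotheses with the smallest p-values.
    """
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by ascending p
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= rank / m * q:
            k_max = rank  # keep the LARGEST passing rank (step-up)
    reject = [False] * m
    for idx in order[:k_max]:
        reject[idx] = True
    return reject  # flags are in the caller's original order


if __name__ == "__main__":
    print(round(familywise_error_rate(0.05, 20), 3))  # 0.642
    pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
    # Naive p < 0.05 flags 5 of these; Benjamini-Hochberg at q = 0.05 keeps only the first 2.
    print(benjamini_hochberg(pvals, q=0.05))
```

Benjamini-Hochberg is one choice among several; Bonferroni or Holm control the family-wise error rate instead and are stricter, which matters when any single false positive is costly.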

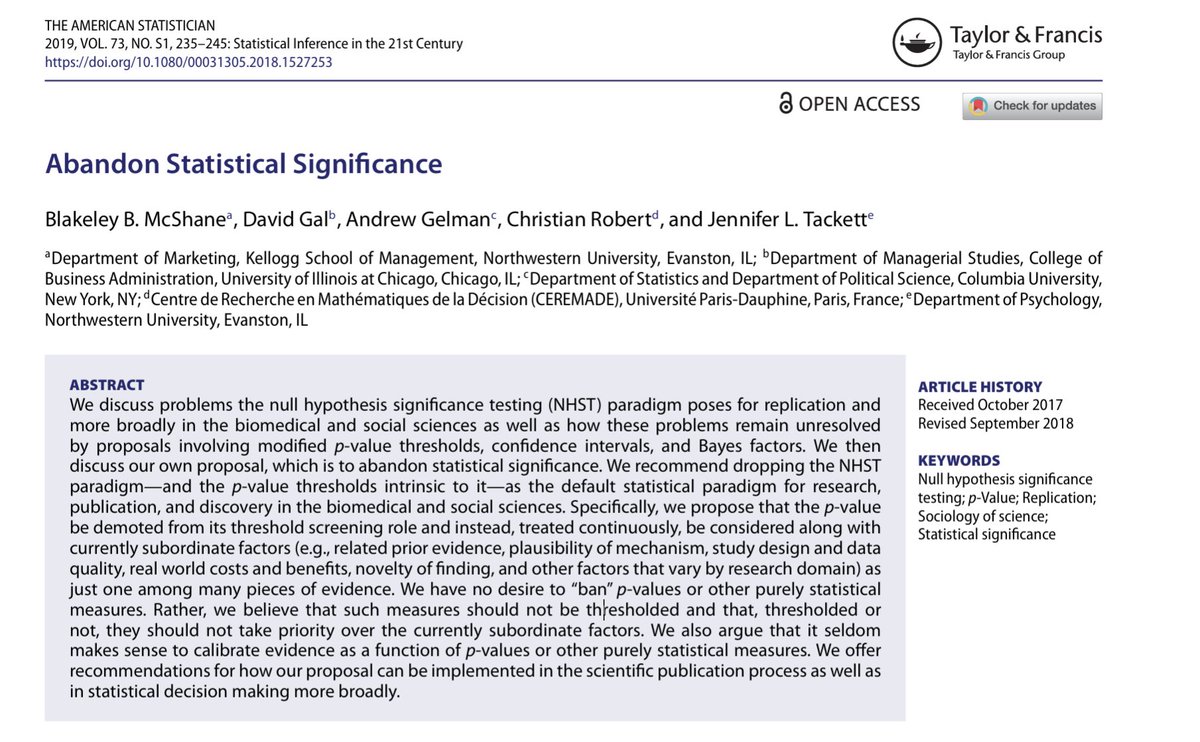

Gentle reminder, p<0.05 zealots