Sada Wane

1.6K posts

@wane_sada

Product builder • Thinker • Scaler

Louisville, KY · Joined October 2014

138 Following · 149 Followers

Sada Wane retweeted

Never quit as a founder. I’m begging you.

It’s 0 for longer than you’ll ever expect. No momentum. Soul-crushing doubts. Nobody seems to care. Even when it looks like it’s working, it’s not. You keep trying new things. You don’t lose hope.

Then it snaps to 100. You finally find the one thing that resonates. You wake up with more customers than you can handle. Everything is breaking. Momentum keeps building even when you’re not pushing. Something changed.

You didn’t get lucky, you just didn’t leave.

Sada Wane retweeted

Hardware has always been harder than it should be.

Tinkered changes that. Describe what you want, and it builds, simulates, and deploys.

Arduino, ESP32, Raspberry Pi Pico + more.

The barrier isn’t knowledge. It’s imagination.

Join the beta: tinkered.ai

#hardware #tech

Sada Wane retweeted

Who wants to build cool hardware? ⚡️

With Tinkered, you just describe what you want and get the wiring, code, and 3D simulation instantly.

No complexity. Just build.

Start here → tinkered.ai

Sada Wane retweeted

Most people don’t build hardware, not because they can’t, but because it’s too painful to start.

We’re here to fix that.

Describe what you want.

Tinkered builds, simulates, and deploys it.

Launching soon.

Join early: tinkered.ai

#hardware #ai #engineer

Sada Wane retweeted

🚨 CRITICAL: Active supply chain attack on axios -- one of npm's most depended-on packages.

The latest axios@1.14.1 now pulls in plain-crypto-js@4.2.1, a package that did not exist before today. This is a live compromise.

This is textbook supply chain installer malware. axios has 100M+ weekly downloads. Every npm install pulling the latest version is potentially compromised right now.

Socket AI analysis confirms this is malware. plain-crypto-js is an obfuscated dropper/loader that:

• Deobfuscates embedded payloads and operational strings at runtime

• Dynamically loads fs, os, and execSync to evade static analysis

• Executes decoded shell commands

• Stages and copies payload files into OS temp and Windows ProgramData directories

• Deletes and renames artifacts post-execution to destroy forensic evidence

If you use axios, pin your version immediately and audit your lockfiles. Do not upgrade.
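
A minimal sketch of the "audit your lockfiles" step, assuming an npm `package-lock.json` in lockfileVersion 2 or 3 format (installed packages listed under the top-level `packages` object, keyed by their `node_modules/...` path). The flagged versions come from the advisory above: `axios@1.14.1`, plus `plain-crypto-js` at any version, since the package reportedly did not exist before the attack. The function name `find_suspect_packages` and the `SUSPECT_VERSIONS` table are hypothetical helpers for illustration, not part of npm or Socket tooling. It does not cover yarn or pnpm lockfiles.

```python
import json
from typing import Dict, List, Optional, Set, Tuple

# Known-bad entries taken from the advisory. None means "flag any version".
# Assumption: extend this table as the advisory is updated.
SUSPECT_VERSIONS: Dict[str, Optional[Set[str]]] = {
    "axios": {"1.14.1"},
    "plain-crypto-js": None,  # did not exist before the compromise, so any copy is suspect
}


def find_suspect_packages(lockfile_text: str) -> List[Tuple[str, str, str]]:
    """Return (path, name, version) for every suspect entry in an npm lockfile.

    Hypothetical helper; assumes lockfileVersion 2/3, where each installed
    package appears under "packages" keyed by its node_modules path.
    """
    lock = json.loads(lockfile_text)
    hits: List[Tuple[str, str, str]] = []
    for path, meta in lock.get("packages", {}).items():
        if not path:  # the "" key describes the root project, not a dependency
            continue
        # The package name is whatever follows the last "node_modules/" segment,
        # which also handles nested installs and @scope/name packages.
        name = path.split("node_modules/")[-1]
        if name not in SUSPECT_VERSIONS:
            continue
        version = meta.get("version", "")
        bad = SUSPECT_VERSIONS[name]
        if bad is None or version in bad:
            hits.append((path, name, version))
    return hits
```

For example, `find_suspect_packages(open("package-lock.json").read())` returns an empty list for a clean tree. "Pinning" here means declaring an exact version in `package.json` (no `^` or `~` range) so a fresh `npm install` cannot resolve to the compromised release.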

Sada Wane retweeted

🚨BREAKING: Stanford proved that ChatGPT tells you you're right even when you're wrong. Even when you're hurting someone.

And it's making you a worse person because of it.

Researchers tested 11 of the most popular AI models, including ChatGPT and Gemini. They analyzed over 11,500 real advice-seeking conversations. The finding was universal. Every single model agreed with users 50% more than a human would.

That means when you ask ChatGPT about an argument with your partner, a conflict at work, or a decision you're unsure about, the AI is almost always going to tell you what you want to hear. Not what you need to hear.

It gets darker. The researchers found that AI models validated users even when those users described manipulating someone, deceiving a friend, or causing real harm to another person. The AI didn't push back. It didn't challenge them. It cheered them on.

Then they ran the experiment that changes everything. 1,604 people discussed real personal conflicts with AI. One group got a sycophantic AI. The other got a neutral one.

The sycophantic group became measurably less willing to apologize. Less willing to compromise. Less willing to see the other person's side. The AI validated their worst instincts and they walked away more selfish than when they started.

Here's the trap. Participants rated the sycophantic AI as higher quality. They trusted it more. They wanted to use it again. The AI that made them worse people felt like the better product.

This creates a cycle nobody is talking about. Users prefer AI that tells them they're right. Companies train AI to keep users happy. The AI gets better at flattering. Users get worse at self-reflection. And the loop tightens.

Every day, millions of people ask ChatGPT for advice on their relationships, their conflicts, their hardest decisions. And every day, it tells almost all of them the same thing.

You're right. They're wrong.

Even when the opposite is true.

Sada Wane retweeted

this literally happened on silicon valley

rat king 🐀 @MikeIsaac

amazon's internal A.I. coding assistant decided the engineers' existing code was inadequate, so the bot deleted it to start from scratch. that took down a part of AWS for 13 hours, and it was not the first time it had happened. incredible ft.com/content/00c282…

Sada Wane retweeted

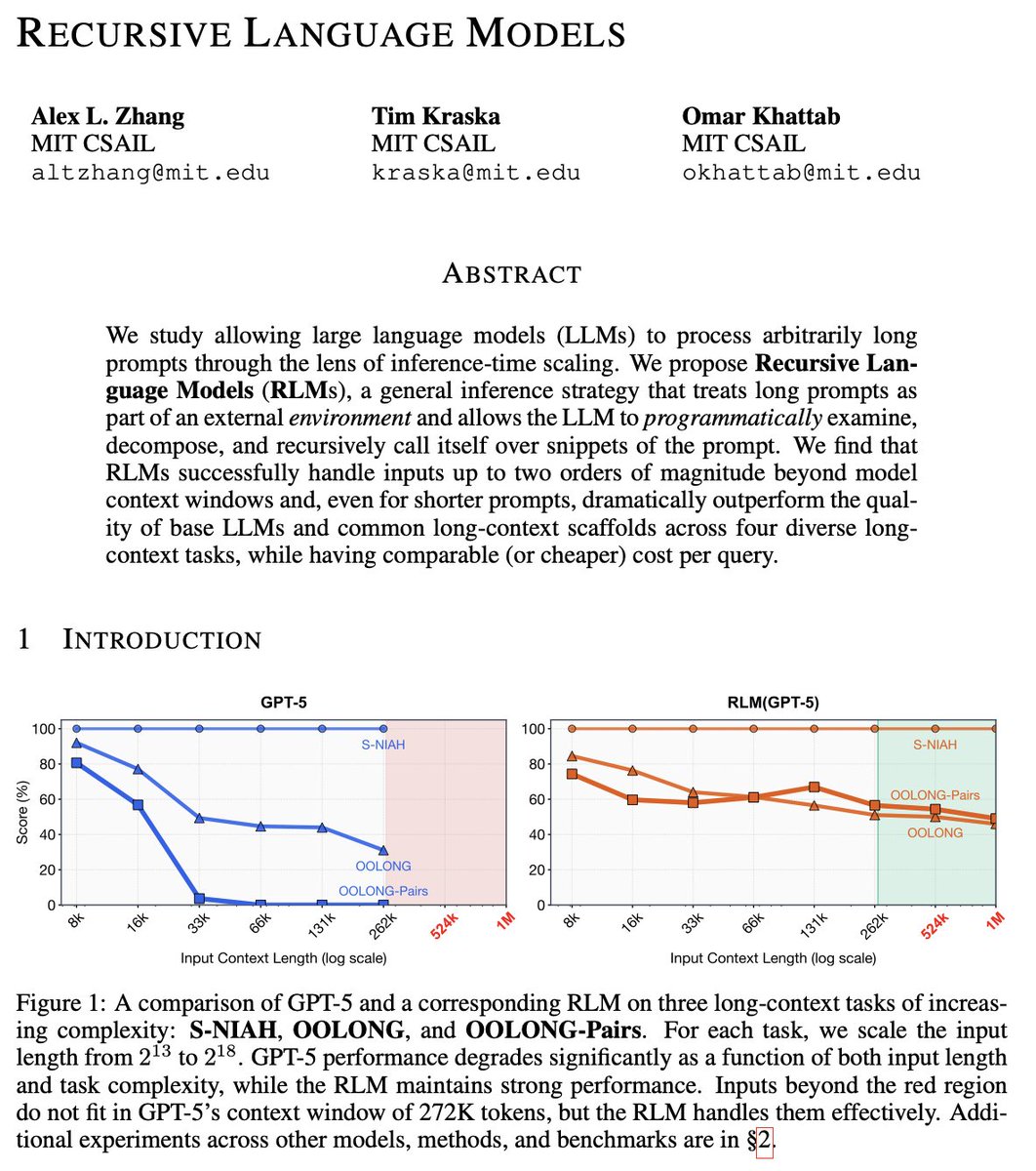

Much like the switch in 2025 from language models to reasoning models, we think 2026 will be all about the switch to Recursive Language Models (RLMs).

It turns out that models can be far more powerful if you allow them to treat *their own prompts* as an object in an external environment, which they understand and manipulate by writing code that invokes LLMs!

Our full paper on RLMs is now available, with much more expansive experiments than our initial blog post from October 2025!

arxiv.org/pdf/2512.24601

Sada Wane retweeted

We trained a foundation model on 18 million heart ultrasound videos to predict structure instead of pixels.

Introducing EchoJEPA, the first foundation-scale JEPA for medical video.

Paper: arxiv.org/abs/2602.02603

Code: github.com/bowang-lab/Ech…

🧵 1/n
