Z.ai

1.1K posts

@Zai_org

The AI Lab behind GLM models, dedicated to inspiring the development of AGI to benefit humanity. https://t.co/7a5aSCUNcZ https://t.co/x14hb3klXm

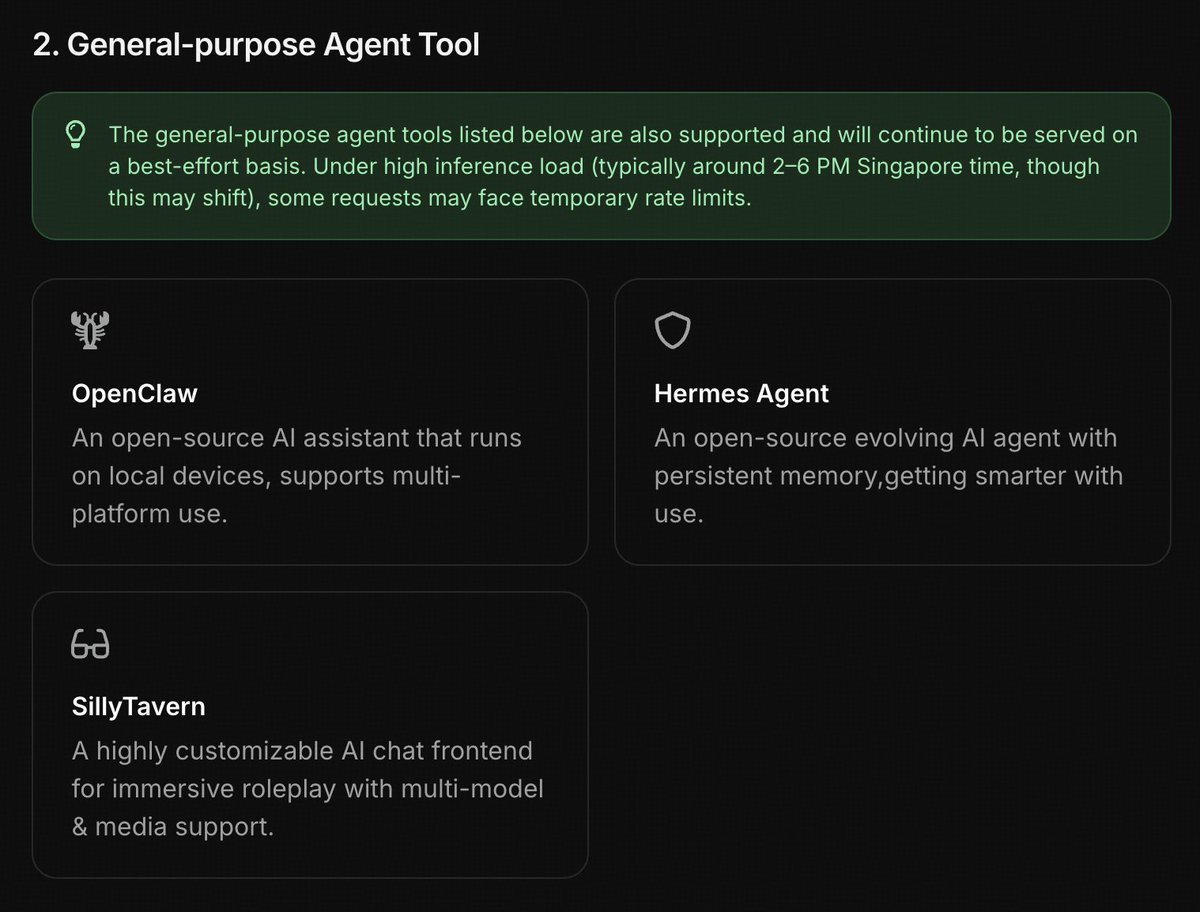

Usage limits tripled for GLM-5-Turbo in GLM Coding Plan! Enjoy the same high-volume capacity as GLM-4.7 during non-peak hours. Availability: Anytime except 2–6 AM ET. Ends: April 30.

Doing some stress tests on OpenMAIC’s Interactive Simulation with a DNA Replication case. 💻 Both are powered by @Zai_org, with GLM-5.1 and GLM-5V-Turbo each generating these complex pedagogical simulations in real time. Can you spot the difference? The "Turbo" is catching up surprisingly well...

Wait, this looks... different from what we had before? Stay tuned for April 20! 🚀

🔗 Live Demo: open.maic.chat

⭐️ GitHub: github.com/THU-MAIC/OpenM…