atulit@atulit_gaur

i love diffusion models and idk how but i went down a rabbit hole to trace their origins

and honestly this stuff is kinda beautiful when you see how it evolved

like it didn’t just appear out of nowhere… it’s literally years of ideas stacking on top of each other

it starts with

"deep unsupervised learning using nonequilibrium thermodynamics" (sohl-dickstein et al., 2015)

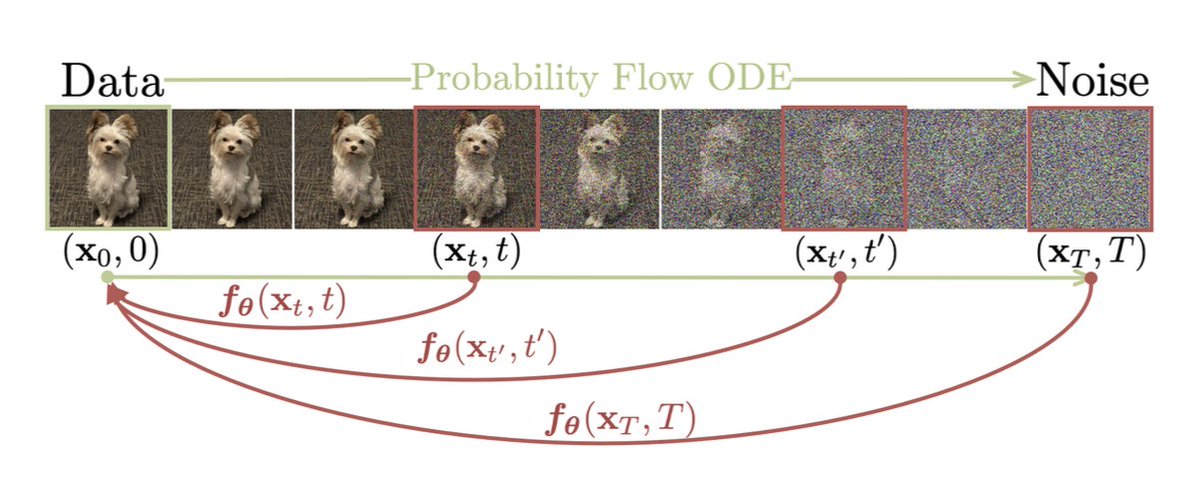

-> slowly destroy data with noise -> learn to reverse it

sounds simple but that’s the seed

then comes

"generative modeling by estimating gradients of the data distribution" (song and ermon, 2019)

-> learn gradients of data distribution -> understand where real images live

this is where score-based models come in

then things get real with

"denoising diffusion probabilistic models" (ho et al., 2020)

-> clean probabilistic formulation -> stable training -> actually works

this paper made diffusion practical

then

"diffusion models beat gans on image synthesis" (dhariwal and nichol, 2021)

-> diffusion > gans

this was a big shift

but still slow as hell

then the most important jump imo

"high-resolution image synthesis with latent diffusion models" (rombach et al., 2021)

-> compress images with vae -> run diffusion in latent space -> huge speedup

this is literally the backbone of stable diffusion

parallel to all this you have

"learning transferable visual models from natural language supervision" (radford et al., 2021)

-> align text and images -> enable text-guided generation

that’s clip

and finally stable diffusion (rombach et al., 2022 release implementation)

-> vae + unet + clip -> trained on large-scale data like laion

what’s crazy is the core idea never changed

add noise -> remove noise -> repeat

but layered with better math, better architectures, and better data

also something about this feels kinda philosophical

instead of solving a hard problem directly

they broke it into tiny easy steps

don’t generate an image

just make it slightly less noisy each time

again -> again -> again

and somehow that builds something meaningful