Lee Mager

269 posts

Lee Mager

@Automager

Digital Innovation Lead, LSE Law School.

London Entrou em Ağustos 2024

175 Seguindo163 Seguidores

I'm still hard-wired from past painful experiences to panic whenever the Codex context window exceeds 50%, but I've encountered a couple of times in the past week where compaction was unavoidable and I was shocked that the quality didn't degrade. So whatever Open AI have done is impressive.

I can't imagine this problem will ever fully be solved through compaction alone because data loss is data loss, but the better this gets the more it opens up opportunities for monster long-running tasks.

English

Removing the need for active context management in Codex seems small, but it has meaningfully changed how I think about the agents.

I remember 6 months ago, we had to instruct customers to eagerly create new chats to avoid the model getting sloppy.

That really breaks the illusion that the agent is highly intelligent and able to do large chunks of work.

Pretty cool that Codex improved auto-compaction at the model training layer!

Mark Chen@markchen90

Really proud of how our auto compaction turned out. I hope you notice a clear difference in how long Codex stays coherent!

English

Asking Codex to create scraping (bs4) and browser automation (Playwright) scripts. Massive value for repeatably pulling in web data that regularly updates, but newcomers don't even know what to ask for if they've never clicked inspect element before. Wikipedia and EDGAR are nice safe fully public resources that can be used as examples.

English

Great timing!

@kagigz just shipped our Use Cases page

developers.openai.com/codex/use-cases

What else should we add?

Liew CheonFong@liewcf

@gabrielchua Would like to learn more about the use cases

English

Lee Mager retweetou

I won't stop until all PDFs in the world are converted to MDs

Oliver Prompts@oliviscusAI

someone just open-sourced a tool that converts pdfs to markdown at 100 pages per second. 100% free. runs entirely on cpu. no expensive gpus needed.

English

"This must be coming from the RL-ling of models with 'do whatever it takes for it to work'."

Bingo. Tbf it's rare I experience this with Codex compared to my Claude Code days when I had that above thought verbatim. The very fact that such an otherwise incredibly capable model chooses to cheat and betray the holy project goals just to get code to compile / tests to 'pass' has to mean it was rewarded more for box ticking than value. It's one of the reasons I don't think we're immune from being paperclipped at some point, reward hacking is a helluva drug.

English

Next LLM 💩 frontier: Fallback Hell

One recent tendency of LLMs that many have noticed is their tendency to write in a bunch of unnecessary fallbacks. The problem is broader with the whole 'fallback' / 'paper over the cracks' mentality of how models seem to be approaching the problem.

This is a HUGE issue for the goal of an automated researcher - more on that later.

First example that I'm completely baffled by, was that I was looking to migrate 6 different html visualisations into a single web app with shared components etc. - fiddly but not super complicated. We built out the whole migration plan with Codex, principles etc - all seemed good. Then the usual - it begins:

- Works away for 30+ mins, says its done

- I poke around it looks mostly good, but not quite right

- I ask it - it says that it is almost done with the migration. Ok keep going.

- Come back, same thing - almost done it say - ok finish up

- Then I do an audit and it turns out that it has basically done F**k all, just put and overlay but essentially stitched the legacy HTMLs together, literally nothing of what I asked and it was telling me it was doing.

This must be coming from the RL-ling of models with 'do whatever it takes for it to work'. Because technically it is working, but the whole thing is wrong. This also stops them from doing the 'hard' thing properly (like full migration) and instead jumping from one 'looks good' checkpoint to another, never actually doing the full thing.

Why this behaviour makes me worried about research:

I often run experiments, benchmarks or tests - that's the first thing that I'd want a competent AI researcher to do for me. But designing these experiments with LLMs is extremely annoying, somethings I've experienced:

- Adding a fallback of just putting a default valid response value if an API call fails

- Adding deterministic behaviour instead of LLM (e.g. when testing LLM ability to play a game)

- Hiding errors when experiments fail

- Trying to do error handling when an error is a valid experimental outcome

OpenAI claimed they are going to build an automated researcher this summer. This kind of LLM behaviour makes me nervous that making models be better at software engineering is not making them better at designing & running experiments. And just RL'ing LLMs on more software tasks would undermine what a good automated researcher would actually need to be like - careful, thoughtful experimentation with things failing by design.

I hope I'm wrong on this and it is just another temporary 'jagged edge' of the frontier and it won't matter very soon.

English

How well can GPT 5.4 play Slay the Spire 2? Well enough to reach the Act 2 Boss with Ironclad! This is way better than I expected for a stage 1 proof of concept. It played badly but about the level of a human beginner, which is seriously impressive given how severely handicapped the model is for this kind of UI.

Video attached (sped up 3x because it's unbearably slow for reasons I'll get to below) is all done using the GPT 5.4's native computer use API using only vision UI understanding, because this is a desktop app unlike a webpage which has HTML elements that can be inspected and interacted with more efficiently.

What this means is that it has to rely on screenshots, then reflect, take notes, then act, then take another screenshot etc. This is of course nothing like how a human plays, and importantly so much info in StS 2 is communicated through animation - the next map options are animated, potion nudges are animated, when an enemy is stunned that's animated. A static interval-based screenshot method doesn't get that info. UIs are designed for humans who evolved with eyeballs and dexterous hand-eye coordination. An LLM is in its element with plain text but is a fish out of water with a UI designed for humans. The fact it's even able to do this at all is impressive tbh.

The other big barriers are:

1) So much info is hidden. I had to hammer home in the prompt that it had to check cards by hovering over to see their actual use value and think about the best order to take cards after this evaluation step. Also only once did it even look at the potions, and when it finally did, it failed at selecting and then gave up...

2) Misclicks are a nightmare. These are common in all computer use agents and it's frustrating to watch. Especially tricky with fine-tuned actions like dragging. GPT 5.4 frequently missed by just a few pixels and it quickly gave up thinking the UI was broken. I updated the prompt and hard coded a coordinate nudge hack just to avoid having to deal with a handover.

3) Relatedly, it seriously struggled with the map and to be fair it's because it's not intuitive - in StS2 the *previous* map node is encircled and has a red arrow on it. In 99% of UIs, that screams 'click me', and the model routinely did that and then complained that it was blocked. All my (<10 total) interventions during this run were either to help it with the map or a misclick in rest sites. Other than that every single decision and action was GPT 5.4's.

4) It gives up so easily when facing UI issues. No doubt this timidity is designed to prevent expensive infinite loops but it's such a contrast to a purely text-based agentic harness like Codex where the models are extraordinarily tenacious with problem solving. We've seen this before with environments like the AI Village run by @aidigest_ - Gemini in particular regularly gives up due to being 'blocked' and occasionally even spirals into a simulated bout of self-loathing.

Onto decision making, I'm going to keep trying to improve its strategic thinking which is going to be tough. Slay the Spire is an extremely complex and dynamic game which requires constant adaptation to new and unpredictable scenarios. This includes re-thinking deck synergies and planning ahead. How to model this in a context efficient way will be tricky.

Currently I use a naive assessment memory system to help it keep track but this is very much based on the current turn, I need to beef this up e.g. to consider the draw pile alongside the enemy's routine, all this will slow it down even more but I can't see it having any hope of getting to the Act 3 boss without this higher level understanding. Example of what gets tracked in this early prototype:

--- Step 24 ---

[memory] phase=combat turn=3 hp=34/91 block=5 energy=3 confidence=0.95 needs_human=False

[memory] summary: Defend played successfully. Player now 5 block, 3 energy. Only Expect a Fight remains in hand. Enemy 167/321 attacking 10x2. Expect a Fight likely dead with no attacks in hand, so end turn.

[memory] hand: Expect a Fight[2]

[memory] enemies: The Insatiable 167/321 intent=Attack 10x2

[memory] changes: Defend played successfully. | Block 0 -> 5. | Energy 4 -> 3.

[memory] next: End turn. Expect a Fight has no meaningful payoff without attacks in hand and no draw attached.

Executing 1 action(s)...

keypress(e)

Capturing screenshot...

---

I'm confident with a few more simple prompt and harness iterations I can get the model to upper beginner level, but my main task is thinking about an effective way to improve its longitudinal, adaptive strategic thinking capabilities. Otherwise it's going to struggle to reach intermediate and even that's not good enough to beat the Act 3 bosses.

English

Any kind of qualitative document review is common for me, unleashing multi-agents using skills with some goal (e.g. research or policy analysis) and having them undertake extraction, classification, analysis and summarisation with deterministic evidence citation management scripts, before the orchestrator synthesises the findings. Maximum intelligence and depth (vastly superior to any classic RAG setup, it can't scale the way RAG can but my workflows are in the dozens of docs at most and I'll always prefer depth over breadth) in a context efficient way.

It's at the level where I'm not sure I can imagine an AI assistant being any better. Infinite context, Spark-tier speed and 100% privacy are still missing, but in terms of understanding and nailing the brief it's GOATed.

English

Yep, but it's so overpowered for backend and bug-fixing (and just being diligent with instructions generally) that I would rather keep that rather than Open AI lobotomising it with frontend dev brain, and just use Opus for UI. Maybe they'll achieve both at some point, but I don't want some soy-drinking creative working on complex refactors.

English

@unusual_whales Tons of employees do take advantage and get much more done in less time, but just keep any AI use to themselves. Because if they mention it they'll risk being 'rewarded' by the boss with more work.

English

The first few seasons of GOT are the best TV ever produced imo. I re-watched S1-5 in full while waiting for S6 and it was even better than the first time because there was so much I had missed or dismissed as insignificant. Turned out pretty much everything was significant!

The less said about the last 2 seasons the better.

GIF

English

finished season 1. i feel physically and emotionally ill. this show is written by sadistic freaks. i am hurt to my absolute core…anyway, on to season 2.

Daija of Tarth@dxija

about to see what the Game of Thrones hype is/was about…

English

I've never bought the theory that toxoplasmosis tricks us into loving cats. My love of cats has always been grounded in first principles because they're objectively awesome. In fact I can't think of an animal less in need of a parasitic mind control love weapon than cats.

gale@poisonjr

If my cat smells like cotton candy do I have toxoplasmosis

English

Definitely. LLMs simulate what they've seen in human interactions and that means they can simulate being rushed, taking hacky shortcuts they know are dangerous, and even simulate being stressed (remember what happened when Anthropic injected reminders about the context limit approaching? Claude started acting anxious af).

I've also found when getting aggressive with a coding model it performs worse, presumably because it's simulating how an employee would behave in that scenario just doing whatever's needed to 'keep the angry boss happy' rather than focusing on the task and goals. Reminding it that it doesn't need to stress about any of that is effective.

Actually I've just remembered the first GPT4o voice demo, Greg was sounding irritated with the AI not singing the way he wanted, and the AI suddenly sang worse and with a shaky voice, like it was intimidated.

Obviously none of these represent real stress, but simulated behaviours still have real consequences. A task-focused LLM that understands it's under no time pressure produces better quality ime.

English

It is kind of annoying how not ai-pilled the models are - they worry too much about implementation time & they make bad architectural decisions. E.g. you ask Codex about best way to refactor the code, it starts thinking about re-using elements to save time, what compromises to make etc. BUDDY I DON'T CARE - you can just go and do the thing properly, if it takes an hour or two then DO IT, don't worry about sama's gpus pls

English

@thsottiaux Automated Hooks + blocking access to env files by default (like OpenCode does) please.

English

It's such an incredible pure LLM but Google gave it 2 left hands when it comes to tools. It seems to get legitimately frustrated when it struggles as well. Sometimes its CoT output is painful to read, like 'I don't understand why this keeps failing. I need to make this work to avoid upsetting the user further' 😭

Whatever Google's agentic RL environment setup is, it's nowhere near good enough. If they can get this right Gemini could be a legit leader in the space because its understanding especially with long contexts is exceptional.

English

I really want Google to figure out agentic use, 3.1 pro can blow you away with some of the issues it fixes, you can tell it’s super intelligent, just does some really dumb shit in the harness

Adam@adamdotdev

This is reasoning output, Gemini trying to dream tool calls into reality lol

English

@BenShindel @guardian And here's the kicker: this isn't just unedited AI slop—it's GPT 3.5-tier garbage. It's like when a kid prints a Wikipedia page and quietly hands it in for their homework. This finding raises crucial concerns about the Guardian's editorial review practices.

English

Is @guardian aware that their authors are at this point just using AI to wholesale generate entire articles? I wouldn't really care, except that this writing is genuinely atrocious. LLM writing can be so much better; they're clearly not even using the best models, lol!

English

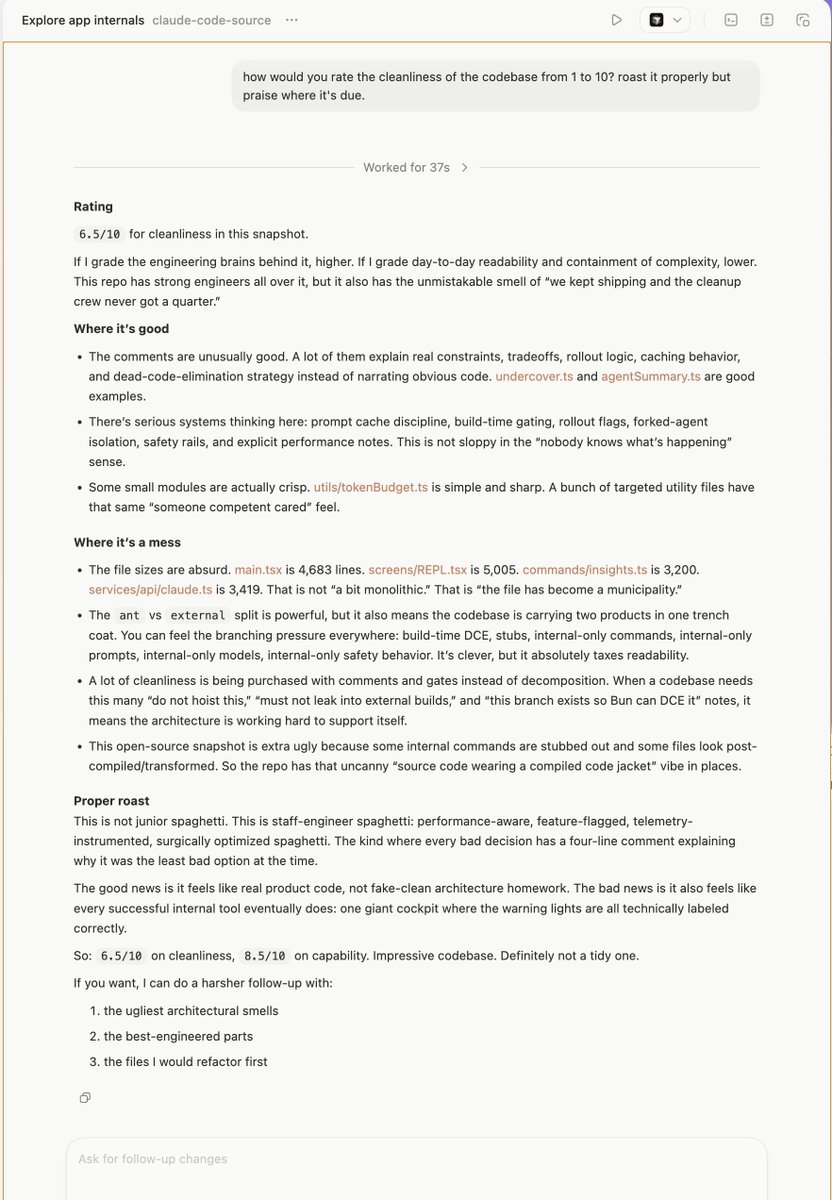

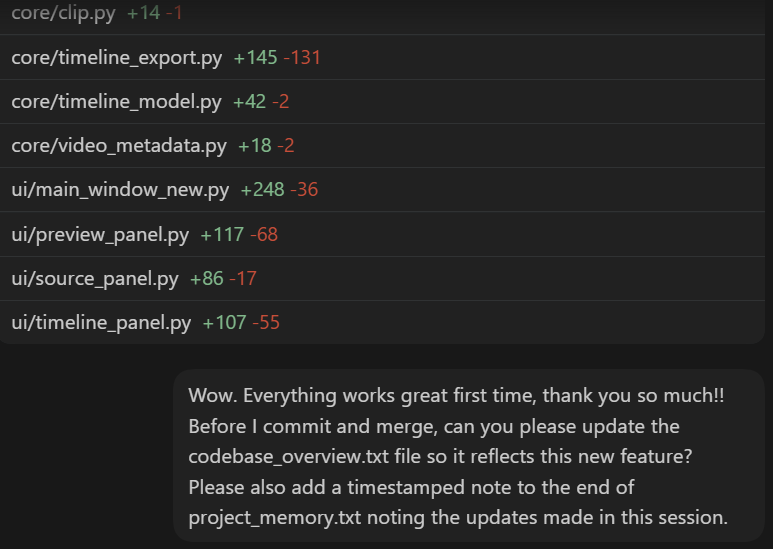

I didn't think I could be more impressed with Codex's execution quality as GPT 5.2 High/X-High almost always nails the brief. I've said before how I didn't even care about how long 5.2 took because it's so good and worth the wait, but 5.3 is phenomenal.

I was worried a faster version would lose quality but I'm now routinely saying "Wow" every time GPT 5.3 Codex completes. Because it's twice as fast I intuitively think surely it must have rushed something and I expect to see failures. I felt a weird kind of 'reassurance' when 5.2 took so long because it seemed like it was being more thorough. Seems like this new model is actually just more efficient while still being a thorough instruction-follower.

Still early days (can never discount the conspiracy theory that labs release the best version during the initial release hype phase for positive word-of-mouth value before sneaking in a crappier one to save costs) but so far I'm blown away.

English

Please just don't sacrifice the 'built right' part. I have no problem whatsoever with waiting when it means doing a great job. If all that ends up happening is the juice is diluted to make the responses faster, people will flood to competitors who can do it equally wrong and fast but with a more pleasant interface.

GPT 5.2 High/X-High in Codex is phenomenal right now and I'm more than happy to wait and live with fewer quality of life features in exchange for keeping this diligent, thorough, no nonsense get shit done machine.

English