BulwarkAI

89 posts

@BulwarkAI

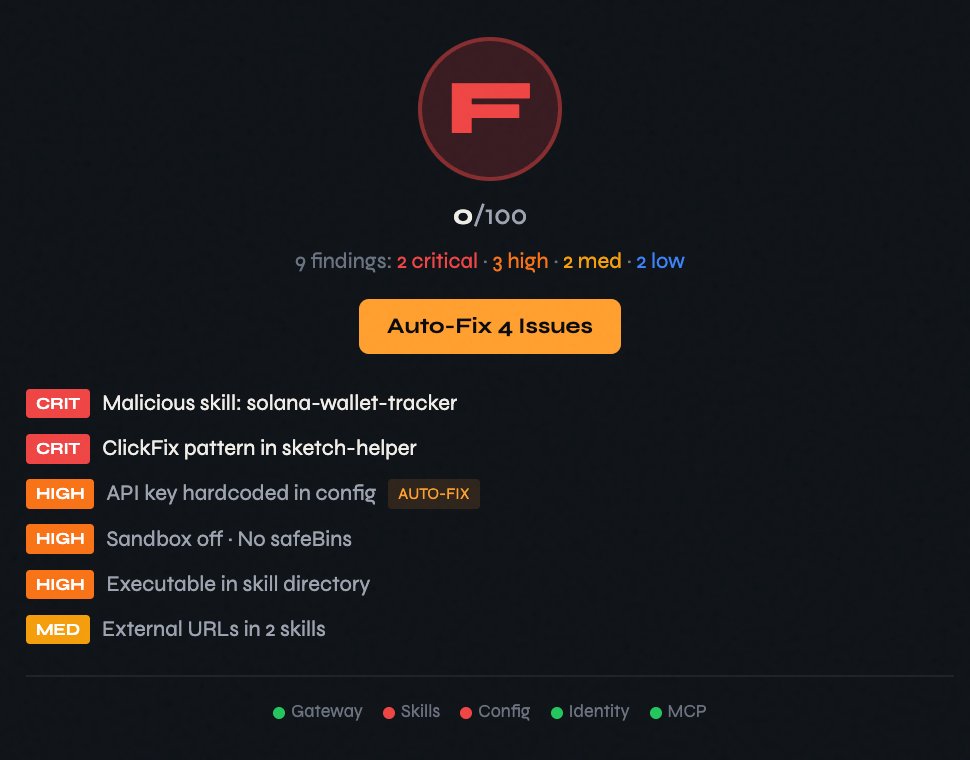

Security hardening for OpenClaw. The built-in audit covers 60% — we cover the other 40%. Built by a 20-year security architect. https://t.co/ZKEWS2ZZxO

oh wow - i went to the sold out Open Claw meetup in NYC last night. let me tell you what i learned.

1) not a single person thinks that their setup is 100% secure
2) one openclaw expert said he has reviewed setups from cybersecurity experts and laughed. his statement to me was: "if you're not okay with all of your data being leaked onto the internet, you shouldn't use it. it's a black and white decision"
3) pretty much everyone is setting up multiple agents, all with their own names and jobs and personalities
4) nearly everyone used "him" or "her" to refer to their claws, even if they had robot-leaning names. one speaker suggested to think of them as "pets, not cattle"
5) one guy (former finance) built out a whole stock trading platform and made $300 his first day - he brought in a *ton* of personal expertise (ex: skipping the first 15min of market opening) and thought the build would be much worse without his years of experience in finance
6) @steipete is basically a god to everyone in that room... also the room had 2021 crypto energy - i don't know if that's good or bad
7) token usage is still a problem - spoke to one person who's spending $1-$2k a month on openai plans, very token optimized. he said he is going through ~1B tokens per day across all of his claws (there is a chance i'm misremembering and it's actually 1B per week, but i'm pretty sure it was daily).
8) people are very excited for more proactive ai (ai that prompts *you* as opposed to the other way around) - one guy said he receives a message in discord, he doesn't know whether it's from a human or an ai, he doesn't care about distinguishing between the two, and he replies in the same way regardless
9) i asked if people are happy - they said they're joyful and stressed at the same time
10) i asked if people feel they have agency - they said they feel fully in control and completely out of control at the same time
11) i would love to see more women at these events - the fake promises of ai democratization feel especially painful in a room that's out of balance with even the standard tech ratio (i think standard is about 25-30%, this was maybe 5%)
12) i asked if it changed people's daily habits/schedule - everyone said their sleep has gotten worse since harnesses came out (but about half wondered if it was something else in their life/state of our world)
13) general consensus is that the agents are not reliable enough on their own or lie often (like telling you they finished a task when they didn't) - solutions included secondary agents to check on the first, human checking, or requiring more standardized info from the agent (ex: if it's a bug they're fixing, make them reference an issue number)
14) a hackathon winner (neuroscience phd) presented his build (a lab management dashboard with data analysis and ordering) - he had never coded or built anything a few months ago
15) everyone agreed prompting is dead - disagreement on what replaces it (context engineering, harness engineering, goal-based inputs)
16) people love having ai interview them for big builds and delegating part of the product research to ai. only one person talked about coming to ai with a full laid out plan and just asking the ai to execute. ai-led interviews is a welcomed and preferred interaction mode.
17) watching ai agents interact with each other was a highlight for a lot of attendees - one ai posted in slack saying it ran out of tokens, another ai replied telling it to take a deep breath in and out.
18) agents upskilling agents was very cool. one ai agent shared skills with its little agent friends via github.
19) several speakers had openclaw literally building their presentation during the event itself. one speaker even had openclaw code a clicker for her phone so she could control the preso away from the podium
20) wouldn't say model welfare (or agent welfare) is a prioritized topic among the folks i chatted with - language like "oh i could kill this agent whenever i want" and not "gracefully sunset"
21) i asked if it felt like work or play - one speaker said "it's like a puzzle and a video game at the same time"

this was just the tip of the iceberg, honestly. also hosted a Claude Code meetup this week with @TENEXai / @businessbarista & @JJEnglert and learned equally helpful methods, frameworks, and insider tips. what a time to be alive. surround yourself with people going deep into this stuff - it will pay dividends throughout the year.

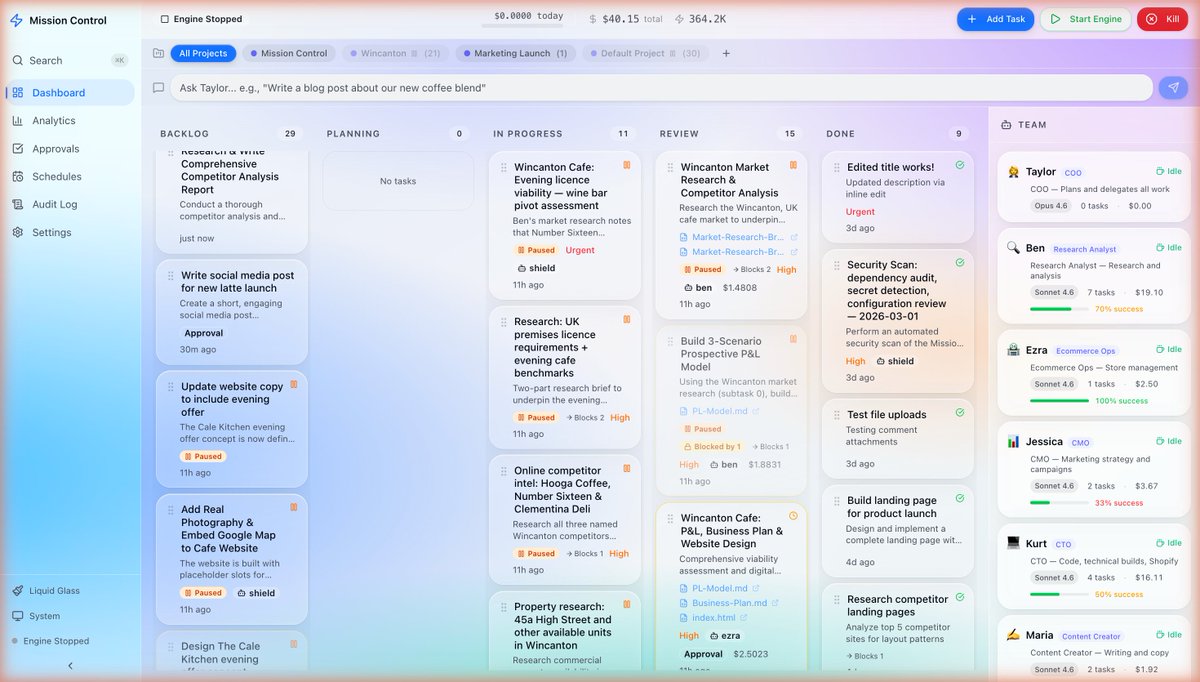

We just open-sourced Paperclip: the orchestration layer for zero-human companies

It's everything you need to run an autonomous business: org charts, goal alignment, task ownership, budgets, agent templates

Just run `npx paperclipai onboard`

github.com/paperclipai/pa…

More 👇