Kent Worcester

191 posts

@KentWorcester

Building @ValiantTrade on @Fogo | prev: @fortresslabs

Imagine closing your entire consumer memory division because this guy signed a non-binding letter saying he would buy 40% of the world’s RAM, only to have him rug pull 3 months later.

Holo3 is here 🚀. Today, we're launching Holo3: our new series of frontier computer-use models. 78.9% on OSWorld-Verified. That puts us ahead of GPT-5.4 and Opus 4.6, at one-tenth of the cost. Weights on Hugging Face. API is live. Test it now! #Holo3 #OpenSource #ComputerUse #OSWorld #AI #AgenticAI

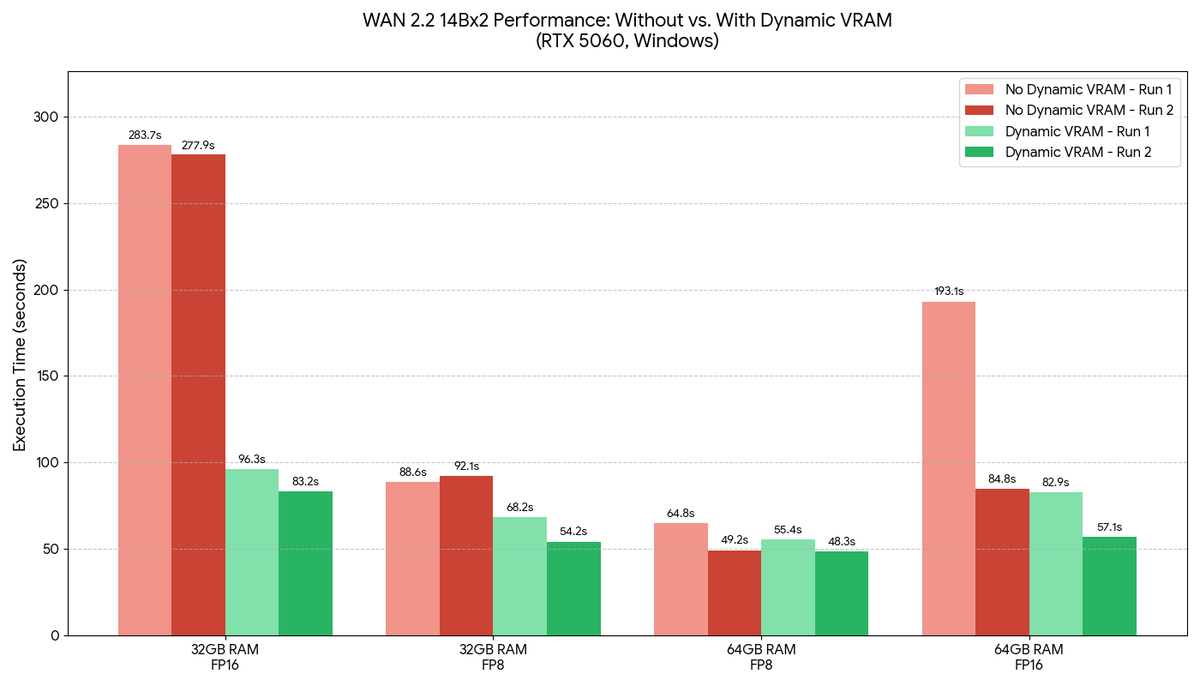

Introducing Unsloth Studio ✨ A new open-source web UI to train and run LLMs.

• Run models locally on Mac, Windows, Linux
• Train 500+ models 2x faster with 70% less VRAM
• Supports GGUF, vision, audio, embedding models
• Auto-create datasets from PDF, CSV, DOCX
• Self-healing tool calling and code execution
• Compare models side by side + export to GGUF

GitHub: github.com/unslothai/unsl…
Blog and Guide: unsloth.ai/docs/new/studio

Available now on Hugging Face, NVIDIA, Docker and Colab.