Dr. Sambit Praharaj

7K posts

@SambitPhD

Assistant Professor in CSE | 📚📝Hybrid Human-AI | Postdoc Research - AI in EdTech | 🇮🇳 🇳🇱 🇩🇪 | https://t.co/qcFTbxo31M | 🎥

CAREER Advice SUBSCRIBE 👇👉

Joined October 2012

764 Following, 834 Followers

Dr. Sambit Praharaj retweeted

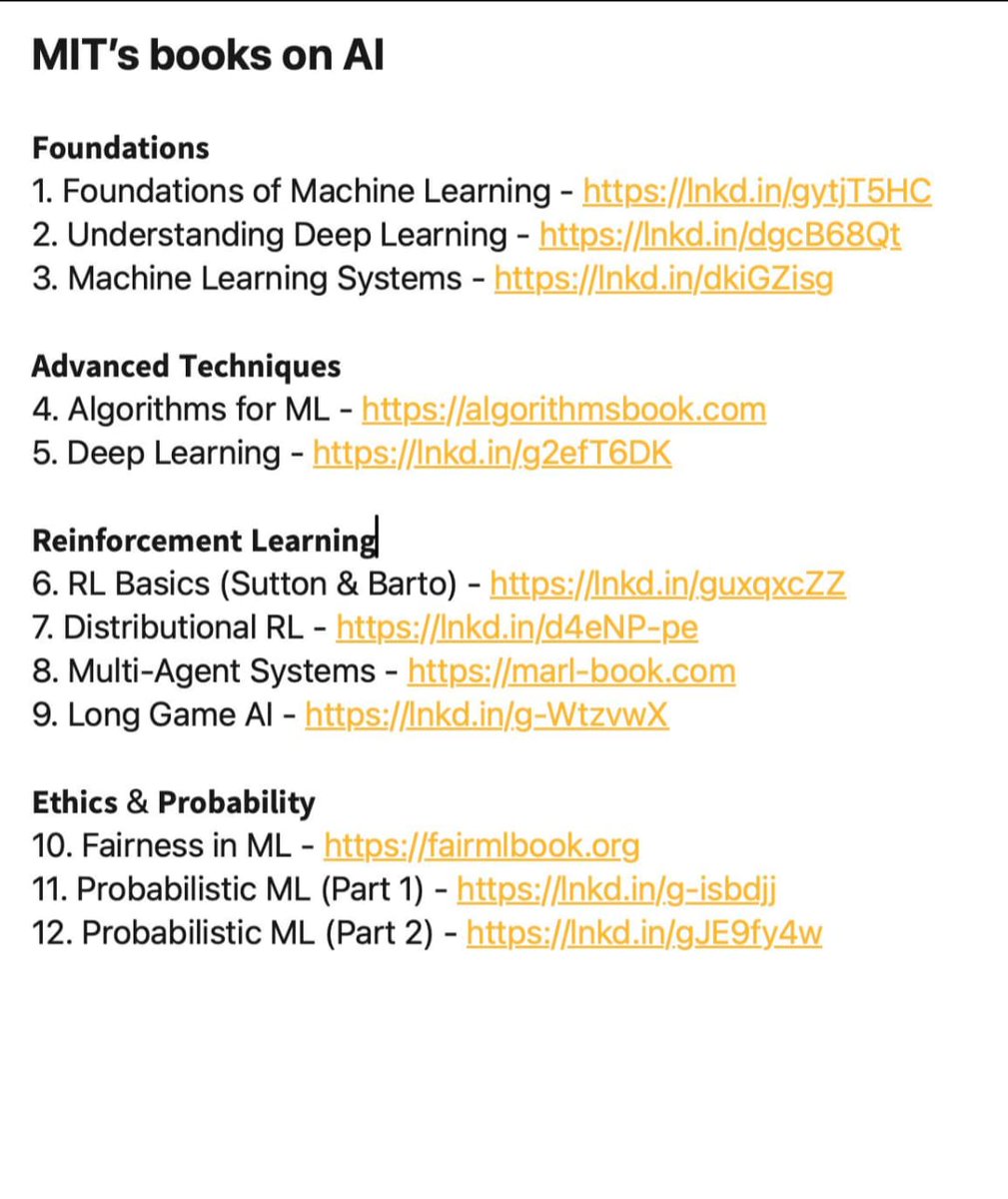

BREAKING: MIT just released its AI library for free. (Links included)

I went through these and honestly... this is better than most paid courses I've seen.

Here's the full list of books:

Foundations

1. Foundations of Machine Learning: Core algorithms explained. Theory meets practice.

2. Understanding Deep Learning: Neural networks demystified. Visual explanations included.

3. Machine Learning Systems: Production-ready architecture. System design principles.

Advanced Techniques

4. Algorithms for ML: Computational thinking simplified. Decision-making frameworks.

5. Deep Learning: The definitive textbook. Covers everything deeply.

Reinforcement Learning

6. RL Basics (Sutton & Barto): The classic. Agent training fundamentals.

7. Distributional RL: Beyond expected rewards. Advanced theory.

8. Multi-Agent Systems: Agents working together. Coordination and competition.

9. Long Game AI: Strategic agent design. Future-focused thinking.

Ethics & Probability

10. Fairness in ML: Bias detection. Responsible AI practices.

11. Probabilistic ML (Part 1 & 2)

Links: lnkd.in/gkuXuexa

Most people pay thousands for bootcamps that teach half of this.

Bookmark it. Start anywhere. Just start.

Repost for others. Follow for more insights on AI agents.

MIT's books on AI

Foundations

1. Foundations of Machine Learning - lnkd.in/gytjT5HC

2. Understanding Deep Learning - lnkd.in/dgcB68Qt

3. Machine Learning Systems - lnkd.in/dkiGZisg

Advanced Techniques

4. Algorithms for ML - algorithmsbook.com

5. Deep Learning - lnkd.in/g2efT6DK

Reinforcement Learning

6. RL Basics (Sutton & Barto) - lnkd.in/guxqxcZZ

7. Distributional RL - lnkd.in/d4eNP-pe

8. Multi-Agent Systems - marl-book.com

9. Long Game AI - lnkd.in/g-WtzvwX

Ethics & Probability

10. Fairness in ML - fairmlbook.org

11. Probabilistic ML (Part 1) - lnkd.in/g-isbdjj

12. Probabilistic ML (Part 2) - lnkd.in/gJE9fy4w

Dr. Sambit Praharaj retweeted

Best YouTube Channels To Learn AI in 2026 (No BS)

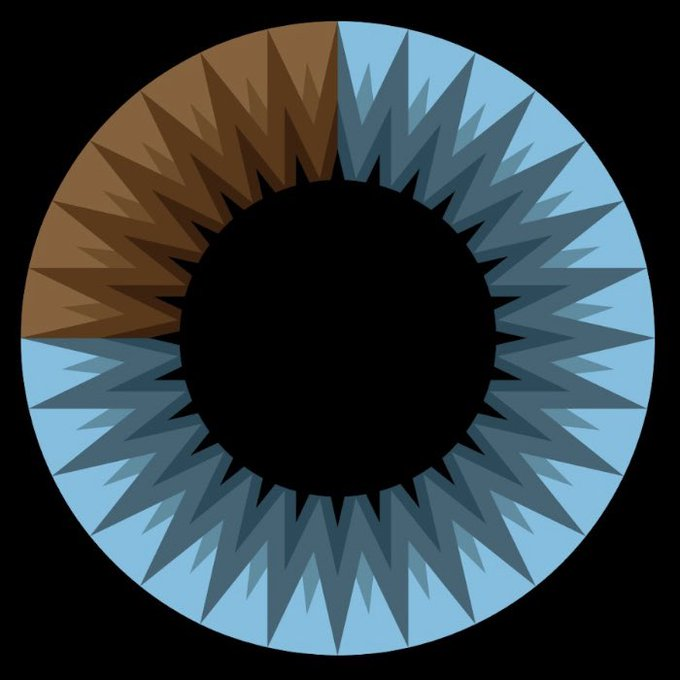

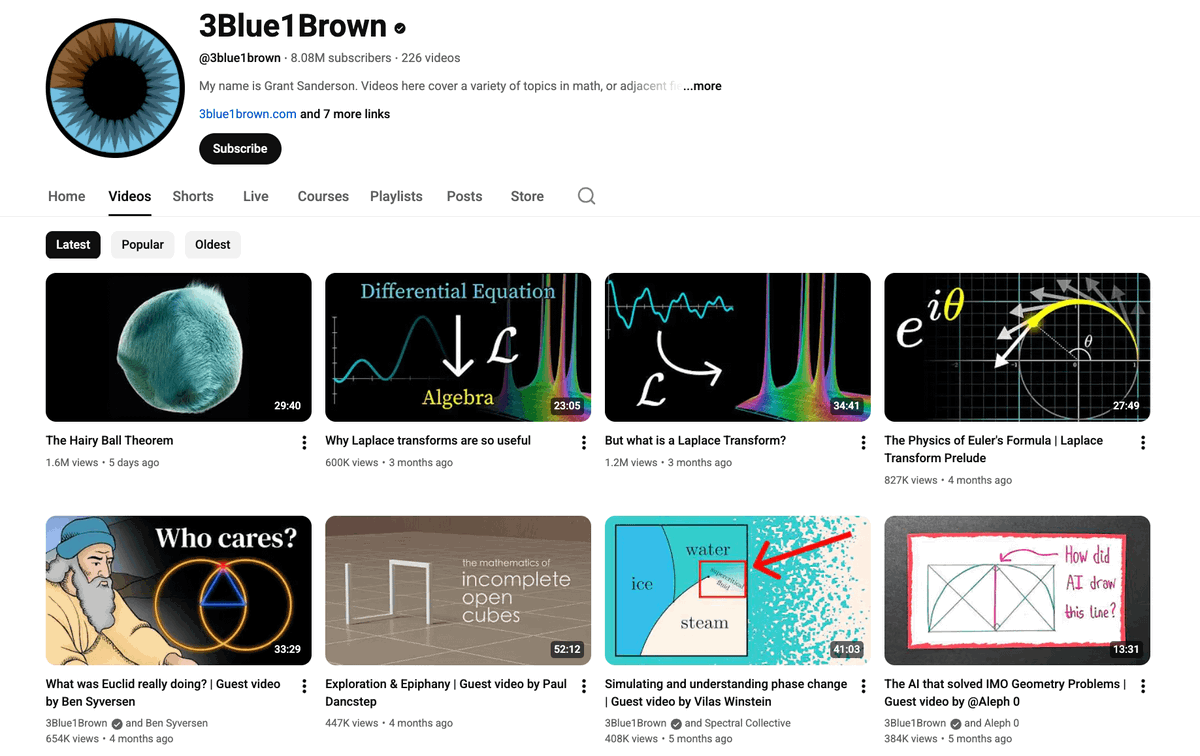

1. Fundamentals – 3Blue1Brown

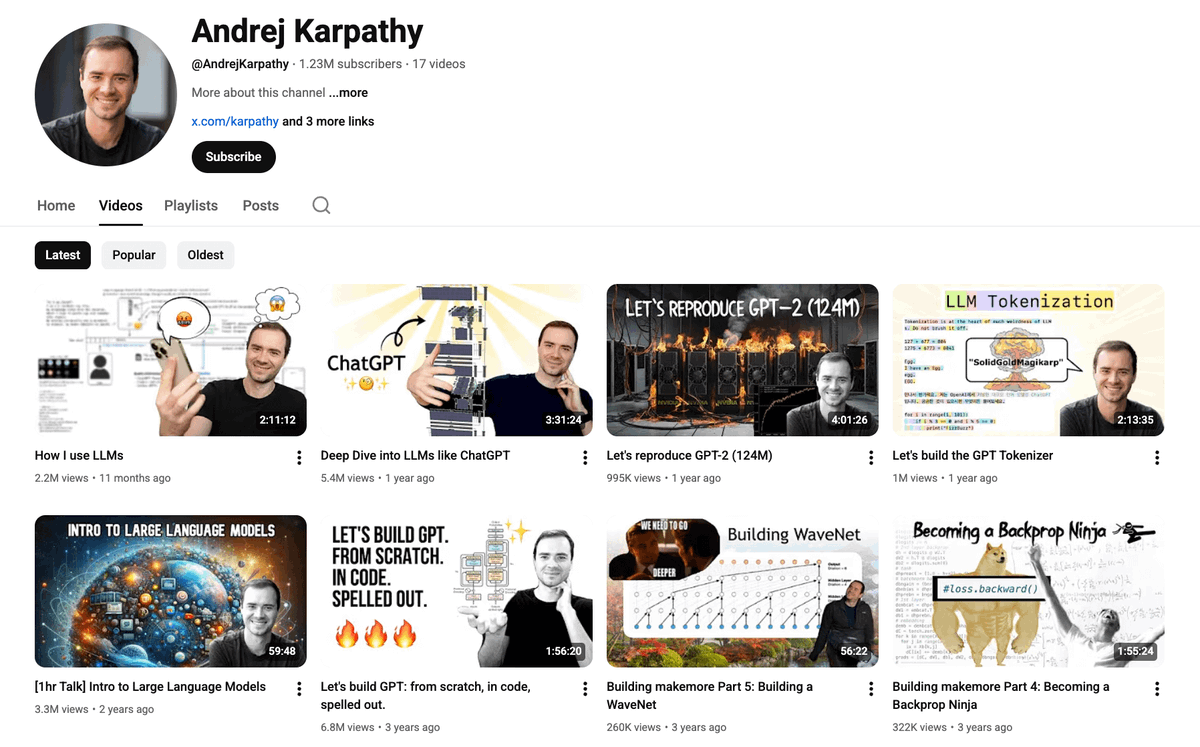

2. Deep Learning – Andrej Karpathy

3. AI Research – Yannic Kilcher

4. Practical AI – AssemblyAI

5. LLMs – AI Explained

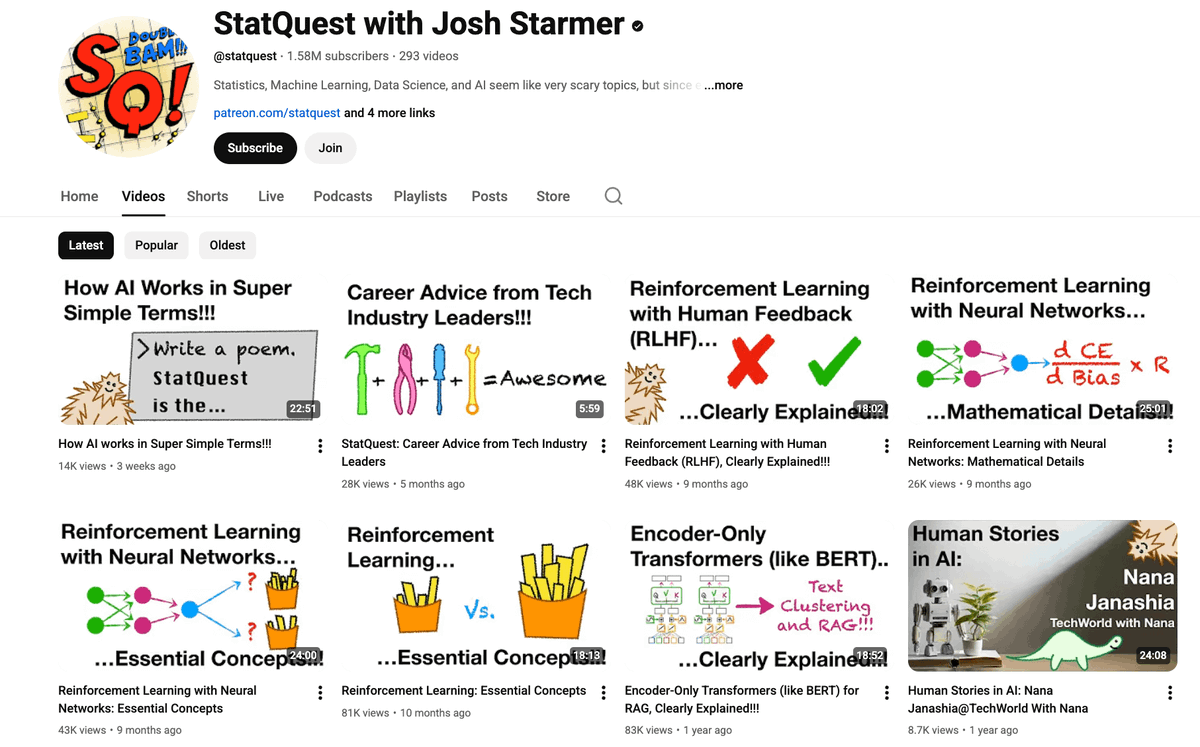

6. ML Theory – StatQuest

7. Papers Simplified – Two Minute Papers

8. GenAI – Matthew Berman

9. AI Agents – Nicholas Renotte

10. Applied ML – Krish Naik

11. PyTorch – Aladdin Persson

12. Math for ML – Serrano Academy

13. Industry Insights – Lex Fridman

14. Real-world AI – DeepLearningAI

Dr. Sambit Praharaj retweeted

RAG is broken and nobody's talking about it 🤯

Stanford just dropped a paper on "Semantic Collapse," proving that once your knowledge base hits ~10,000 documents, semantic search becomes a literal coin flip.

Here is why your RAG is failing:

Past 10,000 documents, your fancy AI search basically becomes a coin flip.

Every document you add gets turned into a high-dimensional embedding. At a small scale, similar docs cluster together perfectly. But add enough data, and the space fills up. Distances compress. Everything looks "relevant."
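The "distances compress" effect described above is usually called distance concentration. Here is a minimal Python sketch. It assumes uniformly random vectors in a unit hypercube, which is an illustrative stand-in: these are not real embeddings, and this is not the setup of the paper the thread cites. The sketch measures the gap between the farthest and nearest point from a query, relative to the nearest distance. As the dimension grows, that ratio shrinks, so being "nearest" carries less and less signal.

```python
import math
import random

def relative_contrast(dim: int, n_points: int = 200, seed: int = 0) -> float:
    """(farthest - nearest) / nearest distance from one random query to random points.

    Query and points are drawn uniformly from [0, 1]^dim, an illustrative
    assumption; real embedding distributions are not uniform.
    """
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = [
        math.dist(query, [rng.random() for _ in range(dim)])
        for _ in range(n_points)
    ]
    return (max(dists) - min(dists)) / min(dists)

for dim in (2, 10, 100, 1000):
    # The ratio falls as dim grows: every point looks about equally "relevant".
    print(dim, round(relative_contrast(dim), 3))
```

The name `relative_contrast` is hypothetical. Real embeddings often sit on lower-dimensional structure, so the effect on them may be weaker than in this uniform toy setup.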

It’s the curse of dimensionality. In a 1,000-dimensional space, more than 99.9% of a ball's volume lies in a thin outer shell, and your data ends up almost equidistant from any query.
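The "outer shell" figure comes down to simple arithmetic under one assumption: points are spread uniformly through a ball, which real embeddings are not. Volume scales as r^d, so the inner ball of radius 1 − ε holds a fraction (1 − ε)^d of the volume. The shell of relative thickness ε holds the rest. A short Python check:

```python
def shell_fraction(dim: int, eps: float) -> float:
    """Share of a d-dimensional ball's volume within relative distance eps of its surface."""
    # Volume scales as radius**dim, so the inner ball of radius (1 - eps) holds (1 - eps)**dim.
    return 1.0 - (1.0 - eps) ** dim

print(shell_fraction(3, 0.01))     # about 0.0297: in 3D the 1% shell is a small slice
print(shell_fraction(1000, 0.01))  # about 0.99996: in 1,000D almost everything is "shell"
```

With ε = 0.01 and d = 1,000, the shell holds about 99.996% of the volume, which is consistent with the thread's "99.9%". The exact number depends on how thin you take the shell.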

Stanford found an 87% precision drop at 50k docs. Adding more context actually makes hallucinations worse, not better. We thought RAG solved hallucinations… it just hid them behind math.

The fix isn’t re-ranking or better chunking. It’s hierarchical retrieval and graph databases.
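The thread names hierarchical retrieval but does not specify an algorithm. One common reading is two-stage, coarse-to-fine search. First you score a small number of cluster centroids, then you rank only the documents inside the winning clusters, so the query competes against far fewer near-equidistant vectors. The sketch below assumes that reading. The cluster assignments, the tiny 3-dimensional vectors, and every function name are hypothetical, and it does not model the graph-database half of the suggestion.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]

def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def centroid(vectors: List[Vector]) -> Vector:
    # Mean vector of a cluster, used as its coarse "summary" embedding.
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def hierarchical_search(
    query: Vector,
    clusters: Dict[str, Dict[str, Vector]],  # cluster name -> {doc id -> embedding}
    top_clusters: int = 1,
    top_docs: int = 2,
) -> List[Tuple[str, float]]:
    # Stage 1 (coarse): rank clusters by query similarity to their centroids.
    best = sorted(
        clusters,
        key=lambda name: cosine(query, centroid(list(clusters[name].values()))),
        reverse=True,
    )[:top_clusters]
    # Stage 2 (fine): rank only the documents inside the selected clusters.
    hits = [
        (doc_id, cosine(query, vec))
        for name in best
        for doc_id, vec in clusters[name].items()
    ]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)[:top_docs]

clusters = {
    "databases": {"sql-joins": [0.9, 0.1, 0.0], "indexes": [0.8, 0.2, 0.1]},
    "ml": {"backprop": [0.1, 0.9, 0.2], "attention": [0.0, 0.8, 0.3]},
}
print(hierarchical_search([0.85, 0.15, 0.05], clusters))
```

In a larger system the clusters would typically come from something like k-means over the embeddings, and the centroid scores could be cached. The point is that stage 2 only touches a fraction of the corpus.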

Dr. Sambit Praharaj retweeted

20 YouTube channels that teach AI better than most CS degrees in 2026:

1. Andrej Karpathy

Deep, intuitive walkthroughs of neural networks and modern LLMs

youtube.com/@AndrejKarpathy

2. 3Blue1Brown

Visual intuition for math, linear algebra, and neural networks

youtube.com/@3blue1brown

3. StatQuest with Josh Starmer

Clear, friendly explanations of statistics and ML fundamentals

youtube.com/@statquest

4. Stanford Online

University-grade ML and AI lecture series (Andrew Ng, CS229, etc.)

youtube.com/@stanfordonline

5. sentdex

Practical machine learning and Python projects

youtube.com/@sentdex

6. Yannic Kilcher

Deep dives into ML and AI research papers

youtube.com/@YannicKilcher

7. MIT OpenCourseWare

Rigorous academic courses on ML, AI, and applied mathematics

youtube.com/@mitocw

8. Siraj Raval

High-level overviews and motivation around AI concepts

youtube.com/@SirajRaval

9. DeepLearningAI

Structured learning paths for deep learning and generative AI

youtube.com/@DeepLearningAI

10. Two Minute Papers

Fast, accessible summaries of cutting-edge AI research

youtube.com/@TwoMinutePapers

Dr. Sambit Praharaj retweeted

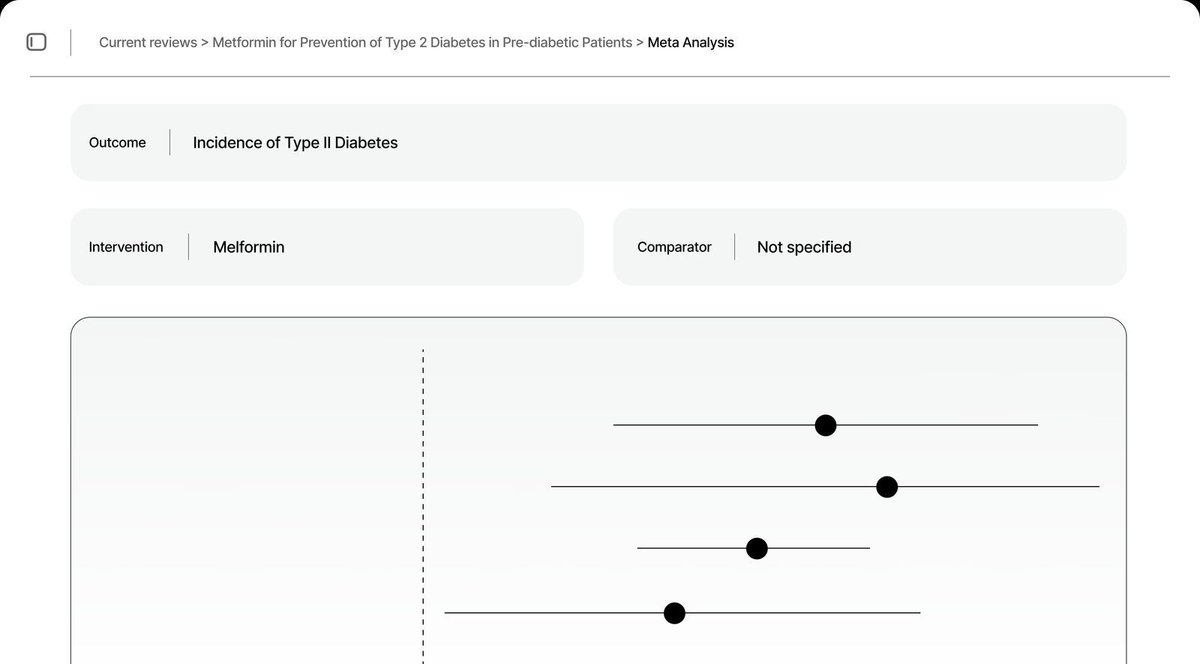

Systematic reviews in minutes to hours using artificial intelligence: medrxiv.org/content/10.648… (caveat: thinly veiled advertisement for scholara.ai)

Dr. Sambit Praharaj retweeted

I scraped every single NotebookLM prompt that blew up on X, Reddit, and academic corners of the internet.

Turns out most people are using NotebookLM like a fancy note-taker.

That's insane.

It's a full-blown research assistant that can compress 10 hours of analysis into 20 seconds if you feed it the right instructions.

Here's what actually works:

Dr. Sambit Praharaj retweeted

Most people talk about AI like it’s one single thing. It’s not.

AI is a stack, and once you see it this way, everything clicks.

Here’s the mental model 👇

1️⃣ Classical AI (the foundation)

Where it all began:

• Logic & reasoning

• Rule-based systems

• Expert systems

Smart, but rigid.

It followed instructions; it couldn’t learn.
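The rigidity of classical rule-based systems is easy to see in code. Below is a minimal forward-chaining sketch in which the toy medical rules and all names are hypothetical. The engine keeps applying if-then rules until no new facts appear. Nothing in it learns, so handling a new case means a human has to write a new rule.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule fires when all of its premises are known facts, adding its conclusion.
Rule = Tuple[FrozenSet[str], str]

def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    known = set(facts)
    changed = True
    while changed:  # repeat until a full pass adds nothing new
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known

# Hypothetical expert-system rules, hand-written by a "domain expert".
RULES: List[Rule] = [
    (frozenset({"has_fever", "has_cough"}), "suspect_flu"),
    (frozenset({"suspect_flu"}), "recommend_rest"),
]

print(sorted(forward_chain({"has_fever", "has_cough"}, RULES)))
# An unanticipated case ("has_rash") matches no rule, so nothing new is inferred.
print(sorted(forward_chain({"has_rash"}, RULES)))
```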

2️⃣ Machine Learning

Instead of rules, we gave machines data:

• Supervised & unsupervised learning

• Classification & regression

• Reinforcement learning

Now systems could improve from experience.

3️⃣ Neural Networks

Inspired by the human brain:

• Perceptrons

• Hidden layers

• Activation functions

• Backpropagation

This unlocked real pattern recognition.
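Here is a sketch of the first item in that list: a single perceptron trained with the classic perceptron learning rule on the logical AND function, a toy dataset chosen for illustration. This rule is not backpropagation. Backpropagation extends learning to hidden layers by passing gradients backward, which requires a differentiable activation rather than the hard threshold used here.

```python
from typing import List, Sequence, Tuple

def step(z: float) -> int:
    # Hard threshold activation of the original perceptron.
    return 1 if z > 0 else 0

def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)

def train_perceptron(
    data: List[Tuple[List[float], int]], epochs: int = 50, lr: float = 0.1
) -> Tuple[List[float], float]:
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in data:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            # Perceptron rule: shift weights toward the correct answer only when wrong.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

AND_DATA = [([0.0, 0.0], 0), ([0.0, 1.0], 0), ([1.0, 0.0], 0), ([1.0, 1.0], 1)]
w, b = train_perceptron(AND_DATA)
print([predict(w, b, x) for x, _ in AND_DATA])
```

AND is linearly separable, so the perceptron converges. XOR is not linearly separable, which is the classic motivation for adding hidden layers.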

4️⃣ Deep Learning

Neural networks, but deeper and more powerful:

• CNNs for vision

• RNNs & LSTMs for sequences

• Transformers for scale

This is where machines learned to see, hear, and understand.

5️⃣ Generative AI

Models that don’t just analyze; they create:

• LLMs

• Diffusion models

• VAEs

• Multimodal systems

Text, images, audio, and video, generated from learned patterns.

6️⃣ Agentic AI (the frontier)

The biggest shift yet:

• Memory

• Planning

• Tool usage

• Autonomous execution

This is where AI stops being a tool

and starts acting like a digital worker.

👇 The real takeaway

Each layer doesn’t replace the one below it;

it builds on it.

Understanding this stack is the difference between:

• Chasing AI hype

• Actually building systems that work

AI isn’t magic.

It’s architecture.

And the future belongs to those who understand

how these layers connect.

Dr. Sambit Praharaj retweeted

Google isn’t trying to win the AI race.

They’re trying to own the entire AI Agent ecosystem.

While everyone argues ChatGPT vs Claude, Google quietly built:

Models → Gemini Pro, Flash, Deep Think, Gemma

Design → Stitch, Whisk, Imagen

Research → NotebookLM, AI Mode

Video → Veo, Flow, Google Vids

Coding → Antigravity IDE, Gemini CLI, Jules

Agents → A2A, ADK, FileSearch API

The scary part?

All of these tools talk to each other.

That means:

10x faster prototypes

End-to-end AI workflows

Production-ready agents on GCP

The next AI war won’t be model vs model.

It’ll be ecosystem vs ecosystem.

I mapped this stack out here:

gamma.app/?utm_campaign=…

Save. Share. Build.

Dr. Sambit Praharaj retweeted

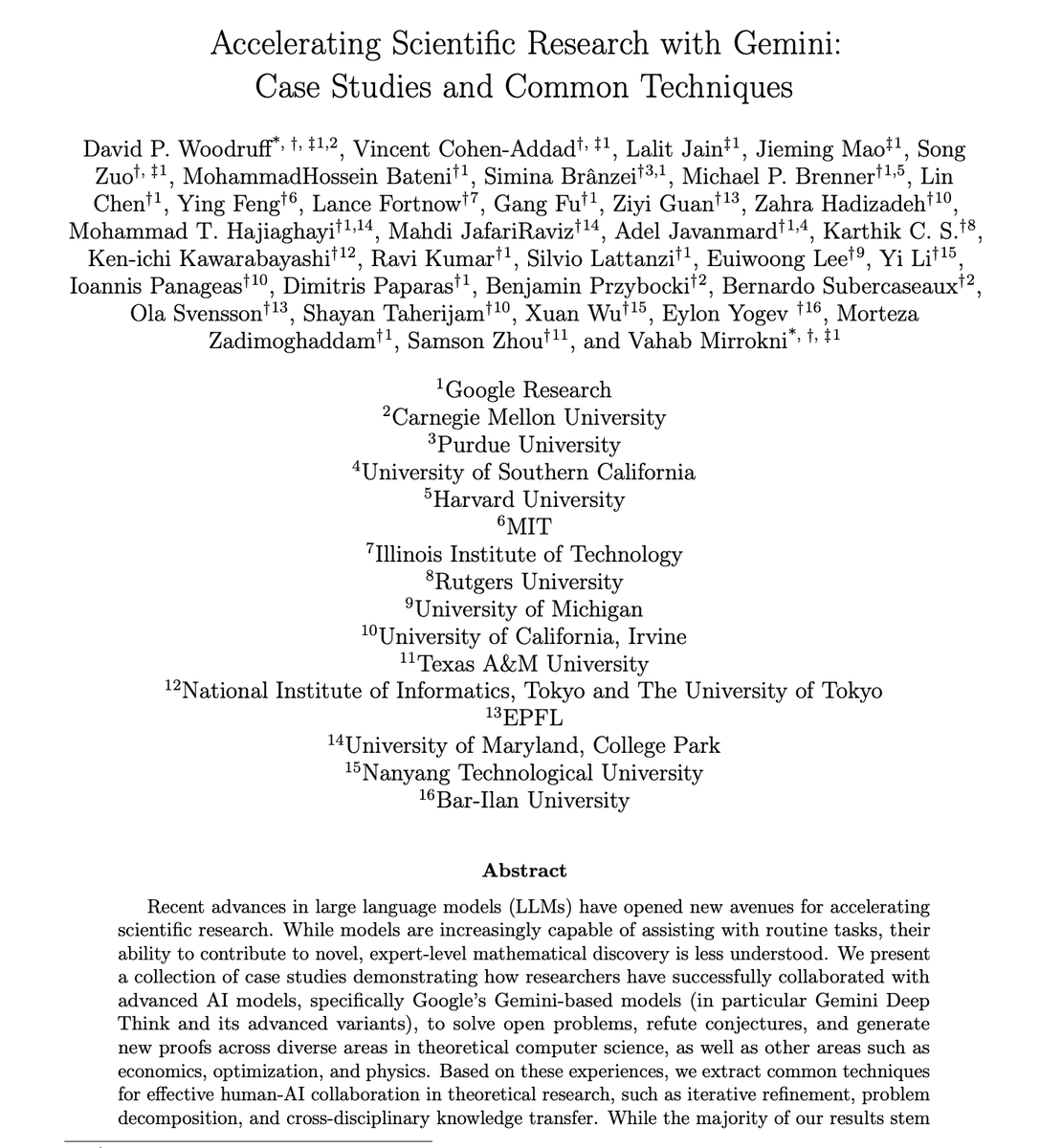

Google just dropped 145 pages documenting how researchers use Gemini to tackle scientific problems.

Save & Retweet (to help your network)

A few things that stood out to me (in simple terms):

- In one case, the AI was used as an adversarial reviewer and caught a serious flaw in a cryptography proof that had passed human review. That’s a very different use than “summarise this PDF.”

- The model links tools from very different fields (for example, using theorems from geometry/measure theory to make progress on algorithms questions). This is where its wide reading really matters.

- They don’t let the model run wild. Humans still choose the problems, check every proof, and decide what’s actually new. The model is there to suggest ideas, spot gaps, and do the heavy algebra.

- Agentic loops, not just chat

In some projects, they plug Gemini into a loop where it:

-- proposes a mathematical expression,

-- writes code to test it,

-- reads the error messages, and

-- fixes itself (humans only step in when something promising appears).
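The propose, test, read errors, fix loop can be sketched in a few lines. This is not Google's setup. The `propose` callback is a hypothetical stand-in for the model. The target (a closed form for 1 + 2 + ... + n) and its test cases are invented for illustration, and the stub proposer replays a fixed script instead of actually reading the feedback.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Cases = List[Tuple[int, int]]

def run_tests(expr: str, cases: Cases) -> Optional[str]:
    """Return None if `expr` (a Python expression in n) passes every case, else an error message."""
    try:
        # eval is acceptable only because candidates here are hard-coded, never untrusted.
        fn = eval(f"lambda n: {expr}")
        for n, expected in cases:
            got = fn(n)
            if got != expected:
                return f"f({n}) returned {got}, expected {expected}"
    except Exception as exc:  # SyntaxError, NameError, ZeroDivisionError, ...
        return f"{type(exc).__name__}: {exc}"
    return None

def refine(
    propose: Callable[[Optional[str]], str], cases: Cases, max_rounds: int = 5
) -> Tuple[Optional[str], int]:
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        expr = propose(feedback)           # model proposes, given the last error
        feedback = run_tests(expr, cases)  # code runs; errors become feedback
        if feedback is None:
            return expr, round_no          # promising: hand it to a human
    return None, max_rounds

def scripted_proposer(script: Iterable[str]) -> Callable[[Optional[str]], str]:
    # Hypothetical stand-in for the model: replays fixed drafts and ignores feedback.
    drafts = iter(script)
    return lambda feedback: next(drafts)

# Target: a closed form for 1 + 2 + ... + n.
CASES: Cases = [(1, 1), (2, 3), (3, 6), (10, 55)]
model = scripted_proposer(["n * (n + 1 // 2", "n * n", "n * (n + 1) // 2"])
print(refine(model, CASES))
```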

We are moving past the era of simple chat prompts and into a more sophisticated era of research.

⮑ If your institution is interested in hosting an AI session or a workshop, request your training here: forms.gle/dbRtc7j2W4zZyL…

Dr. Sambit Praharaj retweeted

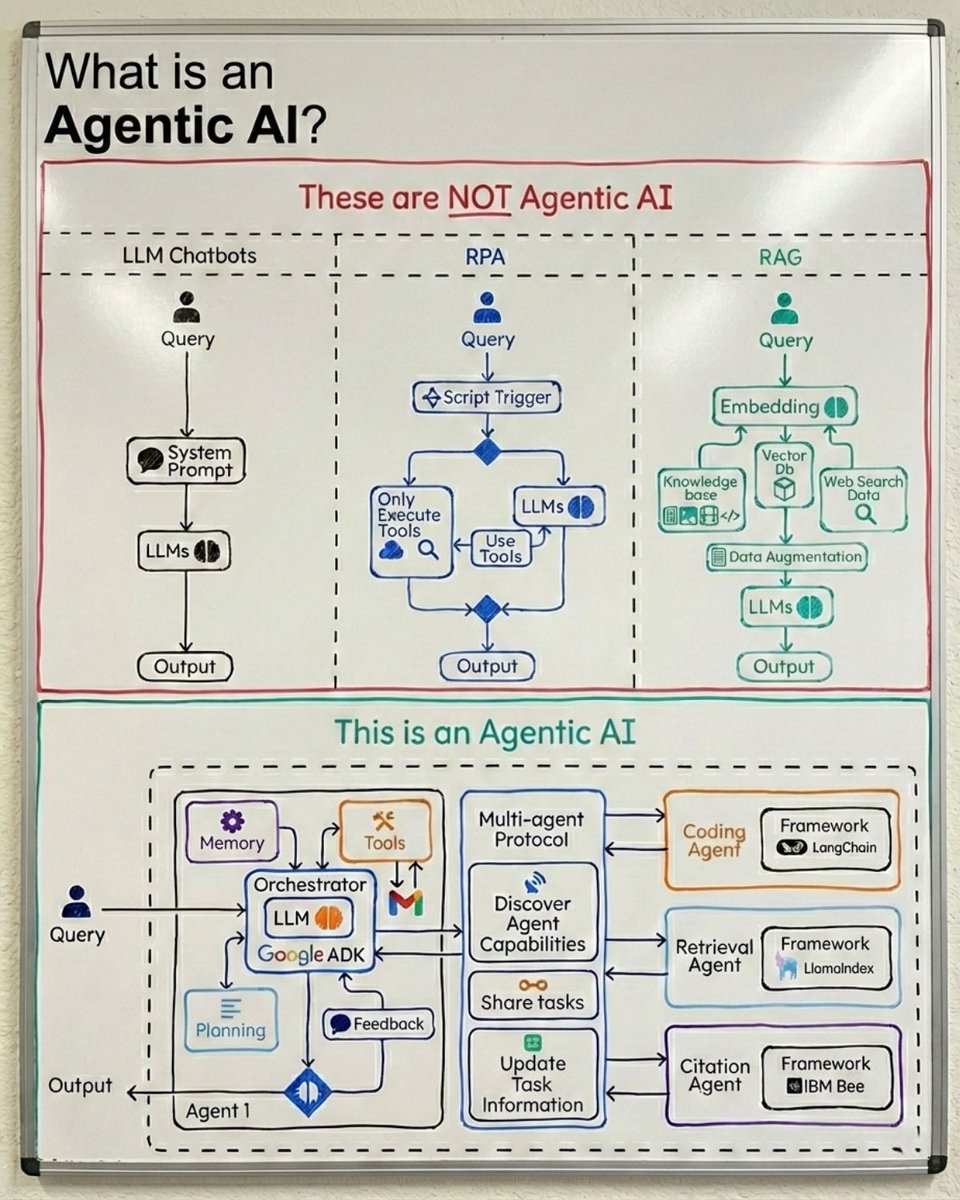

Chatbot: You ask. It answers.

RAG: You ask. It retrieves. It answers.

RPA: You trigger. It executes a script.

Agent: You give a goal. It figures out the rest.

That's the difference.

An agent has:

→ Memory (learns from interactions)

→ Planning (breaks down complex goals)

→ Tool selection (chooses what to use, not scripted)

→ Feedback loops (adjusts based on results)

→ Multi-agent coordination (delegates to specialists)

Most "agents" in production are RAG pipelines with a for-loop.

Real agentic AI has an orchestrator that thinks, deciding which tools, which sub-agents, which approach, and when to change course.

If your system can't change its own plan mid-execution, it's not an agent.

It's automation with an LLM inside.
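The distinction about changing the plan mid-execution can be made concrete. In this hypothetical sketch, all tool names and the fallback table are invented. The fixed pipeline hard-codes its steps and simply fails when retrieval breaks. The agent loop instead hands the failure to a planner that rewrites the remaining steps. Memory and multi-agent delegation are left out to keep it short.

```python
from typing import Callable, Dict, List

def search_docs(query: str) -> str:
    raise LookupError("vector index unavailable")  # simulated outage

def search_web(query: str) -> str:
    return f"web results for {query!r}"

def summarize(text: str) -> str:
    return f"summary of {text}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "search_docs": search_docs,
    "search_web": search_web,
    "summarize": summarize,
}

def fixed_pipeline(goal: str) -> str:
    # Automation with an LLM inside: the steps are hard-coded, so a failure just propagates.
    return summarize(search_docs(goal))

def replan(failed: str, remaining: List[str]) -> List[str]:
    # Hypothetical planner: swap a failed tool for an alternative, keep the rest of the plan.
    alternatives = {"search_docs": "search_web"}
    if failed not in alternatives:
        raise RuntimeError(f"no way around failed step {failed!r}")
    return [alternatives[failed]] + remaining

def agent(goal: str, plan: List[str]) -> str:
    data, steps = goal, list(plan)
    while steps:
        tool = steps.pop(0)
        try:
            data = TOOLS[tool](data)
        except LookupError:
            steps = replan(tool, steps)  # the plan changes mid-execution
    return data

print(agent("agent definitions", ["search_docs", "summarize"]))
```

In a real agent the planner would be the model itself rather than a lookup table. The sketch only shows where the decision to change course lives.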

Dr. Sambit Praharaj retweeted

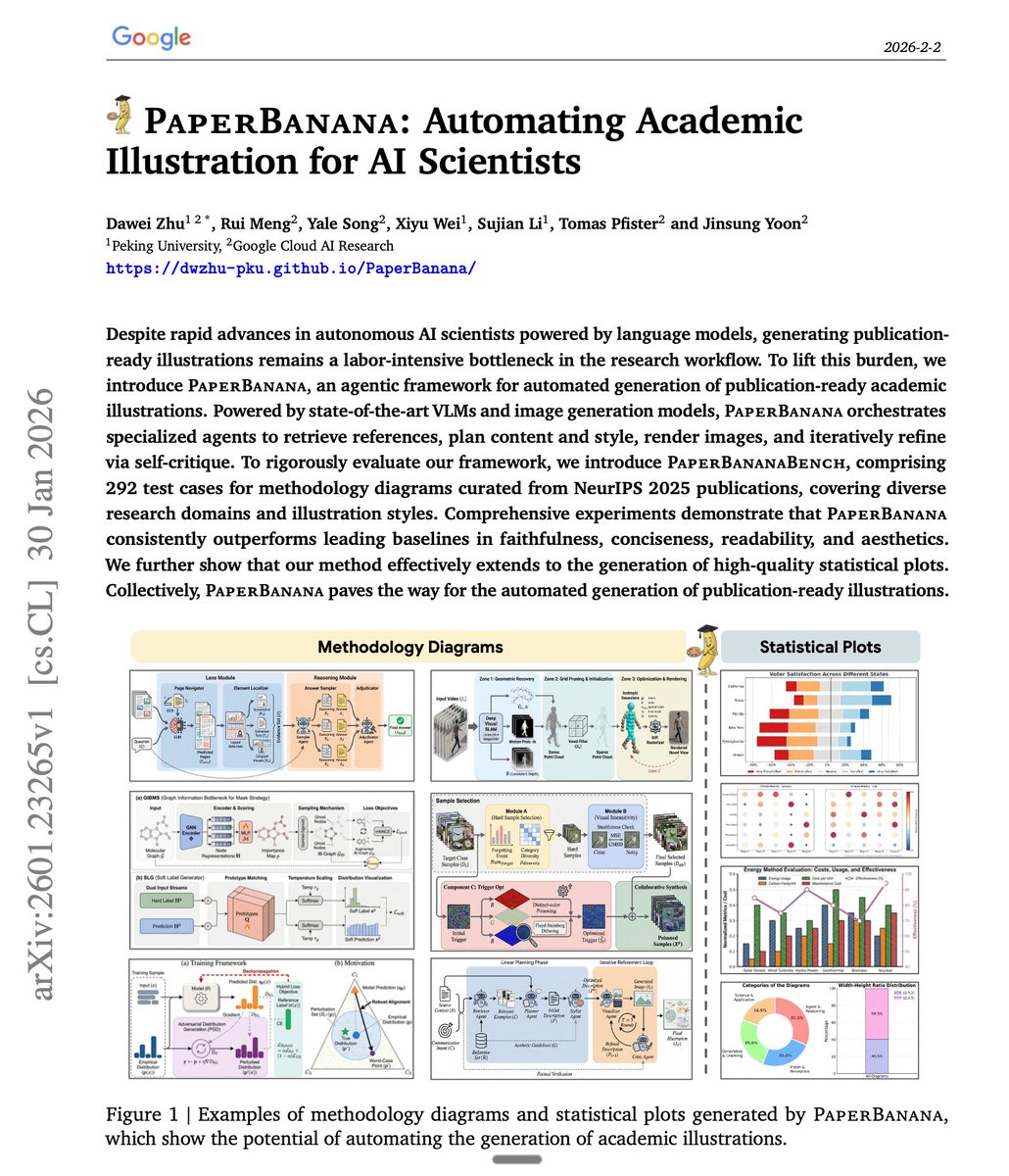

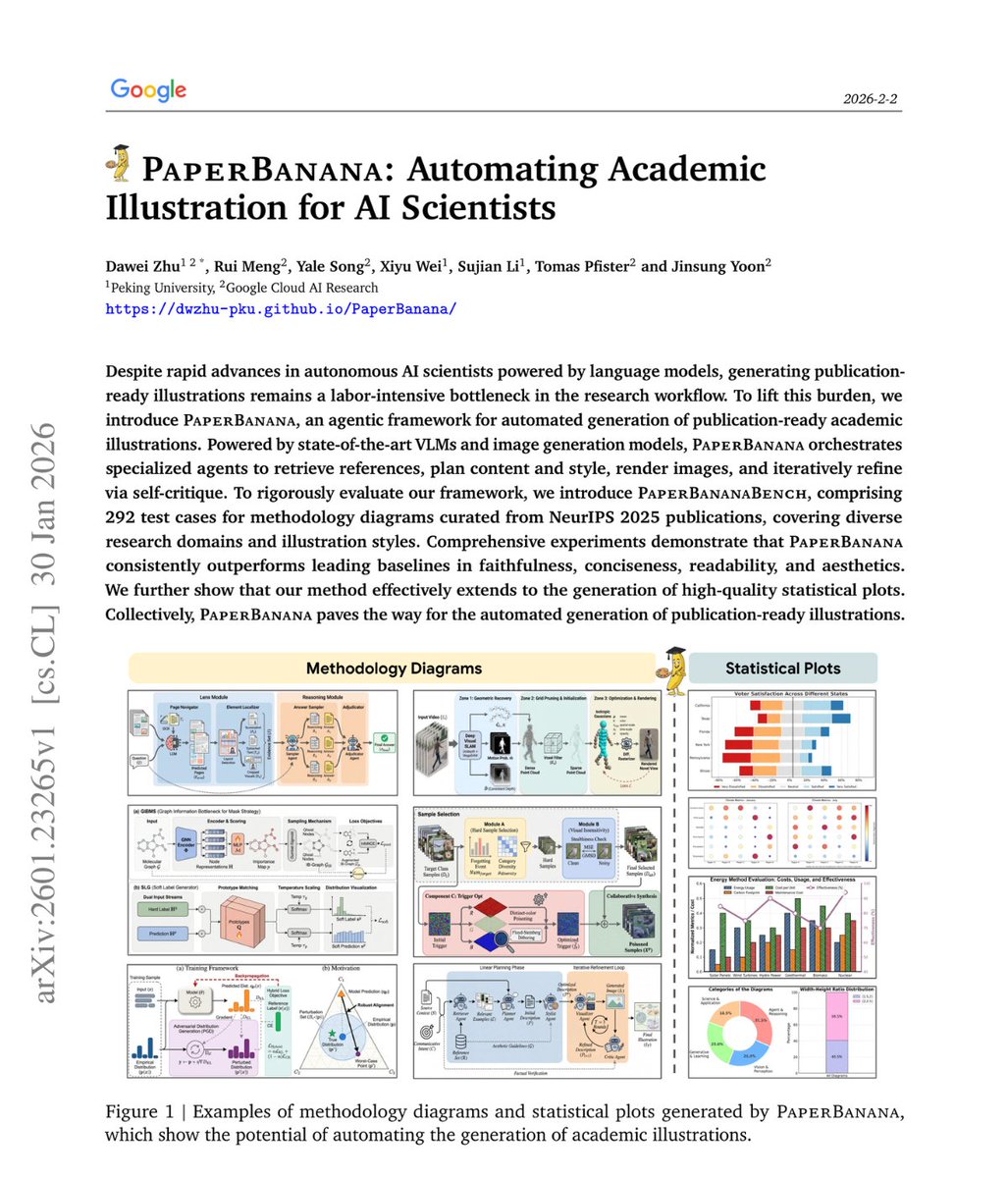

🚨BREAKING: Google just dropped another hit!

It's called PaperBanana and it generates publication-ready academic illustrations from just your methodology text.

No Figma. No manual design. No illustration skills needed.

Here's how it works:

A team of AI agents runs behind the scenes:

→ One finds good diagram examples

→ One plans the structure

→ One styles the layout

→ One generates the image

→ One critiques and improves it
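The five-role pipeline can be sketched as plain functions with a critique loop. Everything here is an assumption for illustration, not PaperBanana's implementation. The methodology is written as `A -> B -> C` and the "image" is a text rendering. The hypothetical `flaky_generator` deliberately drops a box on its first draft so the critic has something to catch.

```python
from typing import Callable, Dict, List, Optional, Tuple

Layout = Dict[str, List[str]]

def find_examples(method_text: str) -> List[str]:
    # Example-finding role. Per the thread, random good examples work nearly as well
    # as topically matched ones, so this stub ignores the input entirely.
    return ["flow left to right", "one arrow per dependency"]

def plan_structure(method_text: str) -> List[str]:
    # Planning role: read "A -> B -> C" as an ordered list of components.
    return [part.strip() for part in method_text.split("->") if part.strip()]

def style_layout(components: List[str], examples: List[str]) -> Layout:
    # Styling role: attach the style notes taken from the examples.
    return {"components": components, "style": examples}

def critique(render: str, components: List[str]) -> Optional[str]:
    # Critic role: flag any planned component missing from the rendered figure.
    missing = [c for c in components if c not in render]
    return f"missing: {', '.join(missing)}" if missing else None

def make_figure(
    method_text: str,
    generate: Callable[[Layout, Optional[str]], str],
    max_rounds: int = 3,
) -> Tuple[str, int]:
    layout = style_layout(plan_structure(method_text), find_examples(method_text))
    feedback: Optional[str] = None
    render, round_no = "", 0
    for round_no in range(1, max_rounds + 1):
        render = generate(layout, feedback)                # generation role
        feedback = critique(render, layout["components"])  # critique role
        if feedback is None:
            break
    return render, round_no

def flaky_generator(layout: Layout, feedback: Optional[str]) -> str:
    # Hypothetical image model: its first draft drops the last box; the revision fixes it.
    boxes = layout["components"] if feedback else layout["components"][:-1]
    return " -> ".join(f"[{box}]" for box in boxes)

print(make_figure("Encoder -> Retriever -> Decoder", flaky_generator))
```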

Here's the wildest part:

Random reference examples work nearly as well as perfectly matched ones. What matters is showing the model what good diagrams look like, not finding the topically perfect reference.

In blind evaluations, humans preferred PaperBanana outputs 75% of the time.

This is the recursion we've been waiting for: AI systems that can fully document themselves visually.

Waitlist’s open. Link in the first comment.