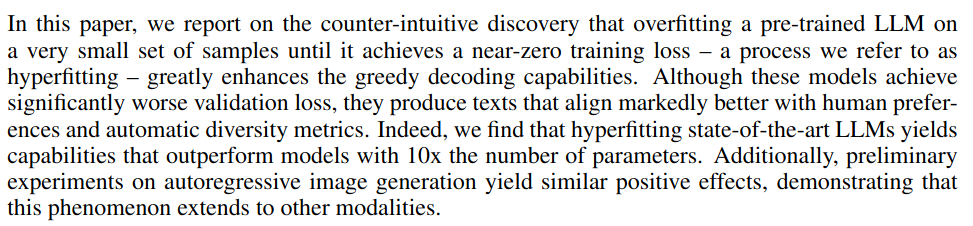

Scott Tyler (@ScienceScottT)

Developing new single-cell omics methods and bench-validating all the hypotheses those techniques give us. Opinions expressed are my own.

I have been arguing that we need to go back to basics. The pressure for hypercomplex papers is killing the very fabric of the life sciences. Papers with a bazillion figures, graphs, tables, half-cooked omics and so-called deep mechanistic insights (that are seldom reproducible or even meaningful) are hurting real progress. Who can honestly vouch for individual figures anymore? The academic publishing system in its current form has outlived its usefulness. The problem with the current crisis? There are too many forces supporting the status quo…

One of the remarkable things for me about NeurIPS this year was how quickly the entire AI for Biology community has gone all-in on biological foundation models. Virtual cell models will enable us to predict how cell states will change in response to chemical perturbations. Protein language models will enable us to identify better enzymes for degrading plastics, and so on. Everyone wants bigger data on more things to throw into bigger models. These models are going to be awesome, but real biological discoveries look somewhat different. Contrast these dreams of foundation models with the latest tables of contents from Science or Nature:

- “A long noncoding eRNA forms R-loops to shape emotional experience–induced behavioral adaptation”: The authors identified a lncRNA in mice that is expressed in response to neuronal activity and that modulates the 3D structure of chromatin, thereby activating genes involved in neuronal plasticity. They further showed that this lncRNA is essential for certain forms of learning.
- “Cancer cells impair monocyte-mediated T cell stimulation to evade immunity”: The authors found that mouse melanoma cells secrete a lipid metabolite that prevents monocytes from activating CD8+ T cells.
- “Postsynaptic competition between calcineurin and PKA regulates mammalian sleep–wake cycles”: By generating mouse knockout lines, the authors identified phosphatases and kinases that are critical for regulating the sleep-wake cycle, and showed that they act through regulation of proteins at excitatory postsynaptic sites.

I struggle to imagine how any of these discoveries could fall out of a multimodal biology foundation model. This is not intended to be a straw-man argument. Granted, a foundation model could potentially identify the lncRNA from the first paper, but I am not sure how such a model would associate it with chromatin remodeling.
A multimodal foundation model with enough data could also potentially identify metabolic changes associated with melanoma cells subjected to certain kinds of treatments, but I don’t see how that foundation model could identify the effect of those metabolites in preventing CD8+ T cell activation. Indeed, I do not think that any of the foundation models being developed today would be capable of generating rich new biological insights of the kind described in these papers. And yet, these are the kinds of insights that new therapies are made from. The issue, I think, is that machine learning models work extremely well on structured data, and so all the foundation models being built are highly structured. Take a protein sequence as input and produce a protein sequence as output. Take a cell state and a chemical perturbation as input and produce a new cell state as output. Biology, however, is poorly structured. The lncRNA insight is a case in point: what structured representation can we use for the action of the lncRNA in modulating chromatin architecture? Protein models cannot represent it; DNA models cannot represent it; virtual cell models cannot represent it. Perhaps a model that incorporates RNA expression and 3D genome state could represent it, but then how would that model represent the lipid modulation of the monocytes? I worry that every discovery may need its own representation space. Indeed, the nature of biology is such that there is likely no representation, short of an atomic-resolution real-space model of the entire organism, that is sufficient to represent the diversity of biological phenomena relevant to disease. Except, of course, for natural language, which evolved to represent all concepts that humans are capable of contemplating.
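To make the structured-versus-unstructured point concrete, here is a minimal Python sketch of the two input/output contracts the post describes: sequence in, sequence out; cell state plus perturbation in, cell state out. Every name here (`CellState`, `Perturbation`, `ProteinModel`, `VirtualCellModel`, `toy_virtual_cell`, `Finding`) and the toy dose response are hypothetical illustrations, not the interface of any real foundation model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical, deliberately simplified types; not any real model's API.
ProteinSequence = str  # one-letter amino-acid codes


@dataclass(frozen=True)
class CellState:
    expression: dict[str, float]  # gene symbol -> expression level


@dataclass(frozen=True)
class Perturbation:
    compound: str   # e.g. a compound name or SMILES string
    dose_um: float  # dose in micromolar


# A protein language model: sequence in, sequence out.
ProteinModel = Callable[[ProteinSequence], ProteinSequence]

# A virtual cell model: (cell state, perturbation) in, new cell state out.
VirtualCellModel = Callable[[CellState, Perturbation], CellState]


def toy_virtual_cell(state: CellState, p: Perturbation) -> CellState:
    """Placeholder 'model': scales every gene by a made-up dose response."""
    factor = 1.0 / (1.0 + p.dose_um)
    return CellState({g: v * factor for g, v in state.expression.items()})


# A finding such as "an activity-induced lncRNA remodels chromatin and is
# required for learning" fits neither signature above; the only slot that
# can hold it as stated is free text.
Finding = str
```

For example, `toy_virtual_cell(CellState({"GeneA": 2.0}), Perturbation("compound-x", 1.0))` returns `CellState({"GeneA": 1.0})`. The fixed types are what make such models trainable at scale, and they are also exactly what leaves no room for the kinds of findings listed in the post.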
Indeed, I think natural language has an essential role to play in representing biology, and is ultimately unavoidable, insofar as it is the only medium we know of that is sufficiently structured for machine learning and sufficiently flexible to represent the full diversity of biological concepts. At FutureHouse, we work on language agents, which is one way of combining language and biology, but this is not the only way. Models that combine natural language with protein, DNA, transcriptomics, and so on will also be extremely productive, provided the addition of the structured datatypes does not restrict their ability to represent unstructured concepts. However we do it, I think this essential role of natural language in representing biology is currently largely underappreciated. The history of biology is built on tools that we have found in nature to study biological phenomena. As all biologists know, trying to engineer things from scratch (almost) never works; what works is finding things in nature and repurposing them. It would be aesthetically pleasing if it turned out that our engineered representations were yet again insufficient for studying biology, and that natural language was simply another such tool found in nature that we must apply instead.

Length biases in single-cell RNA sequencing of pre-mRNA. Check out this research by @lpachter & @GorinGennady in @BiophysReports #CellBio2024 hubs.li/Q02_LC2n0

Furthermore, since Caltech subsidizes the plans by different amounts, Caltech also pays an extra $1,865.28 annually for each staff member who chooses PPO 1800 over PPO 3000.

Relationship between chromosomes (orange) and the mitotic spindle (green) in four stages of cell division, as seen by Bessel beam plane structured illumination microscopy cell.com/fulltext/S0092…

My new investigation for @newsfromscience: Did a top @NIH official manipulate Alzheimer's/Parkinson’s research for decades? Neuroscientist Eliezer Masliah was found to have engaged in scientific misconduct; 132 of his papers fall under suspicion science.org/content/articl…