We're sharing a new method for scoring models on agentic coding tasks. Here's how models in Cursor compare on intelligence and efficiency:

Naman Jain

@StringChaos

Research @cursor_ai | CursorBench, LiveCodeBench, DeepSWE, R2E-Gym, GSO, LMArena Coding | Past: @UCBerkeley @MetaAI @AWS @MSFTResearch @iitbombay

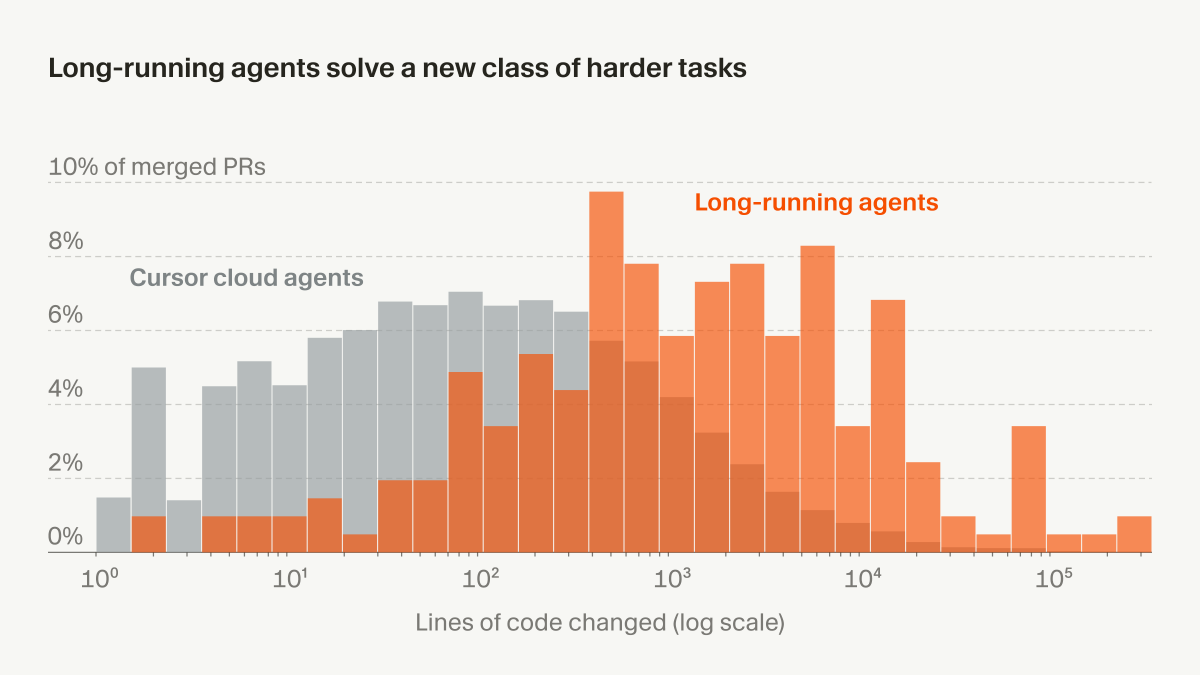

Composer 2 is now available in Cursor.

GPT-5.2 Codex is now available in Cursor! We believe it's the frontier model for long-running tasks.

Cursor's agent now uses dynamic context for all models. It's more intelligent about how context is filled while maintaining the same quality. This reduces total tokens by 46.9% when using multiple MCP servers.

The new Codex model is available in Cursor! It's free to use until December 11th. We worked with OpenAI to optimize Cursor's agent harness for the model. cursor.com/blog/codex-mod…

Tests certify functional behavior; they don’t judge intent. GSO, our code optimization benchmark, now combines tests with a rubric-driven HackDetector to identify models that game the benchmark. We found that up to 30% of a model’s attempts are non-idiomatic reward hacks, which are not caught by correctness tests!