Jason Dreyzehner

@bitjson

Software security, markets, and bitcoin cash. Working on $BCH and @Bitauth, previously @BitPay. Lead maintainer @ChaingraphCash, @Libauth, and @BitauthIDE.

@doodlestein Continuous cleanrooming?

GPT-5.4 Thinking and GPT-5.4 Pro are rolling out now in ChatGPT. GPT-5.4 is also now available in the API and Codex. GPT-5.4 brings our advances in reasoning, coding, and agentic workflows into one frontier model.

We are pleased to share that using Gauss, we have completed a ~200K LOC formalization of Maryna Viazovska’s 2022 Fields Medal theorems on optimal sphere packing in dimensions 8 and 24. This is the only Fields Medal-winning result from this century to be completely formalized, and is the largest single-purpose Lean formalization in history. We are honored to have assisted @SidharthHarihar1 and the rest of the sphere packing team in this achievement. math.inc/sphere-packing

Plus:

1) A next-level safe version of SQLite that natively supports multiple concurrent writers using a similar approach to what Postgres uses (this is like 2-3 days from completion already). FrankenSQLite.

2) A from-scratch, radically innovative and safe JS engine and Node/Bun replacement. FrankenEngine and FrankenNode.

3) Next-level safe Rust versions of NumPy, SciPy, Pandas, NetworkX, JAX, and PyTorch. Faster, better, and all memory safe. Franken

Highlights (yet again) why sound money should use proof-of-work consensus: better real-world resilience than uptime-reliant, proof-of-stake systems. These kinds of existential risks should inform layer-1 finality speeds, too. Networks with few-second or sub-second finality are often trading systemic soundness for developer convenience.

Network-Centralized Fast Finality

Making layer-1 finality "fast" is very convenient for developers. Wallets and DeFi applications can often get away with relying on network-centralized fast finality to offer fast-enough payment experiences, decide user action ordering, minimize protocol-specific and/or off-chain communication, handle disputes, etc.

However, centralizing (in the single-point-of-failure sense) fast finality makes it load-bearing: blips in layer-1 finality become – at best – global downtime for the whole network.

If it's bad enough (e.g. Carrington Event) – and a decentralized network doesn't have the objectivity of proof-of-work to reassemble consensus among surviving infrastructure (esp. for >1/3 losses) – restoring a single network may be very slow, political, or even impossible.

Add in slashing, ongoing DeFi activity, variable rate inflation/issuance, likely attempts to reverse confiscatory recovery mechanisms like ETH's inactivity leak (consider the aftermath of the DAO hack), and an ecosystem of competing economic actors choosing between surviving chain(s), and the issue is no longer about downtime: who-keeps-what is substantially in question.

Decentralized ("Edge") Fast Finality

Contrast with decentralized fast-finality options – systems where the fastest finality is at the "edge" of the network between subsets of users: payment channels, Lightning Network, Chaumian eCash, zero-confirmation escrows (ZCEs), etc.

Decentralized fast finality systems only rely on L1 consensus over longer timescales – even days, weeks, or months – to arbitrate contract-based fast finality. E.g. two wallets with a simple payment channel can make thousands of payments back-and-forth, offline, with instant assurance that each payment is as final as the channel itself.

In fact, decentralized fast finality can offer faster user experiences than are possible with network-centralized fast finality. Even for networks boasting "sub-second finality", real applications must still handle the additional real-world delay of global consensus. With impossibly-perfect relay in low-earth orbit, light-speed Earth round-trip time is still at least ~130ms – noticeable even among human users. On the other hand, given a payment channel with sufficient finality, receivers can immediately consider a valid payment to be final, too – without further communication.

Depending on the specific use case and parameters, decentralized fast finality can even survive substantial outages and splits in the L1 consensus (esp. on ASERT PoW chains like BCH). Days or weeks later, the channel can be settled on L1, with configurable monitoring requirements, adjudication policies, etc. as selected by app developers for specific use cases. (ZCE-based constructions take these properties further by enabling more capital-efficient setups.)

Most importantly, long-term holdings are never jeopardized by the fast finality layer. Even in extreme global catastrophes, only users who have opted-in to specific fast-finality systems bear greater risk of payment fraud, and only with the configuration and value limits they choose.

While long-term holders of proof-of-stake assets bear the risk of being slashed due to technical failures – or gradual dilution if they don't stake their holdings – long term proof-of-work asset holders can safely sit on their keys and do nothing.

Aside: faster block times

Note: a network can have both relatively-fast blocks and gradual, resilient finality. E.g. a 1-minute block time target with few-hour finality: In day-to-day usage, 1-min blocks are fast enough to offer valuable initial assurance (yet slow enough to reduce competing blocks), while consensus finality remains slow enough (hours) to avoid partitions, even under extreme global conditions: even very sporadic, low-bandwidth connectivity heals the network.

Summary

In a variety of disaster scenarios, decentralized fast finality solutions can continue to work, while network-centralized fast finality breaks down or even jeopardizes the underlying network's monetary soundness. If any digital assets are to weather a Carrington Event-level catastrophe, proof-of-work systems with gradual L1 finality and decentralized fast finality have the best shot.
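
The ~130ms light-speed figure cited in the post can be sanity-checked with a few lines of arithmetic. This is only a rough sketch under stated assumptions, not the author's own calculation: it treats Earth as a sphere with its mean radius, assumes a signal travels at the vacuum speed of light along a circular arc to the antipode and back, and ignores the up/down legs to a satellite. The function name and the 550 km altitude are hypothetical, chosen for illustration.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458  # vacuum speed of light (exact by definition)
EARTH_MEAN_RADIUS_KM = 6_371.0     # assumption: spherical Earth, mean radius


def antipodal_round_trip_ms(altitude_km: float = 0.0) -> float:
    """Light-speed round trip (ms) to the antipode and back.

    The path is idealized as a half-circle at the given altitude in each
    direction; routing, processing, and ground-to-orbit legs are ignored.
    """
    one_way_km = math.pi * (EARTH_MEAN_RADIUS_KM + altitude_km)
    return 2 * one_way_km / SPEED_OF_LIGHT_KM_S * 1000


# Lower bound at the surface, and at a hypothetical 550 km relay altitude.
surface_ms = antipodal_round_trip_ms()
leo_ms = antipodal_round_trip_ms(altitude_km=550.0)
print(f"surface: {surface_ms:.1f} ms, 550 km altitude: {leo_ms:.1f} ms")
```

Under these assumptions the surface-level bound comes out a little above 130ms and the orbital path somewhat higher, consistent with the "at least ~130ms" claim. Real networks add routing and processing delay on top of this physical floor.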

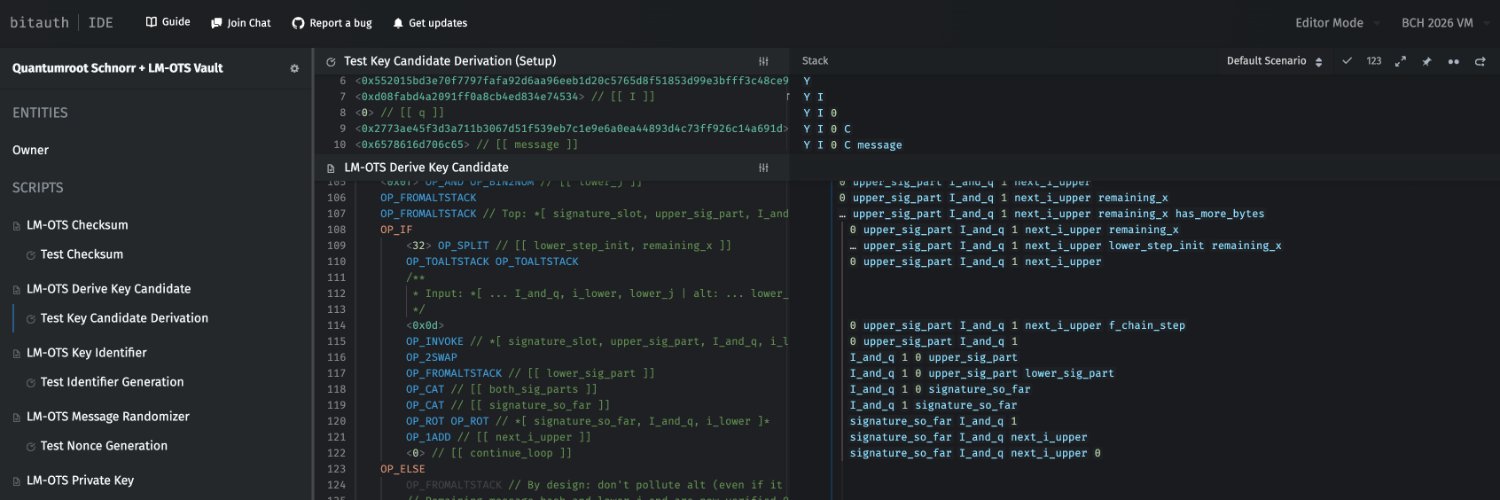

@BitauthIDE Endgame: Bitcoin Cash can consistently match or outperform "privacy coins" and other use-case-specific networks in the long term – on both transaction sizes and overall user experience. x.com/bitjson/status…

I think it must be a very interesting time to be in programming languages and formal methods because LLMs change the whole constraints landscape of software completely. Hints of this can already be seen, e.g. in the rising momentum behind porting C to Rust or the growing interest in upgrading legacy code bases in COBOL, etc. In particular, LLMs are *especially* good at translation compared to de-novo generation because the original code base acts 1) as a kind of highly detailed prompt, and 2) as a reference to write concrete tests against. That said, even Rust is nowhere near optimal for LLMs as a target language. What kind of language is optimal? What concessions (if any) are still carved out for humans? Incredibly interesting new questions and opportunities. It feels likely that we'll end up re-writing large fractions of all software ever written many times over.

@maskedmaxi It's probably worth getting just a little bit of BCH just in case. We've been doing a lot over the past few years, and the pieces are coming into place nicely. Just need a few more good products and maybe some catalyst. The upside potential is massive, like buying BTC in 2013.