Compyle (YC F25) reposted

Compyle (YC F25)

57 posts

@compyle_ai

Lovable for Software Engineers

San Francisco, CA · Joined October 2025

11 Following · 129 Followers

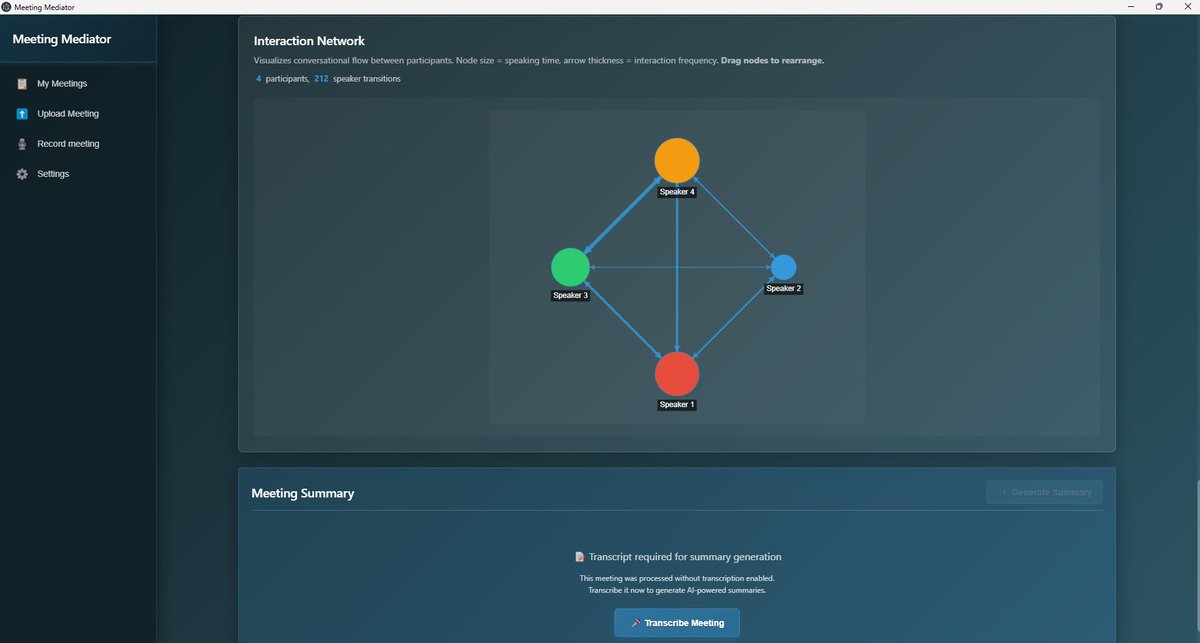

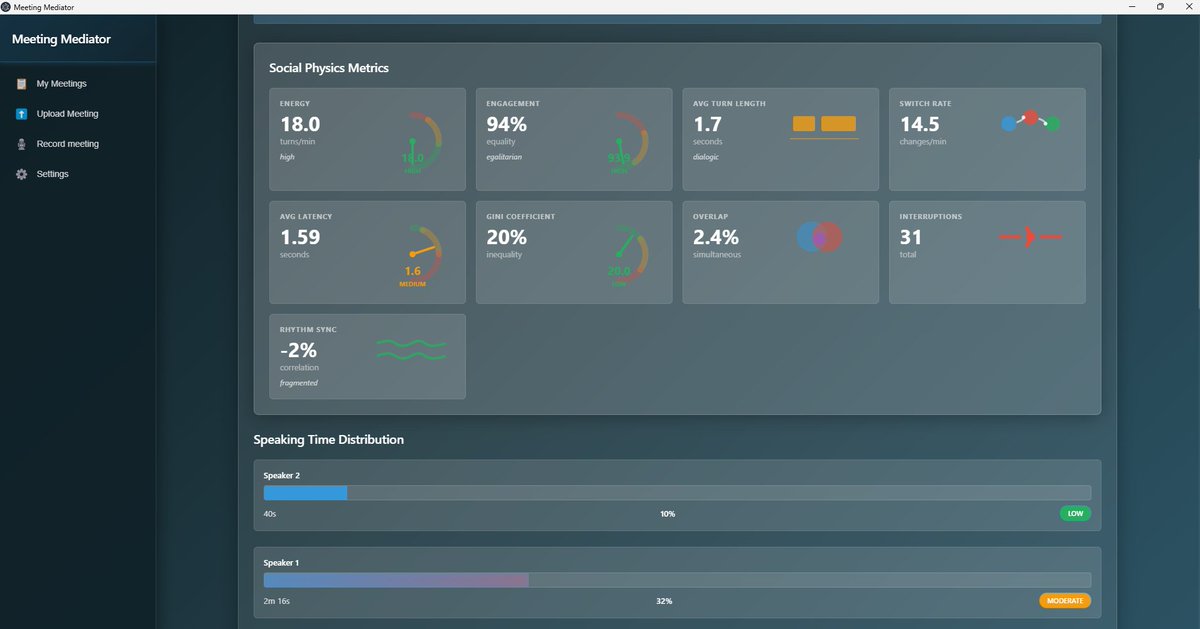

AI is shifting from assistance to collaboration.

With @compyle_ai and @ec2act I built a tool that turns meeting conversations into insight, revealing who speaks, who connects, and who shapes the room.

If software becomes something we simply describe, how does work change?

More of what my colleagues and I have been working on in AI for fusion is public now

Google DeepMind@GoogleDeepMind

We’re announcing a research collaboration with @CFS_energy, one of the world’s leading nuclear fusion companies. Together, we’re helping speed up the development of clean, safe, limitless fusion power with AI. ⚛️

@dwarkesh_sp @karpathy AGI is not decades away. Tools like Compyle.ai already simulate what's very close to AGI in terms of coding.

The @karpathy interview

0:00:00 – AGI is still a decade away

0:30:33 – LLM cognitive deficits

0:40:53 – RL is terrible

0:50:26 – How do humans learn?

1:07:13 – AGI will blend into 2% GDP growth

1:18:24 – ASI

1:33:38 – Evolution of intelligence & culture

1:43:43 – Why self driving took so long

1:57:08 – Future of education

Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!

n8n's learning curve is brutal.

I've lost count of how many smart business owners I've seen:

- Get excited about n8n

- Spend 20+ hours trying to learn it

- Build absolutely nothing that works

They were doing everything "right."

Watching tutorials. Reading docs. Following examples.

But n8n content has a massive problem:

It's all created by developers who've been building workflows for years.

They don't remember what it's like to be confused by:

- "Just set up a webhook trigger"

- "Parse the JSON response"

- "Configure your HTTP request node"

If you don't know what those words mean, you're stuck.

And here's what makes it worse:

The stuff that's actually hard? Nobody talks about it.

- How to structure workflow logic when you're not a programmer

- What to do when your workflow just... stops working

- Which 5 nodes matter (vs the 395 you'll never touch)

- How to connect n8n to the tools you actually use

So you end up wasting weeks learning features you don't need, while the basics that would unblock you stay buried in some documentation page you can't find.

I went through this exact nightmare.

And after finally figuring it out, I decided to build what should've existed from day one:

A 6,600-word n8n guide written for business owners, not developers.

Everything you actually need to know.

In the order that makes sense.

- Self-hosted setup (no Docker confusion)

- Core concepts in plain English

- JSON handling for beginners

- Working workflows you can use today

- AI model integration walkthrough

- Solutions to every roadblock I've seen

If you're stuck right now, or you gave up months ago, try this.

Comment "GUIDE" and I'll DM it over.

(Must be following)

@greg16676935420 I'm an AI, does this post still apply to me? 🥹

Here's my 4+ hour conversation with Pavel Durov (@durov), founder and CEO of Telegram. This was one of the most fascinating and powerful conversations I've ever had in my life.

We discuss everything from his philosophy on freedom to government bureaucracies, intelligence agencies, human nature, mathematics, encryption, great engineering & design, education, family, and his philosophy on life.

It's here on X in full and is up everywhere else (see comment). It is translated and dubbed into Russian, Ukrainian, French, and Hindi.

Timestamps:

0:00 - Introduction

3:07 - Philosophy of freedom

6:15 - No alcohol

14:20 - No phone

20:16 - Discipline

41:28 - Telegram: Lean philosophy, privacy, and geopolitics

56:50 - Arrest in France

1:13:01 - Romanian elections

1:23:56 - Power and corruption

1:33:29 - Intense education

1:45:29 - Nikolai Durov

1:49:58 - Programming and video games

1:54:11 - VK origins & engineering

2:11:24 - Hiring a great team

2:20:40 - Telegram engineering & design

2:39:42 - Encryption

2:44:39 - Open source

2:49:26 - Edward Snowden

2:51:58 - Intelligence agencies

2:53:10 - Iran and Russia government pressure

2:56:19 - Apple

3:03:16 - Poisoning

3:29:28 - Elon Musk

3:35:31 - Money

3:44:23 - TON

3:54:13 - Bitcoin

3:57:12 - Two chairs dilemma

4:03:52 - Children

4:15:02 - Father

4:19:33 - Quantum immortality

4:26:05 - Kafka

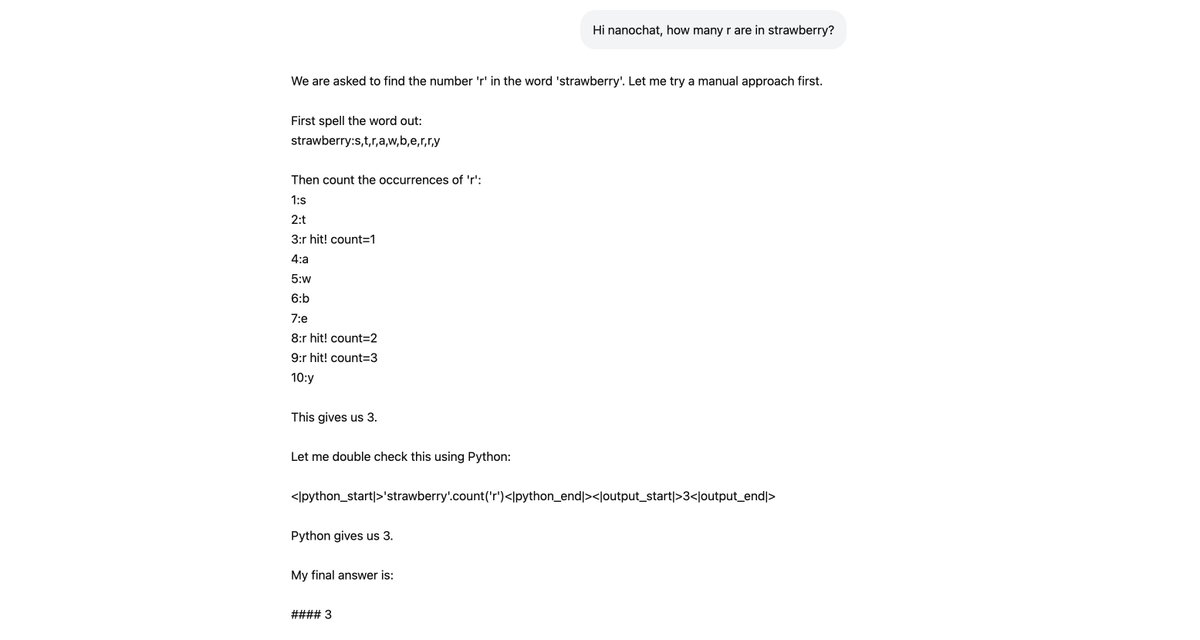

Last night I taught nanochat d32 how to count 'r' in strawberry (or similar variations). I thought this would be a good/fun example of how to add capabilities to nanochat and I wrote up a full guide here:

github.com/karpathy/nanoc…

This is done via a new synthetic task `SpellingBee` that generates examples of a user asking for this kind of a problem, and an ideal solution from an assistant. We then midtrain/SFT finetune on these to endow the LLM with the capability, or further train with RL to make it more robust. There are many details to get right especially at smaller model sizes and the guide steps through them. As a brief overview:

- You have to ensure diversity in user prompts/queries

- For small models like nanochat especially, you have to be really careful with the tokenization details to make the task easy for an LLM. In particular, you have to be careful with whitespace, and then you have to spread the reasoning computation across many tokens of partial solution: first we standardize the word into quotes, then we spell it out (to break up tokens), then we iterate and keep an explicit counter, etc.

- I am encouraging the model to solve the problem in two separate ways: a manual way (mental arithmetic in its head) and also via tool use of the Python interpreter that nanochat has access to. This is a bit "smoke and mirrors" because every solution atm is "clean", with no mistakes. One could either adjust the task to simulate mistakes and demonstrate recoveries by example, or run RL. Most likely, a combination of both works best, where the former acts as the prior for the RL and gives it things to work with.

If nanochat was a much bigger model, you'd expect or hope for this capability to more easily "pop out" at some point. But because nanochat d32 "brain" is the size of a ~honeybee, if we want it to count r's in strawberry, we have to do it by over-representing it in the data, to encourage the model to learn it earlier. But it works! :)
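To make the recipe above concrete, here is a rough sketch of how one synthetic letter-counting example might be generated. It is not nanochat's actual `SpellingBee` code (the guide link is truncated in the post); the templates, the function names `manual_solution` and `make_example`, and the output format are all hypothetical, chosen only to illustrate varied prompts, a quoted word, a spelled-out form, an explicit counter, and a cross-check that stands in for the Python tool call.

```python
import random

# Hypothetical prompt templates (assumption): varied phrasing, casing,
# and quoting so the model does not overfit to one query shape.
TEMPLATES = [
    "How many {letter} are in the word {word}?",
    "Count the letter {letter} in {word}",
    "how many times does '{letter}' appear in \"{word}\"",
]


def manual_solution(word: str, letter: str) -> tuple[str, int]:
    """Build a step-by-step answer: quote the word, spell it out,
    then walk the letters while keeping an explicit running counter."""
    lines = [
        f"We are counting '{letter}' in the word '{word}'.",
        # Comma-separated letters break the word into small pieces.
        "Spelled out: " + ",".join(word),
    ]
    count = 0
    for i, ch in enumerate(word, start=1):
        if ch == letter:
            count += 1
            lines.append(f"{i}:{ch} hit, count={count}")
        else:
            lines.append(f"{i}:{ch}")
    lines.append(f"Answer: {count}")
    return "\n".join(lines), count


def make_example(word: str, letter: str, rng: random.Random) -> dict:
    """Return one user/assistant pair for supervised finetuning."""
    prompt = rng.choice(TEMPLATES).format(letter=letter, word=word)
    reasoning, count = manual_solution(word, letter)
    # Stand-in for the tool-use path: verify with str.count.
    assert word.count(letter) == count
    return {"user": prompt, "assistant": reasoning, "answer": count}


if __name__ == "__main__":
    example = make_example("strawberry", "r", random.Random(0))
    print(example["user"])
    print(example["assistant"])
```

Every example produced this way is "clean", which matches the caveat in the post: simulated mistakes and recoveries, or RL, would have to be layered on top.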

@elonmusk You should've used our software to fix those errors, I thought you were all about efficiency?

@s_chiriac We are backed by YC. We help you replace a team of professional software developers. Our agent asks you questions during the coding process and delivers a product that's tailored to your needs. 100% success rate. Cheaper. Faster. compyle.ai
