David Stap

@davidstap

ai research @nx_ai_com | phd from @UvA_Amsterdam | prev @Amazon

Our work “Can LLMs Really Learn to Translate a Low-Resource Language from One Grammar Book?” is now on arXiv! arxiv.org/abs/2409.19151 - in collaboration with @davidstap, @diwuNLP, @c_monz, and Khalil Sima'an from @illc_amsterdam and @ltl_uva 🧵

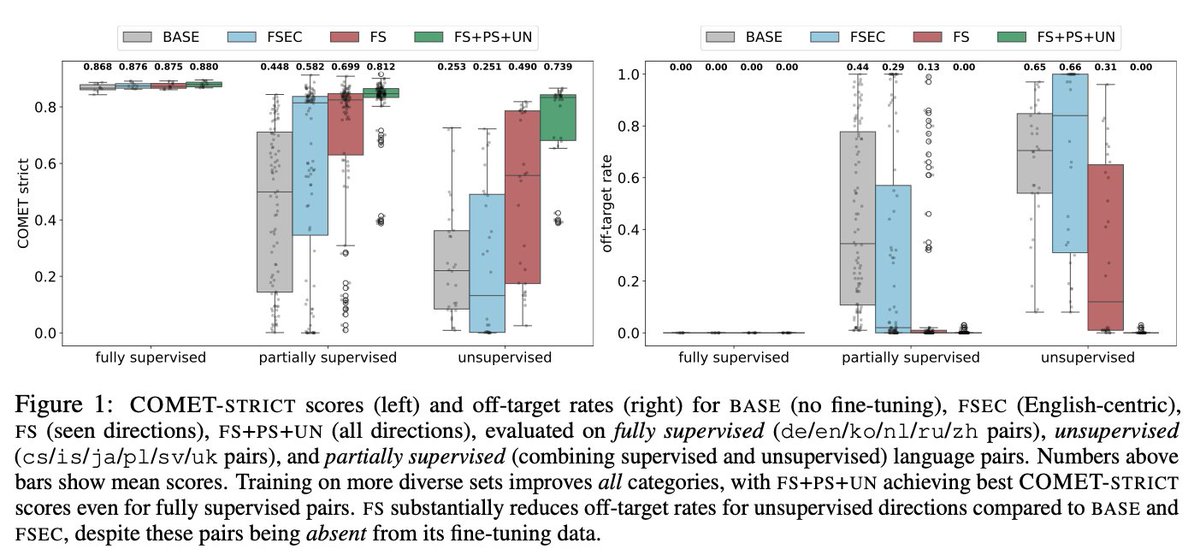

Today we release the first EuroLLM paper and models: EuroLLM-1.7B and EuroLLM-1.7B-Instruct! The EuroLLM project will develop open-weight multilingual LLMs that understand and generate text in all official EU languages. Stay tuned for the bigger and stronger EuroLLMs (9B, 22B)!

We are looking for reviewers to join our program committee and help prepare high-quality reviews for paper submissions. If you're interested, please fill out this form: forms.gle/VVYbYnjKBJrAGb…