Hadas Zeilberger

@idocryptography

Cryptography PhD student @YaleACL, https://t.co/PTQFUs4The bluesky: @hadaszeilberger.bsky.social

Ritual is a lab for autonomous intelligence. The thesis is organized around what durable machine agency actually requires: emancipation from human control, strong privacy, mech design for compute markets, and consensus rules that can schedule and resurrect agents when they die.

🚨 SAM ALTMAN: “People talk about how much energy it takes to train an AI model … But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”

Some thoughts on AI and mathematics, inspired by "First Proof."

Geoffrey Hinton says mathematics is a closed system, so AIs can play it like a game. They can pose problems to themselves, test proofs, and learn from what works, without relying on human examples. “I think AI will get much better at mathematics than people, maybe in the next 10 years or so.”

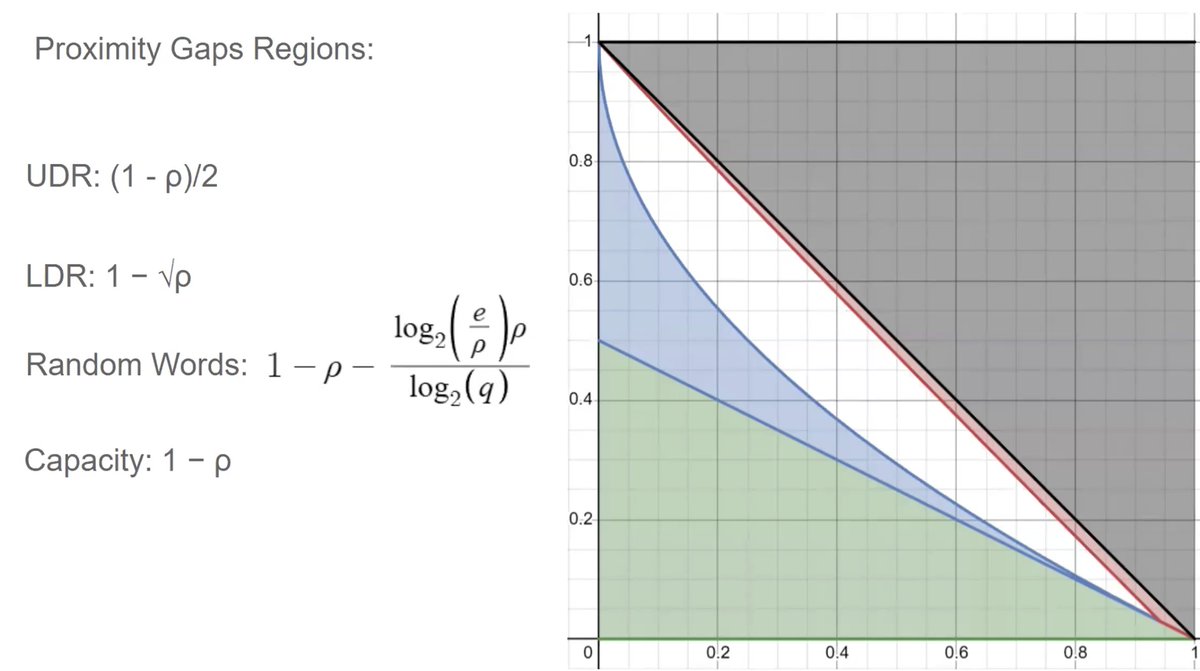

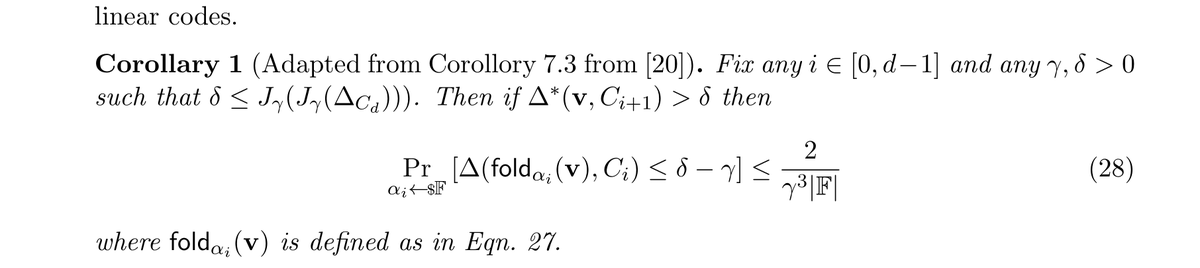

With the recent flurry of excitement around proximity gaps, it's easy to forget what they’re actually used for: building polynomial commitment schemes (PCS), which are a key backbone of SNARKs. In this new work with the wonderful @benediktbuenz, @GiacomoFenzi, and @kleptographic, we construct a new PCS based on tensor codes and code-switching that is very close to optimal. ia.cr/2025/2065