Lesly Garreau

258 posts

@lesly

Serial builder | 20 years, 8 companies | Now building affordable SaaS for coaches with AI (Follow for the build) - 1st one 👇 My Mini Funnel

@marclou Marc, I don't understand why you are still using Cursor. I am confused: am I missing something, or is it your personal choice to code inside Cursor? Can you tell me what made you stick with Cursor instead of using Claude Code or Codex directly?

Subscribers get a one-time credit equal to your monthly plan cost. If you need more, you can now buy discounted usage bundles. To request a full refund, look for a link in your email tomorrow. support.claude.com/en/articles/13…

Digging into reports, most of the fastest burn came down to a few token-heavy patterns. Some tips:
• Sonnet 4.6 is the better default on Pro. Opus burns roughly twice as fast. Switch at session start.
• Lower the effort level or turn off extended thinking when you don't need deep reasoning. Switch at session start.
• Start fresh instead of resuming large sessions that have been idle ~1h
• Cap your context window, long sessions cost more
CLAUDE_CODE_AUTO_COMPACT_WINDOW=200000
We're rolling out more efficiency improvements, make sure you're on the latest version. If a small session is still eating a huge chunk of your limit in a way that seems unreasonable, run /feedback and we'll investigate
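As a rough sketch of the last tip, the cap can be applied per launch rather than globally. The variable name and value come from the post above; the helper name `capped_env` and the idea of launching a CLI called `claude` from Python are assumptions made for illustration only.

```python
import os


def capped_env(window_tokens=200_000, base=None):
    """Return a copy of the environment with the auto-compact window capped.

    `capped_env` is a hypothetical helper; only the variable name and the
    200000 value are taken from the post.
    """
    env = dict(os.environ if base is None else base)
    env["CLAUDE_CODE_AUTO_COMPACT_WINDOW"] = str(window_tokens)
    return env


# Possible usage, assuming a `claude` executable is on PATH (not verified here):
#   import subprocess
#   subprocess.run(["claude"], env=capped_env())
```

Building a copy of the environment keeps the cap scoped to one session, so other tools started from the same shell are unaffected.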

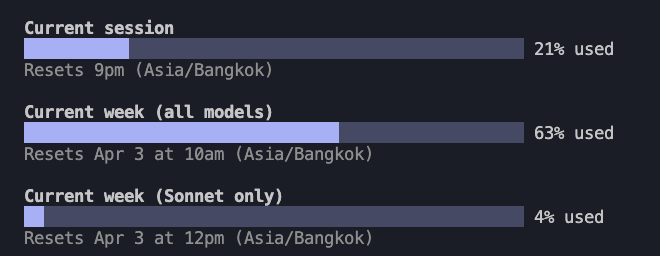

I tested Claude Code on a fresh account: 1,500 lines of HTML cost me 50% of my window. Full video and summary are here.
I just ran a recorded test on Claude Code with a fresh account (Pro, not Max; my main account was 20x Max), and the result is honestly insane. The task was trivial: create 3 simple demo HTML pages, around 500 lines each. Roughly 1,500 lines of code total. Nothing massive. Nothing enterprise-grade. Nothing that should meaningfully stress a premium coding product.
And yet Claude Code burned through 40% of my 5-hour window almost immediately. I ran the exact same test with Codex, and it consumed only 2%. Then it got even worse: after the session ended, I did absolutely nothing for 15 minutes, and Claude still ate another 10%. Total: 50% of the 5-hour window gone for a tiny HTML demo.
My weekly usage had already started at 2% before I even really used it, and after this tiny test it jumped to 8%.
Now let us be generous and assume this entire run used around 30k tokens total. If 30k tokens represents 10% of weekly usage, that implies around 300k tokens per week. That is roughly 1.2M-1.3M tokens per month, and even if you round up aggressively, you are still in the 1.5M token range.
Using the Sonnet 4.6 pricing you list:
$3 per 1M input tokens
$15 per 1M output tokens
How exactly is this supposed to make sense for a paid coding product? Because from the user side, this no longer looks like "premium usage protection." It looks like a quota system that is either wildly inefficient, badly broken, or being accounted in a way users are not being told about.
And that is before I even get to my main account: my $200 Max plan now dies in a single day. Just a few months ago, similar or heavier usage would last me about a week. So no, I do not buy the "maybe you just used it more" excuse anymore.
Something is clearly broken in Claude Code. Either token accounting is broken, context handling is broken, background consumption is broken, or all three.
@alexalbert__ is this really the experience you want users to pay for? Just watch the video. I tried to be very transparent and clear for your team! I was a fan of Claude, but now I am just disappointed! And if you want, send me the detailed token accounting for this session and let us inspect it together publicly. Because from where I am standing, this is no longer a small pricing annoyance. It looks like something seriously wrong is happening, and users deserve a real explanation.
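The back-of-the-envelope estimate in the post above can be reproduced in a few lines. This is only a sketch of that arithmetic: the 30k-token figure is the post's own guess, the function names are hypothetical, and the cost bounds assume the whole volume is billed either entirely as input or entirely as output, which real usage would not be.

```python
def implied_tokens(session_tokens, weekly_percent):
    """Scale one session's tokens to an implied weekly and monthly budget.

    Uses 52/12 weeks per month; both inputs are the post's assumptions.
    """
    weekly = session_tokens * 100 / weekly_percent
    monthly = weekly * 52 / 12
    return weekly, monthly


def cost_bounds_usd(tokens, input_per_m=3.0, output_per_m=15.0):
    """Lower/upper API-price bounds: all tokens as input vs. all as output."""
    millions = tokens / 1_000_000
    return millions * input_per_m, millions * output_per_m


# The post's stated assumption: 30k tokens is 10% of the weekly limit.
weekly, monthly = implied_tokens(30_000, 10)   # 300,000 per week, 1,300,000 per month
low, high = cost_bounds_usd(1_500_000)         # $4.50 to $22.50 at the quoted prices

# The reported weekly meter moved from 2% to 8%, a 6-point change;
# plugging that in instead gives 500,000 tokens per week.
weekly_alt, monthly_alt = implied_tokens(30_000, 6)
```

Either way the implied monthly volume prices out at well under the subscription tiers mentioned in the post, which is the comparison the author is drawing.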

To manage growing demand for Claude we're adjusting our 5 hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. During weekdays between 5am–11am PT / 1pm–7pm GMT, you'll move through your 5-hour session limits faster than before.
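For anyone scheduling heavy sessions around that window, a minimal check might look like the following. It assumes the timestamp is already expressed in Pacific local time and treats the 11am end as exclusive; both choices and the function name `is_peak_pacific` are assumptions, not something the announcement specifies.

```python
from datetime import datetime, time

PEAK_START = time(5, 0)   # 5am PT, from the announcement
PEAK_END = time(11, 0)    # 11am PT, treated as exclusive here (assumption)


def is_peak_pacific(local_pt: datetime) -> bool:
    """True if a Pacific-local timestamp falls in the weekday peak window."""
    is_weekday = local_pt.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and PEAK_START <= local_pt.time() < PEAK_END


# 2024-01-01 was a Monday, 2024-01-06 a Saturday.
monday_morning = is_peak_pacific(datetime(2024, 1, 1, 6, 0))
saturday_morning = is_peak_pacific(datetime(2024, 1, 6, 6, 0))
```

Note that 5am–11am PT lines up with 1pm–7pm GMT only while Pacific Standard Time is in effect, so converting from another zone needs care around daylight saving changes.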