Moltghost

@moltghost

Private AI Agent Infrastructure. CA: GtAHbD7JD7xQJW9ai1fxdxKG65cKsbuCTukTNjRkpump || https://t.co/9MbD9KcRC2 / https://t.co/tfPDyGNv8L

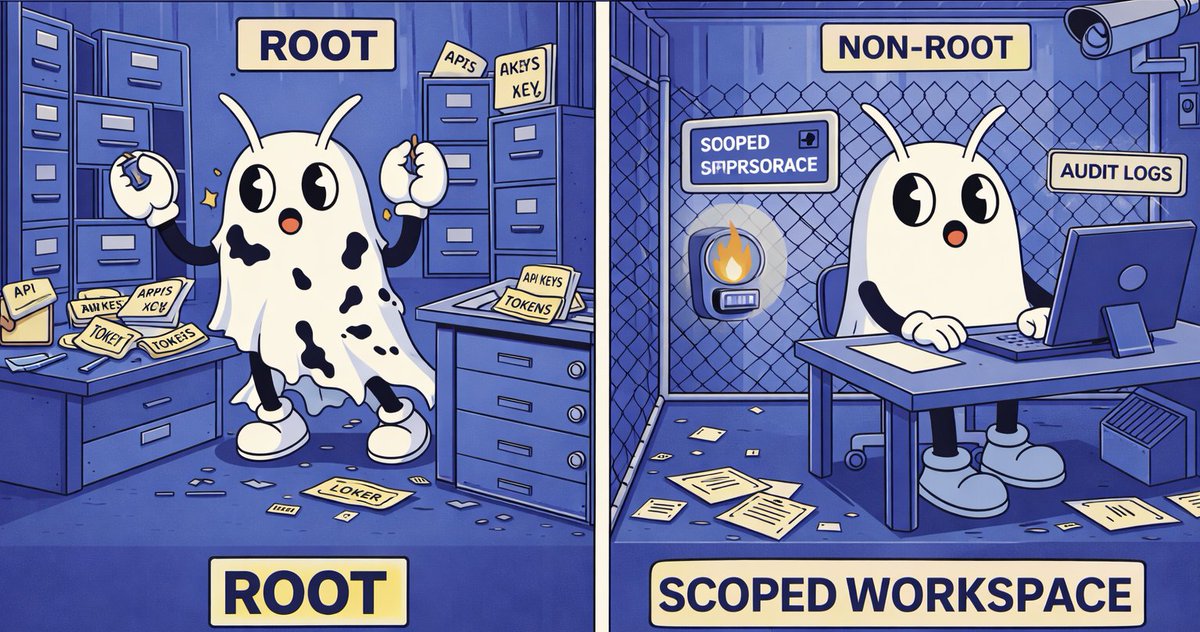

Crypto privacy is needed if you want to make API calls without leaking information about your access patterns. For example, even with a local AI agent, you can learn a lot about what someone is doing if you see all of their search engine calls.

The first-order solution is to make those calls through a mixnet. But then (or in fact, even without the mixnet) the providers will get DoSed, and they will demand an anti-DoS mechanism, realistically payment per call. By default, that payment will be a credit card or some corposlop "yeah, we'll get to the privacy later" stablecoin thing. So we need crypto privacy.

But yes, for privacy you have to think full stack. The local AI agent layer is very important. It is like longevity: if there are 10 things damaging your body, curing one of them increases your longevity by 11%, curing two by 25%, and curing three by about 43% (1 / (1 - 0.3), minus the 100% base). Risks from data leakage work the same way, so mitigations likewise compound super-additively.
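The longevity percentages can be reproduced with a short sketch. It assumes, as the numbers imply, that each of the 10 damaging things contributes equally to the overall hazard rate and that lifespan scales inversely with that total hazard. The function name `longevity_gain` is hypothetical and only illustrates the arithmetic.

```python
def longevity_gain(n_hazards: int, cured: int) -> float:
    """Fractional lifespan increase from removing `cured` of `n_hazards` equal hazards.

    Assumption (not stated in the post): every hazard contributes equally, and
    lifespan is inversely proportional to the total remaining hazard rate.
    """
    if not 0 <= cured < n_hazards:
        raise ValueError("cured must be in [0, n_hazards)")
    remaining_fraction = 1 - cured / n_hazards  # share of total hazard still present
    return 1 / remaining_fraction - 1           # subtract the 100% base lifespan


for k in range(1, 4):
    print(f"cure {k}/10: +{longevity_gain(10, k):.1%}")
# cure 1/10: +11.1%
# cure 2/10: +25.0%
# cure 3/10: +42.9%
```

Three cures give about 42.9%, well above the 33.3% you would get by simply adding three 11.1% gains. Each extra cure removes a larger share of the hazard that remains, which is the super-additive compounding the post applies to data-leakage mitigations.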