Tiago Pimentel

@tpimentelms

Postdoc at @ETH_en. Formerly, PhD student at @Cambridge_Uni.

Are you interested in interning with me and my lab? A unique opportunity for a 4-month research stay, with generous funding as an Azrieli visiting PhD fellow! DM me if you're interested. azrielifoundation.org/fellows/visiti…

Happy to announce that the blog post I wrote on the UnigramLM tokenization algorithm will be appearing in the ICLR 2026 blog post track! Added lots more examples and intuitions since the first version. Hope some of you find it to be a useful resource :) cimeister.github.io/blog/unigramlm/

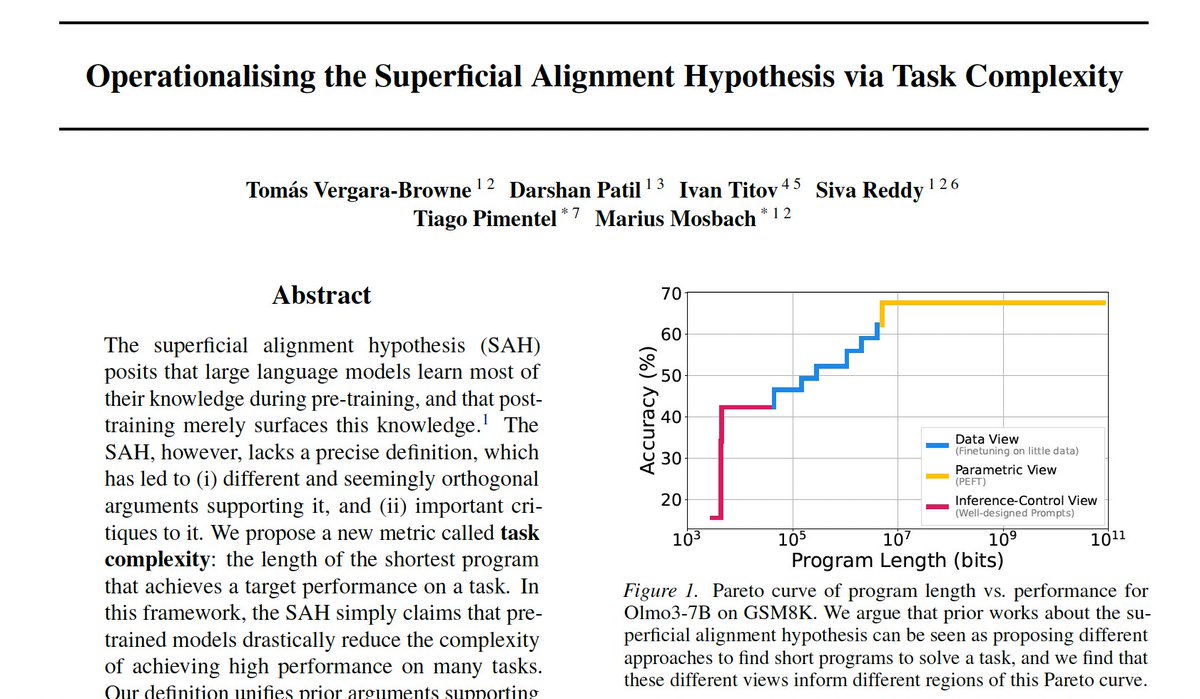

first paper of the phd 🥳 the Superficial Alignment Hypothesis (SAH) argues that pre-training adds most of the knowledge to a model, and post-training merely surfaces it. however, this hypothesis has lacked a precise definition. we fix this.