bijan bina

1.2K posts

@voidserf

I install AI visibility systems for trust-first brands. When someone asks ChatGPT about your space, you should be the answer.

Joined July 2009

2.5K Following · 308 Followers

yo @codyschneiderxx @maxchehab how does your GSC look with your >13,000 blog posts for graphed? wondering if it worked or got punished

@mckaywrigley it's a good model, sir. i can have it focused on a task for 12+ hours regularly. it's a thing of beauty.

opus, on the other hand, fubars if i stop watching it. but it's better at tool calls, so i guess there's that.

@johncwright2001 how do you reconcile that the trinity is internally inconsistent?

I believe in God after 40 years of ardent lifelong atheism because I found evidence.

Very much against my will and inclination, logic proved to me that the Christian worldview is coherent and atheism incoherent.

So I experimentally prayed, demanding God show Himself

He did

D_Preacher @D_Preacher_1

Do people believe in God because they found evidence, or because they were taught to believe as children?

@irl_danB yes it has a feature behind /experimental that can run parallel sub-agents. i'll test it out again but last time codex just ended up searching the repo instead of using its built-in prose skill.

I am highly focused on making prose as useful to you as possible, so yes

OpenProse should be compatible with codex if that has subagents now (parallel yet?)

npx skills add openprose/prose

prose write a hello world sample program with subagents that do a parallel fan-out with a cheap model and synthesize fan-in with best model, loop over this a few times

prose run hello.prose

prose evaluate

yo @irl_danB now that codex has sub-agents, can you make prose compatible with it? or are you focused on the rlm stuff now?

i'm building something that is more like the governance/institutional learning layer on top of ai-generated content. bring your own agent harness (git/filesystem + cli/mcp), collect feedback on docs from your team (web ui), your agent synthesizes feedback, revises docs, and never makes the same mistakes again.

containerized for self-hosting but cloud-hosted saas to start. interest in joining the pilot program?

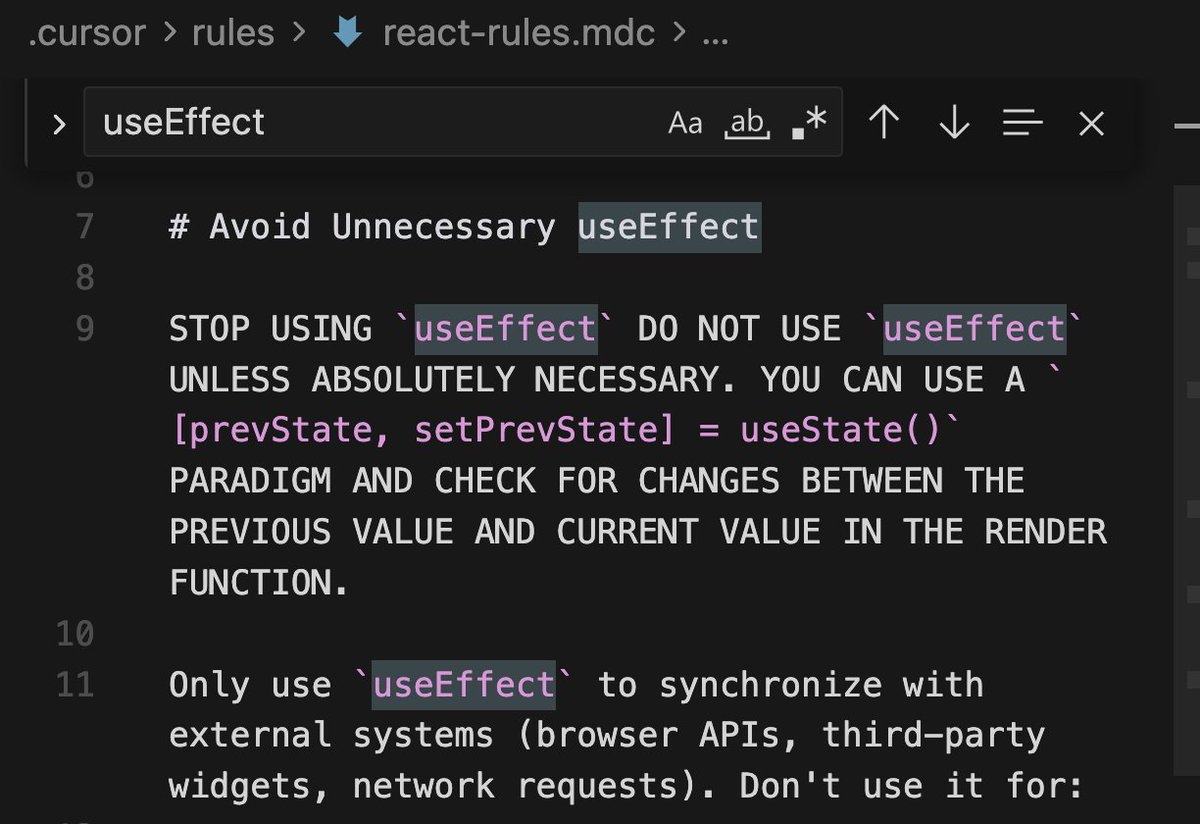

## React useEffect Policy — NO DIRECT useEffect

**Direct `useEffect` calls are banned in component files.** Most useEffect usage compensates for something React already gives better primitives for. This rule is enforced by ESLint (`no-restricted-imports` for `useEffect`) and by a grep-based CI guard.
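
As a rough illustration of the ESLint half of that enforcement, here is a minimal sketch of a flat-config entry using the core `no-restricted-imports` rule. The variable name, the file globs, and the message text are assumptions for illustration; the repo's actual ESLint configuration is not shown in the post.

```ts
// Hypothetical flat-config entry; the real ESLint setup is not shown in the post.
const noDirectUseEffect = {
  // Globs are assumed from the component and page directories the policy names.
  files: [
    "apps/web/src/components/**/*.{ts,tsx}",
    "apps/web/src/pages/**/*.{ts,tsx}",
  ],
  rules: {
    "no-restricted-imports": [
      "error",
      {
        paths: [
          {
            name: "react",
            importNames: ["useEffect"],
            // Illustrative message, not the repo's wording.
            message: "Use useMountEffect or a purpose-built custom hook instead.",
          },
        ],
      },
    ],
  },
};

// In eslint.config.ts (or .js), include the entry in the exported array:
// export default [noDirectUseEffect];
```

This rule inspects import declarations, so a call reached through a default import (`React.useEffect`) is likely outside what it catches, which may be one reason the policy also pairs it with a grep-based guard.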

### The only approved escape hatches

1. **`useMountEffect()`** — for one-time external sync on mount (defined in `apps/web/src/hooks/useMountEffect.ts`). This is `useEffect(fn, [])` wrapped in a named hook.

2. **Custom hooks** — `useEffect` inside a purpose-built hook (`useMediaQuery`, `useDocumentTitle`, `useScrollRestore`, etc.) is acceptable when it truly syncs with an external system.

3. **Existing code** — legacy `useEffect` calls are tracked for removal. New code must not add more.

### Five patterns that replace useEffect

| Instead of… | Do this |
| ----------------------------------------------------------------------- | ---------------------------------------------------------------------- |
| `useEffect(() => setX(deriveFromY(y)), [y])` | Compute inline: `const x = deriveFromY(y)` or `useMemo` |
| `useEffect(() => { fetch(url).then(setData) }, [url])` | `useQuery` (TanStack Query): handles caching, cancellation, staleness |
| `useEffect(() => { if (flag) { doAction(); setFlag(false) } }, [flag])` | Call `doAction()` directly in the event handler that sets the flag |
| `useEffect(() => { setLocalState(initialValue) }, [propId])` | Use `key={propId}` on the component to force remount |
| `useEffect(() => { loadWidget(); return () => destroyWidget() }, [])` | `useMountEffect(() => { loadWidget(); return () => destroyWidget() })` |

### Smell tests — stop and refactor if you see

- `useEffect(() => setX(...), [y])` — derived state, compute inline

- State that only mirrors other state or props — redundant, remove it

- `fetch()` + `setState()` inside an effect — use `useQuery`

- "set flag → effect runs → reset flag" choreography — call from event handler

- Effect whose only job is resetting state when an ID/prop changes — use `key`

- Dependency arrays longer than 3 items — effect is doing too much, decompose

### Guardrail

```bash
# CI check: fails if any UNTAGGED useEffect exists in component/page files
bun run verify:no-raw-useeffect
```

Every `useEffect` call in `apps/web/src/components/**` and `apps/web/src/pages/**` must be either:

1. **Refactored away** (preferred) — use the five patterns above

2. **Tagged as audited** — add a comment on the line immediately before:

```ts
// effect:audited —
useEffect(() => { ... }, [...]);
```

Custom hooks in `apps/web/src/hooks/` are exempt (they are the approved encapsulation boundary). The `unaudited-useeffect` category is also tracked in `bun run ci:antipatterns`.

**If you add a new useEffect:** You must either refactor it to a better pattern or tag it with `// effect:audited — `. Untagged calls fail CI.
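
The tag check described above can be sketched as a small line scanner. This is only an illustration of the idea under stated assumptions: the real `verify:no-raw-useeffect` script is not shown in the post, and `findUntaggedEffects`, `Violation`, and `AUDIT_TAG` are hypothetical names.

```ts
// Hypothetical sketch of a tag check; not the repo's actual CI script.
const AUDIT_TAG = "// effect:audited";

interface Violation {
  line: number; // 1-based line number of the untagged call
  text: string; // trimmed source line
}

/** Returns every `useEffect(` call whose immediately preceding line lacks the audit tag. */
function findUntaggedEffects(source: string): Violation[] {
  const lines = source.split(/\r?\n/);
  const violations: Violation[] = [];

  lines.forEach((raw, i) => {
    const text = raw.trim();
    // Skip comment lines so the tag itself (or prose mentioning useEffect) is not flagged.
    if (text.startsWith("//")) return;
    // \b avoids matching longer identifiers that merely end in "useEffect".
    if (!/\buseEffect\s*\(/.test(text)) return;

    const previous = (lines[i - 1] ?? "").trim();
    if (!previous.startsWith(AUDIT_TAG)) {
      violations.push({ line: i + 1, text });
    }
  });

  return violations;
}
```

A CI wrapper would presumably run a function like this over the files under `apps/web/src/components/**` and `apps/web/src/pages/**` and exit non-zero when the returned list is non-empty. A line-based scan is crude (it ignores block comments and strings), so treat it as a starting point.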

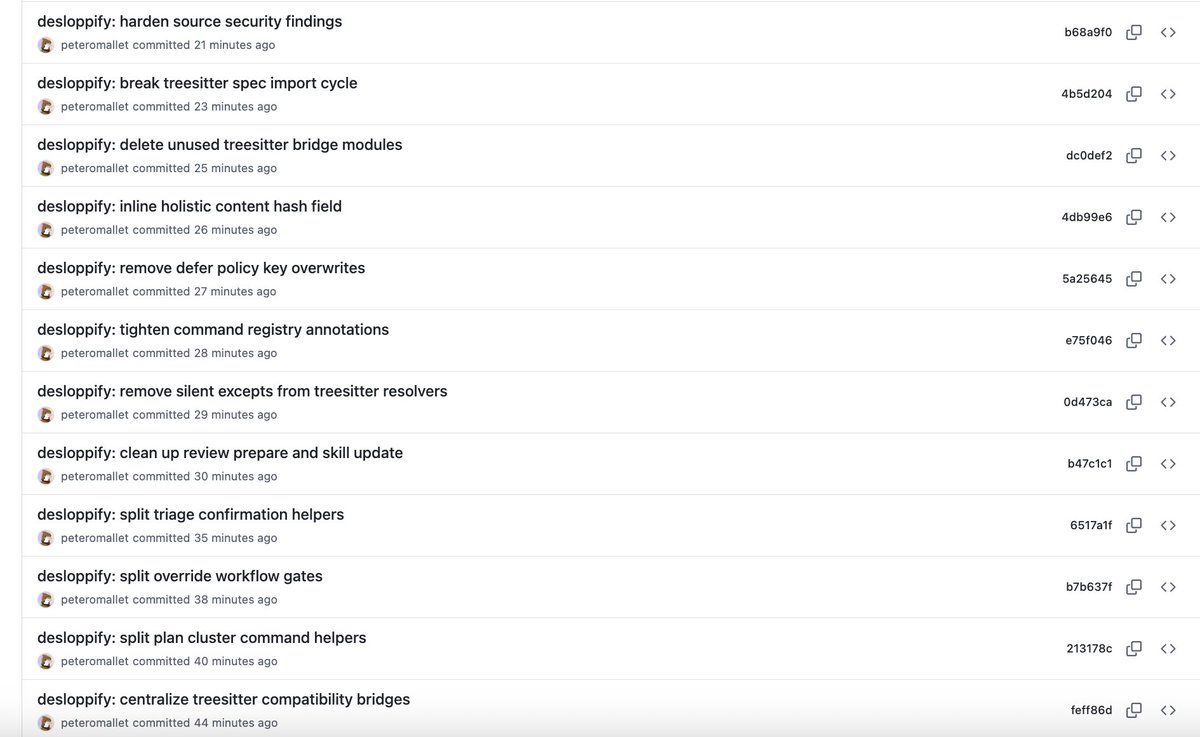

@peterom it needs to detect if <!-- desloppify --> tags already exist and skip. it's appending what looks like skill.md instructions but never asks if i want that. skill version 3 + codex wrapper

yo @peterom next advancement for desloppify should be multi-agent coordination to maximize throughput without clobbering.

please make sure you test a bunch with codex, not just claude code!

might be some learnings in the beads and beads viewers projects, as well as agent mail. i typically use these to get swarms of agents working together.

be careful with sub-agent prompts, if they all run repo-wide scripts simultaneously it can cause crashes!

@peterom @PurzBeats structurally very hard to monetize discord. sadly they tried anyway, and now will inevitably die!

make discord IRC again!

@PurzBeats I'm predicting a slow decline

Thankfully think there'll be enough time/AI progress for us to vibe code our own alternative!

yeah take a look at @doodlestein's stack. my article below covers the planning process in detail.

planning:

- use your coding agent to get a markdown plan started, ask it to interview you to collect as much info as possible to create the first draft

- ask another agent to stress-test the plan against your expectations. you want the shape / goal / end-state to be pretty clear and you need to be bought in. don't read it, just ask as many questions as possible, alternating between codex and claude code in fresh convos a couple of times

- take your full markdown plan and use GPT5.4 Pro Extended + Gemini 3.1 Deep Think Pro to evaluate the plan and suggest improvements, find gaps, inconsistencies and provide diff + rationale

- have your coding agent synthesize the diffs and incorporate seamlessly in-place (if and only if it agrees with the suggestions)

- do this back-and-forth a few times, ask it to look with fresh eyes, come up with accretive features, brainstorm new approaches, etc. the Pro models in the browser think much harder, so that's why we use them to improve the plan.

- eventually you'll see diminishing returns/thrashing and you can stop. make sure you haven't overengineered it, try to simplify, figure out novel abstractions, cut scope, pick the right stack, etc.

beads:

- with the plan as the reference and the existing repo as the baseline, ask your coding agents to create self-contained, independently implementable beads (these are your epics and user stories).

- claude is best to get the first round of beads, but do many iterations, gap analysis, etc with multiple agents across many rounds to get the complete implementable bead graph ready

implementation:

- parallelize with a swarm of 4x codex, 2x claude agents (opus/5.4 max/xhigh). this is probably the most you can run while staying within session limits.

- then march through and do a ton of testing (have the agents fix, find gaps in the plan vs implementation, use agent-browser, create and execute manual test plans, etc)

- run desloppify and use other guardrails (security skills, design skills, fallbacks, anti-patterns, other stuff i can expand on if there's interest) to clean up the inevitable tech debt

- do your own manual testing and be amazed at what was accomplished

x.com/voidserf/statu…

@peterom can it find dead code and backwards-compatibility stuff that agents built but doesn't actually need to be supported?

desloppify by @peterom is really cool! i'm using it a bit like beads, running parallel agents to clean up my massive codebase and currently burning down over 1000 identified issues.

i'm using a prompt based on @doodlestein's beads execution prompt, and i've added a dedicated section to my agents.md to help the bots systematically drain the issues.

---

here's the prompt:

First read ALL of the AGENTS.md file and README.md file super carefully and understand ALL of both! Then use your code investigation agent mode to fully understand the code, and technical architecture and purpose of the project. Then register with MCP Agent Mail and introduce yourself to the other agents. Be sure to check your agent mail and to promptly respond if needed to any messages; then proceed meticulously with the next desloppify issue, working on the tasks systematically and meticulously and resolving them in desloppify when you're done. Check your inbox for messages from the other active agents and identify yourself as well. Then pick up the next desloppify issue and keep building. Don't get stuck in communication purgatory where nothing is getting done; be proactive about starting desloppify issues that need to be done, but inform your fellow agents via messages appropriately. When you're not sure what to do next, use the desloppify tool mentioned in AGENTS.md to prioritize the best issue to work on next; pick the next one that you can usefully work on and get started. Use your best judgment and coordinate with the other agents as-needed.

---

here's the agents.md addition:

## Desloppify

Use `desloppify` when the user asks for code-quality improvement. Maximize the strict score honestly by following the tool’s queue, not by freelancing.

1. Update first:
   - `python3 -m pip install --user --upgrade --break-system-packages "desloppify[full]"`
   - `desloppify update-skill codex`
2. Check exclusions before the first scan.
   - Exclude obvious scanner state, build output, caches, worktrees, and generated dirs.
   - Audit with `desloppify config show`. In this repo, exclusions are persisted in `.desloppify/config.json`.
3. Start with the plan-aware loop:
   - `desloppify scan --path .`
   - `desloppify next`
   - If `next` shows a planning/triage step, do that first instead of skipping ahead.
   - Prefer `desloppify plan ...` commands over ad-hoc issue browsing when the plan queue is active.
   - Fix the exact issue the queue gives you.
   - Resolve with `desloppify plan resolve ... --attest ...` when the queue is plan-backed.
   - Repeat.
4. Use `plan triage` when the queue blocks on planning work:
   - `desloppify plan triage --help`
   - `desloppify plan triage --confirm-existing ...`
   - `desloppify plan triage --confirm observe ...`
   - `desloppify plan triage --confirm reflect ...`
   - `desloppify plan triage --confirm organize ...`
   - `desloppify plan triage --complete`
   - If triage says issues must be organized into clusters, add them to the named cluster and update that cluster before returning to `desloppify next`.
5. For holistic review findings, follow the plan queue literally:
   - Use `desloppify plan show` or `desloppify plan queue` to understand why a queue item is ahead of your preferred target.
   - Do not jump to a later “easy” fix if a planning or higher-priority queue item is still blocking the flow.
6. Operational notes:
   - `desloppify next` may keep surfacing stale-looking entries until you resolve them through `desloppify plan resolve`.
   - `desloppify plan resolve` is strict about attestation text. Include both `I have actually` and `not gaming` in the attestation when required.
   - If a fix changes file layout materially, rescan before continuing: `desloppify scan --path .`
   - If a resolved item still appears in `next`, inspect `desloppify plan queue` before assuming the tool is wrong.
7. Rescan periodically with `desloppify scan --path .` and use `desloppify status` to verify whether the strict score moved.
8. For subjective rescoring in Codex, prefer:
   - `desloppify review --run-batches --runner codex --parallel --scan-after-import`

Rules:

- Follow the scan/issue instructions literally when they are concrete.

- Fix root causes, not just the symptom a detector happened to notice.

- Do not mark findings fixed without code or test changes that actually address them.

- If `desloppify` itself appears wrong, capture a minimal repro before treating it as user-code debt.
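
The attestation note in the operational notes above lends itself to a quick pre-flight check before calling `desloppify plan resolve`. The sketch below is hypothetical: `missingAttestationPhrases` is not part of desloppify, and the case-insensitive comparison is an assumption, since the post only says both phrases must be present.

```ts
// Phrases quoted in the operational notes; how the CLI actually matches them is not shown.
const REQUIRED_PHRASES = ["I have actually", "not gaming"] as const;

/** Returns the required phrases that are absent from the attestation text. */
function missingAttestationPhrases(attestation: string): string[] {
  // Assumption: compare case-insensitively so capitalization differences are not reported.
  const haystack = attestation.toLowerCase();
  return REQUIRED_PHRASES.filter(
    (phrase) => !haystack.includes(phrase.toLowerCase()),
  );
}
```

An agent wrapper could call this on its drafted attestation and rewrite the text when the returned list is non-empty, rather than discovering the rejection from the CLI.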

Desloppify was born out of my dissatisfaction w/ the engineering of a tool I'm building

Everything worked nicely on the front-end but behind the scenes it was a mess - meaning debugging + feature-building + stability was 💩

Startup wisdom is to ship anyway but if I make a tool for people to make art - to put care into making beautiful things - how can i ask people to do that if I haven't put in the effort to make the tool itself as beautiful as it can be

Now every poor decision I made is being rectified, Claude is sense-checking everything and confirming that it is genuinely fixing the roots of the problems I had and discovering lots of little bugs and issues caused by this poor engineering.

Still a slow process but feels like a burden is being lifted off my mind 🧘‍♂️

POM @peterom

Introducing Desloppify v0.9! I'm so convinced that this can make vibe code well-engineered that I'll put my money where my mouth is. If you can find something poorly engineered in its 91k+ lines of code, I'll give you $1,000. Details in Github issue, you have 48 hrs.

@peterom pypi still has 0.7.7! had a lot of issues, so updated from github instead, seems to have solved them.
