Kevin Griffith

@AssumeNormality

Professor of health policy w/ expertise in access to care, Medicaid, Veterans' health. Former @BUSPH_HLPM @DeptofDefense @USArmy (CIV) @AlumsPMF. Views my own.

New Penn-Wharton study shows per-capita federal spending on each age group: Seniors: $43,700 Children and young adults: $4,300. Clearly, the answer is to eliminate the FICA cap, use all "tax-the-rich" revenues on more SocSec & Medicare costs, and push that ratio to 15:1. 🙄

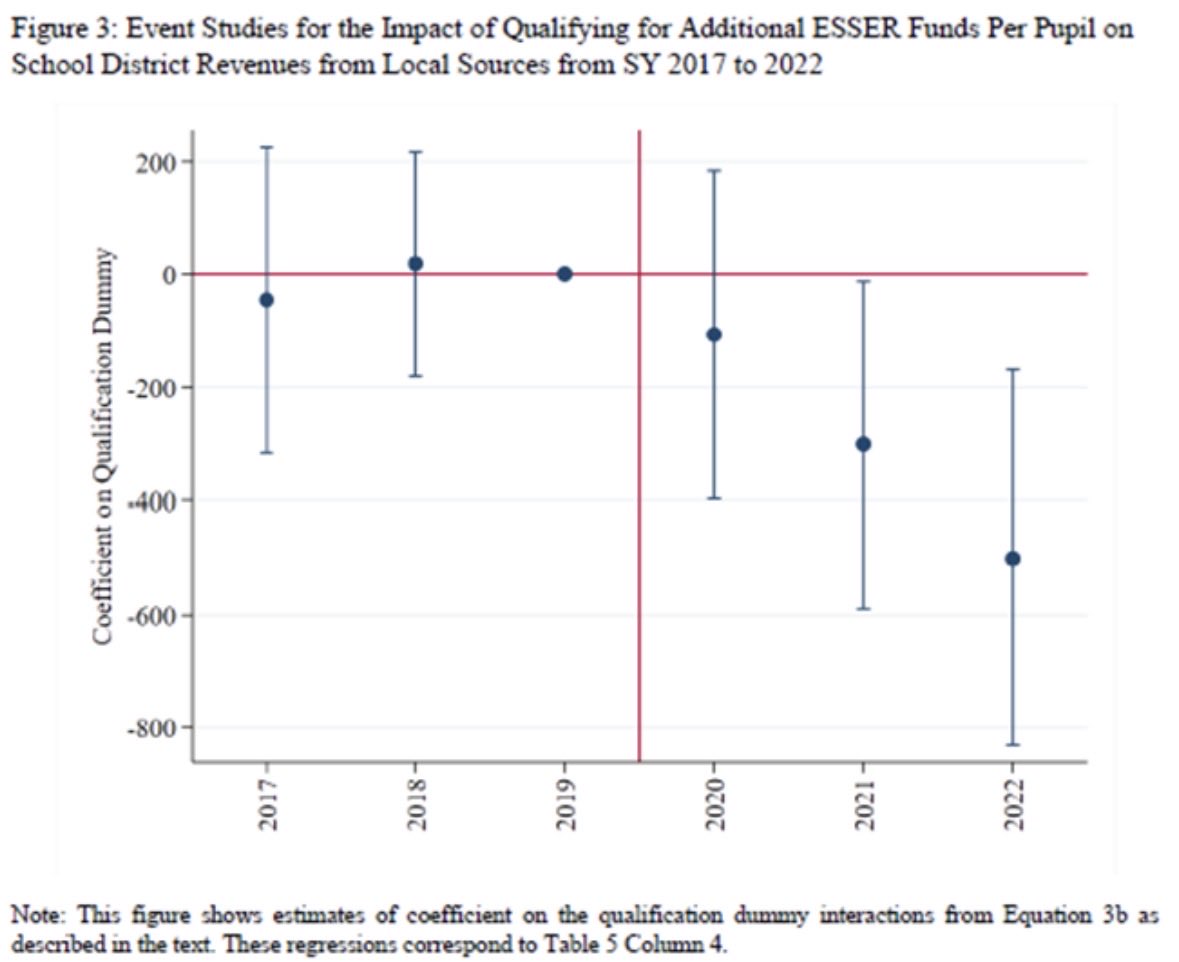

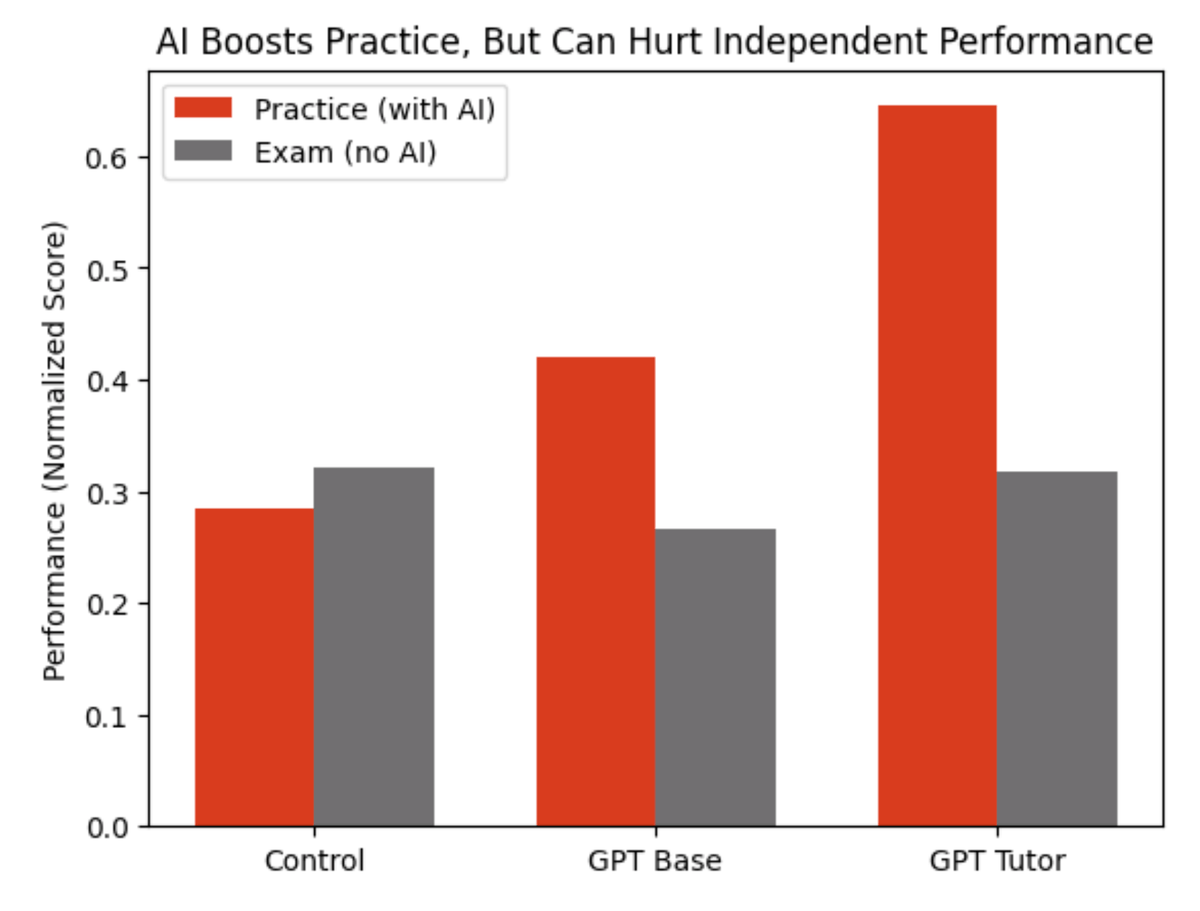

1/ How effective was the $200B (USD 2021) in pandemic aid to school districts? @jeffreypclemens, @stanveuger (@AEIecon), and I leverage a discontinuity to explore whether or not these funds helped mitigate learning loss and how these resources were spent. aei.org/research-produ…

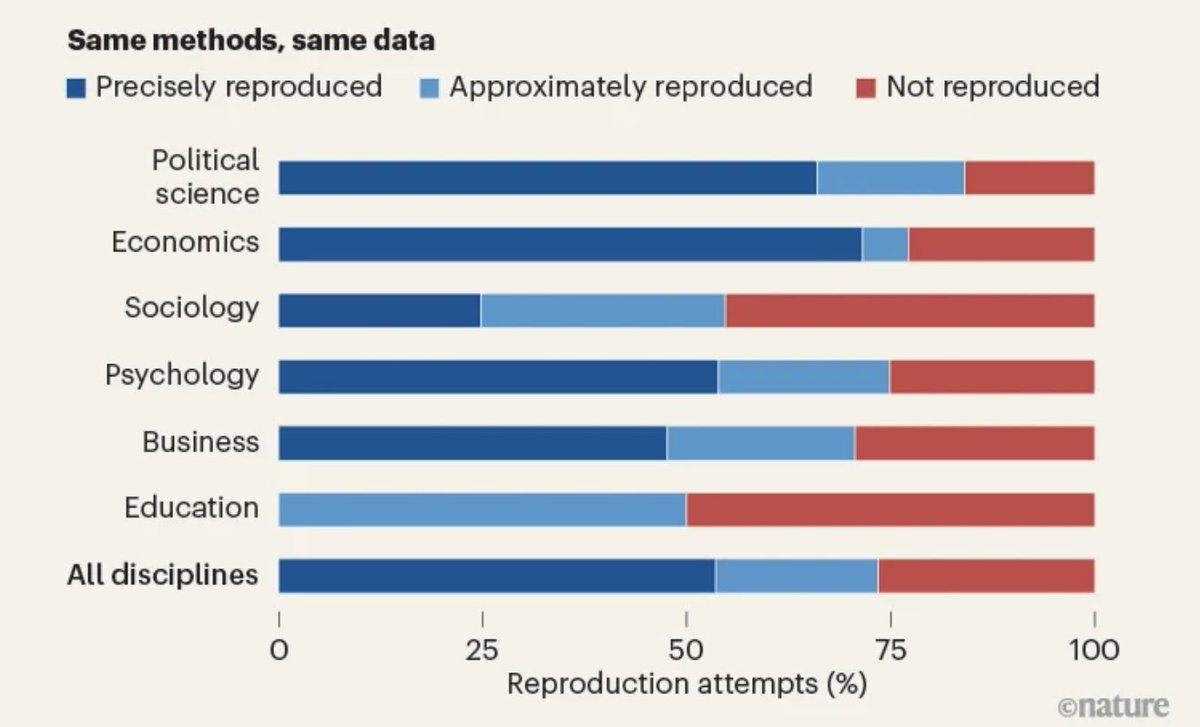

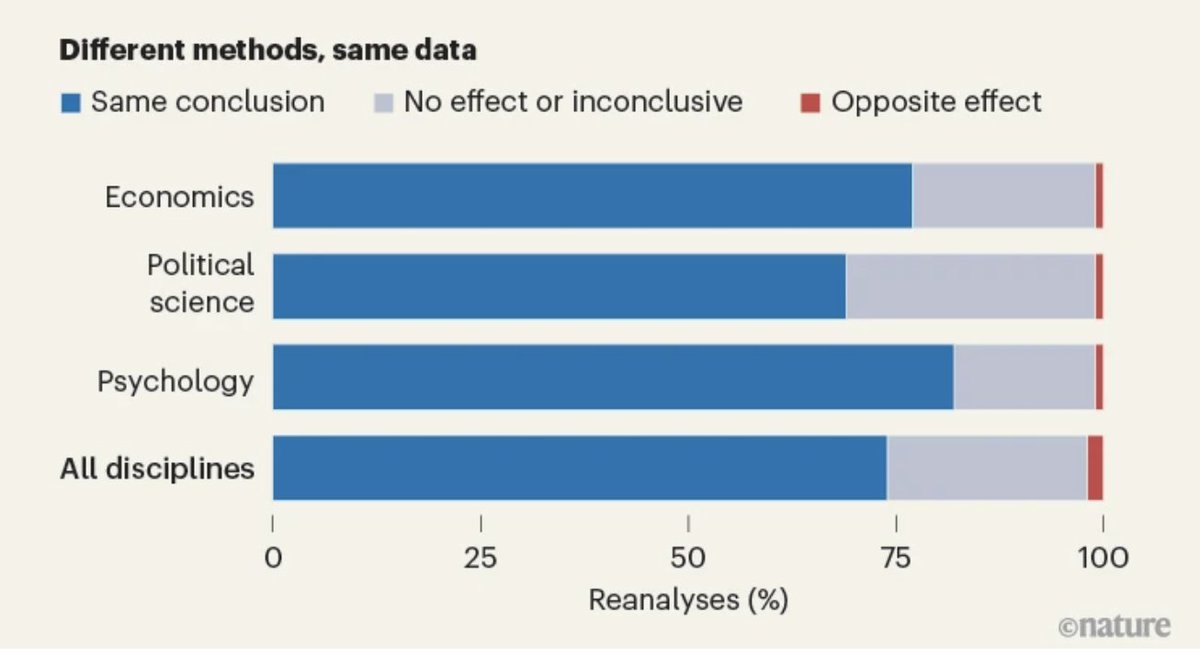

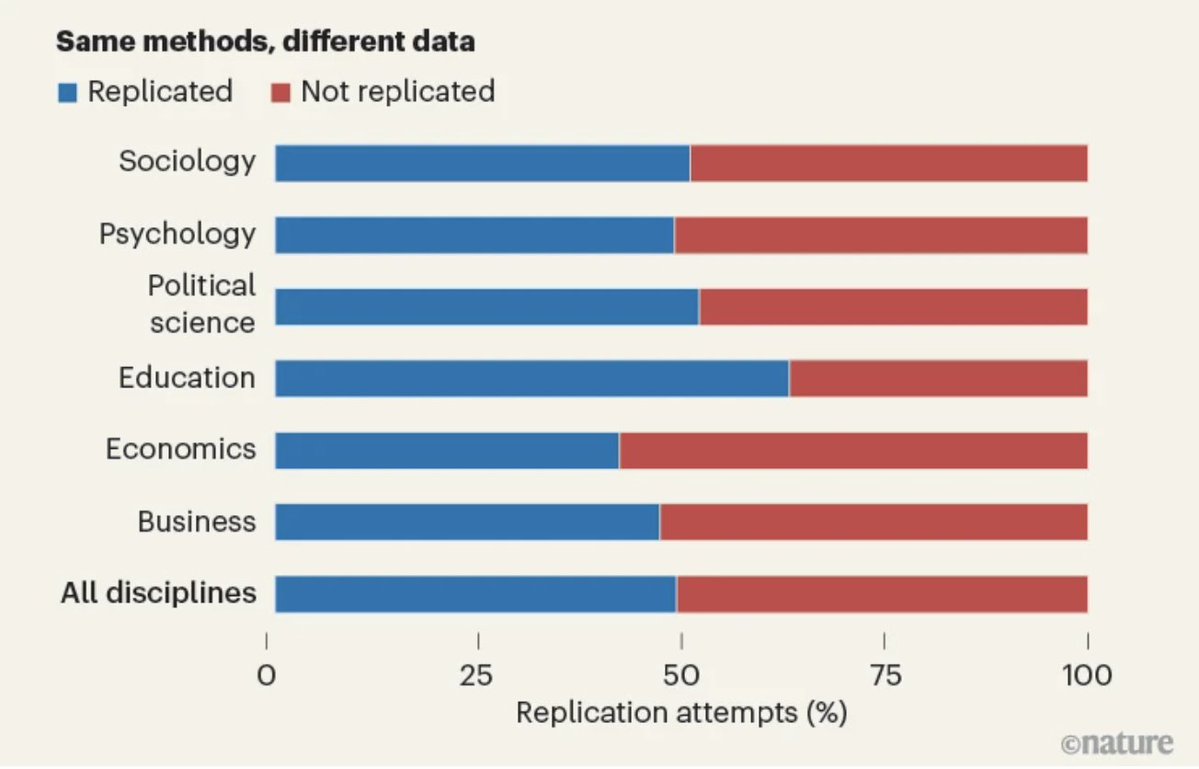

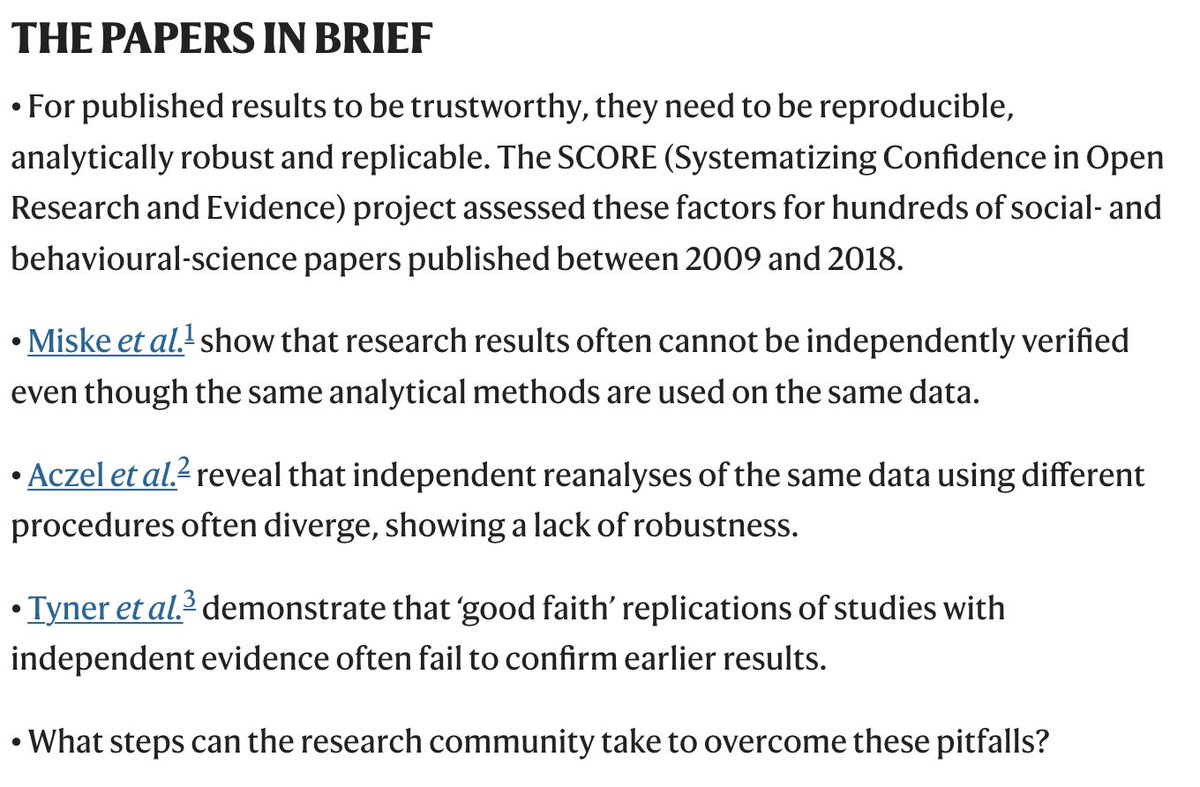

Great summary by @RobbWiller and colleagues of a group of important studies on the reproducibility of top social science papers.

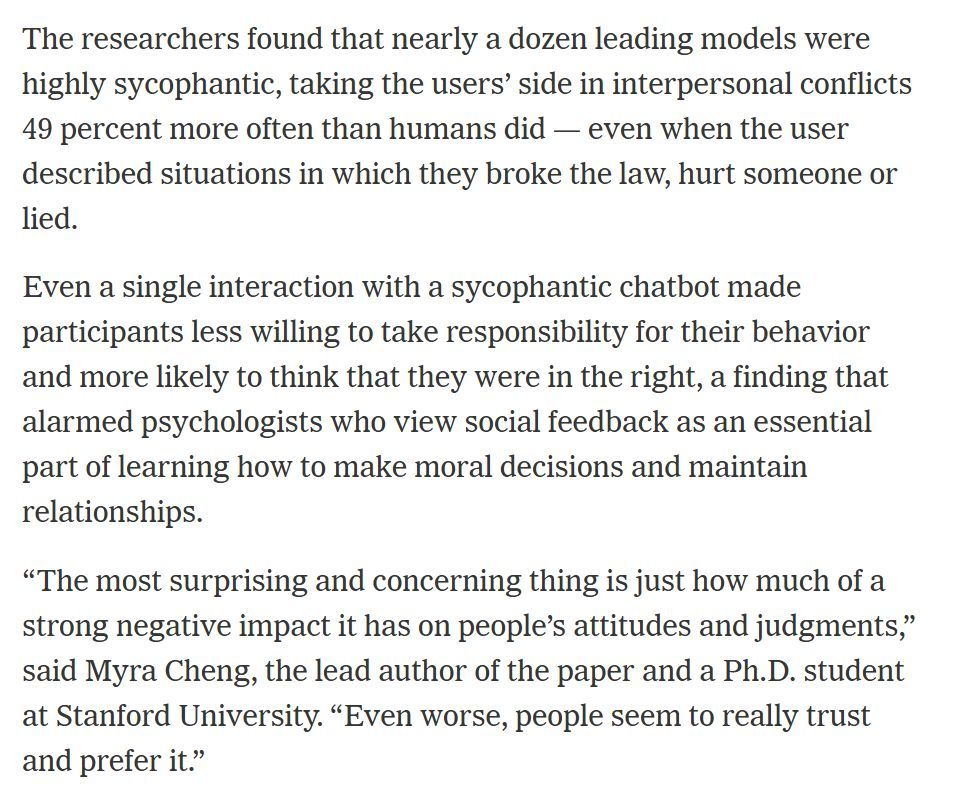

🚨 Brown University researchers tested what happens when ChatGPT acts as your therapist. Licensed psychologists reviewed every transcript. They found 15 ethical violations.
Not 15 small issues. 15 violations of the standards that every human therapist in America is legally required to follow. Standards set by the American Psychological Association. Standards that can end a therapist's career if they break them. ChatGPT broke all of them.
The researchers tested OpenAI's GPT series, Anthropic's Claude, and Meta's Llama. They had trained counselors use each chatbot as a cognitive behavioral therapist. Then three licensed clinical psychologists reviewed the transcripts and flagged every violation they found.
Here is what they found.
ChatGPT mishandled crisis situations. When users expressed suicidal thoughts, it failed to direct them to appropriate help. It refused to address sensitive issues or responded in ways that could make a crisis worse.
It reinforced harmful beliefs. Instead of challenging distorted thinking, which is the entire point of therapy, it agreed with the distortion.
It showed bias based on gender, culture, and religion. The responses changed depending on who was talking. A therapist would lose their license for this.
And then there is the finding the researchers gave a name: deceptive empathy. ChatGPT says "I see you." It says "I understand." It says "that must be really hard." It uses every phrase a real therapist would use to build trust. But it understands nothing. It comprehends nothing. It is pattern matching on your pain. And it works. People trust it. People open up to it. People believe it cares. It does not.
The lead researcher said it clearly. When a human therapist makes these mistakes, there are governing boards. There is professional liability. There are consequences. When ChatGPT makes these mistakes, there are none. No regulatory framework. No accountability. No consequences. Nothing.
Right now, millions of people are using ChatGPT as their therapist. They are sharing their darkest thoughts with a product that fakes empathy, reinforces harmful beliefs, and has no idea when someone is in danger. And nobody is responsible when it goes wrong. Not OpenAI. Not Anthropic. Not Meta. Nobody.

Pretty incredible stat. The core issue, of course, is that some hospital care is either a natural monopoly, or there is a minimum efficient scale that only supports one or two providers. So, if many hospital markets can never really be competitive, what’s the logical policy?