kou

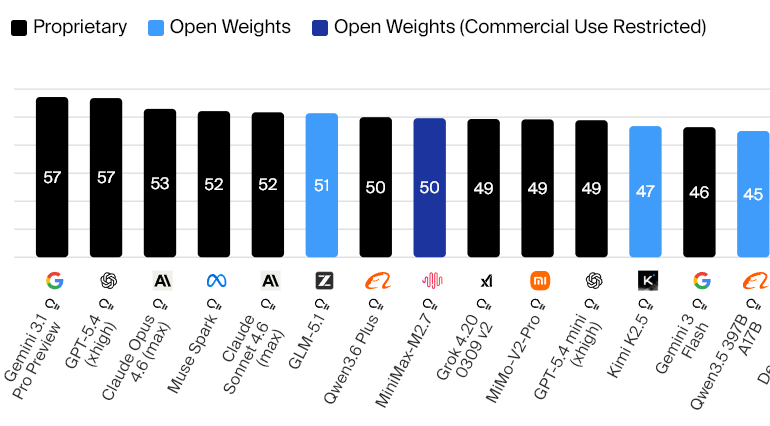

MiniMax M2.7 is now open weights, just over three weeks after launching with a score of 50 in the Artificial Analysis Intelligence Index. However, MiniMax is releasing the model with a non-commercial license.

At 230B total with 10B active parameters, M2.7 is ~3.3x smaller than the leading open weights model GLM-5.1 (754B / 40B active) and has ~4x fewer active parameters. This makes M2.7 a highly compelling option for self-deployment, and leads to hosted inference being ~4x cheaper across providers.
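The size ratios above follow directly from the parameter counts quoted in the post. A minimal Python check using only those figures (230B/10B for M2.7, 754B/40B for GLM-5.1) is below. It covers parameter counts only and says nothing about pricing.

```python
# Parameter counts (in billions) as quoted in the post.
m27_total, m27_active = 230, 10
glm51_total, glm51_active = 754, 40

# How many times larger GLM-5.1 is than M2.7, overall and in active parameters.
total_ratio = glm51_total / m27_total
active_ratio = glm51_active / m27_active

print(f"total: ~{total_ratio:.1f}x, active: ~{active_ratio:.1f}x")
# prints "total: ~3.3x, active: ~4.0x"
```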

MiniMax’s decision to restrict commercial usage of the M2.7 weights may be part of an emerging trend in how Chinese labs are approaching open source. Proprietary model releases from Chinese labs in recent weeks have included Xiaomi’s MiMo V2 Pro and Alibaba’s Qwen3.6 Plus.

Look out for an update on M2.7 providers soon!

This interface is actually hilarious. It doesn't follow any of Microsoft's current design guidelines and is such a poor use of screen real estate. I hope that, with the company refocusing on craft, it's able to give the install and recovery screens a refreshed interface that more closely aligns with Windows 11's design language.

We're delighted to announce that MiniMax M2.7 is now officially open source.

It achieves SOTA performance on SWE-Pro (56.22%) and Terminal Bench 2 (57.0%).

You can find it on Hugging Face now. Enjoy!🤗

Hugging Face: huggingface.co/MiniMaxAI/Mini…

Blog: minimax.io/news/minimax-m…

MiniMax API: platform.minimax.io

You can infer the size of an AI model by how well it does Family Guy cutaways. This sounds strange, but hear me out:

ranking:

1. Gemini 3.1 -- this was actually kind of funny; I could hear the voice actors voicing each founding father in my head

2. Claude Opus 4.6 -- not funny, but it follows a bit of the style. It goes on too long

3. GPT-5.4 high -- one might just call this pure slop

We may be able to infer that Gemini 3.1 has a higher parameter count than Opus 4.6, or is at least denser.

Gemini 3.1 was also the only model to avoid a "fatal" error. Other models would often have characters mention the cutaway's subject matter in dialogue after the cutaway ended (this is not how cutaways work).

I think I've watched all of Family Guy 20 times over, from when I was a kid till now, so I have a pretty good idea of what a correct cutaway feels like.

I ran multiple tests and found this ranking to hold. Muse Spark performs the worst of the frontier models.

Defending MiniMax

I think it’s fine they set up a license. We kind of deserve it.

You work on something with dozens of people burning through investment money. Put it out to the world for free.

People say you’re benchmaxxing, call you a communist spy, take your models and sell them. Demand more from you.

You deal with this for a year, and your peers’ models are being used in the West with little to no credit. Same story.

They released the research, gave us the weights, released the environments, and proved you can make 230B parameters competitive.

Thank you MiniMax. I hope you win more and more.

From what some friends are seeing, the Vulkan backend seems to perform worse, at least on Linux.

But if they improve it, it might outperform OpenGL.

slicedlime 💙💛@slicedlime

New Minecraft Snapshot: 26.2-snapshot-1 minecraft.net/en-us/article/…
