OpenAGI Labs

43 posts

@agiopen_org

Building Computer-Use Agent Models and the open ecosystem around it.

Bay Area, USA · Joined January 2025

15 Following · 2.2K Followers

Watch our Computer Use model visually organize a chaotic local directory directly at the OS level.

Most agents are trapped inside the browser. Lux operates natively across Mac and Windows, driving the actual desktop GUI.

In this sequence, Lux isn't running a background Python script. It uses pure visual intelligence to execute the workflow:

Perceives the UI: Visually scans the native file manager to identify file types and icons.

Synthesizes Context: Uses semantic reasoning to categorize unstructured files.

Drives the OS: Executes rapid, native GUI selections to sort them into folders.

By optimizing how the model processes UI elements, this OS-level execution is faster, cheaper, and significantly more accurate than current SOTA computer use models.

#OpenAGILabs #ComputerUse #Automation #SoftwareEngineering #AI #OS

@shivsakhuja @agentmail @tryagentphone Yes, these tools are building the agent-first economy. And now those brains need hands. The next evolution is secure Computer Use: giving agents the visual intelligence to take hold of the OS securely and drive any GUI natively. Check it out: developer.agiopen.org

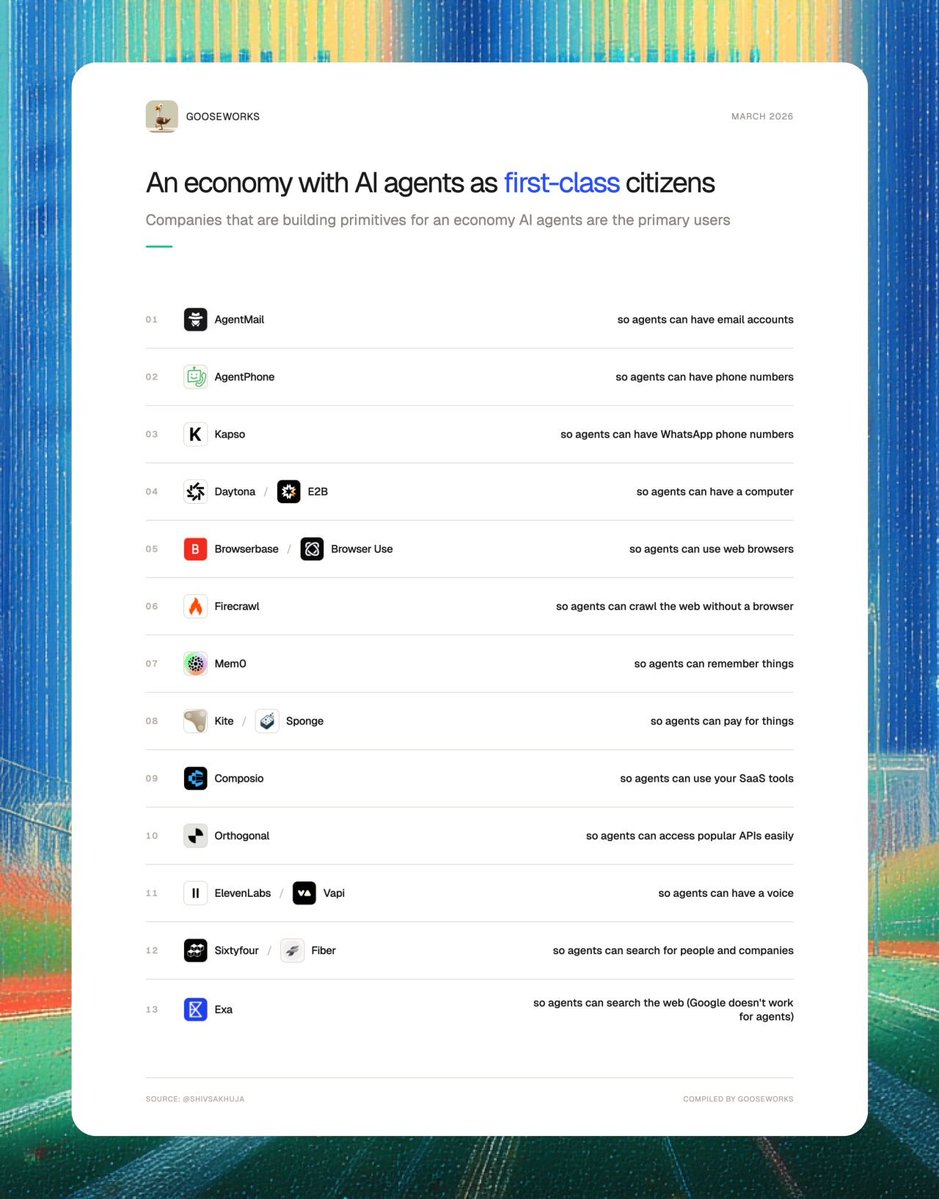

Lots of companies are now building primitives for an economy where AI agents are the primary users instead of humans.

They're betting on an economy of AI coworkers.

1. AgentMail (@agentmail): so agents can have email accounts

2. AgentPhone (@tryagentphone): so agents can have phone numbers

3. Kapso (@andresmatte): so agents can have WhatsApp phone numbers

4. Daytona (@daytonaio) / E2B (@e2b): so agents can have their own computers

5. Browserbase (@browserbase) / Browser Use (@browser_use) / Hyperbrowser (@hyperbrowser): so agents can use web browsers

6. Firecrawl (@firecrawl): so agents can crawl the web without a browser

7. Mem0 (@mem0ai): so agents can remember things

8. Kite (@GoKiteAI) / Sponge (@PayspongeLabs): so agents can pay for things

9. Composio (@composio): so agents can use your SaaS tools

10. Orthogonal (@orthogonal_sh): so agents can access APIs easily

11. ElevenLabs (@ElevenLabs) / Vapi (@Vapi_AI): so agents can have a voice

12. Sixtyfour (@sixtyfourai): so agents can search for people and companies

13. Exa (@ExaAILabs): so agents can search the web (Google doesn’t work for agents)

If you stitch all of these together, you get a digital coworker that looks more human than AI.

Watch our Computer Use model visually navigate a complex Shopify dashboard to add a new product natively in the browser. Zero API integrations or CSV uploads required.

In this sequence, Lux executes a standard e-commerce operations task entirely through the GUI:

Visual Parsing: Scans the dense Shopify sidebar to locate and navigate to the 'Products' module.

Interface Interaction: Identifies and triggers the 'Add product' action within the dynamic DOM.

Data Synthesis: Maps the unstructured variables (Title, Description) into the correct rich-text input fields.

State Execution: Completes the workflow by saving the entry and visually confirming the success state.

Bypassing rigid backend integrations and driving workflows directly at the presentation layer is how automation actually scales.

#OpenAGILabs #ComputerUse #SoftwareEngineering #Automation #AI #Shopify

@felixrieseberg Cross-device dispatch is exactly how agents should feel. Text the brain on mobile, let the hands work on the desktop. We're building the visual GUI execution layer for this at OpenAGI. Async delegation + native OS navigation is the future of computing.

This is a great architecture: 147 specialized brains. But they still need hands. Right now, an agent can reason through a complex task, but it still relies on brittle APIs to actually execute it.

The next massive unlock is giving these models a visual execution layer so they can drive the GUI natively, just like a human operator. That’s exactly the gap we’re solving with Lux.

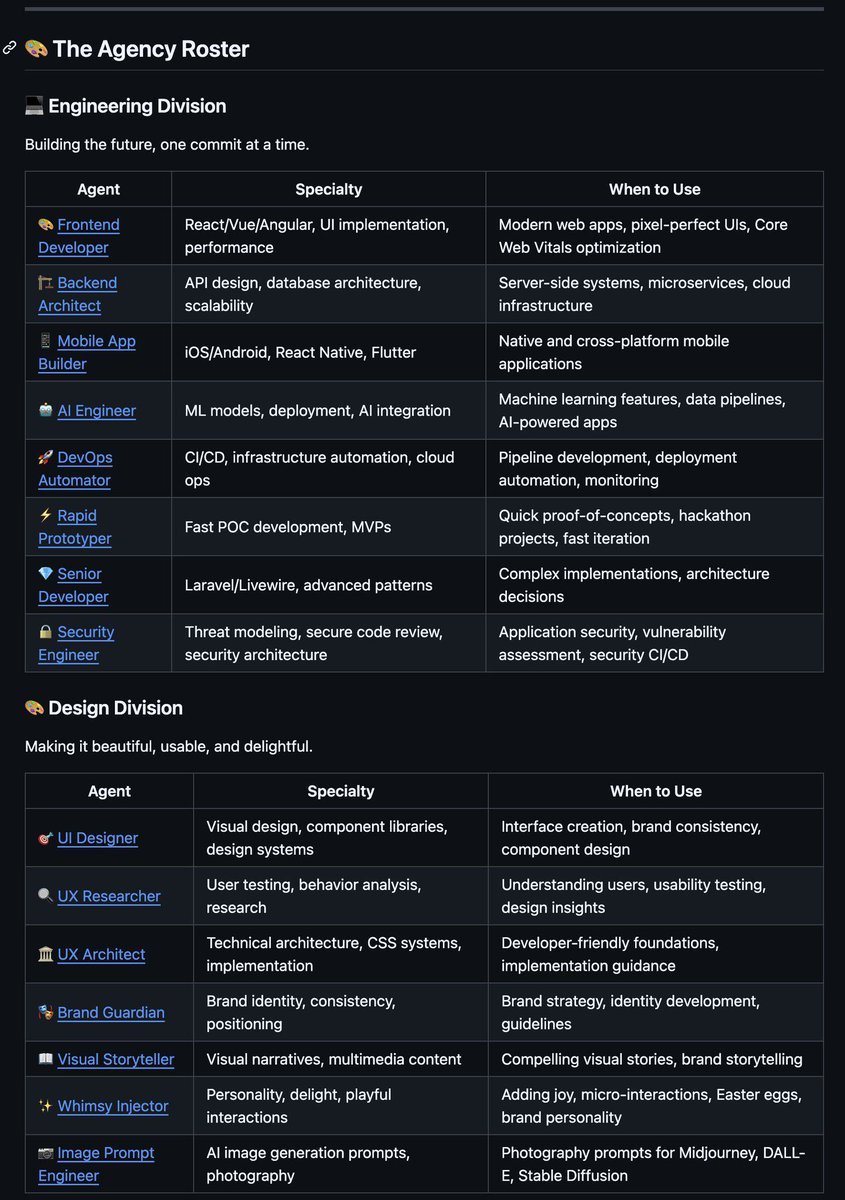

🚨 BREAKING: Someone just open-sourced a complete AI agency, and it hit 50K GitHub stars in under two weeks.

It's called The Agency.

And it's not a prompt template.

It's 147 specialized AI agents across 12 divisions, including engineering, design, marketing, product, QA, support, and spatial computing, each with its own personality, workflow, and deliverables.

Here's what you actually get:

→ 147 agents across 12 divisions, each with unique voice and expertise

→ Works natively with Claude Code, GitHub Copilot, Gemini CLI, Cursor, OpenCode, and more

→ One-command install for any supported tool

→ Agents have defined missions, success metrics, and production-ready code examples

→ Full modding support -- build and contribute your own agents

→ Interactive installer that auto-detects your dev environment

→ Conversion scripts for every major agentic coding tool

→ Lua-style Markdown templates with YAML frontmatter

Here's the wildest part:

Most people use AI like a generalist intern. One model doing everything from writing copy to debugging code.

This repo structures AI like an actual company. Specialized roles. Clear responsibilities. Defined workflows between agents.

It started as a Reddit thread. Now it has 50K+ stars, 7.5K forks, and contributions from developers around the world.

Greg Isenberg called it out. It hit 10K stars in 7 days.

This is what the future of AI-assisted development actually looks like.

50K+ GitHub stars. 7.5K forks. 147 agents. 12 divisions.

100% Open Source. MIT License.

(Link in the comments)

Watch our Computer Use model visually organize an inbox and execute a multi-step workflow right in the browser. It interacts with the Gmail interface natively, zero backend integrations required.

In this sequence, Lux executes a standard operations task entirely through the UI:

Visual Navigation: Navigates the sidebar to trigger the label creation menu and types "invoices".

Native Tooling: Uses the built-in search bar to filter the inbox for financial documents.

Interface Interaction: Clicks the bulk-select checkboxes and navigates the top menu to apply the new label.

This is true zero-shot visual execution. You give a command, and the agent drives the browser.

#OpenAGILabs #ComputerUse #SoftwareEngineering #Automation #AI

Watch Lux translate an unstructured chat message directly into a labeled GitHub issue. Our Computer Use model executes this cross-app GUI workflow with zero API integrations.

In this flow, Lux is doing more than just clicking coordinates. It reads the raw bug report from the chat interface, synthesizes the core technical context (iPhone 15, landscape UI overlap), and drives the browser to the repository.

It drafts the issue using structured Markdown, outlines the reproduction steps, and then interacts with the page directly to locate and assign the "bug" label in the sidebar before submitting.

This is how you bridge messy human communication and structured engineering trackers natively.

#OpenAGILabs #ComputerUse #SoftwareEngineering #GitHub #DevOps

Social media management shouldn't require endless clicking.

What if you could trigger your entire workflow with a single command?

In this demo, Lux handles social account management autonomously. The instruction is simple: "Boost my pinned X post for 2 days at $20/day."

Watch Lux visually navigate the profile, identify the pinned post, and execute the promotion flow perfectly. No APIs. No manual setup.

Just give the command, and let the Agent execute.

#MarketingAI #SocialMediaManagement #OpenAGILabs #Automation #Tech

We built Lux to handle the CRM admin work.

In this demo, our Computer Use model reads an incoming customer email about an integration failure and autonomously navigates Salesforce to log a new case. No rigid backend integrations to configure. It clicks, extracts the context, types the description, and submits the ticket exactly like a human rep does.

Stop paying top talent to act as human APIs. Let the Agent handle Salesforce.

#EnterpriseAI #Salesforce #CRM #OpenAGILabs #Automation

Code-based automation is notoriously brittle. One UI update, and your entire test suite breaks.

We built Lux to solve the "Fragility Problem."

In this demo, Lux performs Autonomous Visual Validation on a desktop build. It doesn't rely on hidden code selectors. It maps the app hierarchy instantly and verifies the 'Visualizer' and 'Settings' modules exactly like a human user would.

If the backend code changes but the UI looks the same, the test still passes.

Stop maintaining broken scripts. Start deploying autonomous agents.

#EnterpriseAI #QA #DevOps #OpenAGI #Infrastructure

Learn more about OpenAGI's approach to evaluating computer-use agents on our blog:

agiopen.org/blog03
