Kevin Vicent

29.3K posts

@moebious

Make-believe filmmaker, frustrated writer, software engineer in search of his identity.

Joined November 2008

7.1K Following · 845 Followers

Kevin Vicent retweeted

Google just killed the document extraction industry.

LangExtract: Open-source. Free. Better than $50K enterprise tools.

What it does:

→ Extracts structured data from unstructured text

→ Maps EVERY entity to its exact source location

→ Handles 100+ page documents with high recall

→ Generates interactive HTML for verification

→ Works with Gemini, Ollama, local models

What it replaces:

→ Regex pattern matching

→ Custom NER pipelines

→ Expensive extraction APIs

→ Manual data entry

Define your task with a few examples.

Point it at any document.

Get structured, verifiable results.

No fine-tuning. No complex setup.

Clinical notes, legal docs, financial reports, same library.

This is what open-source from Google looks like.
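The "maps every entity to its exact source location" idea can be illustrated without any model at all. The following is a minimal sketch of source grounding in plain Python, not LangExtract's API: `GroundedEntity`, `ground_entities`, and the sample note are hypothetical names and data invented for this illustration, and it assumes an extractor has already returned `(label, text)` pairs that appear verbatim in the source.

```python
from dataclasses import dataclass


@dataclass
class GroundedEntity:
    label: str
    text: str
    start: int  # inclusive character offset in the source
    end: int    # exclusive character offset in the source


def ground_entities(source, extractions):
    """Attach character offsets to (label, text) pairs.

    Pairs whose text cannot be found verbatim are dropped, since they
    cannot be verified against the source. Repeated mentions are matched
    left to right so each one gets its own span.
    """
    grounded = []
    next_search_pos = {}  # text -> offset where the next search begins
    for label, text in extractions:
        start = source.find(text, next_search_pos.get(text, 0))
        if start == -1:
            continue  # not in the source: treat as unverifiable
        end = start + len(text)
        next_search_pos[text] = end
        grounded.append(GroundedEntity(label, text, start, end))
    return grounded


# Hypothetical input: a short clinical-style note and extractor output.
note = "Patient was given 250 mg amoxicillin. Follow-up: amoxicillin stopped after 5 days."
pairs = [
    ("dose", "250 mg"),
    ("drug", "amoxicillin"),
    ("drug", "amoxicillin"),
    ("drug", "ibuprofen"),  # never mentioned in the note, so it is dropped
]
entities = ground_entities(note, pairs)
for e in entities:
    print(e.label, repr(note[e.start:e.end]), e.start, e.end)
```

Because every kept entity carries offsets, a reviewer can slice the source and confirm the match, which is the property that makes highlighted verification views possible.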

Kevin Vicent retweeted

Claude Code now supports agent teams (in research preview)

Instead of a single agent working through a task sequentially, a lead agent can delegate to multiple teammates that work in parallel to research, debug, and build while coordinating with each other.

Try it out today by enabling agent teams in your settings.json!

Kevin Vicent retweeted

Technical Debt. By Martin Fowler.

martinfowler.com/bliki/Technica…

Kevin Vicent retweeted

Please do not run your projects on Vercel

Setting up your own server (VPS) has never been easier. Even a single $100/mo VPS can sustain unreal amounts of traffic. You need to try it to understand it

I've never had a surprise bill like this

$50k for a server bill is BONKERS

Riley Walz@rtwlz

Jmail has crossed 450M pageviews, and our Vercel bill has exploded, even after tons of cache mitigation. Many kind people chipped in to cover the bill, but it’s not sustainable. What are solid, cheaper alternatives?

Kevin Vicent retweeted

If you've ever wondered how Large Language Models like ChatGPT work, this course is for you.

It covers the data preparation, model training, and fine-tuning that has to happen before an LLM is ready to go.

You'll learn about the whole training process from tokenizing raw text to fine-tuning a functional chatbot.

freecodecamp.org/news/train-you…

Kevin Vicent retweeted

I keep reading that quality needs to take a back seat to profit, but I'm beginning to think that the people who say that don't define "quality" the same way I do.

Let's take "well architected" as an example. That phrase does not mean that you use a lot of design patterns (which add complexity). It does not mean that you use microservices or any other specific architecture, especially if it's complicated. It does not mean that you can accommodate vast amounts of load (that you don't have) or that you can accommodate vast numbers of customers (that you don't have). It does not mean that you build like Google, or Netflix, or Amazon, or whatever other flavor-of-the-month is the "standard" nowadays. It does not mean that you use certain packages ("React" comes to mind, immediately). If the words "of course, we must" went into a choice, it was probably a wrong choice.

It does mean that changes are easy to do. Among other things, that means that the code is well tested and relatively bug free. Bugs slow you down too much. Code goes together faster when you write the tests first (even/especially if you're using an AI assist—tests make great specifications).

It does mean that you have exactly as much architecture as you need. It helps if that architecture can grow incrementally, but that's not hard to accomplish. This is, again, critical in an AI context. A system made of small components with impermeable boundaries easily contains problems that an AI might create; its components are easy to test as black boxes without having to fully understand them; it gives you a very small "blast radius" if something goes wrong. Writing a component system takes no more effort than writing a disorganized blob of code. The cost is zero.

It does mean that you have exactly as much code as you need to solve the problems at hand. Not one semicolon more. No futureproofing. No just-in-case's. Simple code is easy to augment. Complicated code is impossible to change. Why waste time writing code that you don't need?

Kevin Vicent retweeted

the new bottlenecks are the breadth of your imagination, the intensity of your desire and your capacity to articulate it

Nat Eliason@nateliason

The limiting factor with AI at this point is no longer intelligence, but desire.

Kevin Vicent retweeted

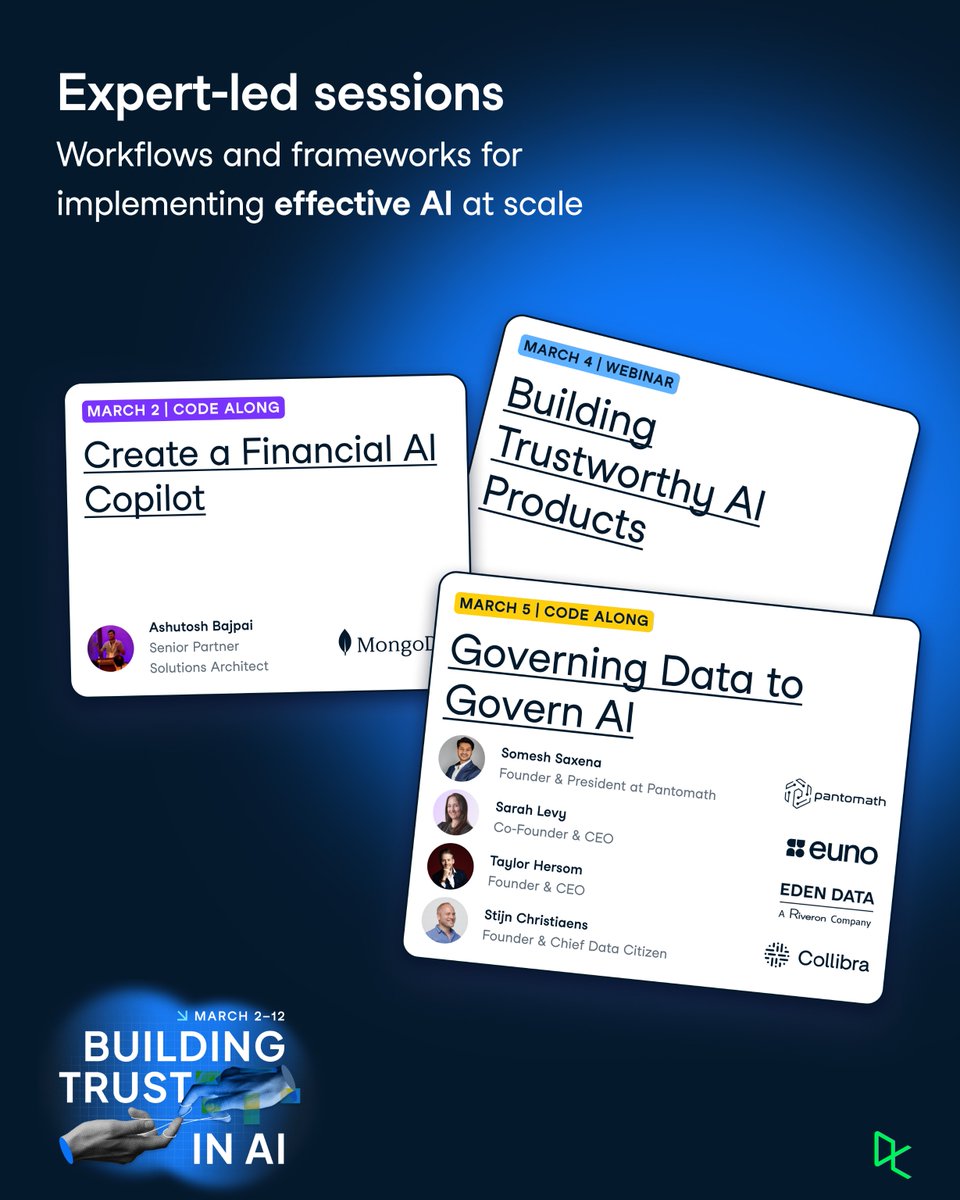

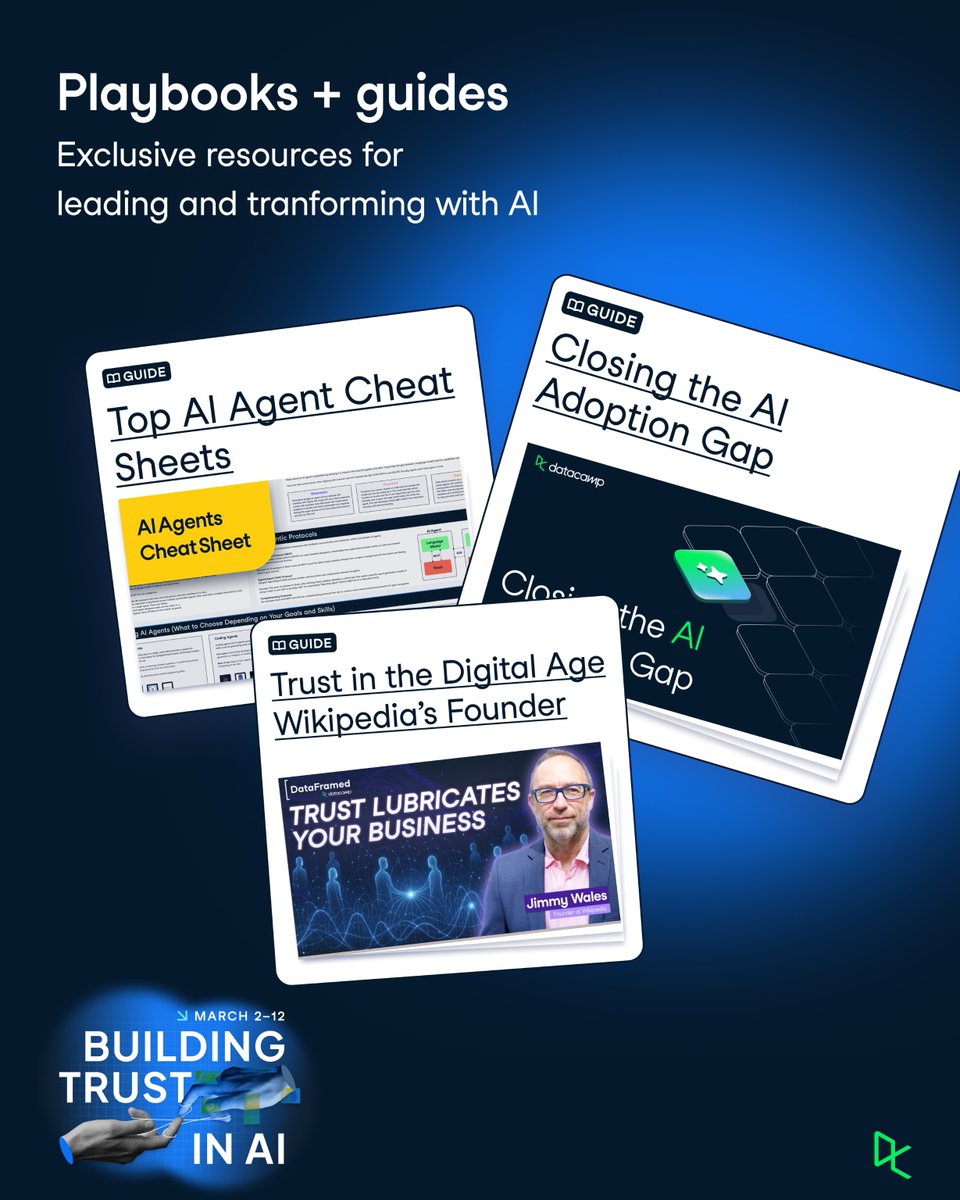

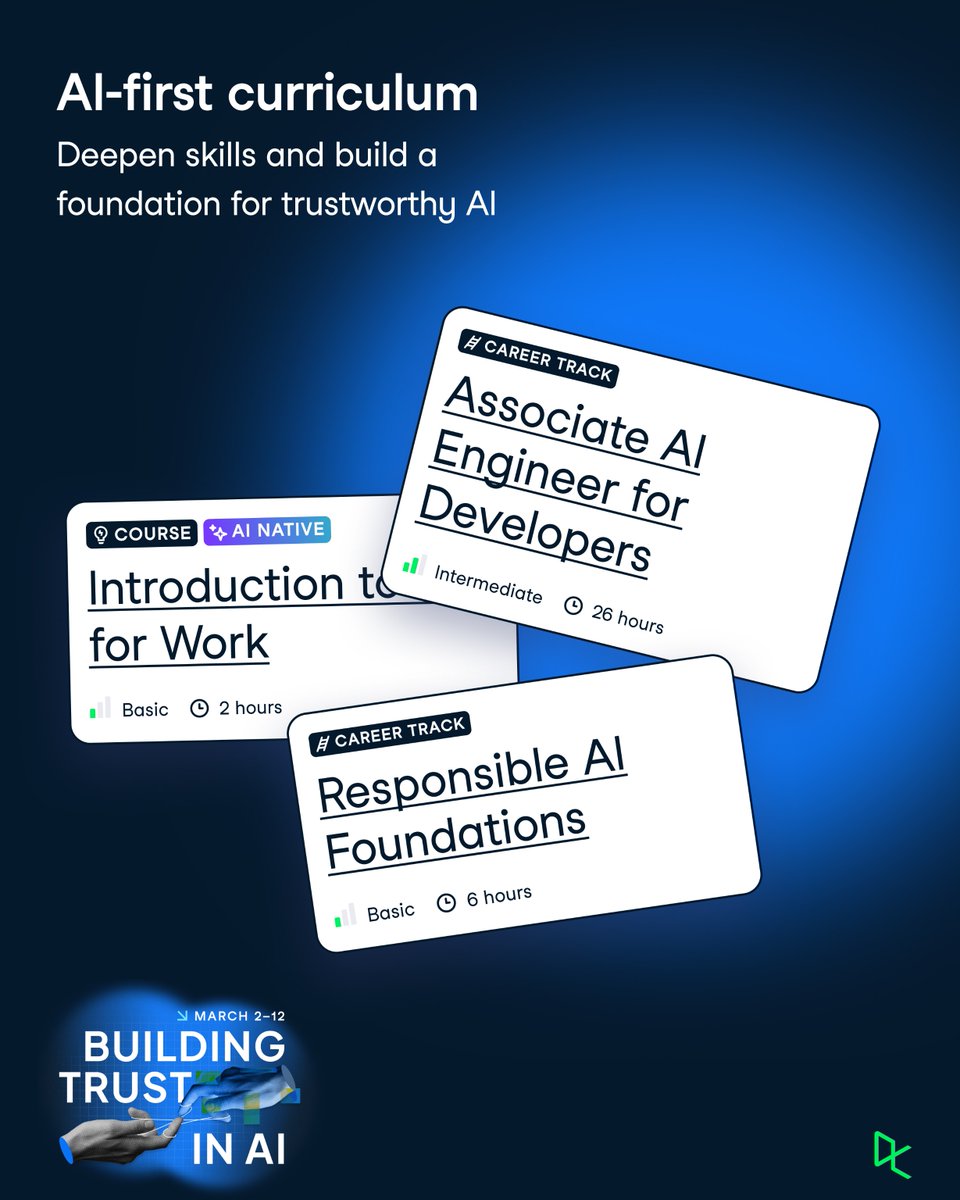

March 2–12 | Building Trust in AI

Join us for a two-week sprint and learn:

▸ How to design, build, and govern AI

▸ Build copilots, engineer agent content, and evaluate AI performance

▸ Apply trust to AI in the high-risk industry

Unlock everything 👉 ow.ly/Bmah50YbFjF

Kevin Vicent retweeted

Claude Opus 4.6 works so well.

I asked for a Palantir alternative to view conflicts around the world using Claude Opus 4.6 on @capacityso

Took 15 minutes and 3 prompts.

Kevin Vicent retweeted

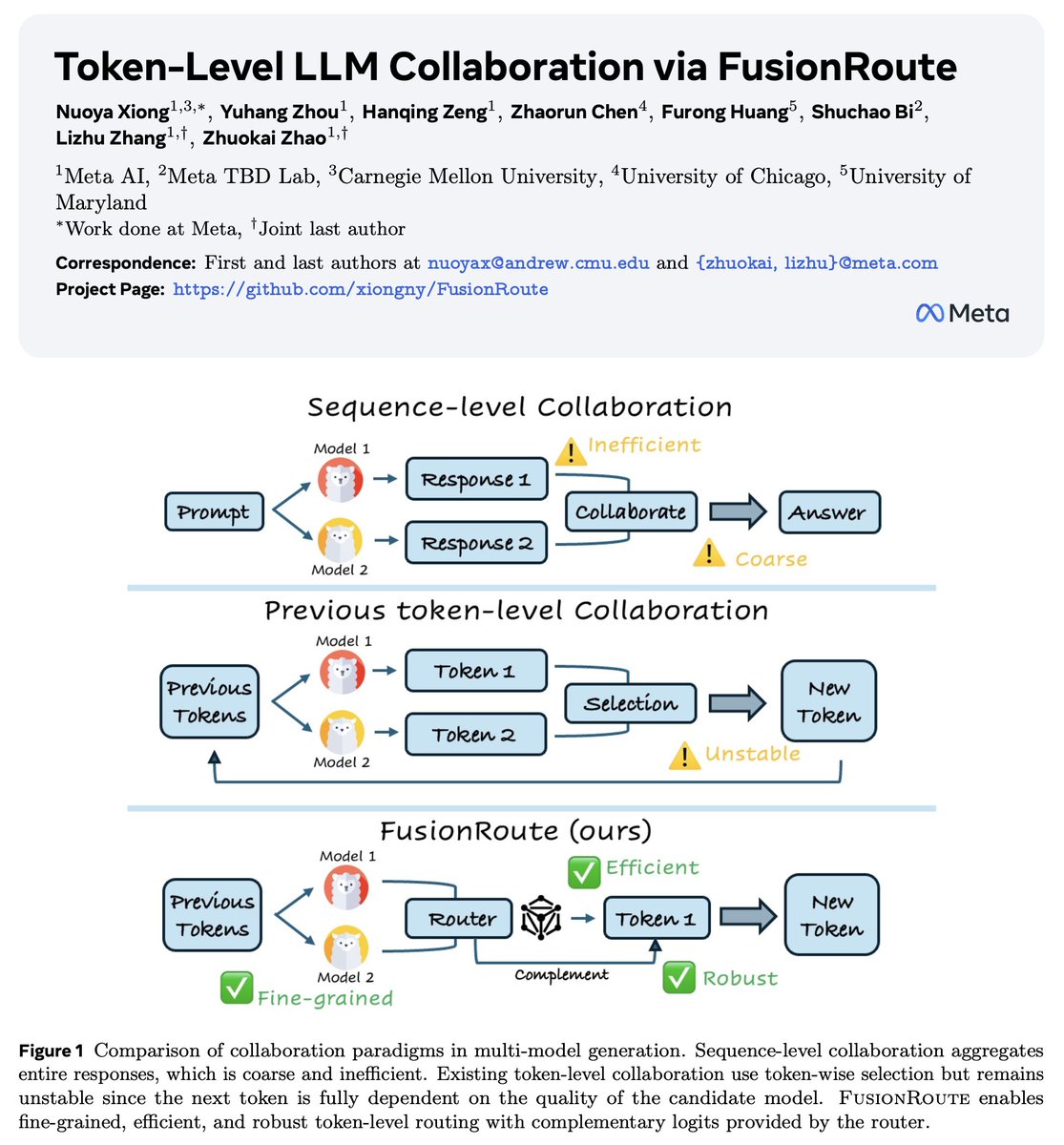

Meta × TBD Lab × CMU × UChicago × UMaryland

In our latest work, we introduce

Token-Level LLM Collaboration via FusionRoute

📝: arxiv.org/pdf/2601.05106

LLMs have come a long way, but we continue to face the same trade-off:

– one huge model that kind of does everything, but is expensive and inefficient, or

– many small specialist models that are cheap, but brittle outside their comfort zones

We’ve tried a lot of things in between — model merging, MoE, sequence-level agents, token-level routing, controlled decoding, etc.

Each helps a bit, but all come with real limitations.

A key realization behind FusionRoute is:

Pure token-level model selection is fundamentally limited, unless you assume unrealistically strong global coverage.

We show this formally. And then we fix it by letting the same router also generate.

Concretely, FusionRoute is a lightweight router LLM that

– performs token-level model selection, and

– directly contributes complementary logits to refine or correct the selected specialist when it fails

So it's not "routing + another model" — the router itself is part of the decoding policy as well.

This turns token-level collaboration from a brittle "pick-an-expert" problem into a strictly more expressive policy.
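A toy decoding step makes the difference from pure "pick-an-expert" routing concrete. This is a minimal sketch under stated assumptions, not the paper's formulation: the additive combination of logits, the function `fused_next_token`, and all numbers are hypothetical choices made for illustration. The post only says the router "contributes complementary logits".

```python
def argmax(values):
    """Index of the largest value (first one wins on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def fused_next_token(specialist_logits, routing_scores, router_logits):
    """One decoding step: select a specialist, then correct it.

    specialist_logits: one list of vocabulary logits per specialist
    routing_scores:    the router's preference score per specialist
    router_logits:     the router's own logits over the same vocabulary

    Assumption: the router's logits are simply added to the selected
    specialist's logits before the token is chosen greedily.
    """
    chosen = argmax(routing_scores)
    fused = [s + r for s, r in zip(specialist_logits[chosen], router_logits)]
    return chosen, argmax(fused)


# Hypothetical three-token vocabulary and two specialists.
specialist_logits = [
    [2.0, 1.5, 0.1],  # specialist 0 slightly prefers token 0
    [0.2, 0.3, 3.0],  # specialist 1 strongly prefers token 2
]
routing_scores = [0.9, 0.1]       # router selects specialist 0
router_logits = [-1.0, 0.5, 0.0]  # router pushes away from token 0

chosen, token = fused_next_token(specialist_logits, routing_scores, router_logits)
print(chosen, token)
```

Here the selected specialist alone would emit token 0, while the fused logits `[1.0, 2.0, 0.1]` yield token 1. Selection alone can only ever return what some specialist would have produced; the added term is what lets the router override a specialist that is wrong.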

No joint training of specialized models.

No model merging.

No full multi-agent rollouts.

In our experiments, FusionRoute works across math, coding, instruction following, and consistently outperforms sequence-level collaboration, prior token-level methods, model merging, and even direct fine-tuning.

Feeling especially timely as LLM systems (e.g., GPT-5) move toward routing-based, heterogeneous model stacks (whether prompt-level or test-time).

Kevin Vicent retweeted

we don't even call them Chinese LLMs, we just call them LLMs

just a glimpse into how Chinese our minds are...

WorldofAI@intheworldofai

China just released a desktop automation agent that runs 100% locally. It can run any desktop app, open files, browse websites, and automate tasks without needing an internet connection. 100% Open-Source.