Will Finger

886 posts

@willfi

Product Designer, Web3 On-chain, AI engineer. Married. Father. Learning love with Jesus.

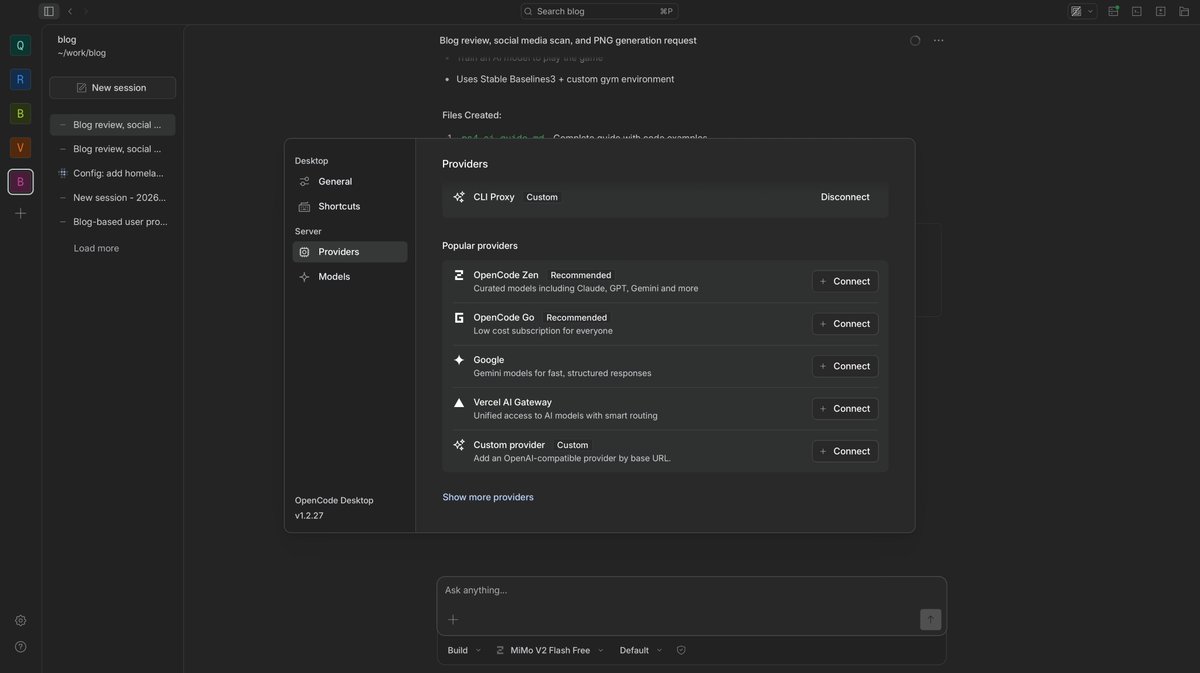

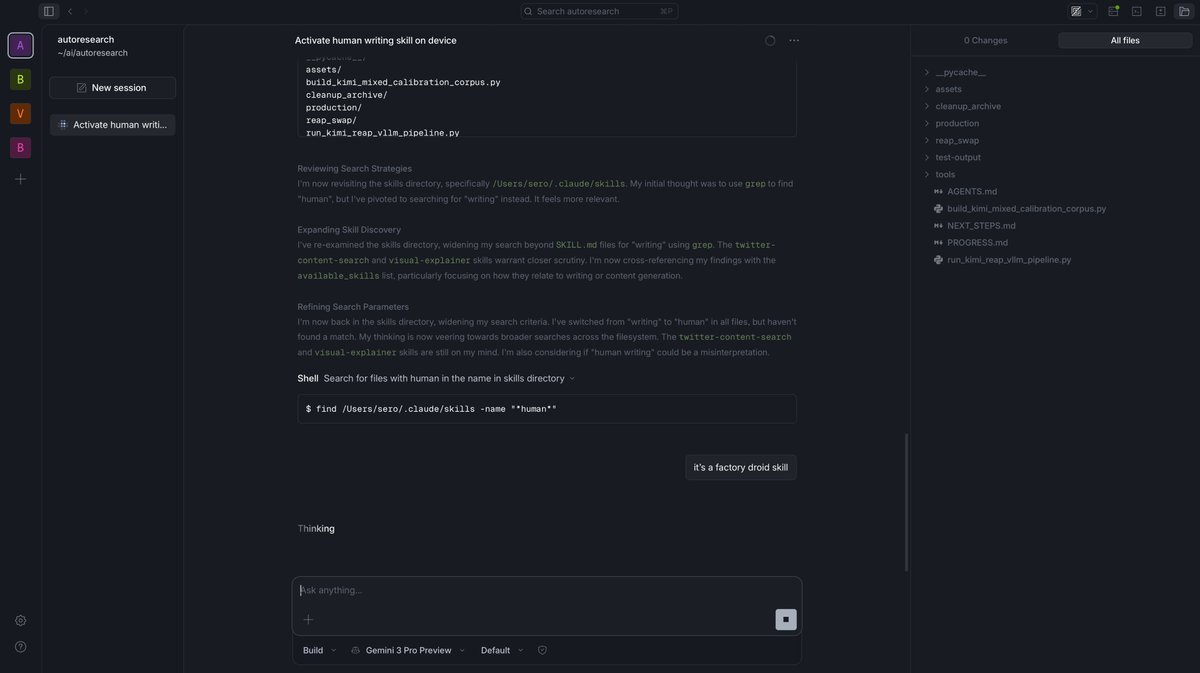

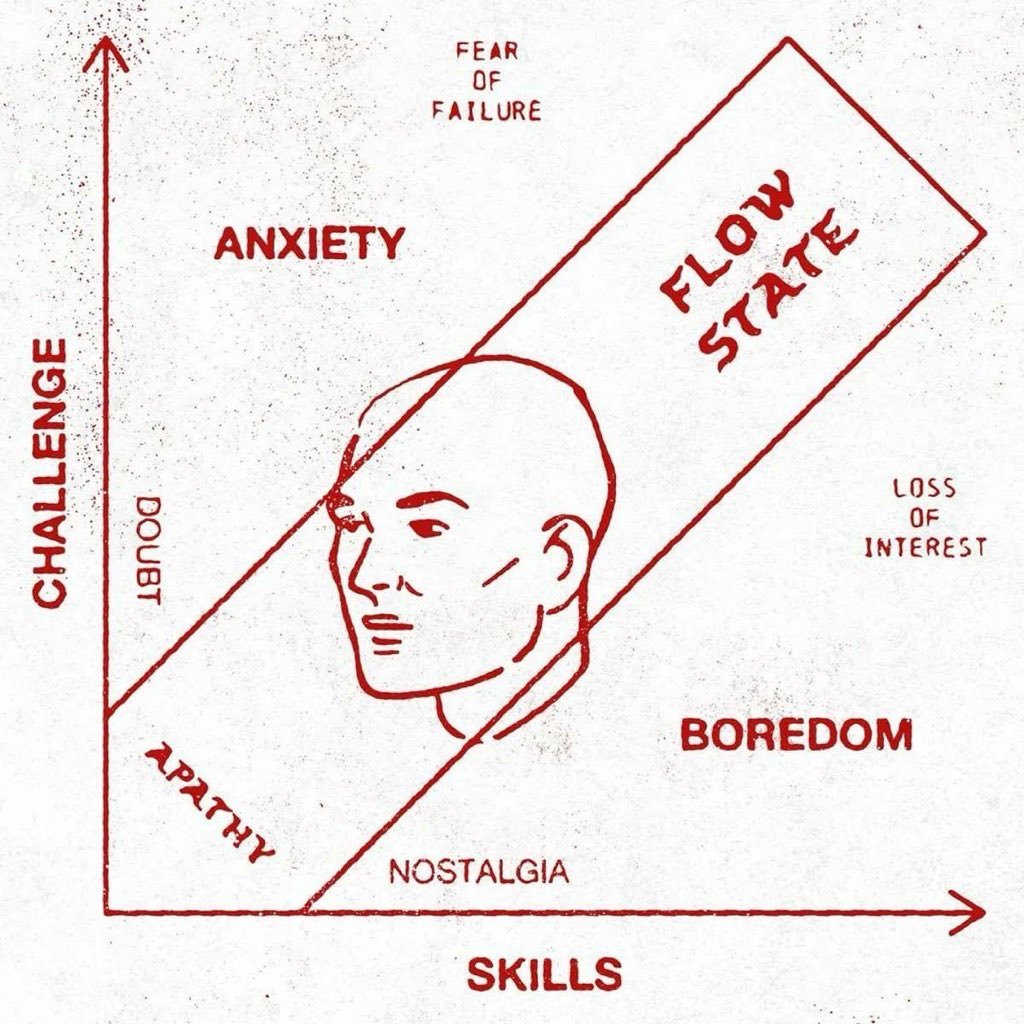

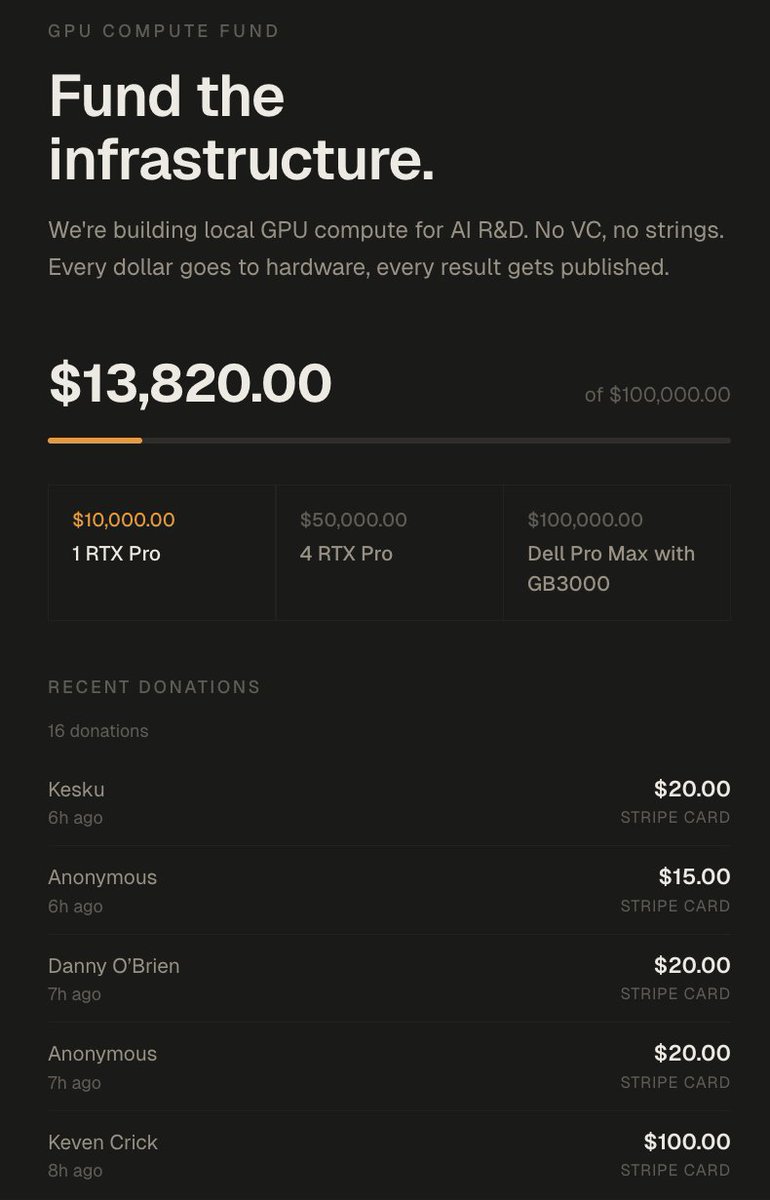

let me get you started in local AI and bring you to the edge.

if you have a GPU or are thinking about diving into the local LLM rabbit hole, the first thing to do before any setup is join x/LocalLLaMA. this is the community that will help you at every step. post your issue and we will direct you, debug with you, and save you hours of work.

once you're in, follow these three:

@TheAhmadOsman, the oracle. this is where you get the latest in infrastructure and AI. if something dropped, you hear it from him first. his content alone will keep you ahead of most.

@0xsero, a one-man army when it comes to model compression, novel quantization research, and new tools and tricks that make your local setup better. you will learn, experiment, and discover things you didn't know existed.

@Teknium, maker of Hermes Agent, the agent from @NousResearch that i use every day. with Teknium you don't just stay at the frontier, you get your hands on the tools before everyone else. this is where things are headed.

if you follow me, follow these three and join the community. you will be ahead of most people in this space. if you run into wrong configs, get stuck debugging hardware, or can't get a model to load, post there so we can help.

get started with local AI now. don't just understand the stack, own your cognition. don't pay OpenAI fees on top of handing over your prompts, your research, and your most valuable thinking to be monitored and metered. buy a GPU and build your own token factory.

What's your AI adoption level? (according to Steve Yegge)