Anotherdev

276 posts

@0xAnotherdev

Physics student at @UdeA and full-stack engineer. Cryptography advocate. Web3 developer at @KolektivoLabs

Medellín, Colombia · Joined August 2021

309 Following · 131 Followers

Anotherdev retweeted

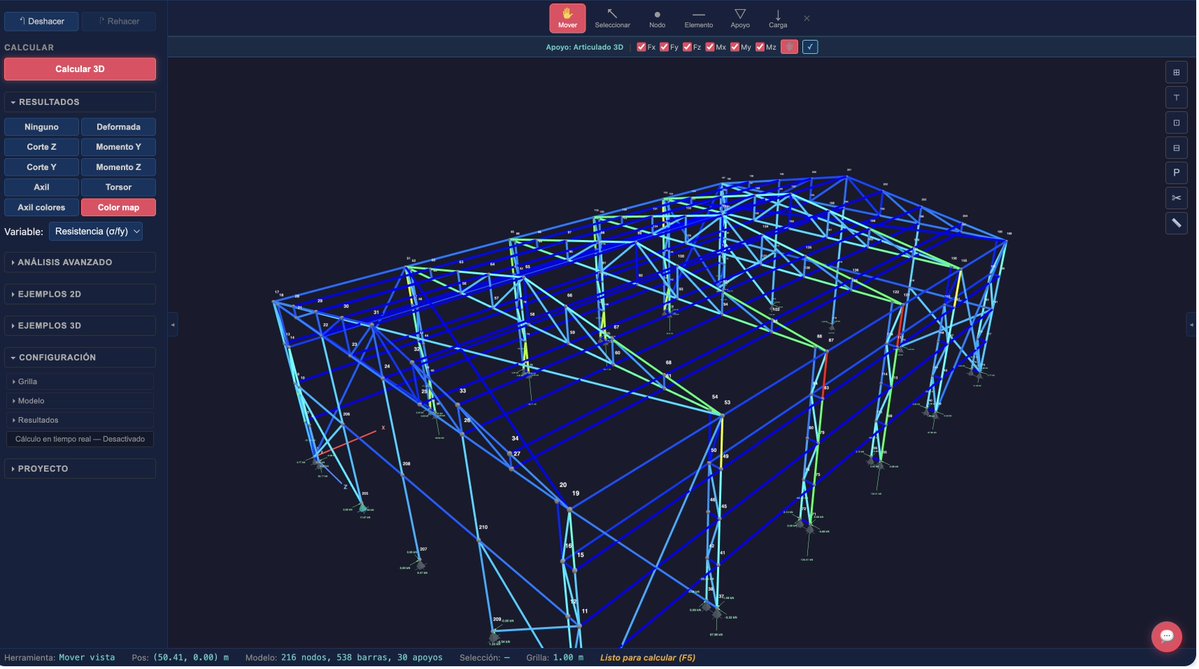

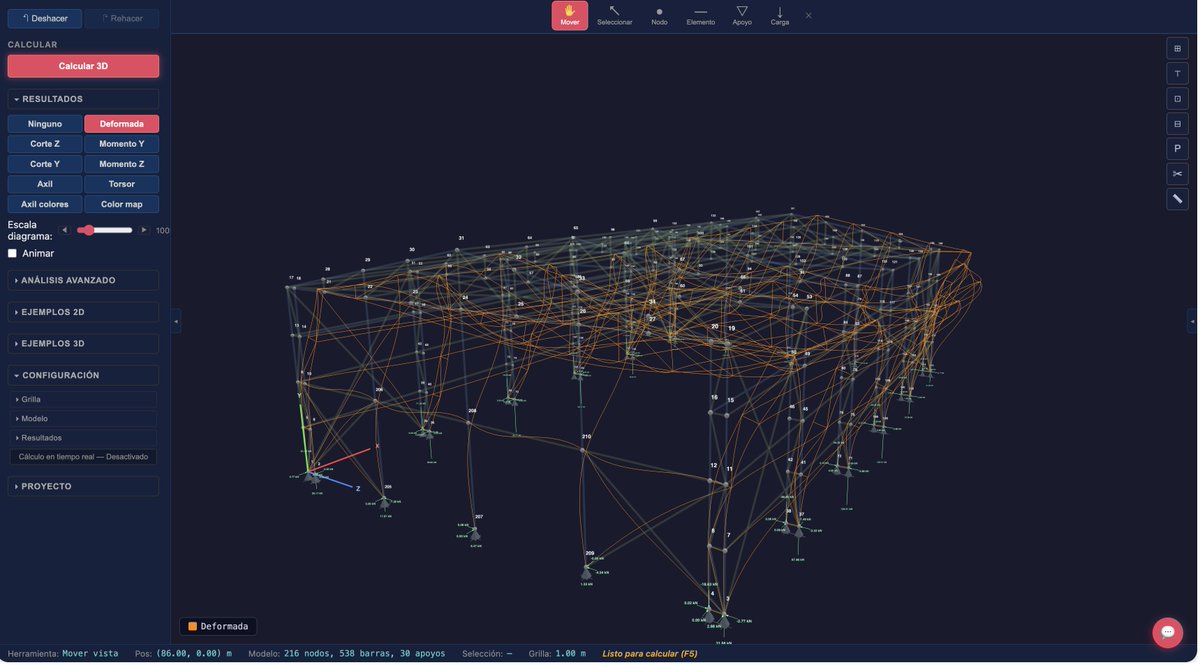

Lambda @class_lambda brings together engineers from every discipline, chemical, structural, civil, mechanical, industrial, with computer scientists, mathematicians, physicists, and experienced software engineers.

We're using that depth, plus LLMs, to ship free alternatives to the tools that have charged $5k to $50k/seat to the engineering profession for decades.

Pull request by pull request.

Anotherdev retweeted

There have recently been some discussions on the ongoing role of L2s in the Ethereum ecosystem, especially in the face of two facts:

* L2s' progress to stage 2 (and, secondarily, on interop) has been far slower and more difficult than originally expected

* L1 itself is scaling, fees are very low, and gas limits are projected to increase greatly in 2026

Both of these facts, for their own separate reasons, mean that the original vision of L2s and their role in Ethereum no longer makes sense, and we need a new path.

First, let us recap the original vision. Ethereum needs to scale. The definition of "Ethereum scaling" is the existence of large quantities of block space that is backed by the full faith and credit of Ethereum - that is, block space where, if you do things (including with ETH) inside that block space, your activities are guaranteed to be valid, uncensored, unreverted, untouched, as long as Ethereum itself functions. If you create a 10000 TPS EVM where its connection to L1 is mediated by a multisig bridge, then you are not scaling Ethereum.

This vision no longer makes sense. L1 does not need L2s to be "branded shards", because L1 is itself scaling. And L2s are not able or willing to satisfy the properties that a true "branded shard" would require. I've even seen at least one explicitly saying that they may never want to go beyond stage 1, not just for technical reasons around ZK-EVM safety, but also because their customers' regulatory needs require them to have ultimate control. This may be doing the right thing for your customers. But it should be obvious that if you are doing this, then you are not "scaling Ethereum" in the sense meant by the rollup-centric roadmap. But that's fine! It's fine because Ethereum itself is now scaling directly on L1, with large planned increases to its gas limit this year and the years ahead.

We should stop thinking about L2s as literally being "branded shards" of Ethereum, with the social status and responsibilities that this entails. Instead, we can think of L2s as being a full spectrum, which includes both chains backed by the full faith and credit of Ethereum with various unique properties (eg. not just EVM), as well as a whole array of options at different levels of connection to Ethereum, that each person (or bot) is free to care about or not care about depending on their needs.

What would I do today if I were an L2?

* Identify a value add other than "scaling". Examples: (i) non-EVM specialized features/VMs around privacy, (ii) efficiency specialized around a particular application, (iii) truly extreme levels of scaling that even a greatly expanded L1 will not do, (iv) a totally different design for non-financial applications, eg. social, identity, AI, (v) ultra-low-latency and other sequencing properties, (vi) maybe built-in oracles or decentralized dispute resolution or other "non-computationally-verifiable" features

* Be stage 1 at the minimum (otherwise you really are just a separate L1 with a bridge, and you should just call yourself that) if you're doing things with ETH or other Ethereum-issued assets

* Support maximum interoperability with Ethereum, though this will differ for each one (eg. what if you're not EVM, or even not financial?)

From Ethereum's side, over the past few months I've become more convinced of the value of the native rollup precompile, particularly once we have enshrined ZK-EVM proofs that we need anyway to scale L1. This is a precompile that verifies a ZK-EVM proof, and it's "part of Ethereum", so (i) it auto-upgrades along with Ethereum, and (ii) if the precompile has a bug, Ethereum will hard-fork to fix the bug.

The native rollup precompile would make full, security-council-free, EVM verification accessible. We should spend much more time working out how to design it in such a way that if your L2 is "EVM plus other stuff", then the native rollup precompile would verify the EVM, and you only have to bring your own prover for the "other stuff" (eg. Stylus). This might involve a canonical way of exposing a lookup table between contract call inputs and outputs, and letting you provide your own values to the lookup table (that you would prove separately).

This would make it easy to have safe, strong, trustless interoperability with Ethereum. It also enables synchronous composability (see: ethresear.ch/t/combining-pr… and ethresear.ch/t/synchronous-… ). And from there, it's each L2's choice exactly what they want to build. Don't just "extend L1", figure out something new to add.

This of course means that some will add things that are trust-dependent, or backdoored, or otherwise insecure; this is unavoidable in a permissionless ecosystem where developers have freedom. Our job should be to make it clear to users what guarantees they have, and to build up the strongest Ethereum that we can.
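
The "EVM plus other stuff" split described in the post above can be illustrated with a toy model. This is only a sketch under loud assumptions: no real proofs are involved, and every name here (`evm_part_is_valid`, `table_is_valid`, `accept_block`, the `0xfeed` address, the byte-reversing feature) is hypothetical and not taken from any Ethereum proposal. It only shows the shape of the idea: the enshrined verifier checks the EVM execution while treating non-EVM calls as reads from a lookup table, and the L2 separately proves that the table's values are correct.

```python
from typing import Callable, Dict, List, Tuple

CallKey = Tuple[str, bytes]           # (callee address, calldata)
LookupTable = Dict[CallKey, bytes]    # call inputs -> claimed call outputs
ExternalCall = Tuple[str, bytes, bytes]  # (callee, calldata, output used by the EVM)


def evm_part_is_valid(calls: List[ExternalCall], table: LookupTable) -> bool:
    """Toy stand-in for the native verifier (hypothetical).

    It does not know how the non-EVM feature works. It only requires that
    every output the EVM execution consumed matches the lookup table.
    """
    return all(table.get((callee, data)) == used for callee, data, used in calls)


def table_is_valid(
    table: LookupTable, own_verifier: Callable[[str, bytes, bytes], bool]
) -> bool:
    """Toy stand-in for the L2's own prover/verifier for the 'other stuff'."""
    return all(own_verifier(callee, data, out) for (callee, data), out in table.items())


def accept_block(
    calls: List[ExternalCall],
    table: LookupTable,
    own_verifier: Callable[[str, bytes, bytes], bool],
) -> bool:
    """A block is accepted only if both halves check out."""
    return evm_part_is_valid(calls, table) and table_is_valid(table, own_verifier)


def reverse_verifier(callee: str, data: bytes, out: bytes) -> bool:
    """Hypothetical non-EVM feature: a call that reverses its input bytes."""
    return out == data[::-1]


if __name__ == "__main__":
    table = {("0xfeed", b"abc"): b"cba"}
    calls = [("0xfeed", b"abc", b"cba")]
    print(accept_block(calls, table, reverse_verifier))
```

In this toy split, a wrong table entry is caught by the L2's own check, while an EVM execution that used a value not present in the table is caught by the native half.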

Anotherdev retweeted

Humanity is completely unprepared for what's coming.

I've been talking with partners, employees, and countless people over the last few weeks. I'm amazed by most people's inability to adapt or even grasp second order effects of what's coming. They don't even want to think about the consequences. Some of the smartest people I've met in my life are trying to avoid accepting the reality: it's very likely we will have tools that are able to do almost everything better than a human in a very short timeline. It's very likely that even those of us who can generally adapt quickly won't be able to overcome this tsunami.

We will experience one of the biggest deflationary shocks in history. Only robotics combined with AI could be bigger than this.

I find it almost absurd to watch so many YouTube channels and X accounts showing you how to create your own simple app or SaaS. If anybody can build things fast, what do you think will happen? Is there demand for thousands of applications of every kind? During the last 20 years some of the smartest people alive battled for attention of people by building software and the limitation was execution costs, speed and distribution.

Right, but I'm forgetting that people say it's not the time of implementation anymore. In theory, we're now in the time of ideas. So what happens when someone has a good idea and anyone can copy it in days?

If implementation becomes worthless, what remains? Perhaps taste, distribution, or maybe in some cases deep domain expertise. But even those moats are eroding fast.

What makes this different from past disruptions is the pace. Previous technological shifts gave people decades to adapt. This one might give them months.

We built this. And yet it feels like it's happening to us, not by us. The strange position of being both the creator and the displaced. This is going to be very sad and fun at the same time. Happy to be alive during this time.

@krichard121212 Crazy, stock market is way more complex than a Lorenz attractor.

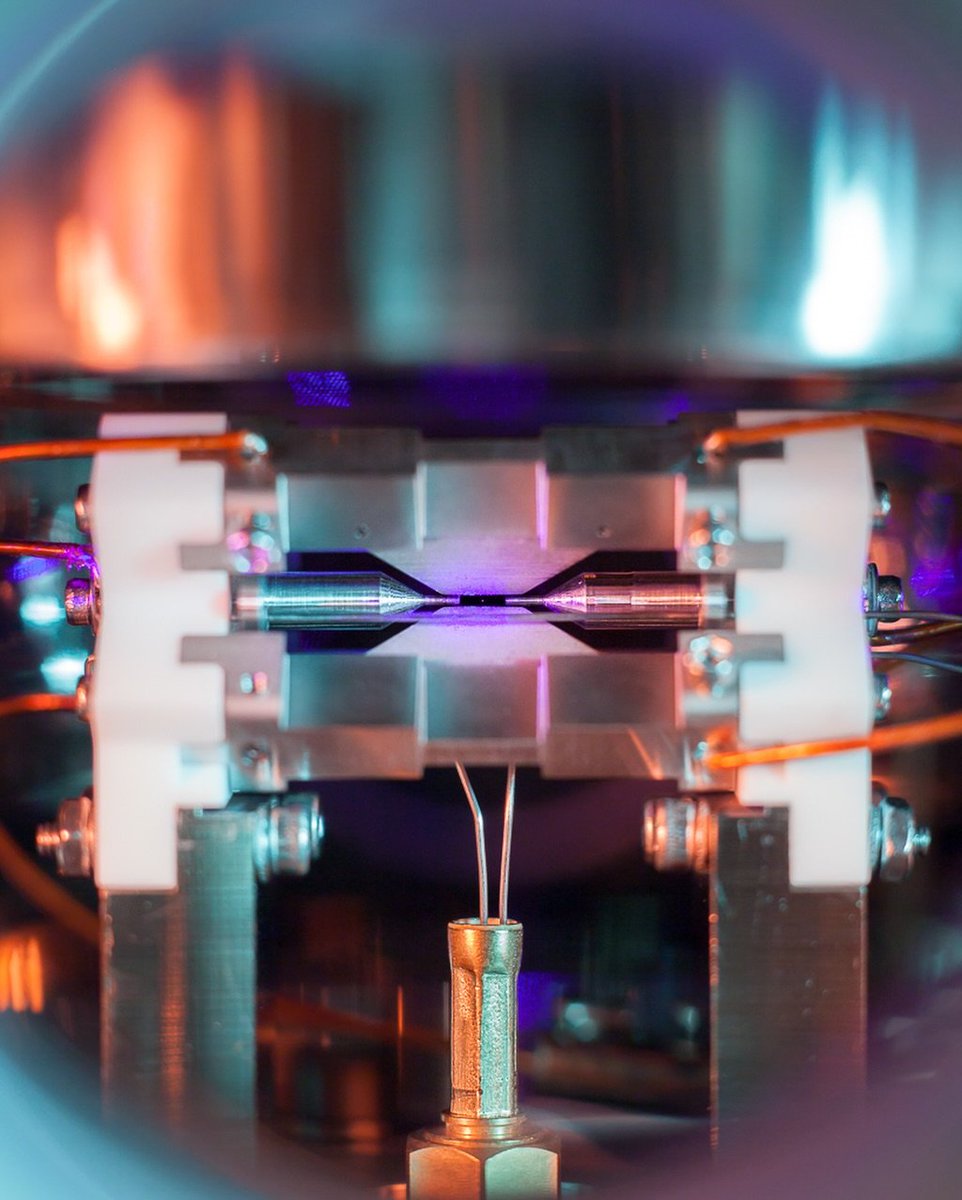

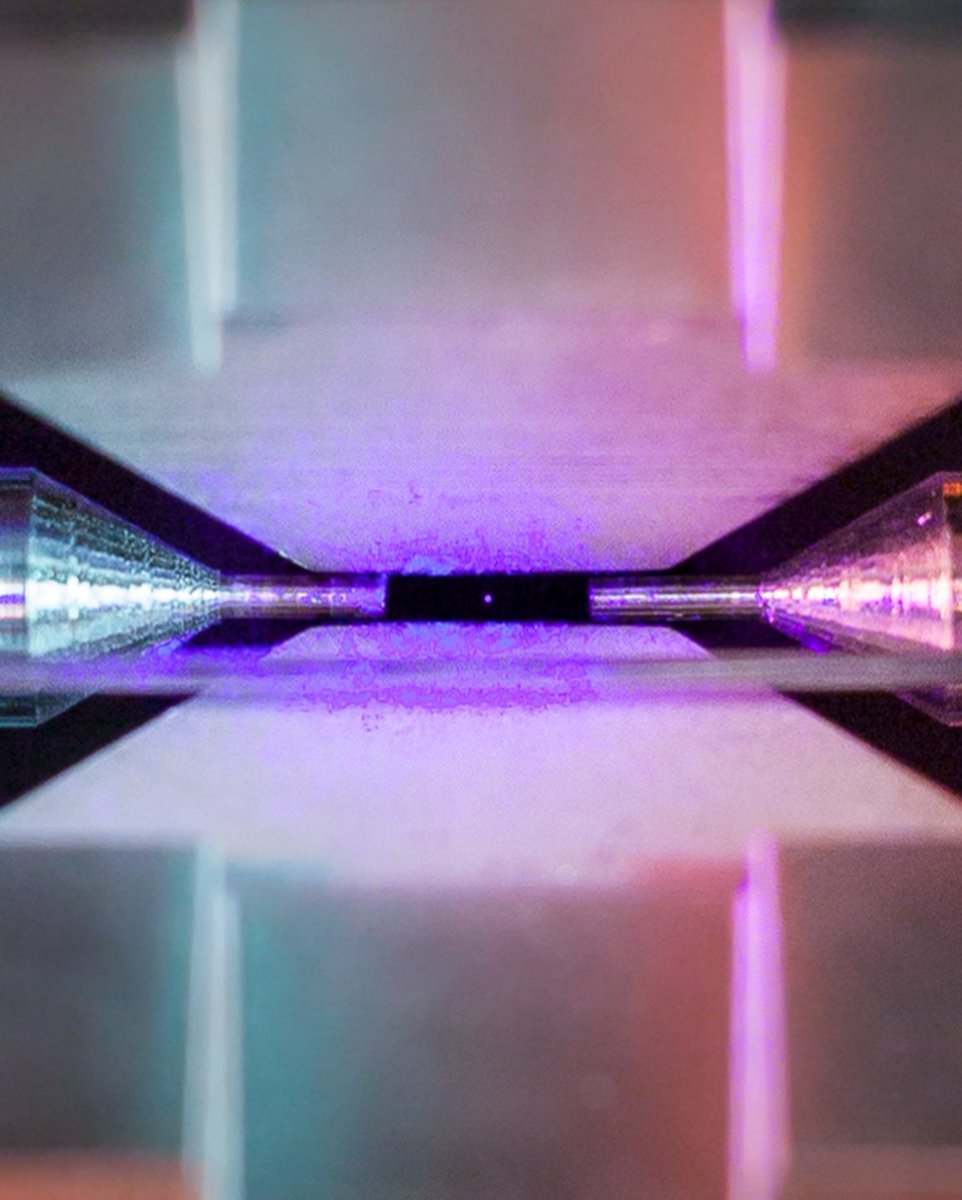

Quantum mechanics is what it is because of this:

ⵣ🇲🇦@riffiya__

1s 2s 2p 3s 3p 4s 3d 4d 5s 6s 4f 5d 6p 7s 5f 6d 7p

@cryptosinomx privacypools.com

And yes, it's a couple of mappings in any smart contract, and a couple of off-chain cryptography circuits ;b

Anotherdev retweeted

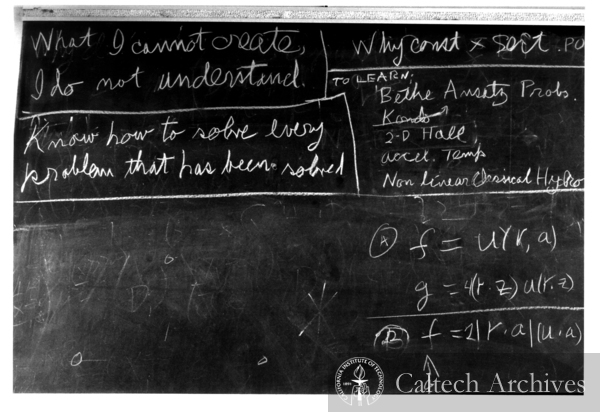

When Richard Feynman died in 1988, his last blackboard bore a curious note to himself: “To learn: Bethe Ansatz.” The Bethe Ansatz, introduced by Hans Bethe in 1931, is a method for finding the spectra of Hamiltonians in quantum integrable systems such as the Heisenberg magnet. For decades it helped produce astonishing results and conjectures, though no one quite knew why it worked.

I will revisit this enduring mystery today at @London_Inst (see the link below). In collaboration with Boris Feigin & Nicolai Reshetikhin, I had reinterpreted the Bethe Ansatz through the lens of the geometric Langlands correspondence in 1994, expressing its spectra in terms of mathematical objects known as opers. This framework led us to powerful generalizations involving q-characters of quantum affine algebras and q-opers, bridging quantum physics and modern geometry.

I will explore these ideas and reflect on why the Bethe Ansatz—an old key to new symmetries—still stood at the top of Feynman’s list.

Anotherdev retweeted

Maryna Viazovska, a mathematician from Kyiv, was awarded the Fields Medal in 2022—one of the most prestigious honors in mathematics—for solving the problem of sphere packing in eight-dimensional space.

Notably, before her breakthrough, the problem had only been solved in three dimensions, and that solution spanned 300 pages. Viazovska’s proof, in contrast, was just 23 pages long and stood out for its remarkable elegance.

She also holds the distinction of being only the second woman ever to receive the Fields Medal.

Anotherdev retweeted

@garosan1 My god, books are so expensive, and I don't like reading PDFs :(

Anotherdev retweeted

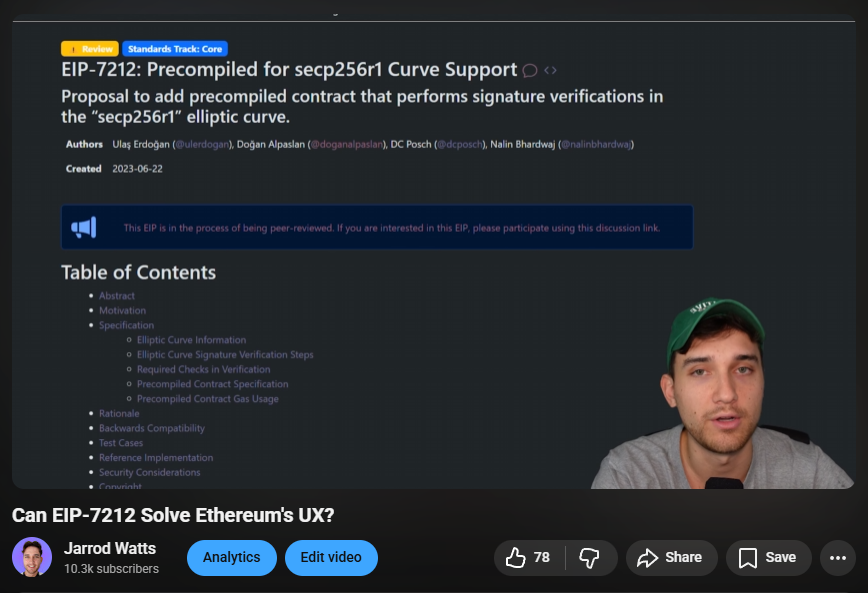

If you're interested in a nerdy deep dive, I made a video covering this a few years back (when it had a different eip name)

youtube.com/watch?v=HVlHfu…

Anotherdev retweeted

I’m humble enough to admit you can replace me with AI.

But I’m confident enough to inform you that it’ll cost you more.

Ahmad@TheAhmadOsman

people that think like this still exist btw and theyʼre in for a big surprise sadly