Davide Buffelli retweeted

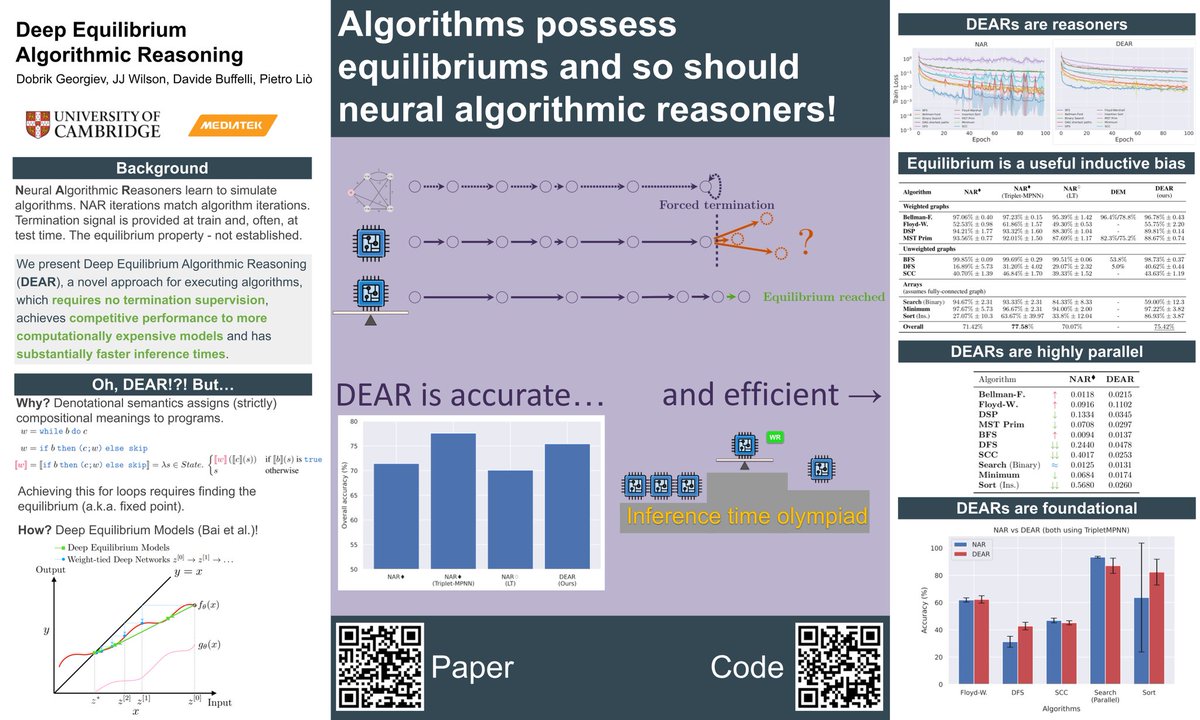

I will be presenting our paper on deep equilibrium algorithmic reasoning (poster session 3).

Feel free to swing by, say hi, have a coffee with me between sessions, or suggest good locations for a photo.

#NAR #NeurIPS2024 #NeurIPS
