Just before going to sleep, I'd like to make one small request of you CEOs: PLEASE ban alcohol at the Paris Major. A lot of people can no longer behave themselves after the slightest drop of alcohol. Some of us, like me, have been waiting for a CoD event to come back to France for pretty much more than 10 years, and alcohol can so easily ruin everything from one minute to the next. So pls, this is the ONLY request I have for you! Thanks in advance! 🙏🏼 @gentlemates @Gotaga @xSqueeZie @BrokyBrawks @Nikof 🩷🩷 #M8WIN #GentleMates

Honestly, if you were the chosen one, what would you change in the first months of your new life?

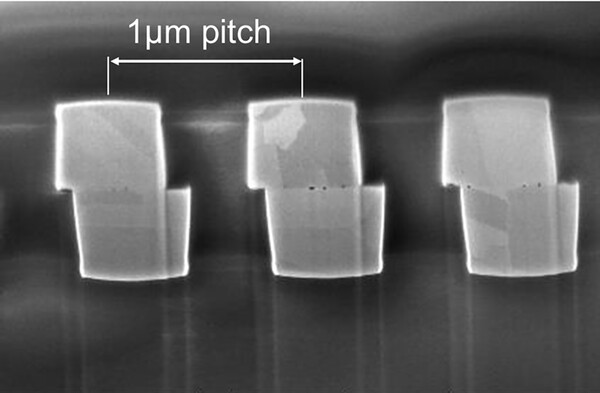

Nvidia's AI chips are consuming memory at an unprecedented pace: the most recent Rubin chip from Nvidia, $NVDA, now requires 288GB of RAM. This is 800% more than the memory of a high-end PC, and 2,300% more than that of a high-end smartphone. By comparison, the H100, launched 4 years ago, needed 80GB of RAM, or 72% less. In other words, each new generation of Nvidia AI chips requires significantly more memory than the last, putting enormous strain on global supply. Furthermore, AI giants like Alphabet, $GOOGL, and OpenAI are locking up large portions of the global memory chip supply by purchasing millions of Nvidia AI chips. As a result, average spot prices for 16GB DDR4 RAM are up 2,352% YoY to a record $76.90, while 8GB DDR4 prices are up 1,873% YoY, to an all-time high of $28.90. The global memory chip shortage is out of control.
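
The percentages quoted above are plain ratio arithmetic, and can be checked with a minimal sketch. The 32GB PC and 12GB smartphone baselines are assumptions: the post does not state them, and they are simply the values that would make the quoted 800% and 2,300% figures come out. The helper names are hypothetical.

```python
def pct_more(new: float, base: float) -> float:
    """Percent by which `new` exceeds `base`."""
    return (new / base - 1) * 100


def pct_less(old: float, new: float) -> float:
    """Percent by which `old` falls short of `new`."""
    return (1 - old / new) * 100


def implied_prior_price(price_now: float, pct_up: float) -> float:
    """Year-ago price implied by a current price and a YoY percent increase."""
    return price_now / (1 + pct_up / 100)


RUBIN_GB = 288   # from the post
H100_GB = 80     # from the post
PC_GB = 32       # ASSUMPTION: baseline not stated in the post
PHONE_GB = 12    # ASSUMPTION: baseline not stated in the post

if __name__ == "__main__":
    print(f"Rubin vs PC:    +{pct_more(RUBIN_GB, PC_GB):.0f}%")
    print(f"Rubin vs phone: +{pct_more(RUBIN_GB, PHONE_GB):.0f}%")
    print(f"H100 vs Rubin:  -{pct_less(H100_GB, RUBIN_GB):.0f}%")
    # Year-ago spot prices implied by the quoted YoY moves (derived, not quoted).
    print(f"16GB DDR4 a year ago: ${implied_prior_price(76.90, 2352):.2f}")
    print(f"8GB DDR4 a year ago:  ${implied_prior_price(28.90, 1873):.2f}")
```

Note that "X% more" is measured against the smaller baseline while "X% less" is measured against the larger one, which is why 288GB vs 80GB reads as 72% less rather than 260% less.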

🇫🇷⛽️ INCREDIBLE GLITCH AT THE PUMP! Carrefour Market station in Breuillet (Essonne): a liter of gasoline was displayed at €0.01 (1 cent) because of a data-entry error! Immediate rush: endless lines, jerrycans pulled out of car trunks, total chaos within minutes.