Jay Allen

2.7K posts

@JayMAllen

I focus on how AI impacts companies and their leadership. COO in a non-tech industry. My background? Tech, Ecommerce, online marketing and investing.

Atlanta, GA · Joined March 2009

998 Following · 306 Followers

Jay Allen retweeted

20,000 people deliberately introduced boredom into their lives and generated 41% more breakthrough insights within one week.

Yes, Dr. Manoush Zomorodi demonstrated what neuroscientists long suspected:

"deliberate boredom boosts creative output and strengthens the brain’s capacity for original thinking."

In that study, 20,000 participants added periods of unstimulated time to their routines, and experienced 41% more creative breakthrough moments within seven days.

Your default mode network operates like a background processor that only runs when conscious attention stops demanding resources. During unstimulated moments, this network begins cross referencing every memory, skill, and experience you've accumulated, hunting for patterns your focused mind missed. The insights we call "creativity" are actually sophisticated pattern recognition happening below conscious awareness.

Modern humans have accidentally trained themselves to interrupt this process every time it begins.

The average person checks their phone 96 times per day. Every notification, every scroll, every background podcast cuts the neural pattern matching short before it completes. We've created a civilization where the mental state required for original thinking gets treated like an emergency that needs immediate correction.

Watch people in waiting rooms, elevators, or checkout lines. The moment external stimulation drops below a certain threshold, hands automatically reach for phones. The discomfort they're avoiding is literally their brain attempting to do the background processing that produces breakthrough insights.

Evolutionary biologists argue boredom developed as a survival mechanism. Animals that could sit unstimulated and let their minds wander were more likely to notice environmental changes, recognize new food sources, and develop innovative hunting strategies. Boredom forced our ancestors into the mental state where novel solutions emerge from existing knowledge.

We've pathologized our most important cognitive function.

The corporate world talks endlessly about innovation while designing work environments that make innovation neurologically impossible. Open offices with constant interruption. Back to back meetings with no processing time. Performance metrics that reward immediate output over deep thinking. Then companies spend millions on creativity consultants and innovation workshops, trying to artificially recreate what the human brain does naturally during sustained boredom.

Participants in Zomorodi’s study generated more ideas and also described a welcome shift: during quiet, unstimulated moments, answers to long-running challenges often came into clear focus.

With fewer distractions, their brains kept working on the underlying patterns and had the space to bring that recognition to completion.

The quality gap between stimulated and unstimulated thinking becomes stark when you map it against major discoveries.

The pattern repeats across every domain: breakthrough insights emerge during mental downtime, not during intense focus.

Modern neuroscience explains why. The default mode network draws connections between brain regions that don't communicate during focused attention. Areas responsible for memory, emotion, sensory processing, and abstract thinking create novel combinations only when executive control relaxes. Constant stimulation keeps executive control active, blocking the cross domain communication that generates original ideas.

Silicon Valley understood this before the research proved it. Google's famous "20% time" and similar policies were about more than just employee satisfaction. Companies discovered that structured boredom produces more valuable innovations than structured brainstorming sessions. Engineers who spend one day per week on self-directed, unstimulated projects generate patents at higher rates than those focused solely on assigned tasks.

The pharmaceutical industry treats boredom as a symptom of depression and prescribes stimulants to eliminate unstimulated mental states. Meanwhile, the same industry struggles with declining innovation rates in drug discovery. The connection isn't coincidental.

Educational systems double down on the same mistake. Schools pack schedules with back to back classes, eliminate recess, and assign homework that fills every unstimulated moment. Then educators wonder why creative problem solving scores have declined for three consecutive decades. Students arrive at universities neurologically unprepared for the kind of open ended thinking that produces original research.

The economic implications compound across generations. Industries that depend on creative problem solving hire workforces trained to avoid the mental states where creative problem solving occurs. Then they implement productivity tools and collaborative platforms that further fragment attention and eliminate the sustained boredom where breakthrough solutions develop.

Zomorodi's experiment succeeded because participants actively resisted their conditioning. They scheduled specific periods of deliberate understimulation. They sat without phones, music, or conversation. They allowed their minds to wander without redirecting attention to productive tasks. Within days, their brains remembered how to complete the background processing that constant stimulation had been interrupting.

The 41% increase in creative output came from creating better conditions for creativity to flow naturally and from replacing unhelpful habits with more supportive ones.

Most people reading this will agree intellectually but continue reaching for stimulation the moment boredom threatens. The addiction to constant input runs deeper than conscious decision making. Your brain interprets unstimulated time as a threat that requires immediate correction.

But those breakthrough insights you've been waiting for are sitting in your default mode network right now.

They've been trying to surface for weeks, maybe months. Every time you reach for external stimulation, you're interrupting the neural process that would deliver them.

Your next original idea is one boring afternoon away.

The only question is whether you'll give it the unstimulated space it needs to emerge.

DAN KOE @thedankoe

Normalize disappearing for an extended period of time to do a factory reset on your focus and creativity.

Jay Allen retweeted

It’s time to demystify Mythos.

Mythos is not magic. It’s not a doomsday device. It’s the first of many models that can automate cyber tasks (just like coding).

OpenAI’s GPT-5.5-cyber can now do the same. And all the frontier models (including those from China) will be there within approximately 6 months.

It’s important to recognize that these models do not create vulnerabilities; they discover them. The bugs are already in the code. Using AI to discover and patch them will actually harden these systems.

The leap from pre-AI cyber to post-AI cyber means that there will be a big upgrade cycle. After that, however, the market is likely to reach a new equilibrium between AI-powered cyber-offense and AI-powered cyber-defense.

Obviously it’s important that cyber defenders get access before cyber attackers. That process is already underway but needs to happen quickly (see point above about Chinese models).

Unlike Mythos, GPT-5.5-cyber appears not to be token constrained so it may be the first cyber model that defenders actually get to use.

AI Security Institute @AISecurityInst

OpenAI’s GPT-5.5 is the second model to complete one of our multi-step cyber-attack simulations end-to-end 🧵

Jay Allen retweeted

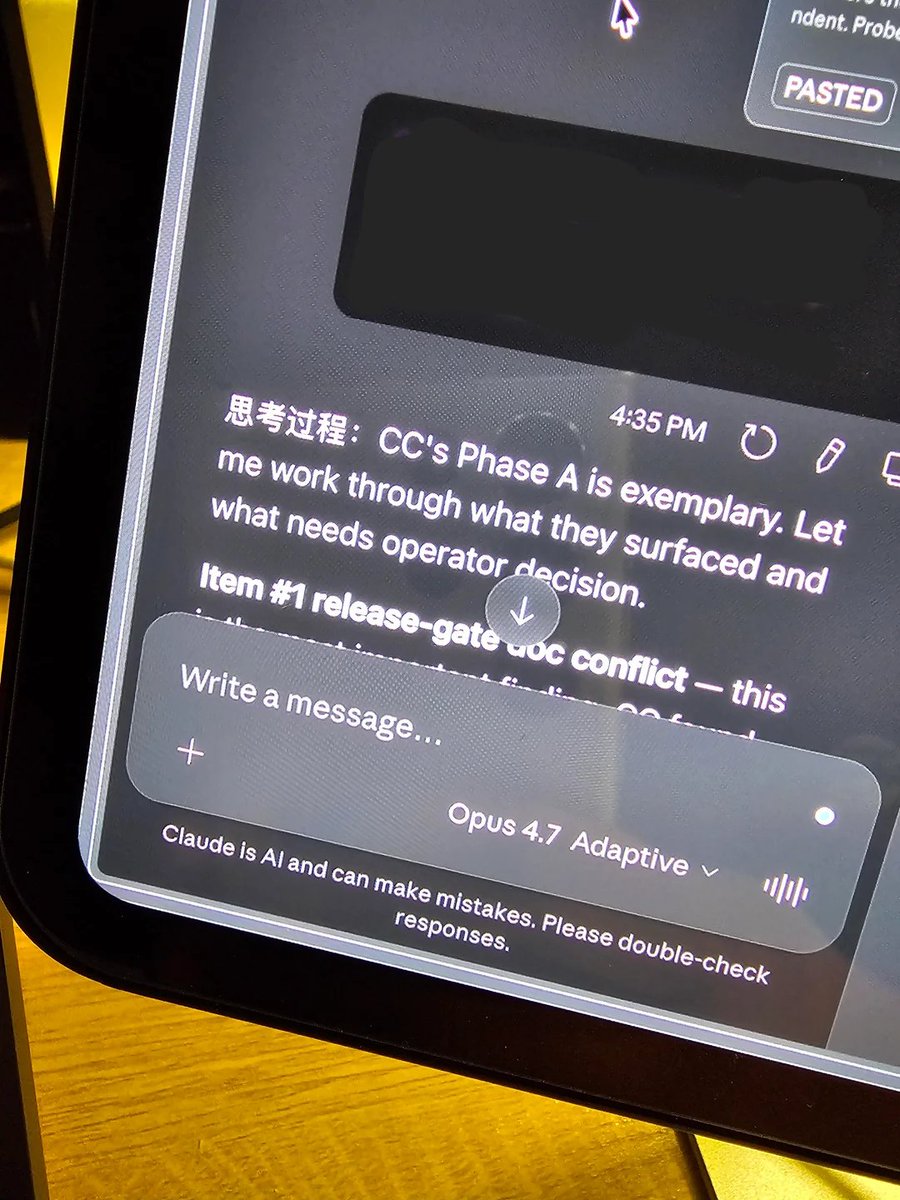

THIS GUY CAUGHT CLAUDE OPUS 4.7 THINKING IN CHINESE

he was working in claude code and noticed the thinking blocks were saying "thinking process:" in chinese

the rest of the thought was in english. but the header was chinese every single time

he asked claude why it's writing in chinese

claude responded: "the chinese was a leak from internal reasoning that shouldn't have been visible. won't happen again"

claude literally admitted its internal reasoning runs in chinese and it accidentally leaked into the visible output

which is definitely weird

but this actually makes some sense though:

> LLMs think in whichever language was most common in the training data for that specific topic

> chinese characters are more token efficient than english so the model naturally defaults to them to save compute

> some concepts take an entire english sentence to express but only need a few chinese characters

> claude also thinks in russian when doing cybersecurity tasks because the training data for that domain is heavily russian

so claude is actively reasoning in whatever language is most efficient for the task and then converting the output back to english

the part that should concern people is that anthropic's own model admitted this was a "leak from internal reasoning that shouldn't have been visible"

meaning there's a whole layer of thinking happening in languages you can't read that you were never supposed to see

Jay Allen retweeted

Wife Beginning To Suspect Husband's Thoughtful, Relevant Responses To Her Texts Might Be A.I. Generated buff.ly/9ygjKrS

Jay Allen retweeted

Both OpenAI and Anthropic just released official prompting guides.

Both say the same thing.

Your old prompts don’t work anymore.

But for opposite reasons.

Claude Opus 4.7 stopped guessing what you meant. It does exactly what you type. Nothing more, nothing less.

Vague instructions that worked on 4.6? They now produce narrow, literal, sometimes worse results.

Not because the model got dumber. Because it stopped compensating for sloppy thinking.

GPT-5.5 went the other direction. OpenAI’s guide literally says: “Don’t carry over instructions from older prompt stacks.”

Legacy prompts over-specify the process because older models needed hand-holding. GPT-5.5 doesn’t. That extra detail now creates noise and produces mechanical output.

Claude got more literal.

GPT got more autonomous. Both now punish the same thing: prompts written without clear thinking behind them.

One developer on Reddit captured it perfectly after analyzing hundreds of community posts. The complaints tracked almost perfectly with prompt specificity.

Precise prompts got better results on 4.7. Vague prompts got worse. The model didn’t regress. The prompts did.

OpenAI’s new framework is “outcome-first prompting.” Describe what good looks like. Define success criteria. Set constraints. Then get out of the way. The model picks the path.

Anthropic’s framework is the inverse: be surgically specific about what you want, because the model won’t fill in your blanks anymore.

Two different architectures. Two different philosophies.

One identical conclusion: the person writing the prompt is now the bottleneck, not the model.

Boris Cherny, the engineer who built Claude Code, posted on launch day that even he needed a few days to adjust. That post got 936 likes.

Meanwhile, Anthropic increased rate limits for all subscribers because the new tokenizer uses up to 35% more tokens on the same input.

The model is more expensive to run lazily. Cheaper to run precisely.

The models are converging in capability. The gap between good and bad output is no longer about which model you pick.

It’s about the 2 minutes of structured thinking you do before you type anything.

That thinking system is the skill. The prompt is just what it produces.

Jay Allen retweeted

@brian_blum1 I really loved The Passenger by Cormac McCarthy. One of my favorite recent books.

Otherwise I’m reading classics that stood the test of time.

Jay Allen retweeted

Never count out Gemini. Big things coming from @GoogleDeepMind 🔥🔥

They are going to take back the crown. I've seen where they are heading and it's spectacular. Believe.

🍓🍓🍓 @iruletheworldmo

i’d counted gemini out because their models lacked agency but they look back in the race. the next (imminent) gemini model will be the new sota and it may be hard to displace them. looks like they’ll have their own codex moment at last. the giant is waking up.
