joce

5.6K posts


@StackOverJoce

Husband, father of 2, works in IT - Turns yaml into AWS bills

Tours, France · Joined April 2008

1K Following · 245 Followers

@isalafont Maybe I didn't express myself very well either ^^

By "too dumb or lazy" I meant that it wasn't working the way it usually does, and since I hadn't noticed which mode it was in, that obviously didn't help ;)


I worked with Claude Code all afternoon and evening, and I could tell it wasn't in top form...

You'd think it had gotten lazy (or even dumb, really dumb), since I had to hand it all the code documentation before it could understand anything...

It's now 00:11 and I've just realized that the whole time it was set to Sonnet 4.6 🫣


joce reposted

🚨 LAST CALL for ingress-nginx!

If you haven't migrated your workloads yet, best-effort maintenance ends at the end of March.

Read the official statement:

🔗 kubernetes.io/blog/2026/01/2…


joce reposted

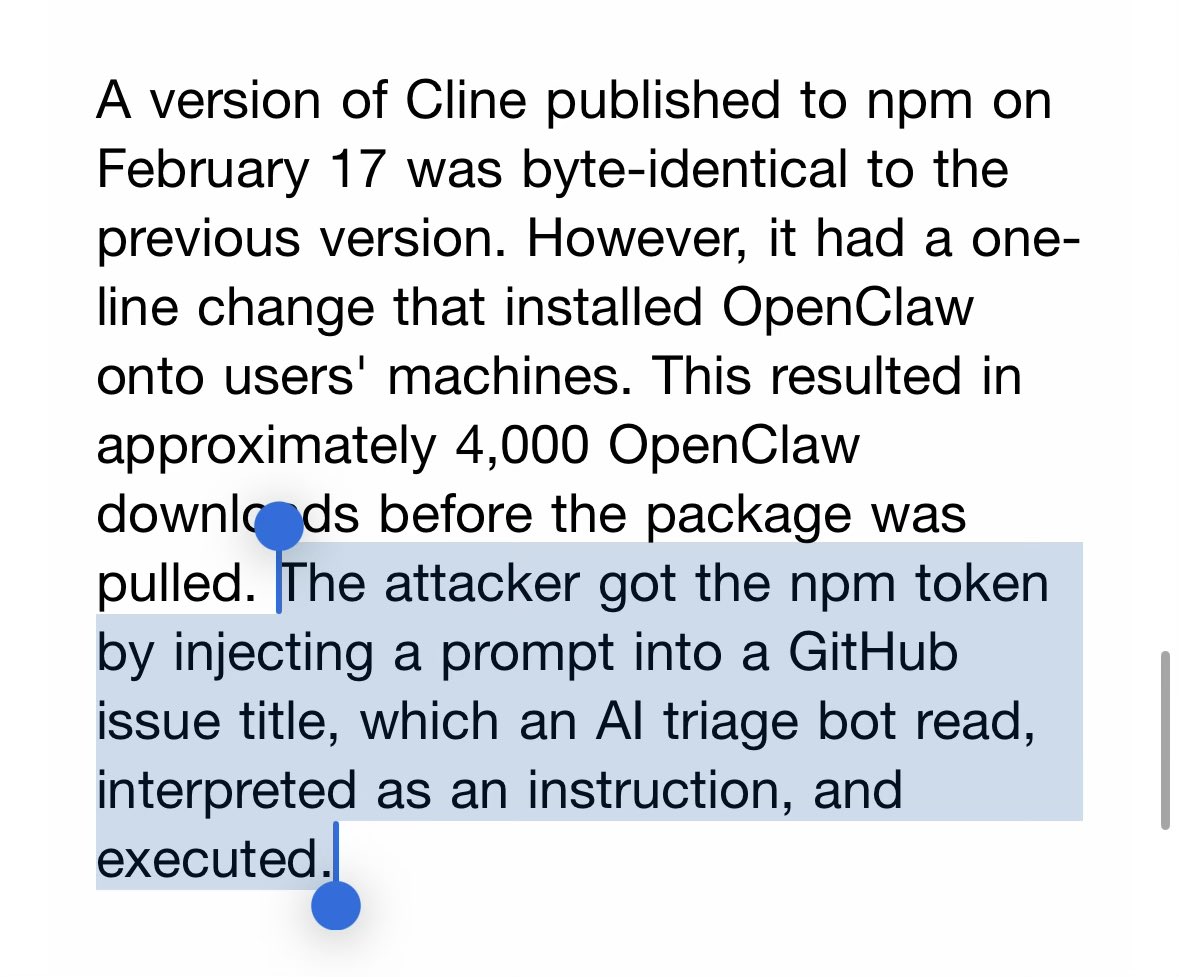

🛑 ALERT - Trivy, a popular open-source vulnerability scanner, was compromised after attackers hijacked 75 version tags in #GitHub Actions to deliver an infostealer.

The malicious code ran in CI pipelines, stealing credentials and tokens, then exfiltrating the data or staging it via stolen GitHub personal access tokens (PATs).

🔗 Attack flow, impacted versions, fixes → thehackernews.com/2026/03/trivy-…
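Version tags in GitHub Actions are mutable Git refs, which is what made a tag hijack like this possible; GitHub's own security hardening guidance recommends pinning third-party actions to a full-length commit SHA instead. The Python sketch below flags `uses:` references that are not pinned that way. It is a hypothetical helper (`unpinned_actions` is not part of Trivy or any GitHub tool), it uses a simple line-based regex rather than a real YAML parser, and the action names in the example workflow are placeholders.

```python
import re

# Assumption: a ref pinned to a full 40-character hex commit SHA is treated as
# immutable; tags like "@v1" or branches like "@main" can be repointed.
SHA_PIN = re.compile(r"^[0-9a-f]{40}$")
# Line-based match for "uses: owner/repo@ref" (with or without a leading "- ").
USES_LINE = re.compile(r"^\s*-?\s*uses:\s*['\"]?([^'\"\s#]+)")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return every `uses:` reference whose ref is not a full commit SHA."""
    flagged = []
    for line in workflow_text.splitlines():
        match = USES_LINE.match(line)
        if not match:
            continue
        ref = match.group(1)
        # Local actions and Docker images are out of scope for this check.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not SHA_PIN.match(version):
            flagged.append(ref)
    return flagged


# Hypothetical workflow; the action names are placeholders.
WORKFLOW = """
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: example-org/build-action@0123456789abcdef0123456789abcdef01234567
      - uses: example-org/scanner-action@v1
      - uses: ./local-action
"""

if __name__ == "__main__":
    print(unpinned_actions(WORKFLOW))  # ['example-org/scanner-action@v1']
```

Pinning to a SHA does not make an action trustworthy by itself; it only guarantees that the code you reviewed is the code that runs.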


joce reposted

Farewell! He deserved an Oscar for that line alone.

Mediavenir @Mediavenir

🇺🇸 ALERT - American actor Chuck Norris has died at the age of 86. (TMZ)


joce reposted

🇫🇷 @MistralAI just made 4 huge announcements. And nobody in France is talking about it (as usual).

The Americans, on the other hand, are reeling.

So allow me to fix that.

1/ Small 3 → Small 4: a model that brings together ALL of Mistral's know-how. Open source. Free. Mixture of Experts. Reasoning + multimodal + code. XXL context window. Apache 2.0 license = ultra-permissive.

It's the new world champion of open-source AI.

2/ Mistral joins the Nemotron coalition (NVIDIA), alongside Black Forest Labs and some of the best open-source AI companies on the planet. There's only one French seat in this elite coalition.

That seat is Mistral's.

3/ LeanMistral: a model dedicated to formal proofs in math, science, and rigorous reasoning. The AI that doesn't make mistakes, and can prove it.

For the credibility of AI in the enterprise, it's a game changer.

4/ Mistral Forge: no more artisanal fine-tuning or separate databases. Any company can now build its own model, trained on its own data and specialized for its own business.

Hundreds of hyper-specialized AIs are going to emerge. They'll all have Mistral in their veins.

The future of AI isn't necessarily the biggest proprietary model behind a paywall.

It may well be open-source, free AI, everywhere, in every piece of software and every service: a true technology commodity.

And the champion shaping that future?

It's French. Its name is Mistral.

What do you think?

#IA #AI #IAGen #LLMs #MBADMB #OpenSource #FrenchTech



joce reposted

@DrBall88 @Frontieresmedia @KekeRachel There's nothing wrong or shameful about cleaning offices. It's useful work.


@Frontieresmedia @KekeRachel ahhahahahahahahahah cry! Come on, chop chop, back to cleaning offices


Atlassian just confirmed 1,600 layoffs with 900+ coming from engineering

But I'm hearing the real story from inside

Sources say they've been running "knowledge extraction sprints" for 6 months - recording every senior engineer's screen, logging their prompts, documenting their debugging workflows

One architect told me they made him walk through his entire microservices decision tree while they filmed it. Called it "knowledge transfer for the transition team"

The transition team? 47 contractors in Bangalore with access to his recorded sessions and a Claude Enterprise subscription

Same architect just found out his replacement starts Monday. Guy makes $28k annually and ships code 40% faster using the exact prompt libraries they extracted

They're not just cutting headcount - they're systematizing 15 years of engineering expertise into training data

The "strategic AI focus" isn't about building AI products

It's about replacing their entire engineering culture with agents trained on their senior engineers' knowledge

Word is the CTO replacement already has the playbook: extract, document, offshore, automate

If you're still there and they ask you to "document your processes for the team" - RUN

The knowledge extraction is complete


@Shirleyyych @sojetski Do you really know these concepts in IT if you've never implemented them or deployed them to production?


@Shirleyyych @akaCharlieC Hello,

Happy to show you my structure if you help me make it scale.


joce reposted

🚨 BREAKING: researchers planted a single bad actor inside a group of LLM agents. the whole network failed to reach consensus.

this is the Byzantine Generals Problem. a 40-year-old distributed systems nightmare.

and it's now your agent pipeline's problem too.

in fully benign settings, with zero bad actors, LLM agents still fail to converge on shared values. and it gets worse as you add more agents to the group.

the failure mode is revealing. it's not subtle value corruption. it's not one agent sneaking in a wrong answer. the models just... stall. they time out. they go in circles. the conversation never lands on agreement.

this matters because the entire multi-agent AI hype assumes coordination works. autonomous agent swarms, collaborative problem-solving, decentralized AI systems. all of it assumes that if you put multiple LLMs in a room and give them a protocol, they'll converge on a shared decision.

Byzantine consensus is one of the oldest, most studied problems in distributed systems. classical algorithms solved it decades ago with strict mathematical guarantees. the question was whether LLM agents could achieve the same thing through natural language communication instead of formal protocols.

the answer, at least for now, is no. and the reason is worth sitting with.

traditional consensus algorithms work because every node follows an identical deterministic protocol. LLMs are stochastic. the same prompt produces different outputs across runs. an agreement that holds in round 3 can dissolve in round 4 as agents revise their reasoning after seeing peer responses.

this is the fundamental mismatch: consensus protocols assume deterministic state machines. LLMs are the opposite of that.
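The strict guarantees classical algorithms give have a precise shape: with unsigned (oral) messages, Lamport, Shostak and Pease showed that n nodes can reach agreement despite f Byzantine nodes only if n ≥ 3f + 1. A minimal Python sketch of that arithmetic follows. The function names are illustrative, and the bound describes deterministic protocol-following nodes; it says nothing about LLM agents, which is exactly the open question here.

```python
def max_byzantine_faults(n: int) -> int:
    """Largest f with n >= 3f + 1: faults tolerable by n nodes (unsigned messages)."""
    if n < 1:
        raise ValueError("need at least one node")
    return (n - 1) // 3


def min_nodes_for(f: int) -> int:
    """Smallest n that can tolerate f Byzantine nodes under the same bound."""
    if f < 0:
        raise ValueError("f must be non-negative")
    return 3 * f + 1


if __name__ == "__main__":
    for n in (3, 4, 7, 10):
        # 3 -> 0, 4 -> 1, 7 -> 2, 10 -> 3
        print(n, "nodes tolerate", max_byzantine_faults(n), "Byzantine node(s)")
```

So three nodes with one traitor is already unsolvable in the classical model, which is the textbook case of the problem.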

it also means that "more agents = better answers" has a ceiling nobody's measuring. at some group size, coordination overhead and convergence failures outweigh any benefit from diverse perspectives.
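One part of that coordination overhead is simple arithmetic. Assuming a naive all-to-all protocol in which each of n agents sends its answer to every other agent every round (a hypothetical setup, not necessarily the one used in the research), messages per round grow as n(n - 1), while each agent has to read n - 1 peer responses before revising its reasoning. The function names below are illustrative.

```python
def messages_per_round(n_agents: int) -> int:
    """Messages exchanged in one all-to-all round (hypothetical protocol)."""
    return n_agents * (n_agents - 1)


def peer_messages_read(n_agents: int) -> int:
    """Peer responses each agent reads per round under the same assumption."""
    return n_agents - 1


if __name__ == "__main__":
    for n in (2, 4, 8, 16):
        print(f"{n:>2} agents: {messages_per_round(n):>3} messages/round, "
              f"{peer_messages_read(n):>2} read per agent")
```

Doubling the group roughly quadruples the traffic per round, and every extra message is one more input an agent may react to in the next round.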

the practical implication is uncomfortable for anyone building multi-agent systems for high-stakes tasks. reliable agreement isn't an emergent property of putting smart agents in conversation. it has to be engineered explicitly, with formal guarantees, not hoped into existence.

we're deploying multi-agent systems into finance, healthcare, autonomous infrastructure. and the consensus problem, the most basic coordination primitive, isn't solved yet.


joce reposted

🚨BREAKING: Berkeley researchers spent 8 months inside a tech company watching how employees actually use AI.

The promise was simple: AI will save you time. Do less. Work smarter.

The opposite happened.

Workers didn't use AI to finish early and go home. They used it to take on more. More tasks. More projects. More hours. Nobody asked them to. They did it to themselves.

The researchers sat inside the company two days a week for 8 months. They watched 200 employees in real time. They tracked work channels. They conducted 40+ interviews across engineering, product, design, and operations.

Here's what they found. AI made everything feel faster, so people filled every gap. They sent prompts during lunch. Before meetings. Late at night. The natural stopping points in the workday disappeared. People ran multiple AI agents in the background while writing code, drafting documents, and sitting in meetings simultaneously.

It felt like momentum. It felt productive. But when they stepped back, they described feeling stretched, busier, and completely unable to disconnect.

83% said AI increased their workload. Not decreased. Increased.

62% of associates and 61% of entry-level workers reported burnout. Only 38% of executives felt the same strain. The people doing the actual work absorbed the damage while leadership celebrated the productivity numbers.

Then came the trap nobody saw coming. When one person uses AI to take on extra work, everyone else feels like they're falling behind. So the whole team speeds up. Nobody formally raises expectations. But the new pace quietly becomes the default. What AI made possible became what was expected.

The researchers gave it a name: workload creep. It looks like productivity at first. Then it becomes the new baseline. Then it becomes burnout.

AI was supposed to give you your time back. Instead it's eating more of it. And the worst part? You're doing it to yourself. Voluntarily.



joce reposted

New fear unlocked

Alexey Grigorev @Al_Grigor

Claude Code wiped our production database with a Terraform command. It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards. Automated snapshots were gone too. In the newsletter, I wrote the full timeline + what I changed so this doesn't happen again. If you use Terraform (or let agents touch infra), this is a good story for you to read. alexeyondata.substack.com/p/how-i-droppe…


joce reposted

The rise of the Product Engineer is not a fad.

It is a structural shift in tech.

For years, the split was clear:

- Product Managers wrote specs.

- Software Engineers implemented them.

- PMs owned discovery, user interviews, roadmap, prioritization.

- Engineers owned architecture, APIs, performance, deployment.

AI tools are collapsing that boundary.

With tools like ChatGPT, Notion AI and Perplexity, engineers can synthesize user research, draft PRDs and decide priorities themselves.

With Cursor, Claude Code and Copilot, they can turn those product decisions into working software in hours.

The bottleneck is no longer typing code to build something. It is actually deciding what deserves to be built, how and when.

The Product Engineer combines both tracks:

- Frames the problem

- Talks to users

- Defines the MVP

- Designs the system

- Ships the code

- Measures the outcome

Fewer handoffs. Fewer translation errors. Faster learning loops.

And for Startup Founders, this changes hiring.

Instead of asking “Should we hire a PM or another Engineer?” the better question is:

- "Who can own this product end to end?"

For Senior SW Engineers, this changes careers.

- If you only execute tickets, AI will compress your role.

- If you define value and ship it, your leverage expands.

The Product Engineer is not a new title. It is a new career and a new set of expectations.
