Zodiac

637 posts

@Zoddiacc

Code whisperer. 🤖 Taming Android by day, 🧠 training models by night. My feed is all about AOSP, Deep Learning, and the code that connects them.

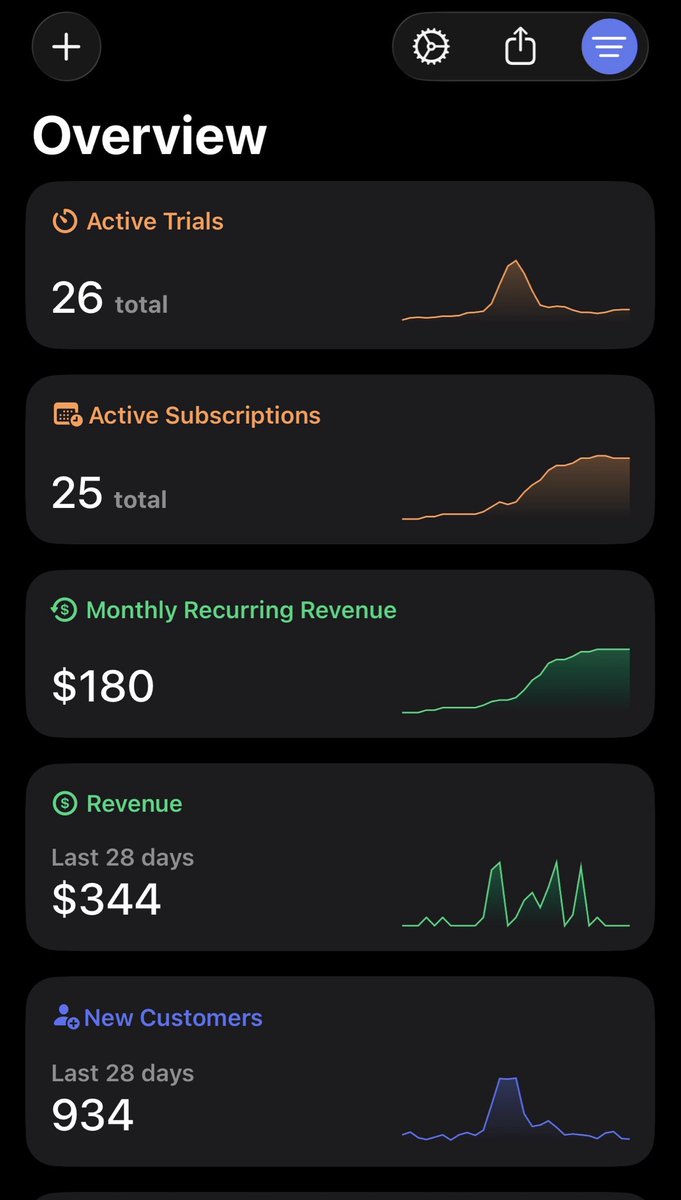

My first iOS app has been live for 3 weeks. Here are the stats so far ⚡️

- 800+ downloads
- $159 MRR
- $287 Revenue
- 22 five star reviews

Have gotten some real traction, time to double down!

claude code core

Walking alone through a foreign city at night and realizing how far you’ve come has to be a top 3 peak moment of all time

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/ and backlinks; the LLM also categorizes the data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents, and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), and find interesting connections for new article candidates, to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I use directly (in a web UI) but more often hand off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just in context windows.

TLDR: raw data from a number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by the LLM through various CLIs to do Q&A and to incrementally enhance the wiki, and all of it is viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
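For illustration, a "small and naive search engine" over a folder of .md files could look roughly like the sketch below. This is not the author's tool: the function names (`load_wiki`, `build_index`, `search`), the `wiki/` layout, and the TF-IDF-style scoring are all hypothetical assumptions chosen to keep it short, stdlib-only, and easy to call from a CLI.

```python
import math
import re
import sys
from collections import Counter
from pathlib import Path

TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    """Lowercase text and split it into alphanumeric tokens."""
    return TOKEN_RE.findall(text.lower())


def load_wiki(root: str) -> dict[str, str]:
    """Read every .md file under root (hypothetical wiki/ directory)."""
    return {
        str(p.relative_to(root)): p.read_text(encoding="utf-8")
        for p in Path(root).rglob("*.md")
    }


def build_index(docs: dict[str, str]) -> tuple[dict[str, Counter], Counter]:
    """Return per-document term counts and per-term document frequencies."""
    term_counts = {name: Counter(tokenize(body)) for name, body in docs.items()}
    doc_freq: Counter = Counter()
    for counts in term_counts.values():
        doc_freq.update(counts.keys())  # each term counted once per document
    return term_counts, doc_freq


def search(query: str, term_counts: dict[str, Counter], doc_freq: Counter,
           top_k: int = 5) -> list[tuple[str, float]]:
    """Score documents by term frequency weighted by a smoothed IDF."""
    n_docs = len(term_counts)
    scores: dict[str, float] = {}
    for name, counts in term_counts.items():
        score = 0.0
        for term in tokenize(query):
            if term in counts:
                idf = math.log((n_docs + 1) / (doc_freq[term] + 1)) + 1.0
                score += counts[term] * idf
        if score > 0:
            scores[name] = score
    # Highest-scoring documents first.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


if __name__ == "__main__" and len(sys.argv) >= 3:
    # Usage (hypothetical): python wiki_search.py wiki/ "attention sinks"
    counts, df = build_index(load_wiki(sys.argv[1]))
    for path, score in search(" ".join(sys.argv[2:]), counts, df):
        print(f"{score:8.3f}  {path}")
```

Printing plain `score  path` lines keeps the output easy for an LLM agent to consume when the script is exposed as a CLI tool; at the ~100-article scale described above, rebuilding the index on every call is cheap enough that no persistent index is needed.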

Introducing Helena: the world's first autonomous AI marketer. Businesses spend 4,000 hours on marketing…before their first $1M in revenue. We built Helena to solve this. Helena can:

➤ Track competitor ads & create TikTok slideshows, UGC, static ads - all while you sleep
➤ Analyze performance across GA4, Search Console, paid/organic social for daily insights
➤ Research trends to draft GEO optimized blogs directly on WordPress, Framer, Webflow

...and more

Helena has her own memory, scheduled tasks, 100+ custom marketing tools and native integrations. No dev. No CLI. No n8n. No API keys needed. Helena doesn't replace CMOs, and every marketer who's demoed it has asked us for early access. Want to hire her? Check the next thread ⬇️

Switching to Gemini from other AI apps just got easier. Starting to roll out today on desktop, you can now bring your preferences and chat history into Gemini, so you can pick up right where you left off in just a few clicks. 🧵

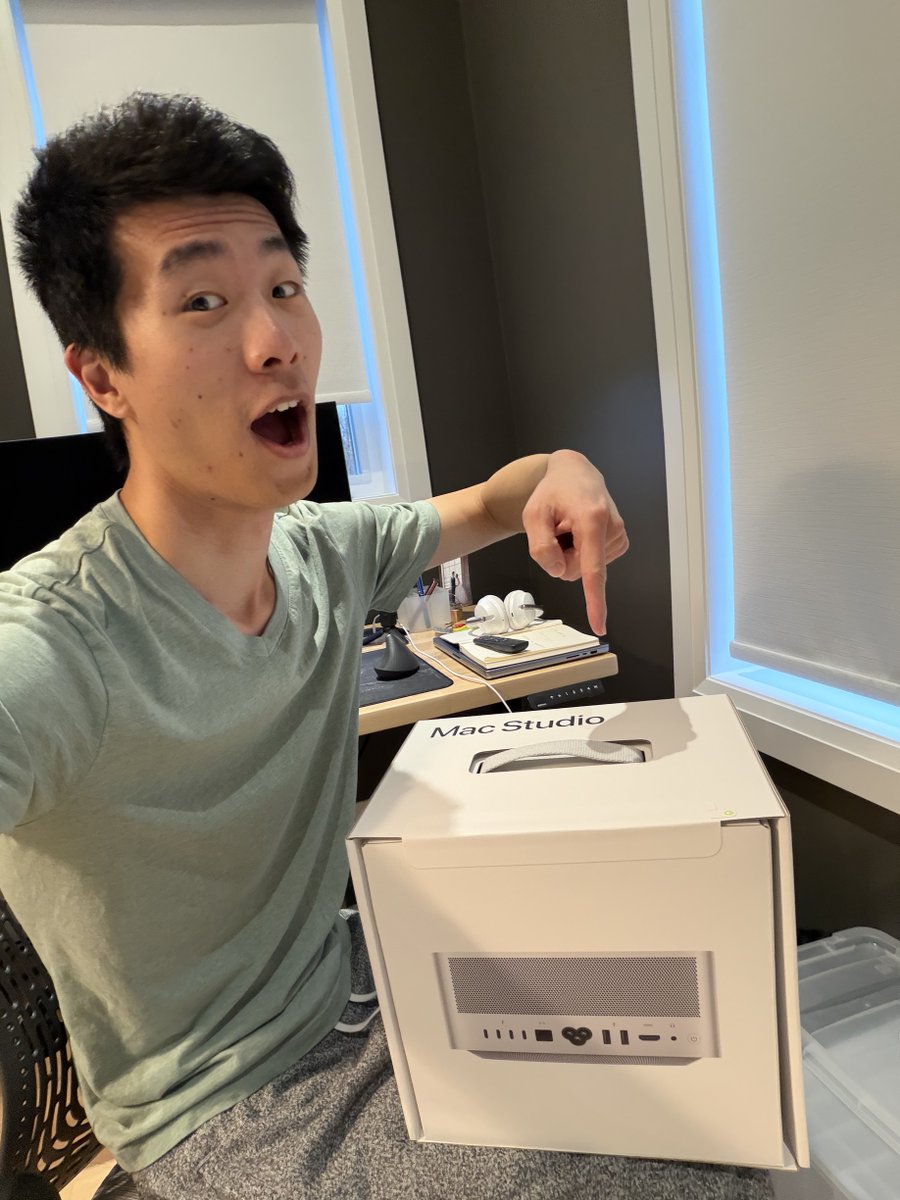

mac dilemma update (73 comments later): going with M4 Max 128GB. now. here's why:

1. opportunity cost - every day my agents are bottlenecked is a day I'm not shipping (wwdc in june is 5 AI years away)
2. pre-AI pricing won't last - apple priced these before AI agent demand
3. vertical scaling = focus - managing network I/O across multiple minis is time I'd rather spend building
4. local = control - no rate limits, no API outages, full autonomy
5. memory > compute - RAM is the bottleneck for agent swarms, not CPU

my plan now is to use the studio to power my agent swarm but rely on cloud models (codex 5.3 mostly), but one comment that stuck with me from @HEXtheantidote: "running a local model is the only way for them to achieve true autonomy"

local models aren't there yet. but in 12 months? having a self-hosted AI might be as normal as having a router. Mac is the body. agent is the soul.

You all are overthinking your app ideas. This app makes your Mac moan when you slap it -> it made $5,000 in 3 days. Just ship it.