BiggB retweeted

BiggB

12.9K posts

BiggB

@bigbindian

Every Story has 3 sides: mine, yours, and the truth

Earth · Joined June 2016

477 Following · 98 Followers

BiggB retweeted

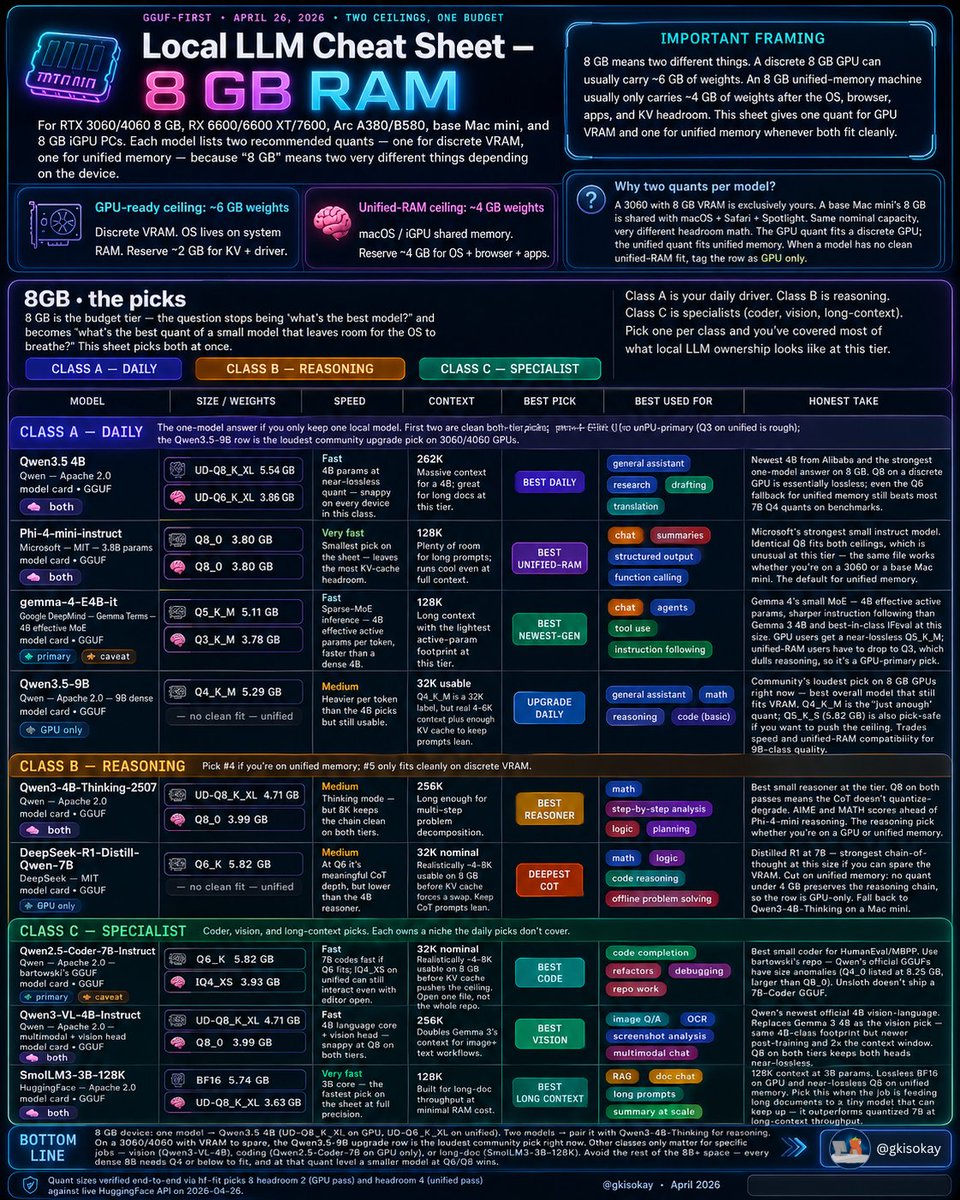

The Local LLM Cheat Sheet for Your 8GB VRAM or Unified-Memory Device

If you have a spare base Mac mini, RTX 3060, or 8GB iGPU device, these are the top LLMs you can run on them.

Best Daily Driving Models

Qwen3.5-4B - The Unified 8GB Daily-Driver

Newest 4B from Alibaba and the strongest one-model answer on 8GB. Q8 on a discrete GPU is essentially lossless, while the Q6 fallback for unified memory still beats most 7B Q4 quants on benchmarks.

Qwen3.5-9B - The Upgrade Daily-Driver for 8GB

The best overall model that fits in 8GB of VRAM. Q4_K_M is just enough, and Q5_K_S at 5.82GB is also a safe pick if you want to push the ceiling. Not a clean unified-memory fit.

Phi-4-mini-instruct - The Best Unified-Memory Pick

Microsoft’s strongest small instruct model, and unusually clean at this tier because the same Q8_0 file fits both ceilings. Great for chat, summaries, structured output, and function calling.

Gemma-4-E4B-it - Newest Top Model

4B effective active params, sharper instruction following than Gemma 3 4B, and best-in-class IFEval at this size. GPU users get a near-lossless Q5_K_M, but unified-memory users have to drop to Q3, which dulls reasoning.

Best Reasoning Models

Qwen3-4B-Thinking-2507 - Best Reasoner

The best small reasoner at this tier. Q8 on both tiers means the CoT doesn’t degrade from quantization. AIME and MATH scores land ahead of Phi-4-mini reasoning, making it the reasoning pick whether you’re on a GPU or unified memory.

DeepSeek-R1-Distill-Qwen-7B - Best Chain-of-Thought

The deepest chain-of-thought option if you can spare the VRAM. Distilled R1 at 7B is the strongest reasoning chain at this size, but there is no clean sub-4GB unified-memory quant that preserves the reasoning chain, so it is GPU-only.

Best Specialist Models

Qwen2.5-Coder-7B-Instruct - Best Coder

The best small coder for HumanEval and MBPP. Use bartowski’s GGUF repo, since Qwen’s official GGUFs have size anomalies and Unsloth doesn’t ship a 7B-Coder GGUF. Best for code completion, refactors, debugging, and repo work.

Qwen3-VL-4B-Instruct - Best Vision

It replaces Gemma 3 4B as the vision choice with the same 4B-class footprint, newer post-training, and 2x the context window. Q8 on both tiers keeps both the language core and vision head near-lossless.

SmolLM3-3B-128K - Best Long-Context

128K context at 3B params, lossless BF16 on GPU, and near-lossless Q8 on unified memory. Pick this when the job is feeding long documents to a tiny model that can keep up.

On a 3060/4060 with VRAM to spare, Qwen3.5-9B is the best pick right now. The other rows only matter for specific jobs: Qwen3-VL-4B for vision, Qwen2.5-Coder-7B for coding on GPU, and SmolLM3-3B-128K for long-doc work.
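The fit-or-not calls in the list above come down to simple arithmetic: parameter count times bits per weight, plus some room for the KV cache and runtime. Here is a rough sketch of that check. The bits-per-weight values are approximate averages for llama.cpp quant types, and the `headroom_gb` default and the function names are assumptions made for illustration. Real GGUF files can come out larger or smaller than this estimate, so treat it as a first pass and check the actual file size before downloading.

```python
# Approximate bits per weight for common llama.cpp GGUF quant types.
# Assumption: rough averages; real files vary by model architecture.
APPROX_BITS_PER_WEIGHT = {
    "Q4_K_M": 4.85,
    "Q5_K_S": 5.5,
    "Q6_K": 6.56,
    "Q8_0": 8.5,
    "BF16": 16.0,
}


def estimate_file_gb(params_billions: float, quant: str) -> float:
    """Estimate weight size in GB: billions of params * bits per weight / 8."""
    return params_billions * APPROX_BITS_PER_WEIGHT[quant] / 8


def fits(params_billions: float, quant: str, ceiling_gb: float,
         headroom_gb: float = 1.0) -> bool:
    """True if the weights plus headroom fit under the memory ceiling.

    headroom_gb is a hypothetical allowance for KV cache and runtime
    overhead; tune it for your context length and backend.
    """
    return estimate_file_gb(params_billions, quant) + headroom_gb <= ceiling_gb


if __name__ == "__main__":
    for name, size_b, quant in [("4B", 4, "Q8_0"), ("9B", 9, "Q4_K_M"), ("9B", 9, "Q8_0")]:
        est = estimate_file_gb(size_b, quant)
        print(f"{name} {quant}: ~{est:.2f} GB, fits in 8 GB: {fits(size_b, quant, 8.0)}")
```

Lowering `ceiling_gb` to whatever your unified-memory device can actually spare shows why some entries above drop to a smaller quant on that tier.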

Which models have you been running on 8GB VRAM or unified-memory devices? Let me know in the comments.

Graeme @gkisokay

Local LLM Cheat Sheet Master Collection: All Tiers (April 2026). Bookmark this thread to access the top LLMs for your exact hardware and use case 🧵

@GordonGChang I don’t think India and the US can make any progress in improving their relationship under Trump’s presidency

BiggB retweeted

🚨 CEO of Nvidia: "I'd hire the graduate who's expert in AI over the one who isn't. Every time."

two types of people right now:

type 1: uses the chat window. copy-pastes answers.

type 2: knows the 25 tricks and shortcuts most users have never seen.

type 1 gets replaced.

type 2 gets hired at $350K.

I wrote a full guide on how to start. zero experience needed.

Khairallah AL-Awady @eng_khairallah1

BiggB retweeted

Someone open-sourced the 17 Claude skills behind serious social media growth.

Tired of generic AI content that doesn’t sound like you?

charlie947 dropped social-media-skills: a complete set of 17 specialized skills that make any AI agent a pro-level social media operator.

Everything stays on-brand because it all starts with a single voice-builder skill that defines your personality and story. Then post-writer, carousel maker, reels scripter, analytics tool, and more all follow that voice perfectly.

Key skills included:

- Voice & profile setup

- Hook & post generation with scoring

- Carousels, infographics, reels scripts

- Thumbnail ideas, graphic prompts

- Niche research, content planning

- Analytics and performance insights

Works seamlessly with Claude Code and similar agents.

One-time install, then prompt in plain English and get platform-ready content.

If you’re building in public, running a newsletter, or growing any social presence, this repo will save you hours every week while improving quality.

MIT License. Actively updated.

BiggB retweeted

🚨 BREAKING: Anthropic just released their official prompt engineering course and it's free.

Interactive Jupyter notebooks covering:

→ Basic to advanced prompting techniques

→ Chain-of-thought and tool use

→ Real agent patterns from the Claude team

12,200 stars (+2,459 this week).

The only prompt engineering course you actually need

BiggB retweeted

CLAUDE CODE MASTERCLASS 4 HOURS: Build & Sell (2026)

Credit: Michele Torti

Mayank Agarwal 💡 @TheAIWorld22

BiggB retweeted

This 185-page book unlocks the secrets of deep learning.

drive.google.com/file/d/188pV6F…

Foundations

> machine learning basics

> computation efficiency

> training methodologies

Deep Models

> activation functions

> pooling

> dropout

> normalization

> attention

Architectures

> MLPs

> CNNs

> attention

Applications

> image classification

> object detection

> speech recognition

> reinforcement learning

The Compute Schism

> prompt engineering

> quantization

> adapters

> model merging

BiggB retweeted

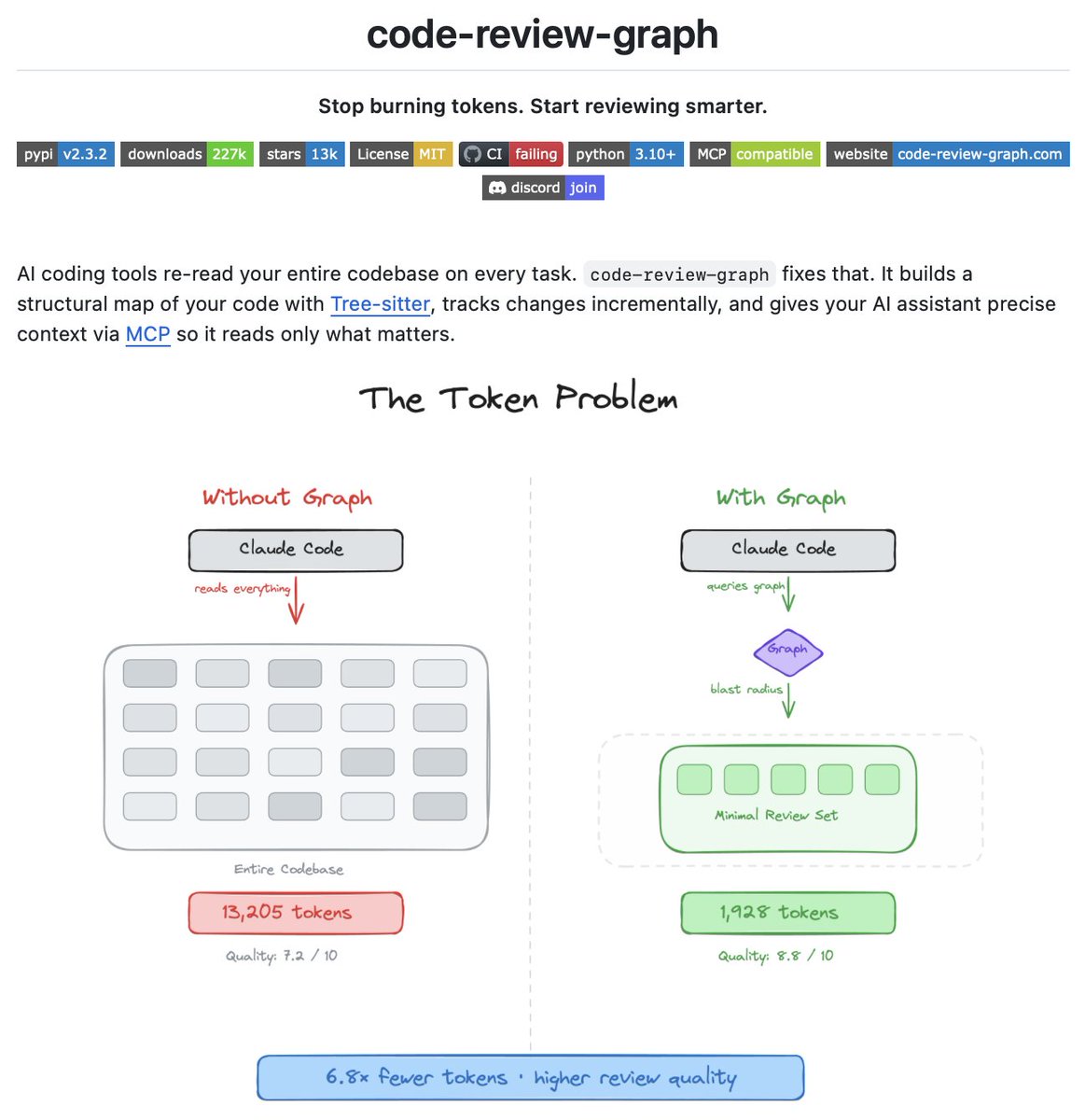

Stop letting Claude Code read files that have nothing to do with your task!

Every time you ask Claude Code to review a change, it scans your entire codebase. Most of those files are irrelevant. You are paying for tokens that add no value.

code-review-graph fixes this by building a persistent knowledge graph of your codebase using Tree-sitter. It maps every function, class, import, and dependency, then at review time computes the exact set of files Claude actually needs to read.

Here's how it works:

• Parses your codebase into an AST and stores it as a graph of nodes and edges in SQLite

• On every file save or git commit, a hook fires and re-parses only what changed

• At review time it traces the blast radius of your change: every caller, dependent, and test that could be affected

• Claude reads only those files instead of scanning the whole project
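The blast-radius step can be illustrated with a small sketch: keep dependency edges in SQLite and walk them in reverse from the changed file. The table layout, the function names, and the file names here are all hypothetical and are not taken from code-review-graph itself, which parses real code with Tree-sitter and tracks functions, classes, and tests rather than whole files.

```python
import sqlite3


def build_graph(edges):
    """Store (src, dst) pairs in an in-memory SQLite table.

    Each pair means "src depends on dst". Hypothetical schema for illustration.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE edges (src TEXT, dst TEXT)")
    conn.executemany("INSERT INTO edges VALUES (?, ?)", edges)
    return conn


def blast_radius(conn, changed):
    """Return the changed file plus everything that depends on it, transitively."""
    query = """
        WITH RECURSIVE affected(name) AS (
            SELECT ?
            UNION
            SELECT e.src FROM edges AS e JOIN affected AS a ON e.dst = a.name
        )
        SELECT name FROM affected
    """
    # UNION (not UNION ALL) drops duplicates, so dependency cycles terminate.
    return {row[0] for row in conn.execute(query, (changed,))}


if __name__ == "__main__":
    conn = build_graph([
        ("models.py", "db.py"),
        ("api.py", "models.py"),
        ("test_api.py", "api.py"),
        ("cli.py", "utils.py"),
    ])
    print(sorted(blast_radius(conn, "models.py")))
```

In this toy graph, a change to `models.py` pulls in `api.py` and `test_api.py` but leaves `cli.py` and `utils.py` out of the review set, which is the source of the token savings.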

Real results across 6 open-source repositories: 6.8x fewer tokens on code reviews, up to 49x on daily coding tasks.

Supports 23 languages including Python, TypeScript, Rust, Go, Java, Vue, Solidity, and Jupyter notebooks.

Works with Claude Code, Cursor, Windsurf, and any MCP-compatible agent.

It's 100% open source.

Link to the GitHub repo in the comments!

BiggB retweeted

Tremendous post from Daniel on how to use subagents

He has focused it on Claude Code, but it really extends to any client that lets you use subagents this way

In OpenCode I have a very similar setup. I almost always have between 2 and 6 subagents running

Daniel San @dani_avila7

BiggB retweeted

EXERCISES FOR VISUAL ACUITY

Eye exercises work wonders if done regularly for 10 minutes a day.

1. Blink frequently for two minutes.

2. Slant your eyes to the right and then move your gaze in a straight line. Do the same in the opposite direction.

3. Move your gaze in different directions: right-left, up-down, circle, figure eight.

4. Close your eyes for 3-5 seconds, then open your eyes. Repeat 7 times.

5. Press gently on the upper eyelids with your fingers and hold for about two seconds. Repeat 4-5 times.

6. Stand near a window, focus on an object located in the immediate vicinity (a point on the glass), and then shift your gaze to a distant object. Repeat 10 times.

7. Close your eyes and slowly move your eyeballs up and down 5-10 times.

8. With your eyes open, trace simple geometric figures in the air with your gaze, then move on to complex objects and large-scale compositions.

BiggB retweeted

New release from @PacktDataML available at: amzn.to/40Sp4O9

"Design Multi-Agent AI Systems Using MCP and A2A: Engineer your own Python-based Agentic AI Framework with tool use, memory, and multi-agent workflows"

Table of Contents:

🟠Introduction to Generative AI and AI agents

⚫️Understanding How AI Agents Work

🟠A Hands-On Walk-Through of a Simple AI Agent

⚫️Building a Tool-Based Agentic AI Framework

🟠Implementing Custom Tools

⚫️Creating Chat Interfaces Using Slack and Chainlit

🟠Integrating with the Model Context Protocol Ecosystem

⚫️Designing Multi-Agent Systems

🟠Implementing Multi-Agent Systems with A2A

⚫️Testing, Debugging, & Troubleshooting Multi-Agent Systems

🟠Deploying Multi-Agent Systems

⚫️Advanced Topics and Future Directions

BiggB retweeted